Trustworthy Agent UX: Designing Interfaces People Will Actually Rely On

The conversation around Artificial Intelligence has reached a fever pitch. We hear promises of autonomous AI agents poised to revolutionize every industry, handling complex tasks with minimal human input. Yet a dose of reality is setting in. Recent industry analysis, including an August 2025 report from MIT, points to a wide gap between the promise and the results. The study found that 95% of enterprise generative AI pilot projects are failing to deliver a measurable return, forcing leaders to temper expectations for the near term.

This isn’t a declaration that AI has failed. Far from it. A common view is that agentic AI will be highly valuable, but that its full impact is more likely on a five-year horizon. We are in what economist Erik Brynjolfsson, then at MIT, once termed a “productivity paradox”: a period in which a transformative technology is adopted, but productivity gains lag because organizations and processes must first be reinvented. For agentic AI, the most critical reinvention is in the User Experience (UX).

Powerful models can determine what an AI agent could do, but carefully crafted agent UX will determine whether people will actually use it. As we navigate this crucial period of expectation management, the focus must shift from raw capability to reliability and trust. The path to unlocking the value of agentic AI is paved with user-centered design that prioritizes clarity, control, and confidence. This is how we design interfaces people will actually rely on.

For more insights on navigating the early stages of AI adoption and the productivity J-curve, explore this detailed analysis from the MIT Initiative on the Digital Economy.

The Core of Trust: Why Agent UX is Different

Traditional UX design focuses on guiding a user through a workflow. Agentic UX, however, is about designing a partnership. When an AI agent can act on a user’s behalf (sending emails, managing finances, or controlling operational systems), the stakes are far higher. The user is no longer just clicking buttons; they are delegating responsibility.

This shift makes trust the single most important design principle. If users don’t trust the agent, they will disengage, override its actions, or abandon the tool entirely, rendering even the most powerful AI useless. Building that trust isn’t about creating a friendly chatbot persona; it’s about applying rigorous human factors engineering to the interface to ensure predictability, transparency, and user control.

Key Pillars of a Trustworthy Agent UX

- Transparency: Can users understand what the agent is doing and why?

- Control: Do users feel they have the final say and can intervene when necessary?

- Reliability: Is the AI’s behavior consistent and its outputs accurate?

- Explainability: When the agent makes a decision, is the reasoning clear and accessible?

These pillars are the foundation upon which every other feature is built. Without them, you’re not designing a collaborative tool; you’re building a black box that users will rightly fear.

Essential UX Patterns for Building Trustworthy Agents

To move from abstract principles to concrete design, we must adopt specific UX patterns tailored for AI agents. These patterns are not just about aesthetics; they are functional components that make the agent’s inner workings visible and manageable for the user.

1. Crystal-Clear Status and Observability

Users should never have to guess what the agent is doing. Anxiety builds when a system is working invisibly. Good agent UX provides a constant, easy-to-understand view of the agent’s state.

- Live Status HUD (Heads-Up Display): Implement persistent visual cues that indicate the agent’s current mode. Simple indicators like “Listening,” “Thinking,” “Processing,” or “Executing Task” can dramatically reduce user uncertainty.

- Progress Indicators: For multi-step tasks, go beyond a simple loading spinner. Show a checklist or a visual timeline of the subtasks. This pattern allows users to see progress, anticipate the next steps, and understand where a potential failure occurred.

- Action Previews: Before executing a significant action (like sending an email to 100 customers), the agent must show the user what it’s about to do. A side-panel preview or a modal window displaying a draft or a summary of actions allows the user to confirm or cancel, ensuring they remain in control.
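The three patterns above can be sketched as a small data model. This is a minimal illustration, not a prescribed implementation: the names `AgentStatus`, `Step`, `render_checklist`, and `build_preview`, the extra "Waiting for your approval" state, and the confirmation threshold of 10 recipients are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class AgentStatus(Enum):
    """Modes surfaced in the persistent status indicator."""
    LISTENING = "Listening"
    THINKING = "Thinking"
    AWAITING_APPROVAL = "Waiting for your approval"  # assumed extra state
    EXECUTING = "Executing Task"


@dataclass
class Step:
    label: str
    done: bool = False


def render_checklist(steps: List[Step]) -> str:
    """Show every subtask so users can see progress and where a failure occurred."""
    return "\n".join(f"[{'x' if s.done else ' '}] {s.label}" for s in steps)


def build_preview(summary: str, recipients: int, confirm_at: int = 10) -> dict:
    """Describe a pending action; large sends are flagged for explicit confirmation."""
    return {
        "summary": summary,
        "recipients": recipients,
        "requires_confirmation": recipients >= confirm_at,
    }


steps = [Step("Draft email", done=True), Step("Select recipients", done=True), Step("Send")]
status = AgentStatus.AWAITING_APPROVAL
preview = build_preview("Send renewal reminder", recipients=100)

print(status.value)
print(render_checklist(steps))
print(preview)
```

Keeping status, progress, and preview as plain data means the same state can drive a HUD, a side panel, or a log without the agent logic knowing which one is in use.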

2. Accessible Reasoning Traces and Explainability (XAI)

Explainable AI (XAI) is a critical field focused on making AI decisions understandable to humans. In agent UX, this translates to embedding the “why” directly into the interface. Trust grows when users see the logic behind the agent’s suggestions.

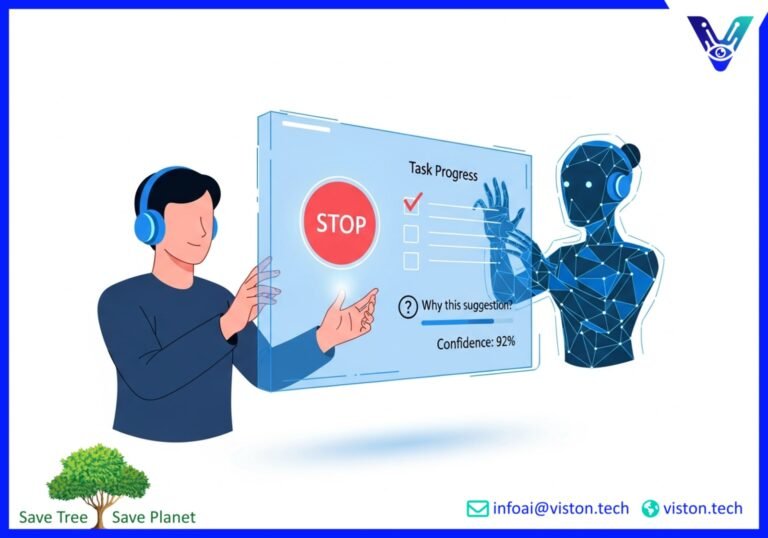

- Show Your Work: Instead of just presenting a result, provide access to the reasoning. This can be as simple as a “Why this suggestion?” link that reveals the key data points or rules the agent considered. For example, “This sales lead was prioritized because they match your ideal customer profile and recently visited the pricing page.”

- Source Citations: When an agent provides information, it should cite its sources. Linking directly to the internal document, database entry, or public webpage it used builds credibility and allows for easy verification.

- Confidence Scores: Not all AI outputs are created equal. Displaying a confidence level (e.g., “85% confident”) helps users calibrate their trust. It signals when an agent’s suggestion should be treated as a strong recommendation versus a tentative idea that requires more scrutiny.
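One way to carry the reasoning, sources, and confidence alongside a suggestion is a single explanation record that the interface renders on demand. The sketch below is illustrative only: the `Explanation` structure, the 0.8 cutoff between a strong and a tentative recommendation, and the source labels are assumptions, and the confidence value is assumed to be a score between 0 and 1 supplied by the model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Explanation:
    suggestion: str
    reasons: List[str]   # shown behind a "Why this suggestion?" link
    sources: List[str]   # documents or records the agent consulted
    confidence: float    # assumed to be a 0.0-1.0 score from the model


def trust_label(confidence: float, strong_at: float = 0.8) -> str:
    """Map a raw score to wording that tells the user how much scrutiny to apply."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "strong recommendation" if confidence >= strong_at else "tentative, please review"


def render(e: Explanation) -> str:
    """Build the text shown when the user asks why a suggestion was made."""
    lines = [f"{e.suggestion} ({e.confidence:.0%} confident, {trust_label(e.confidence)})"]
    lines += [f"  because: {reason}" for reason in e.reasons]
    lines += [f"  source: {source}" for source in e.sources]
    return "\n".join(lines)


example = Explanation(
    suggestion="Prioritize this sales lead",
    reasons=["Matches your ideal customer profile", "Recently visited the pricing page"],
    sources=["CRM lead record (hypothetical)", "Web analytics session log (hypothetical)"],
    confidence=0.85,
)
print(render(example))
```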

3. Granular User Controls and Agency

Autonomy without control is terrifying. A trustworthy agent empowers users by giving them clear and accessible controls to guide, correct, and, if necessary, stop the agent’s actions.

- The Big Red Button (Emergency Stop): Every agentic system needs a prominent and instantly accessible “Pause” or “Stop” button. Knowing they can halt the process at any time gives users the confidence to let the agent work.

- Granular Permissions: Don’t ask for blanket approval. Allow users to set specific permissions for what the agent can and cannot do. For instance, a user might permit an agent to draft emails but not to send them, or to read calendar data but not to create new events.

- Always-Available Undo: Perfection is impossible, which makes reversibility mandatory. Providing a clear “Undo” option for the agent’s last action is one of the most powerful trust-building features. It transforms a potentially disastrous mistake into a minor, correctable inconvenience.
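The three controls above can share one gatekeeper that every agent action passes through. The following is a minimal sketch under stated assumptions: `AgentControls` and permission names such as `email.draft` and `email.send` are hypothetical, and each action is assumed to come with its own undo callback.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class AgentControls:
    allowed: Set[str]                  # granular permissions granted by the user
    stopped: bool = False              # set by the emergency stop button
    undo_stack: List[Callable[[], object]] = field(default_factory=list)

    def stop(self) -> None:
        """Emergency stop: no further actions run until the user resumes."""
        self.stopped = True

    def run(self, permission: str, do: Callable[[], object],
            undo: Callable[[], object]) -> bool:
        """Run an action only if the agent is not stopped and holds the permission."""
        if self.stopped or permission not in self.allowed:
            return False
        do()
        self.undo_stack.append(undo)   # remember how to reverse this action
        return True

    def undo_last(self) -> bool:
        """Reverse the most recent action, if there is one."""
        if not self.undo_stack:
            return False
        self.undo_stack.pop()()
        return True


drafts: List[str] = []
outbox: List[str] = []
controls = AgentControls(allowed={"email.draft", "calendar.read"})

drafted = controls.run("email.draft", lambda: drafts.append("Hello Alex"), lambda: drafts.pop())
sent = controls.run("email.send", lambda: outbox.append("Hello Alex"), lambda: outbox.pop())
undone = controls.undo_last()          # removes the draft again
controls.stop()
after_stop = controls.run("email.draft", lambda: drafts.append("Second draft"), lambda: drafts.pop())
```

Here the agent may draft but not send, the draft can be reversed, and nothing runs once the stop control has been pressed.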

For a deeper dive into UX patterns for human oversight, Benjamin Prigent offers an excellent framework for keeping users in the loop on his blog.

Designing for When Things Go Wrong: Handling Failure Modes

Even the most advanced AI will make mistakes. How an interface handles these failures is just as important as how it operates under normal conditions. A well-managed failure can actually enhance user trust, while a poorly handled one can destroy it.

AI failures often fall into common categories: hallucinations (fabricating information), biases from training data, or simply misinterpreting an ambiguous user request. Your UX must be designed to anticipate and mitigate these gracefully.

Strategies for Graceful Failure Recovery

- Offer Alternative Paths: When an agent gets stuck or cannot fulfill a request, it shouldn’t just return a generic error. The interface should provide helpful next steps. This could be suggesting a different way to phrase the request, offering to switch to a manual workflow, or providing an option to connect with a human expert.

- Clarify Ambiguity: If a user’s request is unclear, the agent should ask clarifying questions rather than guessing. For example, if a user says, “Schedule a meeting with Alex,” a good agent would respond, “I found two people named Alex in your contacts: Alex Smith and Alex Johnson. Which one did you mean?”

- Enable Easy Feedback: Integrate simple feedback mechanisms, like thumbs-up/thumbs-down icons, next to the agent’s outputs. This not only helps improve the model over time but also gives users a voice, making them feel like part of the process rather than just a passive recipient of the AI’s actions.
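The clarification and alternative-path strategies can be expressed as explicit outcomes rather than a single success-or-error result. The sketch below is one possible shape, assuming a hypothetical `resolve_contact` helper that matches on first name only; a real agent would likely use richer matching.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class Resolved:
    contact: str


@dataclass
class NeedsClarification:
    question: str
    options: List[str]


@dataclass
class NoMatch:
    message: str
    next_steps: List[str]


def resolve_contact(first_name: str,
                    contacts: List[str]) -> Union[Resolved, NeedsClarification, NoMatch]:
    """Resolve a first name, asking the user instead of guessing when it is ambiguous."""
    wanted = first_name.lower()
    matches = [c for c in contacts if c.lower().split()[:1] == [wanted]]
    if len(matches) == 1:
        return Resolved(matches[0])
    if len(matches) > 1:
        names = " and ".join(matches)
        question = (f"I found {len(matches)} people named {first_name} "
                    f"in your contacts: {names}. Which one did you mean?")
        return NeedsClarification(question, matches)
    # Dead end: offer alternative paths instead of a generic error.
    return NoMatch(
        f"I could not find anyone named {first_name}.",
        ["Rephrase the request", "Pick a contact manually", "Ask a human expert"],
    )


contacts = ["Alex Smith", "Alex Johnson", "Priya Patel"]
ambiguous = resolve_contact("Alex", contacts)
unique = resolve_contact("Priya", contacts)
missing = resolve_contact("Sam", contacts)
```

Because each outcome is its own type, the interface can render a picker for `NeedsClarification` and a set of next-step buttons for `NoMatch`.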

The Actionable Design Checklist for Trustworthy Agent UX

Use this checklist as a starting point to evaluate and improve the user experience of your AI agents. It synthesizes the key principles of transparency, control, and explainability into actionable design requirements.

For more detailed principles and a printable checklist, check out this comprehensive guide on Designing Trustworthy AI Agents.

Visibility and Transparency

- [ ] Is the agent’s current status (e.g., listening, processing, acting) always visible?

- [ ] Are reasoning traces or explanations for key decisions easily accessible?

- [ ] Does the agent cite its sources or show the data it used?

- [ ] Is it clear what the agent can and cannot do (scope transparency)?

Control and Safety

- [ ] Is there a clear, immediate way for the user to pause or stop the agent?

- [ ] Does the agent preview high-impact actions before executing them?

- [ ] Is there a simple “Undo” function for recent actions?

- [ ] Can the user configure granular permissions for the agent’s capabilities?

- [ ] Are high-risk actions (e.g., deleting data, spending money) protected by a secondary confirmation step?

Failure Modes and Resilience

- [ ] When the agent fails, does it provide helpful next steps or alternative paths?

- [ ] Does the agent ask for clarification when faced with ambiguity?

- [ ] Is there an easy, in-context way for users to provide feedback on the agent’s performance?

- [ ] Is there a clear hand-off protocol to a human expert if the agent gets stuck?

Learning and Feedback

- [ ] Can users easily correct the agent’s mistakes?

- [ ] Does the interface capture structured feedback to help retrain the model?

- [ ] Does the agent confirm its understanding of complex goals before starting a long task?

The Future is Collaborative

The initial hype around all-powerful, fully autonomous AI agents is giving way to a more pragmatic and ultimately more valuable vision: AI as a collaborative partner. The road to realizing this vision runs directly through user experience design. By prioritizing agent UX principles like transparency, control, and explainability, we can bridge the trust gap and build interfaces that people will not only use but rely on to amplify their own capabilities.

The next five years will be defined not by the companies with the most powerful models, but by those who best understand the nuances of human factors and design AI agents that earn their place as trusted digital colleagues.

Frequently Asked Questions (FAQs)

1. What is Agent UX?

Agent UX (User Experience) is a specialized design discipline focused on the interaction between humans and AI agents. Unlike traditional UX, which often deals with static interfaces, agent UX must account for autonomous, proactive, and adaptive AI behaviors. Its primary goal is to build user trust and ensure effective collaboration through principles like transparency, user control, and explainability.

2. Why is trust so important for AI agents?

Trust is crucial because AI agents are often delegated tasks with real-world consequences, such as managing communications, finances, or business operations. If users don’t trust an agent to act reliably and in their best interest, they will not use it, or they will constantly supervise and override it, defeating the purpose of automation. Trust is the foundation for user adoption and realizing the ROI of agentic AI.

3. What is “explainability” in the context of AI UX?

Explainability, the goal of the field known as explainable AI (XAI), refers in AI UX to designing an interface that can show users why an AI made a particular decision or recommendation. Instead of being a “black box,” the system reveals its reasoning in simple, human-understandable terms, such as showing the data it used or the rules it followed. This transparency is a key driver of user trust.

4. How can I show an AI agent’s status effectively?

Effective status indicators are clear, persistent, and easy to understand at a glance. Use a “Heads-Up Display” (HUD) with simple text like “Listening,” “Thinking,” or “Executing.” For longer tasks, use visual progress bars or checklists that break down the agent’s action plan into visible steps. This keeps the user informed and reduces the anxiety of not knowing what the system is doing.

5. What is the best way to handle AI agent failures in the UI?

The best practice for handling failures is to “fail gracefully.” Instead of showing a dead-end error message, the interface should provide constructive help. This includes offering alternative ways to complete the task, suggesting rephrased commands, providing a clear path to human support, and always offering an “undo” option. A well-managed failure can actually build trust by showing the user that the system is resilient and they are still in control.

6. What is the “emergency stop” and why is it essential for agent UX?

The “emergency stop” is a prominent, always-accessible UI control (like a “Pause” or “Stop” button) that allows a user to immediately halt all actions of an AI agent. It is essential because it provides a critical safety net, giving users the confidence to let the agent operate autonomously. Knowing they can intervene at any moment removes the fear of the agent “going rogue” and is a fundamental requirement for building user trust.

7. What are reasoning traces?

Reasoning traces are the trail of logic and data an AI agent followed to reach a conclusion. In UX, this is often presented as a simplified log or a visual flow that shows the key decision points. For example, a trace might show that the agent first queried a customer database, then analyzed recent support tickets, and finally drafted a follow-up email based on that information. Exposing these traces helps users understand and verify the agent’s actions.
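As a rough illustration, the trace described above could be stored as an ordered list of steps and rendered as a simplified log. The `TraceStep` structure and the detail strings are assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TraceStep:
    action: str   # what the agent did
    detail: str   # the data or rule it relied on (hypothetical values below)


def render_trace(steps: List[TraceStep]) -> str:
    """Render the trace as a numbered log the user can read and verify."""
    return "\n".join(f"{i}. {s.action}: {s.detail}" for i, s in enumerate(steps, start=1))


trace = [
    TraceStep("Queried customer database", "found the account record"),
    TraceStep("Analyzed recent support tickets", "two open tickets"),
    TraceStep("Drafted follow-up email", "based on the open tickets"),
]
print(render_trace(trace))
```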

8. How do human factors apply to AI agent design?

Human factors is the scientific discipline of understanding the interactions between humans and other elements of a system. In AI agent design, it involves studying how people perceive, understand, and trust autonomous systems. Applying human factors principles ensures that the agent’s interface is designed with human cognitive limits and psychological needs in mind, leading to safer, more intuitive, and more effective human-AI collaboration.