
Retrieval-Augmented Generation (RAG): The Missing Enterprise Link

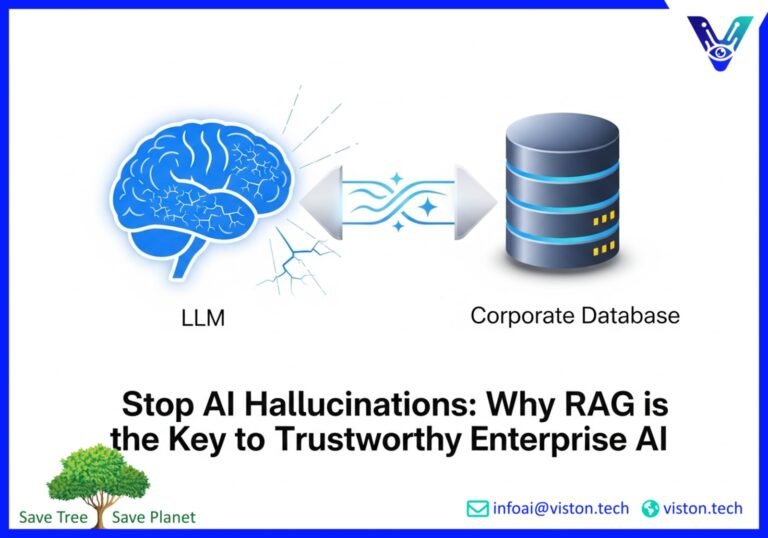

Large Language Models (LLMs) are transforming industries. They can write emails, generate code, and even create marketing copy. But for enterprises, there’s a critical challenge: these powerful AI tools can sometimes invent information, a phenomenon known as hallucination. This makes relying on them for mission-critical tasks a significant risk. What if there were a way to harness the power of LLMs while ensuring factual accuracy and trustworthiness? Enter Retrieval-Augmented Generation (RAG), the technology that is rapidly becoming the backbone of enterprise AI.

For any C-suite executive, IT leader, or product manager, understanding RAG is no longer optional. It’s the key to unlocking reliable, secure, and context-aware AI within your organization. In 2025, enterprises are increasingly adopting RAG systems to enhance accuracy, leverage real-time knowledge, and maintain robust security protocols. This blog post will demystify RAG, explain why it’s the missing link for enterprise AI, and show how a sophisticated approach like hybrid search is turning LLMs into dependable knowledge workers.

The Hallucination Problem: Why LLMs Go Off-Script

Imagine asking an AI assistant for the latest quarterly sales figures, only for it to confidently provide a number that is completely fabricated. This is the reality of LLM hallucination. Standard LLMs are trained on vast but static datasets from the public internet. Their knowledge has a cutoff date, and they lack access to your company’s private, up-to-the-minute information. When faced with a question they can’t answer from their training data, they often make a statistically plausible, yet factually incorrect, guess.

For businesses, the consequences of these fabrications can be severe:

- Erosion of Trust: Inaccurate information quickly undermines user confidence in AI tools.

- Damaged Brand Reputation: Public-facing chatbots or content generation tools that produce false information can harm your brand’s credibility.

- Significant Business Risks: Decisions based on flawed AI-generated data can lead to poor outcomes in finance, legal, and other critical departments.

This is precisely the problem that retrieval-augmented generation is designed to solve. RAG connects LLMs to external, authoritative knowledge bases so that their responses are grounded in verifiable facts. Think of it as giving your LLM an open-book exam instead of relying on its memory alone. By retrieving relevant information before generating an answer, RAG dramatically reduces hallucinations and turns the LLM into a far more reliable enterprise tool.

The RAG Stack: Building a Foundation of Trust

A successful RAG implementation is more than just plugging an LLM into a database. It’s a sophisticated system with several key components working in concert. This “RAG stack” is what turns a generic model into a specialized, high-performing asset for your enterprise.

The core components of a modern RAG architecture include:

- Data Ingestion and Processing: This involves loading and preparing your enterprise data. Documents, from PDFs and Word files to web pages and database entries, are broken down into manageable chunks.

- Indexing and Embedding: These chunks are then converted into numerical representations called embeddings. These embeddings are stored in a specialized database, often a vector database, which allows for fast and efficient searching based on semantic meaning.

- The Retriever: When a user asks a question, the retriever searches the vector database to find the most relevant chunks of information. This is where the magic of vector search and hybrid search comes into play.

- The Generator: The retrieved information is then passed to the LLM, along with the original query. The LLM uses this context to generate a factually grounded and relevant response.

This architecture is highly flexible and can be tailored to specific enterprise needs. For more on the evolution of RAG and its components, you can explore resources like this in-depth article from Forbes.
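To make the four components above concrete, here is a minimal sketch in Python using only the standard library. It is an illustration under loud assumptions, not a production design: `embed` is a toy bag-of-words counter standing in for a real embedding model, a plain in-memory list stands in for the vector database, and `build_prompt` stops at assembling the text that would be handed to an LLM. Every function name and sample document here is hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, max_words=40):
    """Ingestion: split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Embedding (toy): word counts stand in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents, max_words=40):
    """Indexing: store each chunk next to its embedding."""
    index = []
    for doc in documents:
        for chunk in chunk_text(doc, max_words):
            index.append((chunk, embed(chunk)))
    return index


def retrieve(index, query, k=2):
    """Retriever: return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(query, chunks):
    """Generator input: the retrieved context plus the original question."""
    context = "\n".join(f"- {c}" for c in chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical sample documents.
documents = [
    "Employees accrue 20 vacation days per year. Unused days carry over until March.",
    "Expense reports must be submitted within 30 days of purchase.",
]
question = "How many vacation days do employees get?"

index = build_index(documents)
top_chunks = retrieve(index, question, k=1)
prompt = build_prompt(question, top_chunks)
print(prompt)
```

In a real deployment each function would be replaced by a dedicated component, but the flow stays the same: chunk, embed, retrieve, then generate from the retrieved context.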

Indexing and Grounding: The Power of Hybrid Search

The effectiveness of a RAG system hinges on the quality of its retrieval process. If the retriever can’t find the right information, the LLM will still be left to guess. This is why the choice of search methodology is so critical.

Vector Search: Understanding Meaning

Vector search excels at understanding the semantic meaning or intent behind a query. It can find relevant information even if the user’s wording doesn’t exactly match the text in the documents. For example, a vector search can understand that “company leave policy” is related to “time off and vacation days.”
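A small sketch shows the idea. The three-dimensional vectors below are hand-assigned for illustration only; a real embedding model would produce them, typically with hundreds of dimensions. The point is simply that the query and the best document share no keywords, yet their vectors point in nearly the same direction.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hand-assigned toy vectors (an assumption, not model output).
# The dimensions loosely mean: time off, finance, hardware.
doc_vectors = {
    "time off and vacation days": [0.9, 0.1, 0.0],
    "quarterly expense reporting": [0.1, 0.9, 0.1],
    "laptop replacement schedule": [0.0, 0.2, 0.9],
}

# Stands in for the embedding of the query "company leave policy".
query_vector = [0.8, 0.2, 0.1]

ranked = sorted(
    doc_vectors,
    key=lambda doc: cosine_similarity(query_vector, doc_vectors[doc]),
    reverse=True,
)
print(ranked[0])
```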

The Limitations of Vector Search Alone

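However, pure vector search can sometimes struggle with precision. Because embeddings capture overall meaning rather than exact strings, two product codes that differ by a single character can look almost identical to the retriever. As a result, it may not perform well with specific keywords, product codes, or industry jargon. This is a significant drawback in an enterprise setting where precision is often paramount.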

Hybrid Search: The Best of Both Worlds

This is where hybrid search comes in. Hybrid search combines the semantic understanding of vector search with the precision of traditional keyword-based search (like BM25). This fusion allows the RAG system to:

- Improve Accuracy: By leveraging both keyword and semantic matching, hybrid search provides more relevant and comprehensive results.

- Handle Diverse Queries: It can effectively handle both broad, conceptual questions and highly specific, keyword-driven searches.

- Boost Reliability: For enterprises, this means fewer irrelevant results and a higher degree of confidence in the retrieved information.
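One way to combine the two result lists is reciprocal rank fusion (RRF), sketched below. This is an assumption for illustration; the approach described here does not prescribe a specific fusion method, and weighted score blending is another common choice. The BM25 scorer is a compact version of the standard formula, the sample documents are hypothetical, and `vector_ranking` is written by hand to stand in for the output of a real vector search.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and keep hyphenated codes such as 'xr-200' as one token."""
    return re.findall(r"[a-z0-9-]+", text.lower())


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with BM25."""
    tokenized = [tokenize(d) for d in docs]
    avg_len = sum(len(t) for t in tokenized) / len(tokenized)
    doc_freq = Counter(term for t in tokenized for term in set(t))
    n_docs = len(docs)
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1)
            denom = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


def to_ranking(scores):
    """Document indices ordered from best to worst score."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse rankings: each list contributes 1 / (k + rank) per document."""
    fused = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return sorted(fused, key=lambda d: fused[d], reverse=True)


# Hypothetical sample documents.
docs = [
    "Return policy for the XR-200 headset: refunds within 30 days.",
    "Return policy for the XR-300 headset: refunds within 14 days.",
    "Our general refund and return guidelines for all products.",
]
query = "XR-200 return policy"

keyword_ranking = to_ranking(bm25_scores(query, docs))
# Assumed output of a vector search that favors the general guideline.
vector_ranking = [2, 0, 1]

fused_ranking = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(docs[fused_ranking[0]])
```

Here the keyword side rewards the exact product code while the semantic side favors the general guideline. The document that ranks well on both lists, the XR-200 policy, ends up on top after fusion. The constant `k=60` is a commonly used default for RRF and can be tuned.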

Leading technology platforms are increasingly recognizing that hybrid search is not just an advantage but a necessity for robust enterprise AI. This approach ensures that the LLM is “grounded” in the most accurate and relevant data available, making its responses trustworthy and actionable. For a deeper dive into search technologies, check out this insightful piece on AI search.

Quality Evaluation and Monitoring: Ensuring Ongoing Performance

Implementing a RAG system is not a one-time setup. To maintain trust and ensure high performance, continuous evaluation and monitoring are essential. The complexity of RAG, with its multiple components, means that a change in one area can have cascading effects on the entire system.

A robust evaluation framework for RAG should include:

- Defining Success Metrics: What does a “good” response look like for your use case? Key metrics often include factual accuracy (faithfulness to the source), relevance of the answer, and conciseness.

- Creating a Test Dataset: A high-quality set of questions and ideal answers that are representative of real-world user queries is crucial for testing and iteration.

- Component-Level and End-to-End Evaluation: It’s important to evaluate both the individual components (like the retriever’s performance) and the overall quality of the final generated response.

- Monitoring in Production: Once deployed, the system should be continuously monitored for performance, latency, and cost. User feedback loops can also provide invaluable insights for ongoing improvement.
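For the retriever, two widely used component-level metrics are recall@k and mean reciprocal rank (MRR). The sketch below computes both over a tiny test set. The document IDs, questions, and field names are made up for illustration, and a real evaluation would also score the generated answers for faithfulness and relevance.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents found in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


# Hypothetical test set: which documents should be retrieved for each
# question, and what the retriever actually returned (best first).
test_set = [
    {
        "question": "What is the vacation carry-over rule?",
        "relevant": {"doc_a"},
        "retrieved": ["doc_a", "doc_c", "doc_b"],
    },
    {
        "question": "How do I file an expense report?",
        "relevant": {"doc_b", "doc_d"},
        "retrieved": ["doc_c", "doc_b", "doc_a"],
    },
]

mean_recall_at_2 = sum(
    recall_at_k(case["retrieved"], case["relevant"], k=2) for case in test_set
) / len(test_set)
mrr = sum(
    reciprocal_rank(case["retrieved"], case["relevant"]) for case in test_set
) / len(test_set)

print(f"recall@2 = {mean_recall_at_2:.2f}, MRR = {mrr:.2f}")
```

Tracking numbers like these before and after each change to chunking, embeddings, or search settings makes it easier to spot when a tweak in one component quietly degrades the whole system.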

By investing in a thorough evaluation and monitoring strategy, enterprises can ensure their RAG systems remain reliable, accurate, and valuable over time. This commitment to quality is what separates a successful enterprise AI implementation from a mere proof of concept.

The Future is Hybrid and Grounded

The journey of enterprise AI is moving beyond the initial excitement of generative models to a more mature phase focused on reliability, security, and tangible business value. Retrieval-Augmented Generation (RAG) is at the heart of this transition. By grounding LLMs in verifiable enterprise knowledge, RAG addresses the critical issue of hallucination and unlocks a new level of trustworthiness.

Furthermore, the evolution towards sophisticated retrieval techniques like hybrid search is making these systems even more powerful and precise. For C-suite leaders, IT professionals, and innovators across industries, embracing RAG is not just about adopting new technology; it’s about building intelligent systems that you can trust to drive your business forward. The future of enterprise AI is not just intelligent; it’s grounded in your reality.

Ready to transform your enterprise’s relationship with AI? The expert team at Viston AI specializes in developing and deploying cutting-edge, reliable AI-powered solutions, including advanced RAG systems with hybrid search capabilities. We can help you turn your enterprise data into a trustworthy source of intelligence.

Contact Viston AI today to learn how we can help you build the future of your business with AI you can trust.


Frequently Asked Questions (FAQs)

1. What is Retrieval-Augmented Generation (RAG)?

Retrieval-Augmented Generation (RAG) is an AI architecture that enhances the accuracy and reliability of Large Language Models (LLMs). It connects the LLM to an external knowledge base, allowing it to retrieve relevant, up-to-date information before generating a response. This process “grounds” the model in factual data, significantly reducing the chances of hallucination.

2. How does RAG solve the problem of LLM hallucinations?

LLMs sometimes “hallucinate” or invent information because they are responding based on patterns in their training data and lack access to real-time, specific knowledge. RAG addresses this by first retrieving factual information from a trusted enterprise data source (like internal documents or databases). The LLM then uses this retrieved context to formulate its answer, ensuring it is based on verifiable facts rather than just statistical probability.

3. What is the difference between vector search and hybrid search in a RAG system?

Vector search is excellent at understanding the semantic meaning and context of a user’s query. It can find relevant information even if the keywords don’t match exactly. Hybrid search combines the strengths of vector search with traditional keyword-based search. This dual approach provides more comprehensive and precise results, as it can handle both conceptual queries and those that rely on specific terms, product codes, or acronyms common in enterprise environments.

4. What are the main components of a RAG stack?

A typical RAG stack includes several key components: a data ingestion and processing pipeline to prepare your documents, an indexing and embedding mechanism to create a searchable knowledge base (often in a vector database), a retriever to find relevant information using techniques like hybrid search, and a generator (the LLM) to create the final, context-aware response.

5. Why is grounding important for enterprise AI?

Grounding is the process of connecting an LLM’s responses to specific, verifiable data sources. For enterprises, this is crucial for building trust and mitigating risk. Grounded AI systems are more accurate, reliable, and transparent because their outputs can be traced back to the source information. This is essential for applications in regulated industries, customer support, and any scenario where factual accuracy is non-negotiable.

6. Can RAG work with my company’s private and proprietary data?

Yes. One of the primary benefits of RAG is its ability to securely connect with your enterprise’s internal knowledge bases. This allows the AI to provide accurate answers based on your private documents, policies, and data without that information being used to train the foundational LLM. Secure implementation, including access controls on what the retriever can see, is a key consideration in enterprise RAG deployments.

7. How do you evaluate the quality of a RAG system?

Evaluating a RAG system involves assessing both the retrieval and generation components. Key metrics include the relevance and accuracy of the retrieved context and the factual correctness, relevance, and clarity of the final generated answer. This is typically done using a “golden dataset” of questions and ideal answers, along with ongoing monitoring of the system’s performance in a live environment.

8. Is implementing a RAG system difficult for a non-technical team?

While the underlying technology is complex, many platforms and service providers, like Viston AI, are making RAG more accessible. A partner with expertise in AI can handle the technical implementation, allowing your team to focus on defining the business problem and identifying the right knowledge sources. The goal is to create intuitive tools that your employees can use without needing to understand the intricate workings of the AI. For those interested in a more hands-on approach, there are also tools that simplify the process, as detailed in this beginner-friendly guide.