Viston delivers enterprise-grade ML Operations (MLOps) solutions that eliminate deployment bottlenecks and accelerate time-to-value for machine learning initiatives. With 15+ years of AI infrastructure expertise and 2,860+ global clients across the USA, UK, Germany, France, Australia, and wider Europe, we implement scalable processes for model deployment, monitoring, and maintenance that align with governance requirements and business objectives. Our end-to-end MLOps platform integrates seamlessly with existing data ecosystems while providing the reliability, auditability, and performance metrics that C-suite executives and compliance teams demand.

Implementing robust ML Operations (MLOps) frameworks is critical for organizations seeking to industrialize artificial intelligence and extract measurable business value from data science investments. Without proper operational infrastructure, machine learning models remain isolated experiments rather than scalable business assets. MLOps bridges the gap between data science innovation and production reliability through standardized processes, automated workflows, and continuous monitoring that ensures models perform consistently across diverse deployment environments.

Continuous integration and deployment workflows designed specifically for machine learning artifacts, including model versioning, experiment tracking, and automated testing frameworks that validate model performance before production release across data centers in the USA, UK, Germany, and Australia

Advanced observability tools that track prediction accuracy, data distribution shifts, feature importance changes, and performance degradation with automated alerting and remediation workflows that maintain model effectiveness in dynamic business environments

Comprehensive audit trails, explainability frameworks, bias detection, and regulatory compliance tools that satisfy GDPR, HIPAA, SOC 2, and industry-specific requirements across financial services, healthcare, and regulated sectors throughout Europe and North America

Cloud-agnostic deployment architecture supporting AWS, Azure, Google Cloud, and on-premises infrastructure with automatic scaling, resource optimization, and cost management that adapts to fluctuating inference demands across global operations

Viston implements end-to-end MLOps infrastructure that standardizes model development, deployment, monitoring, and governance across enterprise AI portfolios. Our platform integrates with existing data science tools while providing production-grade reliability, automated workflows, and compliance frameworks that accelerate AI adoption throughout USA, European, and Australian operations.

Version-controlled deployment workflows with containerization, blue-green deployments, canary releases, and automated rollback capabilities ensuring zero-downtime model updates across production environments.

Real-time tracking of prediction accuracy, inference latency, data quality, feature drift, and business KPIs with configurable dashboards and automated alerting for proactive issue resolution.

Built-in audit trails, model documentation, explainability tools, bias detection, and regulatory compliance features satisfying GDPR, HIPAA, and industry-specific requirements across global operations.

Intelligent resource allocation, cost management, auto-scaling, and multi-cloud orchestration maximizing infrastructure efficiency while maintaining performance SLAs for enterprise workloads.
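As an illustration of how a canary release with automated rollback can be gated, the sketch below compares a canary model version against the current baseline and returns a promote, rollback, or wait decision. It is a minimal example under stated assumptions, not a description of Viston's implementation: the `ReleaseMetrics` schema, the `canary_decision` function, and all threshold values are hypothetical and would need tuning per workload.

```python
from dataclasses import dataclass


@dataclass
class ReleaseMetrics:
    """Aggregated serving metrics for one model version (hypothetical schema)."""
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        # Guard against division by zero before any traffic has arrived.
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(
    baseline: ReleaseMetrics,
    canary: ReleaseMetrics,
    min_requests: int = 1000,               # assumed minimum sample before judging
    max_error_rate_increase: float = 0.005,  # assumed absolute tolerance
    max_latency_ratio: float = 1.2,          # assumed relative tolerance
) -> str:
    """Return "wait", "rollback", or "promote" for a canary release."""
    if canary.requests < min_requests:
        return "wait"
    if canary.error_rate - baseline.error_rate > max_error_rate_increase:
        return "rollback"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"


baseline = ReleaseMetrics(requests=50_000, errors=100, p95_latency_ms=80.0)
healthy = ReleaseMetrics(requests=2_500, errors=6, p95_latency_ms=85.0)
slow = ReleaseMetrics(requests=2_500, errors=5, p95_latency_ms=130.0)

print(canary_decision(baseline, healthy))  # promote
print(canary_decision(baseline, slow))     # rollback
```

In practice a gate like this would run on a schedule while a small share of traffic is routed to the canary, and a production version would likely also compare prediction quality, not only error rate and latency.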

Enterprise banks implement continuous integration pipelines for credit scoring models with automated validation, A/B testing, and regulatory compliance documentation across USA and European lending operations.

Payment networks deploy streaming ML inference with sub-100ms latency, automatic model retraining on emerging fraud patterns, and explainability frameworks for dispute resolution across global transaction networks.

Hospital systems operationalize clinical decision support models with HIPAA-compliant infrastructure, continuous monitoring for prediction accuracy, and integration with electronic health record systems throughout USA and UK facilities.

Multinational retailers deploy demand forecasting and pricing models with automated retraining on sales data, competitor intelligence integration, and real-time inference across thousands of stores in USA, Germany, France, and Australia.

Industrial manufacturers implement IoT sensor data pipelines feeding ML models for equipment failure prediction, with edge deployment for factory floors and automated alerting workflows reducing unplanned downtime across European and North American facilities.

Telecom operators deploy propensity models with automated feature engineering, daily retraining schedules, and integration with marketing automation platforms driving retention campaigns across USA, UK, and Australian subscriber bases.

Consumer goods manufacturers implement multi-echelon inventory optimization models with automated retraining on point-of-sale data, promotional calendars, and external signals optimizing stock levels across global distribution networks.

Enterprises operationalize large language models with guardrails, safety filters, cost optimization, and continuous evaluation frameworks powering chatbots and virtual assistants across multilingual support operations in USA, Europe, and Australia.

Digital publishers deploy personalization models with real-time feature serving, online learning capabilities, and A/B testing infrastructure optimizing engagement metrics across web and mobile applications in global markets.

Establishing effective machine learning operations (MLOps) requires more than deploying models—it demands comprehensive infrastructure that addresses the unique challenges of AI lifecycle management. Unlike traditional software, machine learning systems exhibit probabilistic behavior, data dependency, and performance degradation over time. Viston’s MLOps platform specifically addresses these complexities through purpose-built automation, monitoring, and governance frameworks.

Our approach integrates three critical dimensions: technical infrastructure that automates deployment and monitoring workflows; governance frameworks that ensure compliance, explainability, and ethical AI practices; and organizational enablement that upskills teams and establishes best practices. This holistic methodology accelerates AI maturity from experimental initiatives to industrialized capabilities generating measurable business value across USA, UK, Germany, France, Australia, and broader European markets.

The business impact of robust MLOps extends beyond technical efficiency to strategic competitive advantage. Organizations implementing Viston’s MLOps solutions achieve 67% faster time-to-production for new models, enabling rapid response to market dynamics and competitive pressures. Automated monitoring and drift detection maintain prediction accuracy above baseline thresholds, preventing the silent model degradation that erodes business value over time.

Compliance and governance capabilities reduce audit preparation time by 80% while providing real-time visibility into model behavior, explainability, and fairness metrics that satisfy regulatory requirements across GDPR, HIPAA, and industry-specific frameworks. Infrastructure optimization delivers a 40% cost reduction through intelligent resource allocation, containerization, and multi-cloud orchestration that maximizes hardware utilization without compromising performance.

Background: A multinational investment bank operated siloed credit risk models across regional lending operations, resulting in inconsistent risk assessment, manual validation processes, and regulatory compliance challenges. Each geography maintained separate model versions with limited visibility into performance metrics and no standardized deployment procedures. The absence of centralized MLOps infrastructure created bottlenecks in model updates, prevented knowledge sharing across regions, and increased regulatory scrutiny from fragmented audit trails.

Challenge: The bank needed to standardize credit risk modeling across USA, UK, Germany, France, and 41 additional markets while maintaining regional regulatory compliance, accelerating model deployment cycles, and providing real-time performance monitoring. Existing manual processes required 6-8 weeks for model updates, lacked comprehensive audit trails, and couldn’t adapt quickly to changing economic conditions or emerging risk patterns.

Solution: Viston implemented an enterprise MLOps platform with automated CI/CD pipelines for model deployment, centralized experiment tracking, and regional compliance frameworks. We established containerized model serving infrastructure, automated validation suites, and real-time monitoring dashboards tracking prediction accuracy, feature drift, and business KPIs. The platform integrated with existing risk management systems while providing explainability tools for regulatory reporting and automated documentation for audit preparation.

Results: The bank achieved a 73% reduction in model deployment time, standardized credit risk assessment across all regions, and established comprehensive audit trails satisfying Basel III and local regulatory requirements. Real-time monitoring detected data drift events 6 weeks earlier than manual processes, enabling proactive model retraining that maintained prediction accuracy above 94% throughout economic volatility. The platform now supports 180+ production models processing 500,000+ daily credit decisions with 99.7% uptime.

Testimonial: “Viston’s MLOps platform transformed our credit risk operations from fragmented regional initiatives to a cohesive global capability. We now deploy model updates in days instead of months while maintaining the compliance rigor our regulators expect. The automated monitoring saved us from potential losses by detecting drift patterns we would have missed through quarterly manual reviews.”

Background: A large healthcare network developed promising machine learning models for diagnostic imaging, treatment recommendations, and patient risk stratification but struggled to deploy these innovations beyond pilot programs. Clinical AI initiatives remained isolated in research environments due to concerns about HIPAA compliance, integration complexity with electronic health records, and lack of monitoring infrastructure to ensure patient safety in production settings.

Challenge: The network required MLOps infrastructure that satisfied strict healthcare regulations, integrated seamlessly with existing clinical workflows, provided real-time performance monitoring for patient safety, and enabled rapid deployment of validated models across 250 hospitals and clinics throughout USA and UK operations. Traditional software deployment approaches couldn’t address the unique requirements of clinical AI including explainability for physician acceptance, continuous validation against patient outcomes, and audit trails for medical liability protection.

Solution: Viston designed a HIPAA-compliant MLOps platform with encrypted model serving, comprehensive audit logging, and integration frameworks for major EHR systems. We implemented clinical validation workflows requiring physician review before production deployment, real-time monitoring comparing model predictions against actual patient outcomes, and explainability interfaces providing clinicians with decision rationale. The platform included automated compliance checks ensuring all deployed models met regulatory requirements and organizational governance policies.

Results: The healthcare network deployed 45 clinical AI models into production serving 3.2 million patients annually. Diagnostic imaging models achieved 89% sensitivity for cancer detection while reducing false positives by 34% compared to previous screening protocols. Treatment recommendation engines improved care plan adherence by 28% through personalized patient communication strategies. Real-time monitoring detected performance degradation in a sepsis prediction model 18 days before it would have impacted patient care, enabling immediate retraining and preventing potential adverse outcomes.

Testimonial: “Clinical AI requires different operational standards than business applications—patient safety cannot be compromised. Viston understood these unique requirements and built MLOps infrastructure that gives our clinicians confidence in AI-assisted decision making while providing our compliance team with the audit trails and governance controls they need. We’ve accelerated innovation deployment from concept to bedside care.”

Background: An international retail chain relied on static pricing rules and manual markdown decisions, resulting in suboptimal inventory clearance, missed margin opportunities, and inconsistent customer experiences across its markets in the USA, Germany, France, and Australia. Data science teams had developed sophisticated demand forecasting and dynamic pricing models but lacked the infrastructure to deploy these models to individual stores and update pricing in real time based on local market conditions, competitor actions, and inventory levels.

Challenge: The retailer needed MLOps capabilities supporting real-time inference for 2,800 stores, automated model retraining incorporating daily sales data and external signals, and integration with existing point-of-sale and inventory management systems. The solution required handling regional pricing regulations, currency conversions, and market-specific demand patterns while maintaining prediction accuracy across diverse product categories from fashion to electronics.

Solution: Viston implemented a distributed MLOps architecture with edge inference capabilities at store level, centralized model training and validation, and automated deployment pipelines ensuring consistent pricing strategy across all locations. We established feature engineering workflows incorporating competitor pricing data, weather patterns, local events, and economic indicators. The platform included A/B testing frameworks enabling controlled rollout of pricing strategies and real-time monitoring tracking revenue impact, inventory turnover, and customer response metrics.

Results: Dynamic pricing models increased gross margins by 8.3% while improving inventory turnover by 22% across all markets. Automated markdown optimization reduced end-of-season inventory write-offs by $47 million annually. The platform processes 180 million daily pricing decisions with sub-200ms latency, enabling real-time price adjustments responding to competitor actions within hours instead of weeks. A/B testing capabilities validated 23 pricing strategies before full deployment, preventing potentially costly mistakes and accelerating innovation adoption.

Testimonial: “Viston’s MLOps platform turned our pricing strategy from reactive manual processes to proactive data-driven optimization. We’re now competing effectively against e-commerce giants by matching their dynamic pricing capabilities while leveraging our physical store advantages. The automated retraining keeps our models accurate through seasonal shifts and market disruptions that would have broken traditional approaches.”

Background: A global manufacturing conglomerate experienced costly unplanned equipment downtime across 85 facilities producing automotive components, industrial machinery, and consumer electronics. While factories collected extensive IoT sensor data from production equipment, this data remained underutilized due to lack of analytics infrastructure and operational processes to act on predictive insights. Maintenance operations followed fixed schedules regardless of actual equipment condition, leading to unnecessary service interruptions and missed opportunities to prevent failures.

Challenge: The manufacturer required MLOps infrastructure supporting edge deployment for real-time inference on factory floors, integration with existing industrial control systems and maintenance management software, and automated model retraining as equipment aging patterns and operational conditions evolved. The solution needed to handle high-frequency sensor data streams, operate reliably in industrial environments with intermittent connectivity, and provide maintenance teams with actionable predictions including failure probability, remaining useful life estimates, and recommended interventions.

Solution: Viston designed an edge-optimized MLOps platform with distributed inference at factory level, centralized model training on aggregated data from all facilities, and automated deployment pipelines supporting containerized models on industrial gateways. We implemented time-series feature engineering workflows processing temperature, vibration, acoustic, and operational data, and established monitoring frameworks tracking model accuracy against actual failure events. The platform included mobile interfaces for maintenance technicians and integration with work order management systems enabling automated scheduling of preventive interventions.

Results: Predictive maintenance models reduced unplanned downtime by 54% across all facilities, saving $83 million annually in lost production and emergency repairs. Maintenance efficiency improved by 37% through optimized scheduling based on actual equipment condition rather than fixed intervals. The platform monitors 127,000 pieces of production equipment processing 2.4 billion sensor readings daily with 96.8% prediction accuracy for failures within 30-day windows. Model retraining workflows automatically incorporate data from new equipment types and operational conditions, maintaining accuracy as manufacturing operations evolve.

Testimonial: “We had sensor data but lacked the operational infrastructure to turn that data into proactive maintenance decisions. Viston’s MLOps platform delivered both the technical capabilities for edge AI deployment and the organizational processes to integrate predictive insights into our maintenance workflows. The ROI exceeded our projections within 8 months as we prevented critical equipment failures that would have shut down entire production lines.”

Background: A major telecommunications provider serving 28 million subscribers across USA, UK, and Australia struggled with customer retention despite investing in data science capabilities. Churn prediction models existed but required manual execution, generated outdated predictions, and lacked integration with marketing automation platforms needed to execute retention campaigns. The delay between model execution and intervention campaigns reduced effectiveness, and data scientists spent 60% of their time on operational tasks instead of model improvement.

Challenge: The provider needed automated MLOps workflows that continuously retrained churn models on fresh customer interaction data, generated daily churn risk scores for the entire subscriber base, and triggered personalized retention campaigns through existing marketing platforms. The solution required handling complex feature engineering from call detail records, billing history, customer service interactions, and usage patterns while maintaining GDPR compliance for European operations and providing explainability for customer retention teams.

Solution: Viston implemented an end-to-end MLOps pipeline with automated feature engineering from operational data sources, scheduled model retraining workflows incorporating concept drift detection, and real-time inference generating daily churn risk scores. We established integration frameworks with marketing automation platforms enabling automated campaign triggering based on churn probability thresholds and customer value segments. The platform included explainability dashboards showing the key drivers of churn risk for each customer, which enabled personalized retention offers, along with monitoring frameworks tracking campaign effectiveness and model lift.

Results: The automated churn prediction pipeline reduced customer attrition by 18% through timely personalized interventions. Marketing efficiency improved by 43% as retention campaigns targeted high-risk, valuable customers instead of broad untargeted outreach. The platform generates 28 million daily churn risk scores with automated retraining cycles maintaining 87% prediction accuracy despite changing market conditions and competitive dynamics. Data science team productivity increased 2.7x as automation eliminated manual operational tasks, enabling focus on model innovation and business impact.

Testimonial: “Viston’s MLOps platform transformed our approach to customer retention from reactive crisis management to proactive relationship building. We now intervene before customers have decided to leave, and the automated pipeline ensures we’re always working with current insights. The business impact exceeded our expectations, and our data science team finally has time to focus on innovation instead of operational firefighting.”

Background: A rapidly growing e-commerce platform serving the USA, Germany, France, and Australia developed sophisticated product recommendation and search ranking models but struggled to scale these capabilities to its expanding user base. Manual deployment processes created 3-4 week lag times for model updates, feature engineering required extensive data science intervention, and the lack of monitoring infrastructure meant performance degradation went undetected until customer engagement metrics declined significantly.

Challenge: The platform required MLOps infrastructure supporting real-time personalization for 45 million users with sub-100ms latency requirements, automated feature engineering from clickstream data and user behavior signals, continuous model evaluation and automated retraining, and A/B testing capabilities to validate personalization strategies before full deployment. The solution needed to handle traffic spikes during promotional events, maintain consistent user experience across web and mobile channels, and provide business stakeholders with visibility into recommendation performance and revenue impact.

Solution: Viston designed a high-performance MLOps platform with distributed model serving across multiple regions, real-time feature stores providing low-latency access to user profiles and product attributes, and automated training pipelines incorporating online learning for rapid adaptation to user behavior changes. We implemented sophisticated A/B testing frameworks with statistical significance monitoring, multi-armed bandit algorithms for exploration-exploitation optimization, and revenue attribution models tracking recommendation contribution to conversions. The platform included automated canary deployments minimizing risk of degraded user experience and rollback capabilities enabling rapid recovery from unsuccessful model updates.

Results: The personalization engine now serves 2.8 billion daily recommendations with 47ms average latency and 99.99% uptime through seasonal traffic peaks. Conversion rates improved by 31% and average order value increased by 23% through more relevant product suggestions and optimized search ranking. Automated A/B testing validated 14 recommendation algorithms over 6 months, accelerating innovation velocity while preventing deployment of underperforming models. Model update cycle time fell from 3-4 weeks to 48 hours, enabling rapid response to emerging product trends and competitive actions.

Testimonial: “Scaling personalization requires more than good algorithms—it requires production infrastructure that delivers consistent low-latency experiences to millions of concurrent users. Viston’s MLOps platform gave us both the technical capabilities and operational workflows to compete with e-commerce giants. The automated testing and deployment capabilities transformed our ability to innovate rapidly while maintaining the reliability our customers expect.”

Transform machine learning experiments into production business assets driving measurable competitive advantage. Viston’s enterprise MLOps platform eliminates deployment bottlenecks, automates operational workflows, and ensures sustained model performance through continuous monitoring and governance. With 15+ years of AI infrastructure expertise, 2,860+ global clients, and proven implementations across USA, UK, Germany, France, Australia, and wider Europe, we deliver the reliability, scalability, and compliance your organization requires.

Automated deployment pipelines and standardized workflows reduce model production cycle time by 60-80%, enabling organizations to realize business value from data science investments in weeks instead of months. Version control, experiment tracking, and automated testing frameworks eliminate manual coordination overhead while maintaining quality standards across diverse model types and deployment targets in USA, European, and Australian operations.

Real-time tracking of prediction accuracy, data drift, feature importance, and business KPIs prevents silent model degradation that erodes AI effectiveness over time. Automated alerting and remediation workflows enable proactive intervention before performance degradation impacts business outcomes, while continuous retraining capabilities adapt models to changing market conditions, customer behaviors, and operational environments across global deployments.

Intelligent automation of model deployment, monitoring, and maintenance tasks reduces manual operational effort by 75-85%, freeing data science and ML engineering teams to focus on innovation rather than repetitive workflows. Infrastructure optimization through containerization, auto-scaling, and multi-cloud orchestration reduces compute costs by 35-50% while maintaining performance SLAs for production workloads serving millions of daily predictions.

Comprehensive audit trails, model documentation, explainability frameworks, and bias detection capabilities satisfy GDPR, HIPAA, SOC 2, and industry-specific regulatory requirements across financial services, healthcare, and regulated sectors. Automated compliance checking prevents deployment of models violating governance policies, while centralized visibility into model inventory, ownership, and performance metrics streamlines regulatory reporting and audit preparation throughout USA and European operations.

Cloud-agnostic deployment architecture and containerization enable seamless scaling from pilot projects to enterprise production workloads serving millions of users across global markets. Multi-cloud orchestration and edge deployment capabilities support diverse infrastructure requirements from centralized data centers to distributed factory floors and retail locations, while standardized MLOps processes enable consistent operational excellence across expanding AI portfolios.

Centralized experiment tracking and model registries provide data scientists, ML engineers, and business stakeholders with shared visibility into model development progress, performance metrics, and deployment status. Standardized workflows and self-service deployment capabilities reduce dependencies between teams, accelerating innovation velocity while maintaining governance controls and quality standards across organizational boundaries.

Automated monitoring detects data quality issues, feature drift, and model performance degradation before they impact business operations, while canary deployments and automated rollback capabilities minimize risk of failed model updates. Explainability tools and bias detection frameworks identify potential fairness issues and unintended model behavior, enabling proactive intervention that protects brand reputation and reduces liability exposure across customer-facing applications.

Intelligent workload scheduling, containerization, and auto-scaling maximize infrastructure efficiency by allocating compute resources based on actual inference demand patterns rather than peak capacity requirements. Cost monitoring and resource allocation dashboards provide visibility into infrastructure spending across model portfolios, enabling data-driven optimization decisions that reduce total cost of ownership while maintaining performance SLAs.

Reduced deployment cycle times and automated testing frameworks enable rapid experimentation and validation of new modeling approaches, algorithms, and business strategies. Organizations implementing robust MLOps capabilities deploy 3-5x more model updates annually compared to competitors relying on manual processes, accelerating competitive response and market innovation across USA, UK, Germany, France, Australia, and global markets.

Partnering with Viston AI means tapping into a team of seasoned AI experts who accelerate your transformation and deliver custom solutions aligned with your strategic goals.

Together, we drive measurable business impact, ensure scalable, future-proof implementations, and mitigate risks to keep you ahead of the competition.

Unlock 35-42% efficiency gains with specialized domain agents. Our 2025 guide details how to build and deploy industry-specific AI for healthcare, finance, retail, and manufacturing to transform your core business operations. Read More

#AIAgents #DomainSpecificAI #HealthcareAI #Fintech #RetailTech #ManufacturingAI #BusinessAutomation

The future of software development is here. Agentic coding pipelines use autonomous AI to plan, code, test, and deploy software, revolutionizing your SDLC and transforming the developer experience (DevEx).

Read More

#AgenticCoding #DevEx #SDLCAutomation #AIinDev #FutureOfCode #CodeGeneration #AITesting #SecureCoding #CI/CD

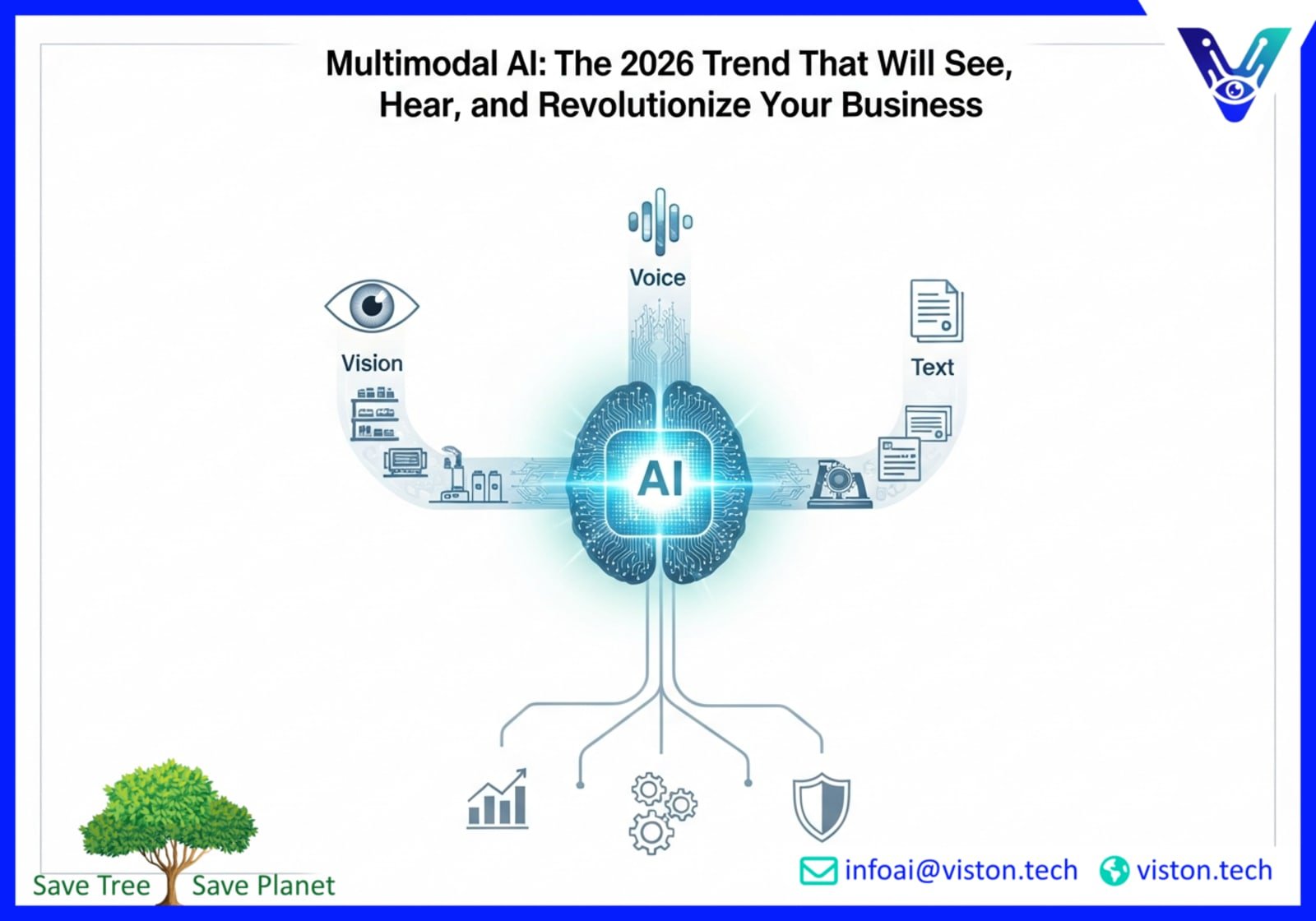

Unlock the future of business with multimodal agents. This 2026 AI trend merges text, voice, and vision to automate complex workflows, boosting efficiency, accuracy, and decision-making for a powerful competitive advantage. Read More

#multimodalagents #voicevision #AIforbusiness #futureofAI #enterprisetech #digitaltransformation #VistonAI #AIin2026 #complexworkflows #AIsolutions

Revolutionize your IT service desk. Learn how AI agents are evolving from intelligent ticket triage to full, autonomous case resolution, dramatically improving efficiency and user satisfaction. Read More #AIAgents #ITSM #ServiceDesk #Automation

Begin with comprehensive stakeholder engagement to understand business objectives, success metrics, and requirements. Conduct thorough analysis of existing workflows, data sources, and technical constraints. Establish clear project scope, timeline, and success criteria while ensuring alignment between AI strategy and business goals.

Collect, clean, and prepare high-quality data from all relevant sources. Perform exploratory data analysis (EDA) to uncover patterns and insights. Establish robust data pipelines, ensure data quality standards, and create the foundation for AI model training. This step prevents the "garbage in, garbage out" scenario.

Select appropriate AI models based on problem requirements and data characteristics. Develop, train, and fine-tune models using iterative approaches. Focus on achieving optimal balance between accuracy, interpretability, and performance. Implement version control and documentation for model assets.

Rigorously test models against unseen data and validate performance metrics. Conduct scenario testing, edge-case analysis, and A/B testing. Integrate the AI solution into existing IT infrastructure through APIs, containerization, or cloud deployment. Ensure security, scalability, and compliance requirements are met.

Deploy the validated AI solution into the production environment with proper monitoring systems. Provide comprehensive training to end-users and IT staff. Implement authentication, security protocols, and compliance measures. Focus on smooth integration with business workflows and user adoption.

Establish ongoing monitoring systems to track model performance, business impact, and user feedback. Implement continuous learning loops for model improvement. Regularly assess KPIs, review retraining schedules, and adapt to changing business needs. Ensure long-term value delivery and system reliability.

MLOps extends DevOps principles to machine learning systems by addressing unique challenges including data versioning, model reproducibility, feature engineering, prediction monitoring, and concept drift. While DevOps focuses on code deployment and infrastructure management, MLOps encompasses the complete ML lifecycle from data preparation through model training, validation, deployment, and continuous monitoring. Unlike traditional software that behaves deterministically, ML models exhibit probabilistic behavior dependent on training data characteristics and may degrade over time as real-world conditions change, requiring specialized monitoring and retraining workflows.
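Data versioning and model reproducibility can be illustrated with two small building blocks: a content-derived version ID for a dataset and a seeded train/test split. This is a minimal sketch using only the Python standard library; the function names and the 12-character ID length are assumptions chosen for illustration, and dedicated tools such as DVC handle this at scale.

```python
import hashlib
import json
import random
from typing import Sequence


def dataset_version(rows: Sequence[dict]) -> str:
    """Derive a short version ID from the dataset contents.

    The hash is order-sensitive: reordering rows yields a new version.
    """
    digest = hashlib.sha256()
    for row in rows:
        digest.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()[:12]


def reproducible_split(rows: Sequence[dict], seed: int, test_fraction: float = 0.2):
    """Shuffle with a fixed seed so the same rows and seed give the same split."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    cut = len(shuffled) - n_test
    return shuffled[:cut], shuffled[cut:]
```

Recording the version ID and the seed alongside a trained model lets a later run confirm it is training on the same data with the same split.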

MLOps implementation timelines vary based on organizational maturity, existing infrastructure, and scope of deployment. Initial platform deployment establishing core capabilities including model deployment pipelines, basic monitoring, and governance frameworks typically requires 8-12 weeks. Full enterprise rollout incorporating advanced features, integration with legacy systems, edge deployment capabilities, and organizational enablement generally completes within 4-6 months. Organizations can realize measurable value from automated deployment and monitoring workflows within the first 30-60 days while incrementally adding sophisticated capabilities including automated retraining, A/B testing frameworks, and explainability tools over subsequent implementation phases.

Organizations implementing comprehensive MLOps capabilities typically achieve positive ROI within 6-12 months through multiple value drivers. Direct cost savings from automated workflows and infrastructure optimization generally reduce ML operational costs by 40-60%. Accelerated time-to-production enables a 3-5x increase in deployed model volume, multiplying business value from data science investments. Improved model performance through continuous monitoring and automated retraining drives a 15-30% improvement in business KPIs including revenue, cost reduction, and customer experience metrics. Reduced compliance risk and lower audit preparation costs provide additional value, which is particularly relevant for financial services and healthcare organizations operating under strict regulatory requirements.

Enterprise MLOps platforms provide comprehensive compliance capabilities. These include audit trails that track model lineage from training data through production deployment, automated documentation of model development and validation procedures, explainability frameworks that demonstrate decision rationale for regulatory review, and bias detection that identifies potential fairness issues before production deployment. These features specifically address GDPR requirements for automated decision-making transparency, HIPAA standards for protected health information security, SOC 2 controls for data security and availability, and industry-specific regulations including Basel III for financial services and FDA requirements for medical device software throughout the USA, UK, Germany, France, and broader European operations.
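An audit trail for model lineage can be made tamper-evident by chaining entries with hashes, so that altering any earlier record invalidates everything after it. The sketch below shows the idea under stated assumptions: the `AuditLog` class, its field names, and the event names are hypothetical, and timestamps and user identities are omitted to keep the example deterministic.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the JSON form of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def append(self, event: str, details: dict) -> dict:
        """Add an entry whose hash covers its content and the previous hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"event": event, "details": details, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: entry[k] for k in ("event", "details", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

A typical lineage sequence would append one entry each for dataset registration, training, validation, and deployment, then call `verify()` during audit preparation.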

Modern MLOps platforms support extensive integration with popular data science tools and frameworks including Python-based libraries (scikit-learn, TensorFlow, PyTorch), notebook environments (Jupyter, Databricks), experiment tracking tools (MLflow, Weights & Biases), and data versioning systems (DVC, Pachyderm). Viston’s MLOps platform specifically provides APIs and connectors enabling data scientists to continue using familiar development tools while benefiting from automated deployment, monitoring, and governance capabilities. Integration with enterprise data infrastructure including data warehouses, data lakes, feature stores, and business intelligence platforms ensures MLOps workflows access required data sources and deliver predictions to downstream applications and decision-making systems.

MLOps platforms operate across diverse infrastructure environments including public cloud (AWS, Azure, Google Cloud), private cloud, hybrid architectures, and edge computing deployments. Minimum requirements typically include containerization capabilities (Docker, Kubernetes), compute resources for model training and inference, storage for model artifacts and training data, and network connectivity for distributed deployments. Specific infrastructure sizing depends on model complexity, inference volume, latency requirements, and data volumes. Viston’s MLOps solutions support flexible deployment architectures, from fully managed cloud services that minimize infrastructure management overhead to on-premises deployments that satisfy data residency requirements for regulated industries throughout the USA, Europe, and Australia.

MLOps platforms combat model degradation through continuous monitoring, automated drift detection, and retraining workflows. Monitoring frameworks track multiple performance dimensions including prediction accuracy against ground truth labels, data distribution shifts indicating feature drift, feature importance changes suggesting relationship evolution, and business KPIs measuring real-world impact. When monitoring detects performance degradation below acceptable thresholds, automated workflows trigger model retraining on recent data, validate updated models against holdout datasets and business metrics, and deploy improved models through established CI/CD pipelines. Organizations implementing these capabilities maintain prediction accuracy within 2-5% of baseline performance despite market dynamics, customer behavior evolution, and competitive actions that would severely degrade unmonitored models.
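The drift-detection and retraining trigger described above can be sketched with the Population Stability Index (PSI), one common way to quantify a shift between a baseline feature distribution and recent production data. This is an illustration, not the platform's actual method: the function names are hypothetical, and the `psi_threshold` of 0.2 (a frequently quoted rule of thumb) and the 5-point accuracy tolerance are assumptions to tune per use case.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample.

    Bins are equal-width over the baseline range; values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(
    psi_value: float,
    accuracy: float,
    baseline_accuracy: float,
    psi_threshold: float = 0.2,      # assumed rule-of-thumb drift threshold
    max_accuracy_drop: float = 0.05, # assumed tolerated accuracy drop
) -> bool:
    """Trigger retraining on significant drift or an accuracy drop."""
    return psi_value > psi_threshold or (baseline_accuracy - accuracy) > max_accuracy_drop
```

A scheduler would compute `psi` per feature on a rolling window and pass the result, together with accuracy measured against newly arrived ground-truth labels, to `should_retrain` before kicking off the retraining pipeline.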

Successful MLOps implementation requires collaboration between data scientists who understand model development and ML engineers who design production infrastructure. Organizations with established data science teams can implement MLOps capabilities by augmenting existing talent with ML engineering expertise, while companies early in their AI journey benefit from external consulting and implementation support. Viston’s MLOps implementations include knowledge transfer so that client teams can independently operate deployed infrastructure, along with ongoing support for advanced capabilities and scaling challenges. Many organizations establish center-of-excellence teams responsible for MLOps platform operation, enabling distributed data science teams to focus on model development while benefiting from standardized deployment and monitoring infrastructure.

Edge MLOps capabilities extend centralized model training and management to distributed inference environments including retail stores, factory floors, vehicles, and IoT devices. Key features include model optimization for resource-constrained edge hardware, containerization enabling consistent deployment across diverse edge environments, offline operation supporting intermittent connectivity, and centralized management providing visibility and control over distributed model deployments. Edge MLOps workflows train models on aggregated data from centralized infrastructure, optimize model size and inference speed for edge hardware constraints, deploy models to distributed edge locations through automated pipelines, and monitor edge model performance with intelligent aggregation reducing data transmission requirements while maintaining operational visibility.
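Model optimization for constrained edge hardware often involves quantization, which stores weights as small integers plus a scale factor instead of 32-bit floats. The sketch below shows symmetric int8 quantization on a plain list of weights. It is an assumption about one possible optimization step, with hypothetical function names; real deployments would normally rely on the quantization tooling of their ML framework.

```python
from typing import List, Sequence, Tuple


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0  # avoid division by zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float weights on the edge device."""
    return [q * scale for q in quantized]
```

Each weight then occupies one byte instead of four, at the cost of a rounding error of at most half the scale per weight.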

Enterprise MLOps platforms implement defense-in-depth security including encryption for data at rest and in transit, role-based access control limiting model access to authorized users, network segmentation isolating production infrastructure, secrets management protecting credentials and API keys, and audit logging tracking all infrastructure access and model operations. Additional security measures include model artifact signing to prevent unauthorized modifications, vulnerability scanning of containerized deployments, compliance with industry security standards (SOC 2, ISO 27001), and integration with enterprise identity management systems (Active Directory, LDAP, SAML). These capabilities protect intellectual property invested in model development while satisfying security requirements for financial services, healthcare, and other regulated industries operating throughout the USA, UK, Germany, France, Australia, and global markets.
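Model artifact signing can be illustrated with a keyed hash: the build pipeline signs the serialized model, and the serving environment refuses to load it if the signature does not match. The sketch below uses HMAC-SHA256 from the Python standard library as a simplified stand-in. Production systems more commonly use asymmetric signatures so that verifiers never hold the signing key, and the function names here are hypothetical.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the serialized model bytes."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, key: bytes, signature: str) -> bool:
    """Check the signature using a constant-time comparison."""
    expected = sign_artifact(artifact, key)
    return hmac.compare_digest(expected, signature)
```

The key itself would come from the secrets management system mentioned above, never from source control or the artifact store.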