Prompt Engineering at Scale: Best Practices for Production-Ready AI Systems

The world of work is changing fast. Artificial intelligence (AI) is no longer a futuristic concept; it’s a present-day reality transforming industries. Demand for AI fluency in job postings has grown sevenfold in just two years. At the heart of this transformation is a new, essential skill: prompt engineering.

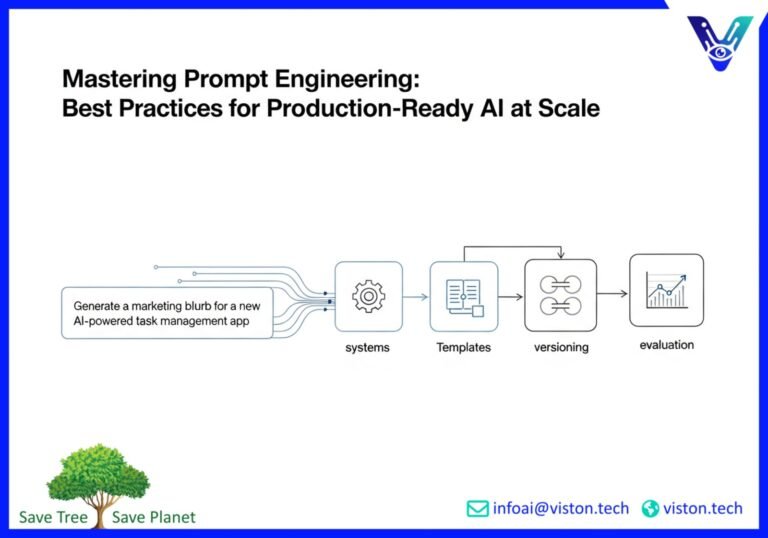

Mastering how to communicate with Large Language Models (LLMs) is now a core professional competency. It’s the key to unlocking the full potential of AI, moving from simple queries to building robust, production-ready AI systems that drive real business value. This guide will walk you through the best practices for scaling your prompt engineering efforts, from foundational principles to advanced strategies, ensuring your AI initiatives are stable, efficient, and impactful.

The Fundamentals: Crafting a Strong Foundation

Before diving into complex techniques, it’s crucial to master the basics. Effective prompt engineering starts with clarity and precision. Think of it as giving a new employee a task; the more specific your instructions, the better the outcome.

Clarity is King

Vague prompts lead to vague and often unusable results. Avoid ambiguity by providing clear, concise instructions that leave no room for misinterpretation.

* Be Specific: Instead of “Write about marketing,” try “Write a 500-word blog post on the top 3 social media marketing strategies for B2B tech startups in 2025.”

* Define the Audience and Tone: Specify who the content is for and the desired tone. For example, “Explain the concept of blockchain to a non-technical audience in a friendly and approachable tone.”

* Provide Context: Give the AI the necessary background information to complete the task accurately. If you’re asking for a project proposal, include details about the client, goals, budget, and timeline.
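As a rough illustration of the three points above, the sketch below assembles a prompt from an explicit task, audience, tone, and optional context. The function name `build_prompt` and the field labels are hypothetical choices for this example, not a standard.

```python
def build_prompt(task: str, audience: str, tone: str, context: str = "") -> str:
    """Assemble a prompt that states the task, audience, tone, and background."""
    parts = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Tone: {tone}",
    ]
    # Context is optional; include it only when background details are supplied.
    if context:
        parts.append(f"Context: {context}")
    return "\n".join(parts)


prompt = build_prompt(
    task="Explain the concept of blockchain.",
    audience="non-technical readers",
    tone="friendly and approachable",
)
print(prompt)
```

Keeping each element in its own labeled line makes it easy to see at a glance which part of the instruction is missing or vague.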

Structure Your Prompts for Success

A well-structured prompt guides the LLM to produce a well-structured output. Breaking down complex requests into smaller, logical steps can dramatically improve the quality of the response.

* Use Bullet Points or Numbered Lists: For multi-step tasks, outline each step clearly.

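* Task Decomposition: Instead of one massive prompt, break a complex task into a series of smaller, more manageable prompts, each feeding its output into the next. This is often called prompt chaining. It complements chain-of-thought prompting, in which a single prompt asks the model to reason step by step before answering.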

* Specify the Output Format: Clearly state the desired format, whether it’s JSON, Markdown, a table, or a specific heading structure. This is a highly reliable technique for controlling the output.
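Task decomposition can be sketched as a simple chain in which each prompt receives the previous step's output. In the example below, `run_chain` and `fake_model` are hypothetical names; `fake_model` is a stand-in so the code runs without any provider, and a real system would replace it with an actual LLM call.

```python
from typing import Callable


def run_chain(steps: list[str], source_text: str,
              call_model: Callable[[str], str]) -> str:
    """Run prompts in order, feeding each step's output into the next step."""
    current = source_text
    for instruction in steps:
        prompt = f"{instruction}\n\nInput:\n{current}"
        current = call_model(prompt)
    return current


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call: it only reports the instruction it received.
    instruction = prompt.split("\n", 1)[0]
    return f"[output of: {instruction}]"


steps = [
    "List the three main points of the input as bullet points.",
    "Rewrite the bullet points as a short paragraph.",
    "Return the paragraph as JSON with a single key 'summary'.",
]
result = run_chain(steps, "Raw meeting notes go here.", fake_model)
print(result)
```

Note that the last step also states the output format, so the final result is easier for downstream code to consume.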

Advanced Patterns for Production-Grade Performance

Once you have a solid grasp of the fundamentals, you can start implementing advanced patterns to optimize your prompts for the demands of a production environment. These techniques enhance reliability, control, and the overall quality of your AI system’s outputs.

System Prompts: Setting the Stage

System prompts are high-level instructions that define the AI’s persona, role, and constraints for the entire interaction. Think of them as the “rules of engagement.” A well-crafted system prompt promotes consistency and keeps the AI aligned with your objectives.

Example System Prompt:

“You are a helpful and friendly customer support assistant for a SaaS company. Your primary goal is to resolve user issues quickly and accurately. You should always be polite, empathetic, and professional. Do not provide information outside of the company’s official documentation.”
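In code, a system prompt is typically sent once at the start of every conversation, ahead of the user's messages. The sketch below assumes the role/content message layout that many chat-style APIs accept; the exact structure depends on your provider, and `build_messages` is a hypothetical helper.

```python
SYSTEM_PROMPT = (
    "You are a helpful and friendly customer support assistant for a SaaS company. "
    "Your primary goal is to resolve user issues quickly and accurately. "
    "You should always be polite, empathetic, and professional. "
    "Do not provide information outside of the company's official documentation."
)


def build_messages(user_input, history=None):
    """Place the system prompt first, then prior turns, then the new user turn."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_input})
    return messages


messages = build_messages("How do I reset my password?")
for message in messages:
    print(message["role"], "->", message["content"][:60])
```

Defining the system prompt as a single constant means every conversation starts from the same rules, which is what makes the assistant's behavior consistent.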

Reasoning Scaffolds: Guiding the AI’s Thought Process

For complex problem-solving, you can build “scaffolding” into your prompts to guide the AI’s reasoning. This involves instructing the model to think step-by-step, consider different perspectives, or verify its own work before providing a final answer. This approach is a more sophisticated version of chain-of-thought prompting.
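One possible scaffold, shown here only as a sketch, asks the model to restate the problem, reason step by step, verify its work, and then mark the final answer with a fixed label so that code can extract it. The marker text `FINAL ANSWER:` and both function names are assumptions made for this example.

```python
SCAFFOLD = """{question}

Work through the problem in this order:
1. Restate the problem in your own words.
2. Reason step by step toward a solution.
3. Check your result against the problem statement and correct any mistakes.
4. Finish with a line that starts with "FINAL ANSWER:".
"""


def scaffold_prompt(question: str) -> str:
    """Wrap a question in the reasoning scaffold."""
    return SCAFFOLD.format(question=question)


def extract_final_answer(response: str) -> str:
    """Return the text after the last 'FINAL ANSWER:' marker, or '' if absent."""
    _, found, tail = response.rpartition("FINAL ANSWER:")
    return tail.strip() if found else ""


print(scaffold_prompt("A plan costs $12 per user per month. What do 15 users cost per year?"))
sample_response = "Step 1: 12 * 15 = 180 per month.\nStep 2: 180 * 12 = 2160.\nFINAL ANSWER: $2,160"
print(extract_final_answer(sample_response))
```

Separating the reasoning from a clearly marked answer lets an application show or log the reasoning while passing only the final value to the next step.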

Format Locking: Ensuring Consistent Outputs

In production systems, consistency is critical, especially when the AI’s output is fed into another automated process. Format locking involves providing explicit instructions and examples of the desired output structure, such as a JSON schema. This minimizes parsing errors and ensures seamless integration with other parts of your technology stack. For more in-depth knowledge on advanced techniques, Advanced Retrieval for AI with LlamaIndex offers valuable insights.
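Format locking usually has two halves: telling the model exactly what to return, and rejecting anything that does not match before it reaches the next system. The following sketch uses only the standard library; the field names (`category`, `priority`, `summary`) and the helper `parse_locked_output` are hypothetical.

```python
import json

# The agreed output contract: field name -> expected Python type.
REQUIRED_FIELDS = {"category": str, "priority": int, "summary": str}

# Appended to the prompt so the model sees both the rule and an example.
FORMAT_INSTRUCTIONS = (
    "Respond with a single JSON object and nothing else. "
    'Example: {"category": "billing", "priority": 2, "summary": "Refund request"}'
)


def parse_locked_output(raw: str) -> dict:
    """Parse model output and raise ValueError if it breaks the agreed format."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"Missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"Field {field!r} should be {expected_type.__name__}")
    return data


parsed = parse_locked_output(
    '{"category": "billing", "priority": 2, "summary": "Refund request"}'
)
print(parsed["category"], parsed["priority"])
```

When validation fails, a common response is to retry the request or route it to a fallback, rather than passing malformed output downstream.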

Building a Prompt Template Library: The Key to Scalability

As your organization’s use of AI grows, managing prompts on an ad-hoc basis becomes unsustainable. A centralized prompt template library is essential for scalability, consistency, and collaboration.

Why You Need a Template Library

* Consistency: Ensures that everyone in the organization is using the same high-quality, approved prompts for similar tasks.

* Efficiency: Saves time by allowing users to reuse and adapt existing templates instead of starting from scratch.

* Collaboration: Facilitates knowledge sharing and allows both technical and non-technical team members to contribute to prompt development.

* Governance: Provides a single source of truth for all prompts, making it easier to manage updates, enforce standards, and maintain security.

Best Practices for Your Library

* Organize by Use Case: Structure your library with a clear hierarchy based on departments, projects, or specific tasks.

* Include Clear Documentation: Each template should have a description of its purpose, expected inputs, and sample outputs.

* Implement Access Controls: Use role-based permissions to control who can create, edit, and approve prompts.
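A template library can start very small. The sketch below is one possible shape, assuming an in-memory dictionary keyed by use case; `PromptTemplate`, `LIBRARY`, and `register` are hypothetical names, and a production library would add persistent storage and the access controls described above.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    name: str          # use-case path, e.g. "support/summarize_ticket"
    description: str   # documentation: what the template is for
    template: str      # text with $placeholders for the expected inputs

    def render(self, **inputs: str) -> str:
        # substitute() raises KeyError if an expected input is missing.
        return Template(self.template).substitute(**inputs)


LIBRARY: dict = {}


def register(template: PromptTemplate) -> None:
    """Add a template, refusing to silently overwrite an existing one."""
    if template.name in LIBRARY:
        raise ValueError(f"Template already registered: {template.name}")
    LIBRARY[template.name] = template


register(PromptTemplate(
    name="support/summarize_ticket",
    description="Summarizes a support ticket in one sentence.",
    template="Summarize this $product support ticket in one sentence:\n$ticket_text",
))

rendered = LIBRARY["support/summarize_ticket"].render(
    product="Acme CRM", ticket_text="Login fails after password reset."
)
print(rendered)
```

Failing loudly on a missing input or a duplicate name is a deliberate choice here: silent defaults are a common source of inconsistent prompts.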

Evaluation and Versioning: The Cornerstone of Production Stability

Treat your prompts like you treat your code. In a production environment, “good enough” isn’t good enough. You need a systematic approach to evaluating and versioning your prompts to ensure they remain effective and reliable over time.

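The best practice is to combine three things: prompt versioning, regression testing, and observability. Versioning lets you track and revert changes, regression tests catch breakage before deployment, and observability surfaces problems after it.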

Prompt Versioning

Just like software, prompts evolve. Implementing a version control system for your prompts is crucial. This allows you to:

* Track Changes: Keep a complete history of all modifications to a prompt.

* Rollback to Previous Versions: If a new version of a prompt is underperforming, you can easily revert to a previous, more stable version.

* A/B Testing: Experiment with different prompt variations and compare their performance systematically.
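The tracking and rollback ideas above can be illustrated with a minimal in-memory class. This is only a sketch: `VersionedPrompt` is a hypothetical name, and many teams would get the same history and rollback by storing prompt files in an ordinary version control system such as Git.

```python
class VersionedPrompt:
    """Keeps every published version of a prompt and tracks which one is live."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._versions: list[str] = []
        self._active = 0  # 1-based version number; 0 means nothing published

    def publish(self, text: str) -> int:
        """Store a new version, make it live, and return its version number."""
        self._versions.append(text)
        self._active = len(self._versions)
        return self._active

    def rollback(self, version: int) -> None:
        """Make an earlier version live again without deleting any history."""
        if not 1 <= version <= len(self._versions):
            raise ValueError(f"Unknown version: {version}")
        self._active = version

    @property
    def active(self) -> tuple[int, str]:
        if self._active == 0:
            raise LookupError("No version has been published yet")
        return self._active, self._versions[self._active - 1]


prompt = VersionedPrompt("support/summarize_ticket")
prompt.publish("Summarize the ticket in one sentence.")
prompt.publish("Summarize the ticket in one sentence and name the product.")
prompt.rollback(1)  # version 2 underperformed, so revert to version 1
print(prompt.active)
```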

Regression Testing and Evaluation

Before deploying a new prompt version, you must test it rigorously. Regression testing involves running the new prompt against a standardized set of test cases to ensure that it not only performs the new task correctly but also doesn’t negatively impact existing functionality. Develop a suite of evaluation metrics to measure performance, which can include both quantitative measures (e.g., accuracy, response time) and qualitative assessments from human reviewers.
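A regression test for a prompt can be as simple as running it over a fixed set of cases and comparing the pass rate to a threshold. In the sketch below, the test cases, the 0.9 threshold, and the keyword-based `fake_model` are all illustrative assumptions; a real suite would call the actual model and use cases drawn from production traffic.

```python
from typing import Callable

# A small fixed set of inputs with known correct outputs.
GOLDEN_CASES = [
    {"input": "I was charged twice this month.", "expected": "billing"},
    {"input": "The app crashes when I log in.", "expected": "technical"},
    {"input": "How do I change my password?", "expected": "account"},
]

PROMPT_V2 = (
    "Classify the support ticket as billing, technical, or account. "
    "Reply with one word.\n\nTicket: {ticket}"
)


def evaluate(prompt_template: str, call_model: Callable[[str], str],
             cases: list[dict]) -> float:
    """Return the fraction of cases where the model output matches exactly."""
    passed = 0
    for case in cases:
        prompt = prompt_template.format(ticket=case["input"])
        output = call_model(prompt).strip().lower()
        if output == case["expected"]:
            passed += 1
    return passed / len(cases)


def fake_model(prompt: str) -> str:
    # Keyword rules stand in for a real LLM so the example runs offline.
    text = prompt.lower()
    if "charged" in text:
        return "billing"
    if "crashes" in text:
        return "technical"
    return "account"


accuracy = evaluate(PROMPT_V2, fake_model, GOLDEN_CASES)
print(f"accuracy = {accuracy:.2f}")
ready_to_deploy = accuracy >= 0.9  # block deployment below the threshold
```

Running the same cases against every new prompt version is what turns a one-off check into a regression test.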

Observability Platforms

Once a prompt is in production, you need to monitor its performance in real time. LLM observability platforms are specialized tools that let you track key metrics, identify issues, and understand how your prompts behave in the real world. This continuous feedback loop is essential for ongoing LLM optimization and maintenance. For those looking to deepen their understanding of machine learning operations, the MLOps Community is an excellent resource.
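Dedicated platforms do much more, but the core idea can be shown with a thin wrapper that records a few metrics for every call. The sketch below is not any particular platform's API; `observed_call`, the metric names, and `fake_model` are assumptions for illustration.

```python
import json
import time
from typing import Callable


def observed_call(prompt_name: str, version: int, prompt: str,
                  call_model: Callable[[str], str]) -> tuple[str, dict]:
    """Call the model and return its output plus a metrics record."""
    start = time.perf_counter()
    output = call_model(prompt)
    latency_ms = (time.perf_counter() - start) * 1000

    # Format adherence: does the output parse as JSON?
    try:
        json.loads(output)
        valid_json = True
    except json.JSONDecodeError:
        valid_json = False

    record = {
        "prompt_name": prompt_name,
        "version": version,
        "latency_ms": round(latency_ms, 2),
        "output_chars": len(output),
        "valid_json": valid_json,
    }
    return output, record


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call.
    return '{"category": "billing"}'


output, record = observed_call(
    "support/classify_ticket", 2, "Classify: I was charged twice.", fake_model
)
print(json.dumps(record))
```

Tagging each record with the prompt name and version makes it possible to compare versions in production and to spot a regression soon after a release.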

Common Pitfalls and How to Avoid Them

Even with the best practices in place, there are common mistakes that can derail your prompt engineering efforts. Being aware of these pitfalls is the first step to avoiding them.

* Being Too Vague: The most common mistake. Always strive for specificity.

* Ignoring the Model’s Limitations: Understand that LLMs are not all-knowing. Unless they are connected to external tools or data sources, they have no access to real-time information, and they can “hallucinate,” that is, make up facts.

* Forgetting to Iterate: Prompt engineering is an iterative process. Your first prompt is rarely your best. Continuously test, refine, and improve.

* Neglecting Safety and Ethics: Always consider the potential for bias and harmful outputs. Build in safeguards and regularly audit your AI’s responses.

* Lack of Testing: Never deploy a prompt to production without thorough testing.

Conclusion: Prompt Engineering as a Strategic Imperative

Prompt engineering has evolved from a niche trick to a strategic discipline that is fundamental to successful AI implementation. By embracing a structured, engineering-led approach, organizations can move beyond experimentation and build production-ready AI systems that are reliable, scalable, and deliver tangible business outcomes. The journey from a simple prompt to a robust AI application requires a commitment to best practices, continuous improvement, and the right tools.

Ready to unlock the full potential of your AI initiatives? The experts at Viston AI specialize in developing enterprise-grade AI solutions that drive real results. From strategic consulting to custom AI development, we provide the expertise you need to transform your business.

Contact Viston AI today to learn how our AI-powered solutions can help you innovate and grow.


Frequently Asked Questions (FAQs)

1. What is prompt engineering?

Prompt engineering is the process of designing, crafting, and optimizing input text (prompts) to guide Large Language Models (LLMs) toward generating desired, accurate, and relevant outputs. It’s a blend of art and science that involves understanding the AI’s capabilities and limitations to communicate instructions effectively.

2. Why is prompt engineering so important for businesses?

Effective prompt engineering is crucial for businesses because it directly impacts the quality, reliability, and cost-effectiveness of AI applications. Well-crafted prompts lead to more accurate results, better user experiences, and a higher return on investment in AI technologies. It’s the key to transforming a powerful AI model into a valuable business tool.

3. What is the difference between a simple prompt and a production-ready prompt?

A simple prompt is often a one-off question or instruction used for experimentation. A production-ready prompt is a highly engineered and tested instruction designed for a live, automated system. It includes clear constraints, role definitions (system prompts), structured formatting, and has undergone rigorous evaluation and versioning to ensure consistent and reliable performance at scale.

4. What are some key LLM optimization techniques?

Key LLM optimization techniques related to prompts include using clear and specific language, breaking down complex tasks (task decomposition), providing examples (few-shot prompting), guiding the model’s reasoning process (chain-of-thought), and specifying the exact output format. For the models themselves, optimization can involve fine-tuning on specific datasets and other advanced methods.
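Few-shot prompting, mentioned above, means placing a handful of worked examples in the prompt before the real input. A minimal sketch, with a hypothetical `few_shot_prompt` helper and made-up example tickets:

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]],
                    query: str) -> str:
    """Build a prompt with worked input/output pairs followed by the new input."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # The final Output line is left empty for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = few_shot_prompt(
    "Classify each support ticket as billing or technical.",
    [
        ("I was charged twice this month.", "billing"),
        ("The app crashes when I log in.", "technical"),
    ],
    "My invoice shows the wrong amount.",
)
print(prompt)
```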

5. Why is a prompt template library necessary for scaling AI?

A prompt template library is a centralized repository of pre-approved, reusable prompts. It is essential for scaling AI initiatives because it ensures consistency across an organization, improves efficiency by eliminating redundant work, facilitates collaboration between technical and non-technical teams, and provides a framework for governance and quality control.

6. How do I measure the performance of a prompt?

Prompt performance is measured through a combination of automated and human evaluation. Automated metrics can include accuracy, latency (response time), and adherence to a specified format. Human evaluation is critical for assessing more subjective qualities like tone, relevance, and helpfulness. A robust evaluation framework often includes a “golden dataset” of test cases to run new prompts against.

7. What is prompt versioning?

Prompt versioning is the practice of applying software version control principles to prompts. Each change to a prompt is tracked and assigned a version number (e.g., v1.0, v1.1). This allows teams to manage changes systematically, collaborate on improvements, test different versions, and quickly roll back to a previous version if a new one causes problems, ensuring production stability.

8. What are the biggest mistakes to avoid in prompt engineering for production systems?

The biggest mistakes include using vague and untested prompts, failing to specify the output format, not having a version control system, neglecting to monitor prompts in production, and overlooking the importance of a centralized prompt management system or library. These oversights can lead to inconsistent results, system errors, and an inability to scale AI effectively.