LLM Cost Optimization: Slash API & Infra Spend Before It Slashes Your Budget

Large Language Models (LLMs) are revolutionizing industries, but their power comes with a hefty price tag. As enterprises weave AI into their core operations, the cost of API calls and the underlying infrastructure can quickly spiral out of control. Many organizations are discovering that their initial AI spending forecasts are wildly inaccurate, leading to significant budget overruns. The good news? Strategic LLM cost optimization can dramatically reduce these expenses, in some cases by as much as 80%, without sacrificing performance.

For C-suite executives, AI engineers, and IT leaders, mastering LLM cost management is no longer just a technical challenge; it’s a critical business imperative for 2025 and beyond. This blog post demystifies the complexities of LLM spending and provides a clear, actionable framework for cutting costs while boosting efficiency and responsiveness. We’ll explore how cost-aware routing and intelligent context management can transform your AI from a cost center into a strategic, high-ROI asset.

Understanding the Hidden Costs of LLMs

Before diving into solutions, it’s crucial to understand what drives LLM expenses. The primary culprit is the consumption-based pricing model used by most providers. You pay for every “token” you send to the model (input) and receive from it (output); a token is a chunk of text, often a whole word or a fragment of one. Output tokens are often significantly more expensive than input tokens, so long, verbose responses are a major drain on resources.
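To make the input/output price asymmetry concrete, here is a minimal Python sketch that estimates the cost of a single request. The per-million-token prices are placeholder assumptions, not any provider’s actual rates; substitute the figures from your provider’s pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Per-million-token prices in USD (placeholder values, not real rates)."""
    input_per_million: float
    output_per_million: float


def request_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one call under consumption-based pricing."""
    return (input_tokens * price.input_per_million
            + output_tokens * price.output_per_million) / 1_000_000


# Hypothetical model whose output tokens cost 4x its input tokens.
example = ModelPrice(input_per_million=2.50, output_per_million=10.00)

# Both requests use 1,500 tokens in total; only the input/output split differs.
terse = request_cost(example, input_tokens=1_000, output_tokens=500)
verbose = request_cost(example, input_tokens=500, output_tokens=1_000)
print(f"terse: ${terse:.5f}  verbose: ${verbose:.5f}")
```

With these assumed prices, shifting the bulk of the same 1,500 tokens to the output side raises the cost of the request by half.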

Beyond direct API fees, hidden costs lurk in the infrastructure required to support these models, especially for companies self-hosting open-source alternatives. These include compute power, data storage, and the engineering hours spent on integration and maintenance. Without a clear view into this fragmented spending, costs can escalate quickly, jeopardizing the financial viability of your AI initiatives.

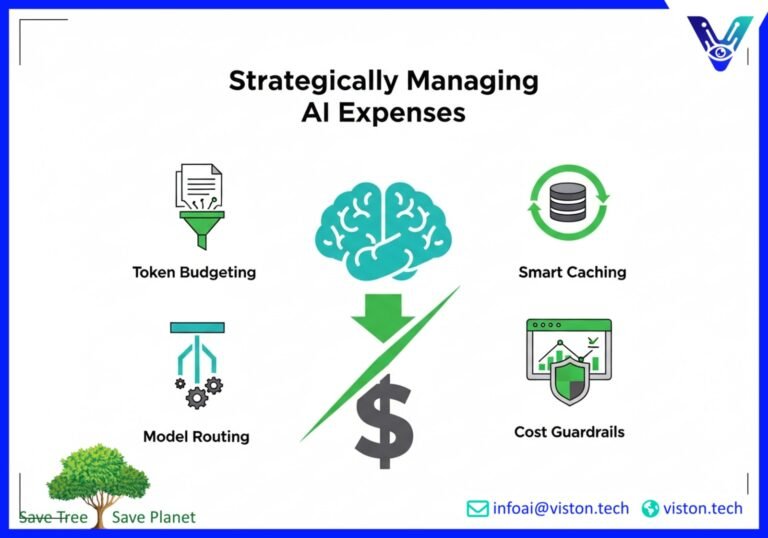

The Four Pillars of LLM Cost Optimization

Effective LLM cost optimization is not about finding a single magic bullet. It’s about implementing a multi-layered strategy that addresses inefficiencies at every stage of the AI workflow. Here are four foundational pillars that can deliver immediate and substantial savings.

1. Intelligent Context Controls and Token Budgeting

The most direct way to cut costs is to reduce the number of tokens you process. Many applications send far more information to the LLM than necessary, bloating prompts with irrelevant context. This is where token budgeting comes in.

Think of tokens as the currency of your AI. Every prompt has a budget, and every piece of information must justify its inclusion.

- Trim the Fat from Prompts: Start by removing conversational fluff, repetitive instructions, and verbose examples from your prompts. Short, direct, and clear instructions often yield better results at a fraction of the cost.

- Summarize and Compress: Instead of feeding a long conversation history or a large document directly into a prompt, use a smaller, faster model to summarize the text first. This condensed version provides the necessary context without the token overhead.

- Context-Aware Retrieval: For applications that draw on a knowledge base, like Retrieval-Augmented Generation (RAG), refine your retrieval strategy. Instead of pulling ten lengthy document chunks, retrieve three highly relevant, concise snippets. This focused approach not only saves tokens but often improves the accuracy of the response.
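Token budgeting for retrieved context can be sketched as a greedy packing step: rank snippets by relevance and keep adding them until the budget is spent. This is a minimal illustration, not a production retriever. The four-characters-per-token estimate is a rough assumption for English text; a real system would count tokens with the model’s own tokenizer (for OpenAI models, the tiktoken library, for example). The `pack_context` helper and the sample snippets are hypothetical.

```python
from typing import List, Tuple


def estimate_tokens(text: str) -> int:
    """Rough heuristic: ~4 characters per token for English text (an assumption)."""
    return max(1, len(text) // 4)


def pack_context(snippets: List[Tuple[float, str]], budget: int) -> List[str]:
    """Greedily keep the most relevant snippets that fit within a token budget.

    `snippets` holds (relevance_score, text) pairs, e.g. from a retriever;
    a higher score means more relevant.
    """
    selected: List[str] = []
    used = 0
    for _score, text in sorted(snippets, key=lambda s: s[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # skip oversized snippets; a smaller one may still fit
        selected.append(text)
        used += cost
    return selected


retrieved = [
    (0.92, "Password resets are handled from the account settings page."),
    (0.35, "Company history. " * 40),  # long, low-relevance chunk
    (0.88, "Reset links expire after 24 hours."),
]
context = pack_context(retrieved, budget=60)
print(context)
```

Skipping an oversized snippet instead of stopping at the first one that does not fit lets smaller, still-relevant snippets use the remaining budget. Here the long, off-topic chunk is dropped and both short, relevant snippets are kept.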

By implementing disciplined token budgeting, teams can often achieve immediate cost reductions of 15-40%. For more insights on prompt engineering, The Prompting Guide offers excellent resources.

2. The Power of Smart Caching

How many times does your organization ask the same or a semantically similar question to an LLM? Caching is a simple yet powerful technique that stores the answers to previous queries. When an identical or similar query comes in, the system retrieves the answer from the cache instead of making another expensive API call.

There are two primary types of caching:

- Exact-Match Caching: This is the most basic form. If the new prompt exactly matches a previous one, the cached response is served. This is highly effective for applications with frequent, repetitive queries, such as customer service bots answering common questions.

- Semantic Caching: This more advanced technique uses vector embeddings to understand the meaning behind a query. If a new prompt is semantically similar to a cached one—even if the wording is different—the system can return the stored answer. For example, “How do I reset my password?” and “I forgot my password, what should I do?” would be recognized as the same query.
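The two caching styles can be sketched in a few dozen lines of Python. This is a minimal, in-memory illustration under stated assumptions: the exact-match cache applies light normalization (lowercasing and collapsing whitespace) before hashing, and `toy_embed` is a bag-of-words stand-in for a real embedding model, used only so the example runs without external services. The class names and the similarity threshold are hypothetical choices, not any library’s API.

```python
import hashlib
import math
import re
from collections import Counter
from typing import Callable, Dict, List, Optional, Tuple

Vector = Dict[str, float]


def normalize(prompt: str) -> str:
    """Lowercase and collapse whitespace so trivial variations share one cache key."""
    return " ".join(prompt.lower().split())


class ExactMatchCache:
    """Serves a stored response only when the normalized prompt matches exactly."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}

    def _key(self, model: str, prompt: str) -> str:
        # Include the model name so answers from different models are never mixed.
        return hashlib.sha256(f"{model}\n{normalize(prompt)}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> Optional[str]:
        return self._store.get(self._key(model, prompt))

    def put(self, model: str, prompt: str, response: str) -> None:
        self._store[self._key(model, prompt)] = response


def toy_embed(text: str) -> Vector:
    """Bag-of-words stand-in for a real embedding model (illustration only)."""
    counts = Counter(re.findall(r"[a-z]+", text.lower()))
    return {word: float(n) for word, n in counts.items()}


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norms = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norms if norms else 0.0


class SemanticCache:
    """Returns a stored response when a new prompt is similar enough to a cached one."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float) -> None:
        self.embed = embed
        self.threshold = threshold
        self._entries: List[Tuple[Vector, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        query = self.embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:  # linear scan; use a vector index at scale
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        self._entries.append((self.embed(prompt), response))


ANSWER = "Use the 'Forgot password' link on the sign-in page."

exact = ExactMatchCache()
exact.put("small-model", "How do I reset my password?", ANSWER)
print(exact.get("small-model", "  how do I reset my PASSWORD? "))  # hit after normalization

semantic = SemanticCache(toy_embed, threshold=0.6)
semantic.put("How do I reset my password?", ANSWER)
print(semantic.get("I forgot my password, what should I do?"))  # similar wording: hit
print(semantic.get("What are your opening hours?"))  # unrelated: None
```

The threshold is the key tuning knob: set it too low and unrelated questions receive the wrong cached answer; set it too high and paraphrases miss the cache. The 0.6 used here suits only the toy embedding; a real embedding model needs its own calibration.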

Implementing a robust caching layer can deflect a significant percentage of inbound queries, leading to substantial cost savings and a dramatic improvement in response times for users.

3. Dynamic Model Routing and Router Trees

One of the biggest mistakes in enterprise AI is using a single, powerful—and expensive—model like GPT-4 for every single task. This is like using a sledgehammer to crack a nut. The reality is that many queries are simple and can be handled perfectly well by smaller, faster, and much cheaper models.

This is where model routing comes into play. A model router, or a “router tree,” acts as an intelligent traffic controller for your AI queries. It analyzes each incoming prompt and directs it to the most appropriate model based on its complexity, intent, and priority.

Here’s how a typical router tree might work:

- A simple query, like a basic classification or data extraction task, is sent to a small, fast open-source model.

- A moderately complex query, such as summarizing a short email, is routed to a mid-tier model like GPT-3.5-Turbo.

- Only the most complex, high-stakes queries requiring deep reasoning or creativity are escalated to a premium model like Claude 3 Opus or GPT-4.
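A router tree can start as a handful of ordered rules. The sketch below is a hypothetical, rule-based illustration of the three tiers just described; the tier names, keyword hints, and the `high_stakes` flag are assumptions for the example. Production routers often replace hand-written rules with a trained classifier, but the branching structure is the same.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tier names; map them to the models you actually deploy.
SMALL, MID, PREMIUM = "small-open-source", "mid-tier", "premium"

SIMPLE_TASKS = {"classify", "extract"}
# Crude keyword hints for reasoning-heavy work; substring matching is deliberately simple.
REASONING_HINTS = ("step by step", "analyze", "legal", "strategy")


@dataclass
class Query:
    prompt: str
    task: Optional[str] = None  # e.g. "classify", "extract", "summarize"
    high_stakes: bool = False   # set by the caller, e.g. for regulated output


def route(query: Query) -> str:
    """A tiny rule-based router tree: escalate only when a rule demands it."""
    text = query.prompt.lower()
    # Branch 1: high-stakes or reasoning-heavy requests go to the premium tier.
    if query.high_stakes or any(hint in text for hint in REASONING_HINTS):
        return PREMIUM
    # Branch 2: narrow, well-defined tasks such as classification or extraction.
    if query.task in SIMPLE_TASKS:
        return SMALL
    # Branch 3: everything else (e.g. summarizing a short email) uses the mid tier.
    return MID


print(route(Query("Is this review positive or negative? 'Great product!'", task="classify")))
print(route(Query("Summarize this email: please move standup to 10am.", task="summarize")))
print(route(Query("Analyze the liability risks in this contract clause.", high_stakes=True)))
```

Checking the premium conditions first means a high-stakes request is never downgraded just because its task type looks simple, while anything unrecognized lands on the mid tier rather than the most expensive one.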

This tiered approach ensures that you only pay for the horsepower you truly need. Published research suggests that intelligent model routing can cut inference costs by as much as 85% on some benchmarks while retaining most of the quality of using a top-tier model exclusively. Because smaller models also tend to respond faster, this strategy optimizes for both cost and speed, delivering a superior user experience. To learn more about the architecture of these systems, check out this overview of production-ready LLM routers.
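The arithmetic behind routing savings is a weighted average over tiers. The following sketch uses made-up per-request costs and a made-up traffic mix, purely to show how such percentages arise; real savings depend on your own prices, traffic, and the quality you are willing to accept.

```python
from typing import Dict

# Hypothetical average cost per request for each tier, in USD (not real prices).
COST_PER_REQUEST: Dict[str, float] = {"small": 0.0002, "mid": 0.002, "premium": 0.02}


def blended_cost(mix: Dict[str, float]) -> float:
    """Average cost per request, given the share of traffic sent to each tier."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * COST_PER_REQUEST[tier] for tier, share in mix.items())


premium_only = blended_cost({"premium": 1.0})
routed = blended_cost({"small": 0.6, "mid": 0.3, "premium": 0.1})
savings = 1 - routed / premium_only
print(f"premium-only ${premium_only:.5f}, routed ${routed:.5f}, savings {savings:.0%}")
```

With 60% of traffic on the small tier, 30% on the mid tier, and 10% on premium, the blended cost is $0.00272 per request versus $0.02 for premium-only, a reduction of about 86%. Shift the mix toward premium and the savings shrink quickly.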

4. Cost Dashboards and Proactive Guardrails

You can’t control what you can’t see. The final pillar of LLM cost optimization is establishing comprehensive visibility and control over your AI spending. This requires moving beyond monthly invoices and implementing real-time monitoring.

- Cost Dashboards: A centralized dashboard should provide a granular, real-time view of your LLM usage. It should allow you to track spending by project, team, user, and even by individual model. This visibility helps you identify which applications are driving costs and where your optimization efforts will have the most impact. Visibility alone can often lead to a 10-20% reduction in spend as teams become more conscious of their consumption.
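At its core, a cost dashboard is an aggregation over per-call usage logs. Here is a minimal sketch, assuming each call is logged with its project, team, user, model, and computed cost; the `UsageRecord` fields and the `spend_by` helper are hypothetical, not the schema of any particular monitoring tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class UsageRecord:
    """One logged LLM call; field names are illustrative."""
    project: str
    team: str
    user: str
    model: str
    cost_usd: float


def spend_by(records: Iterable[UsageRecord], *dims: str) -> Dict[Tuple[str, ...], float]:
    """Total spend grouped by any combination of dimensions, e.g. ("team", "model")."""
    totals: Dict[Tuple[str, ...], float] = defaultdict(float)
    for record in records:
        key = tuple(getattr(record, dim) for dim in dims)
        totals[key] += record.cost_usd
    # Largest first, so the biggest cost drivers appear at the top of the dashboard.
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


log = [
    UsageRecord("support-bot", "cx", "alice", "premium", 4.20),
    UsageRecord("support-bot", "cx", "bob", "small", 0.15),
    UsageRecord("doc-search", "platform", "carol", "premium", 1.10),
    UsageRecord("doc-search", "platform", "carol", "mid", 0.40),
]
for (team, model), cost in spend_by(log, "team", "model").items():
    print(f"{team:<10} {model:<8} ${cost:.2f}")
```

Because `spend_by` accepts any combination of dimensions, the same log can answer “which team spends the most?” as well as “which model does each project lean on?”.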

- Automated Guardrails: Don’t wait for bill shock at the end of the month. Implement automated guardrails to prevent runaway costs before they happen. These can include:

  - Budget Alerts: Set thresholds to automatically notify teams when spending is approaching its limit.

  - Token Caps: Enforce hard limits on the number of tokens an input or output can have.

  - Rate Limiting: Prevent individual users or processes from overwhelming the system with excessive API calls.

  - Circuit Breakers: Automatically disable a feature or fall back to a cheaper model if its cost exceeds a predefined budget.
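The four guardrails can be combined into a small per-feature controller. The sketch below is a single-process illustration with hypothetical names and thresholds (an 80% alert, a 512-token output cap, a 60-requests-per-minute limit, and a fallback model called `small-open-source`); a deployment running on several instances would keep these counters in a shared store rather than in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Guardrails:
    """Per-feature spend controls; all names and thresholds are illustrative."""
    monthly_budget_usd: float
    alert_fraction: float = 0.8                # budget alert at 80% of the limit
    max_output_tokens: int = 512               # token cap applied to each request
    max_requests_per_minute: int = 60          # rate limit per feature
    fallback_model: str = "small-open-source"  # hypothetical cheaper tier
    notify: Callable[[str], None] = print      # e.g. swap in a chat or email hook
    spent_usd: float = field(default=0.0, init=False)
    alerted: bool = field(default=False, init=False)
    recent_requests: List[float] = field(default_factory=list, init=False)

    def record_spend(self, cost_usd: float) -> None:
        """Budget alert: notify once when spend crosses the alert threshold."""
        self.spent_usd += cost_usd
        threshold = self.alert_fraction * self.monthly_budget_usd
        if not self.alerted and self.spent_usd >= threshold:
            self.alerted = True
            self.notify(f"Budget alert: ${self.spent_usd:.2f} of ${self.monthly_budget_usd:.2f} used")

    def cap_tokens(self, requested: int) -> int:
        """Token cap: never request more output tokens than the configured maximum."""
        return min(requested, self.max_output_tokens)

    def allow_request(self, now: Optional[float] = None) -> bool:
        """Rate limit: sliding window of at most N requests in the last 60 seconds."""
        now = time.monotonic() if now is None else now
        self.recent_requests = [t for t in self.recent_requests if now - t < 60]
        if len(self.recent_requests) >= self.max_requests_per_minute:
            return False
        self.recent_requests.append(now)
        return True

    def choose_model(self, preferred: str) -> str:
        """Circuit breaker: once the budget is exhausted, fall back to the cheaper model."""
        if self.spent_usd >= self.monthly_budget_usd:
            return self.fallback_model
        return preferred


alerts: List[str] = []
guard = Guardrails(monthly_budget_usd=100.0, max_requests_per_minute=2, notify=alerts.append)
guard.record_spend(85.0)               # crosses 80%: one alert is sent
print(guard.choose_model("premium"))   # still under budget: premium
guard.record_spend(20.0)               # now over budget
print(guard.choose_model("premium"))   # breaker trips: small-open-source
```

Here the breaker degrades to a cheaper model instead of failing outright; disabling the feature entirely would be an equally valid policy, as the list above notes.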

These tools, often part of a broader FinOps strategy for AI, empower organizations to innovate responsibly while maintaining strict budget discipline. Several platforms, such as Datadog, offer solutions for monitoring these costs.

The Future is Cost-Aware AI

As AI becomes more deeply embedded in business processes, managing its cost will be as crucial as managing any other operational expense. By adopting a proactive and multi-faceted approach to LLM cost optimization, enterprises can unlock the full potential of this transformative technology without breaking the bank.

Implementing strategies like token budgeting, intelligent caching, dynamic model routing, and robust cost monitoring ensures that your AI solutions are not only powerful and responsive but also economically sustainable. This strategic approach transforms AI from a potential budget liability into a powerful engine for growth and innovation.

Ready to take control of your LLM spending and maximize your AI ROI? The journey starts with a smart, cost-aware strategy.

#LLMCostOptimization #AIEconomics #FinOps #ModelRouting #TokenBudgeting #EnterpriseAI #FutureOfAI #CostSavings

Frequently Asked Questions (FAQs)

1. What is LLM cost optimization?

LLM cost optimization is the practice of implementing strategies and tools to reduce the expenses associated with using Large Language Models. This includes minimizing API call costs, optimizing infrastructure, and improving the overall efficiency of AI workflows without significantly impacting performance.

2. How does token budgeting help in reducing LLM costs?

Token budgeting involves carefully managing the number of tokens sent to and received from an LLM. Since most providers charge per token, reducing token usage directly cuts costs. This is achieved by writing more concise prompts, summarizing long texts before input, and removing unnecessary information.

3. What is model routing and why is it important for LLM cost optimization?

Model routing is a technique where incoming AI queries are dynamically sent to the most cost-effective LLM capable of handling the task. Instead of using an expensive, powerful model for every query, a router intelligently selects a cheaper model for simpler tasks, saving significant costs while maintaining quality.

4. How can caching improve LLM performance and save money?

Caching stores the responses to previous LLM queries. When an identical or semantically similar query is made again, the system can deliver the stored answer instantly instead of making a new, costly API call. This reduces latency, lowers API expenses, and lessens the computational load on the system.

5. Why are cost dashboards and guardrails essential for managing LLM spend?

Cost dashboards provide real-time visibility into how and where money is being spent on LLM usage, enabling teams to identify high-cost areas. Guardrails are automated rules, like budget alerts or token limits, that proactively prevent overspending, ensuring that AI costs stay within a predefined budget.

6. Can I optimize LLM costs if I’m using self-hosted open-source models?

Yes. While you avoid per-token API fees, self-hosting carries significant infrastructure costs (compute, storage). Strategies like model routing (using smaller models), quantization (storing model weights at lower numeric precision to shrink their memory footprint), and batching requests can dramatically improve hardware efficiency and lower your operational expenses.
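A back-of-the-envelope calculation shows why quantization matters for self-hosting: the memory needed just to hold a model’s weights scales with the number of bits stored per parameter. This sketch ignores the KV cache, activations, and runtime overhead, and the 7-billion-parameter size is only an example.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 10**9 bytes).

    Excludes the KV cache, activations, and runtime overhead.
    """
    return num_params * bits_per_param / 8 / 1e9


# Example: a 7-billion-parameter model at common precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit: ~{weight_memory_gb(7e9, bits):.1f} GB")
```

Each halving of precision halves the weight footprint (about 14 GB at 16-bit, 7 GB at 8-bit, and 3.5 GB at 4-bit in this example), which can let a model fit on a smaller, cheaper GPU, usually at some cost in output quality that is worth measuring for your workload.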

7. What is the first step my organization should take to optimize LLM costs?

The best first step is to gain visibility. Implement a cost dashboard or monitoring tool to understand your current spending patterns. Once you know which applications, teams, or models are driving the majority of your costs, you can prioritize and apply targeted optimization strategies like token budgeting and model routing for the greatest impact.

8. How much can a company realistically save with LLM cost optimization?

The savings can be substantial. By combining several strategies, such as prompt optimization, caching, and model routing, businesses can in some cases reduce their LLM-related expenses by as much as 80%. Even basic techniques can quickly lead to savings of 15-40%.
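Savings from separate techniques compound rather than add, because each one cuts what remains after the others. Below is a minimal sketch of that arithmetic, assuming the techniques act independently; the three percentages are illustrative assumptions, not measured results.

```python
from math import prod


def combined_savings(*reductions: float) -> float:
    """Overall reduction when each technique independently cuts the remaining spend."""
    return 1 - prod(1 - r for r in reductions)


# Illustrative inputs only: 30% fewer tokens per call, 40% of calls served from
# cache, and routing that halves the average price of the remaining calls.
total = combined_savings(0.30, 0.40, 0.50)
print(f"combined reduction: {total:.0%}")
```

Here 1 - 0.7 × 0.6 × 0.5 = 0.79, a 79% reduction; naively adding the three figures would suggest an impossible 120%.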

Take Control of Your AI Spend with Viston AI

Navigating the complexities of LLM cost optimization can be challenging. Viston AI provides AI-powered solutions designed to give you complete visibility and control over your AI/ML operational costs. Our intelligent platform helps you implement advanced strategies like cost-aware model routing and automated guardrails, ensuring you achieve maximum ROI from your AI investments.

Contact Viston AI today to learn how we can help you build a powerful, efficient, and cost-effective AI strategy.