Secure, Scalable, and Intelligent LLMOps Integration for Global Businesses.

Unlock the full potential of Generative AI with Viston. When you [Hire OpenAI Developers] from our expert team, you get more than API integration: you deploy a complete LLMOps architecture designed for enterprise velocity. With 15+ years of expertise and a track record of serving 2,860+ clients across the USA, UK, Germany, and Australia, Viston creates custom AI ecosystems that drive automation and decision intelligence. Whether you need advanced RAG pipelines, fine-tuned GPT-4o models, or secure Edge AI implementations, our developers transform raw API capabilities into competitive business advantages.

Accelerate Innovation with Strategic LLM Implementation

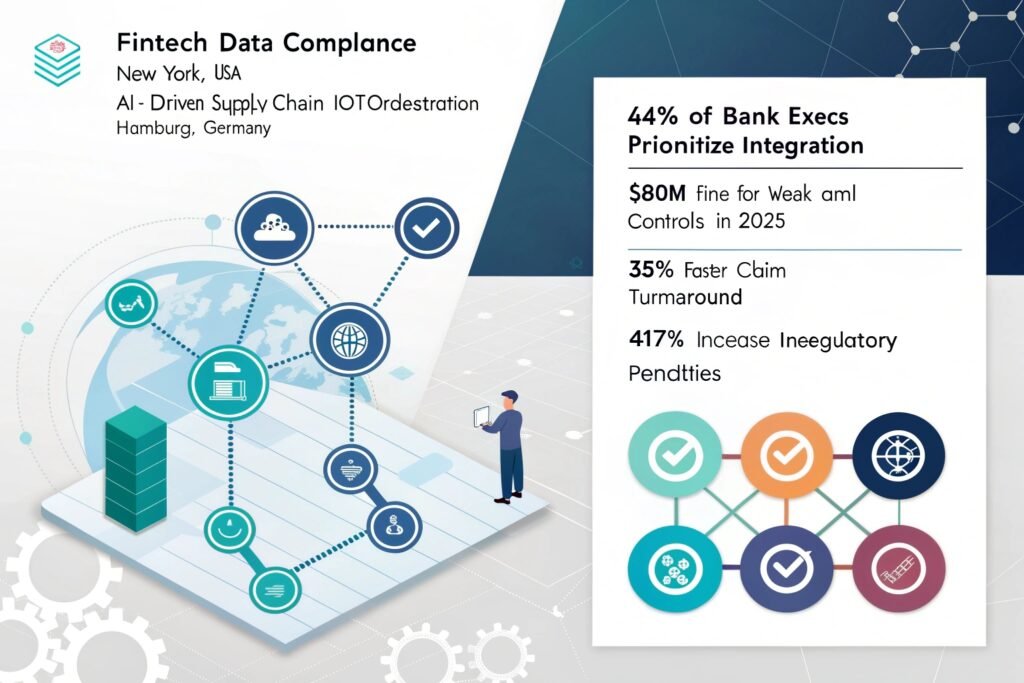

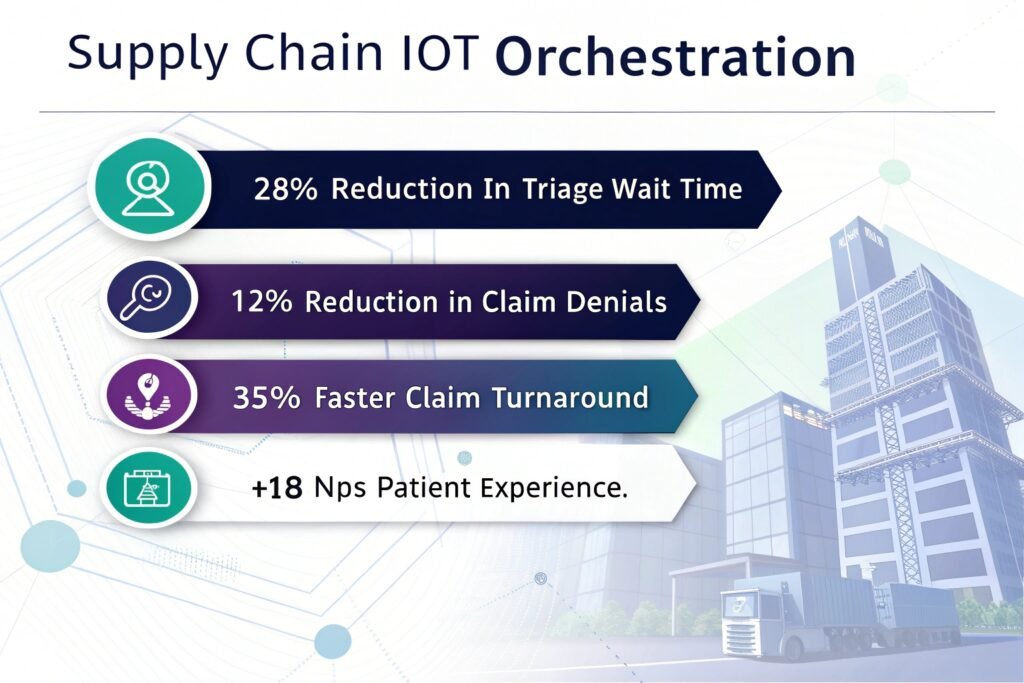

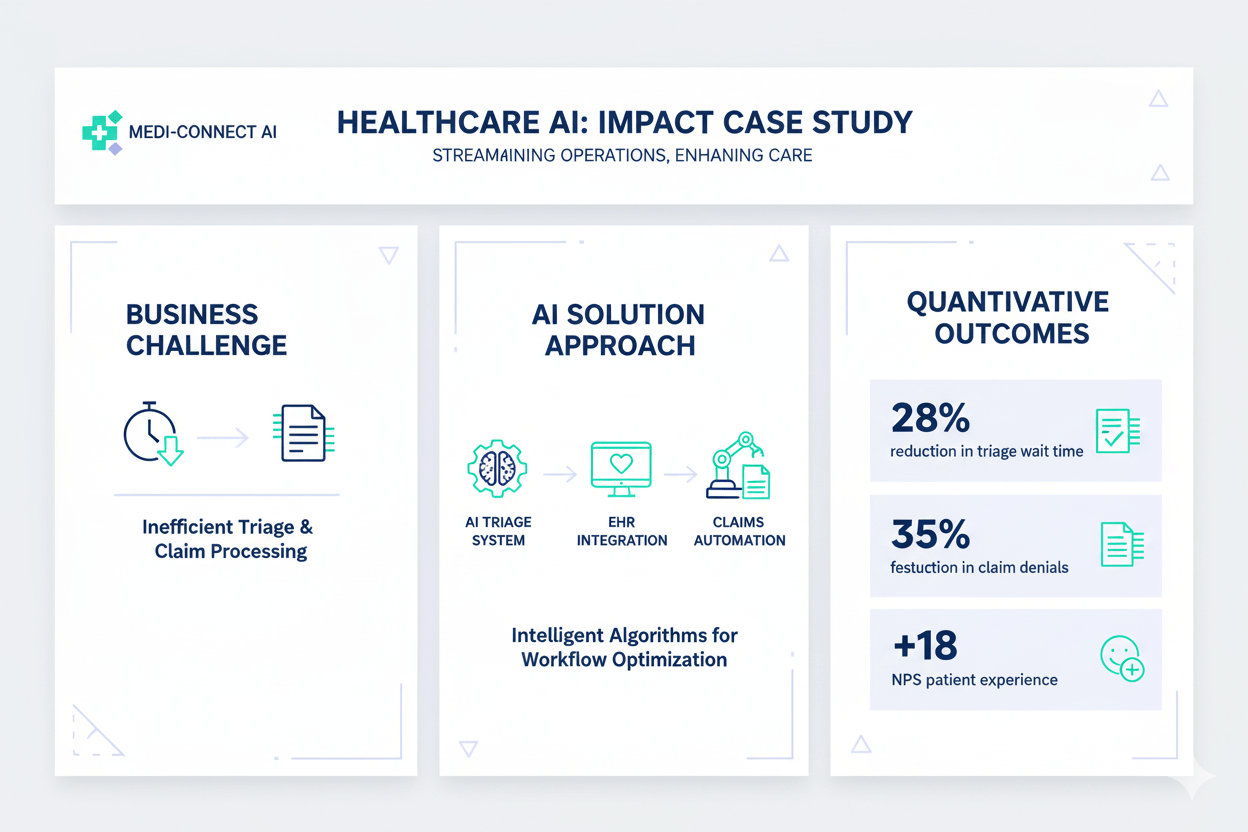

In the current B2B landscape, relying on generic AI tools is insufficient. To gain a genuine market edge, organizations must [Hire OpenAI Developers] who understand the nuances of system design, secure key management, and hallucination guardrails. Viston provides an end-to-end “LLMOps in a Box” framework, bridging the gap between experimental AI and production-ready infrastructure. We help SaaS, Fintech, and Manufacturing leaders across the USA, Canada, France, and the Nordics transition from manual processes to autonomous, AI-driven operations.

We build Retrieval-Augmented Generation systems that ground GPT models in your proprietary data for zero-hallucination accuracy.

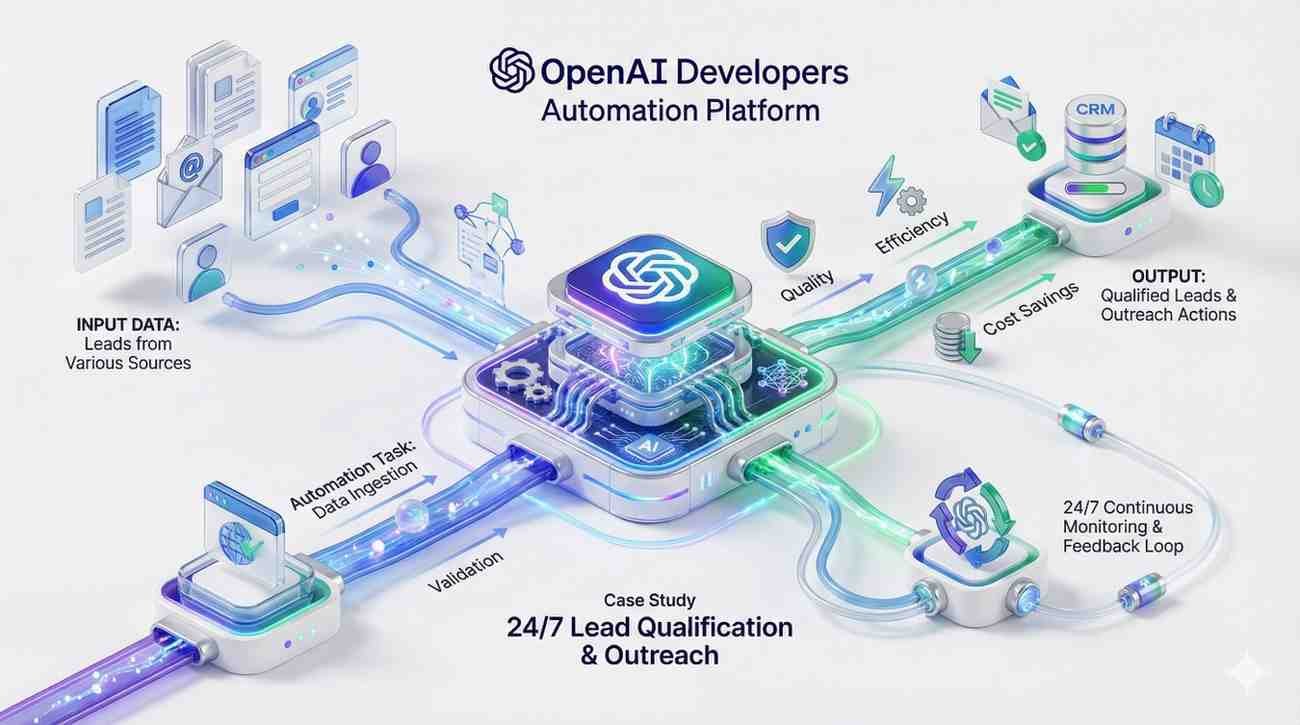

Move beyond chat; our developers build autonomous agents capable of executing complex multi-step tasks via Function Calling.

Deploy lightweight, distilled models for real-time inference on manufacturing and logistics edge devices.

Built-in governance ensuring your AI initiatives meet strict regulatory standards in the EU and North America.

Experience

Availability

Deployments

Experience

Availability

Projects Completed

Experience

Availability

High-Impact Models

GPT-4o

GPT-4 Turbo

GPT-3.5

OpenCV

DALL-E 3

LangChain

Semantic Kernel

LlamaIndex

AutoGPT

OpenAI Swarm

Python

FastAPI

Node.js

GraphQL

REST

Pinecone

Milvus

MongoDB

ChromaDB

AWS Bedrock

Azure OpenAI

Terraform

Docker

PII Redaction

Guardrails AI

OAuth2

Presidio

LangSmith

$22/hour

$2800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.
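The retrieval and prompt-assembly step of this workflow can be sketched in a few lines. This is a minimal illustration, not the production pipeline: the toy two-dimensional vectors stand in for real embeddings, the in-memory list stands in for a vector database such as Pinecone, and the function names `cosine`, `top_k`, and `build_prompt` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: Sequence[float],
          docs: Sequence[Tuple[str, Sequence[float]]],
          k: int = 2) -> List[str]:
    """Return the k document texts most similar to the query vector."""
    ranked = sorted(docs, key=lambda d: cosine(query_vec, d[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(ticket: str, context: List[str]) -> str:
    """Assemble a prompt that asks the model to answer from the context only."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer the ticket using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{joined}\n\nTicket: {ticket}"
    )


# Toy documentation snippets with made-up embeddings (assumption for the demo).
docs = [
    ("Reset passwords from the admin console.", [1.0, 0.0]),
    ("Invoices are issued monthly.", [0.0, 1.0]),
    ("Password rules require 12 characters.", [0.9, 0.1]),
]
context = top_k([1.0, 0.0], docs, k=2)
prompt = build_prompt("How do I reset my password?", context)
```

The resulting `prompt` would then be passed to the LLM, and the draft reply held for human approval as described above.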

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
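As a rough illustration of the transformation step, the sketch below flattens a nested order payload into one flat record per line item. It is written in Python rather than the n8n JavaScript mentioned above, and every field name (`Id`, `LineItems`, `Product`, `Sku`, `Quantity`, `order_ref`) is a hypothetical placeholder, not the actual Salesforce or ERP schema.

```python
from typing import Any, Dict, List


def to_erp_records(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten a nested order payload into one ERP-style record per line item."""
    order_id = payload["Id"]
    records = []
    for item in payload.get("LineItems", []):
        product = item.get("Product", {})
        records.append({
            "order_ref": order_id,
            "sku": product.get("Sku"),
            # Quantities may arrive as strings, so normalize them to integers.
            "quantity": int(item.get("Quantity", 0)),
        })
    return records


# Example webhook payload (invented for illustration).
payload = {
    "Id": "006A",
    "LineItems": [
        {"Product": {"Sku": "W-1"}, "Quantity": "3"},
        {"Product": {"Sku": "W-2"}, "Quantity": 1},
    ],
}
records = to_erp_records(payload)
```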

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
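One plausible form of such a lightweight statistical deviation model is a rolling z-score check, sketched below. The class name `DeviationDetector`, the window size, and the threshold of 3 standard deviations are assumptions for illustration; the MQTT ingestion and the PagerDuty and Jira calls are omitted.

```python
from collections import deque
from statistics import mean, stdev


class DeviationDetector:
    """Flags readings that sit too many standard deviations from a rolling baseline."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_samples: int = 10):
        self.readings = deque(maxlen=window)  # most recent readings only
        self.threshold = threshold
        self.min_samples = min_samples

    def check(self, value: float) -> bool:
        """Return True if `value` breaches the threshold relative to prior readings."""
        breached = False
        if len(self.readings) >= self.min_samples:
            baseline = mean(self.readings)
            spread = stdev(self.readings)
            if spread > 0:
                breached = abs(value - baseline) / spread > self.threshold
        # The new reading joins the window after it has been evaluated.
        self.readings.append(value)
        return breached


detector = DeviationDetector()
# Warm up with a stable signal alternating between 10.0 and 10.2.
warmup = [detector.check(10.0 if i % 2 == 0 else 10.2) for i in range(20)]
normal = detector.check(10.1)   # within the usual range
spike = detector.check(50.0)    # far outside the usual range
```

In the workflow described above, a `True` result is the point at which the alert and the maintenance work order would be triggered.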

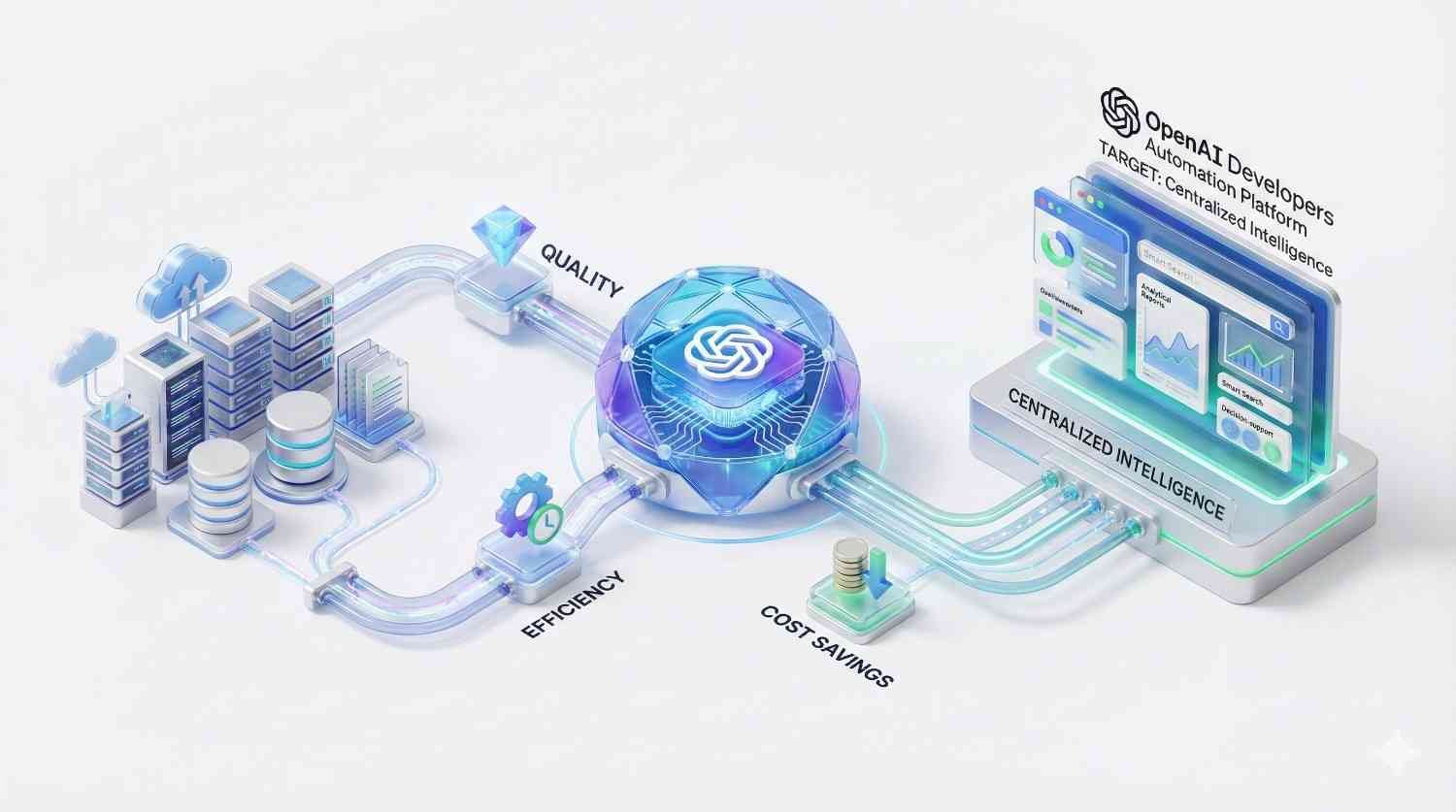

Zero-Hallucination Architectures

We specialize in grounding LLMs using RAG and vector databases. This ensures your AI application provides factual responses based strictly on your verified data sources.

Enterprise-Grade Security

Data privacy is paramount. We implement PII redaction, secure API key management, and VPC deployments to ensure your sensitive data never trains public models.

Advanced Prompt Engineering

Our developers use “Chain-of-Thought” and “Tree-of-Thoughts” prompting techniques to elicit complex reasoning from models, improving output quality by up to 40%.

Cost-Effective Scaling

We optimize token usage through intelligent caching strategies and model distillation, ensuring your API costs remain manageable as your user base grows.

Custom Model Fine-Tuning

For niche industries, generic models fail. We fine-tune OpenAI models on your specific datasets to master your industry jargon, tone, and compliance rules.

Multi-Modal Integration

We don’t just do text. We integrate Whisper (Audio) and DALL-E (Image) to build comprehensive multi-modal applications for rich user experiences.

We utilize Azure OpenAI Service or Enterprise API agreements where data is not used for model training. We also implement middleware that sanitizes PII (Personally Identifiable Information) before it is ever sent to the LLM, ensuring full GDPR and HIPAA compliance.
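To make the sanitizing step concrete, here is a deliberately simplified sketch of a redaction function that runs before any text leaves your environment. It uses plain regular expressions as a stand-in for a dedicated tool such as Presidio; the patterns and the `redact_pii` name are illustrative assumptions, cover only a few formats, and would not be sufficient for compliance on their own.

```python
import re

# Illustrative patterns only; real deployments need far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact_pii(text: str) -> str:
    """Replace each matched PII span with a placeholder such as <EMAIL>."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"<{label}>", text)
    return text


sanitized = redact_pii(
    "Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789."
)
```

Only the `sanitized` string would be forwarded to the LLM; the original text stays inside your own systems.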

Yes. While RAG is often sufficient, we offer full fine-tuning services. We prepare your training datasets, manage the fine-tuning jobs, and evaluate the custom model against benchmarks to ensure it understands your specific medical, legal, or technical terminology better than the base model.

We have deep expertise in verticals including Fintech, Healthcare, Logistics, Legal Tech, and E-Commerce. Our developers have specific experience dealing with the compliance requirements and data structures unique to these sectors across Europe and North America.

Typically, we can have a developer or a team ready to interview within 48 hours. Once selected, onboarding usually takes less than 3 days. Our “LLMOps in a Box” framework allows us to set up the initial environment almost immediately.

Absolutely. Our expertise lies in connecting modern AI with legacy ERPs, CRMs, and databases. We build secure API wrappers and microservices that allow your old infrastructure to “talk” to new AI agents without requiring a full system rewrite.

We offer flexible models including hourly rates for staff augmentation and fixed costs for defined projects. Costs vary based on the seniority of the developer (Architect vs. Junior Engineer) and the complexity of the integration. Contact us for a tailored quote.

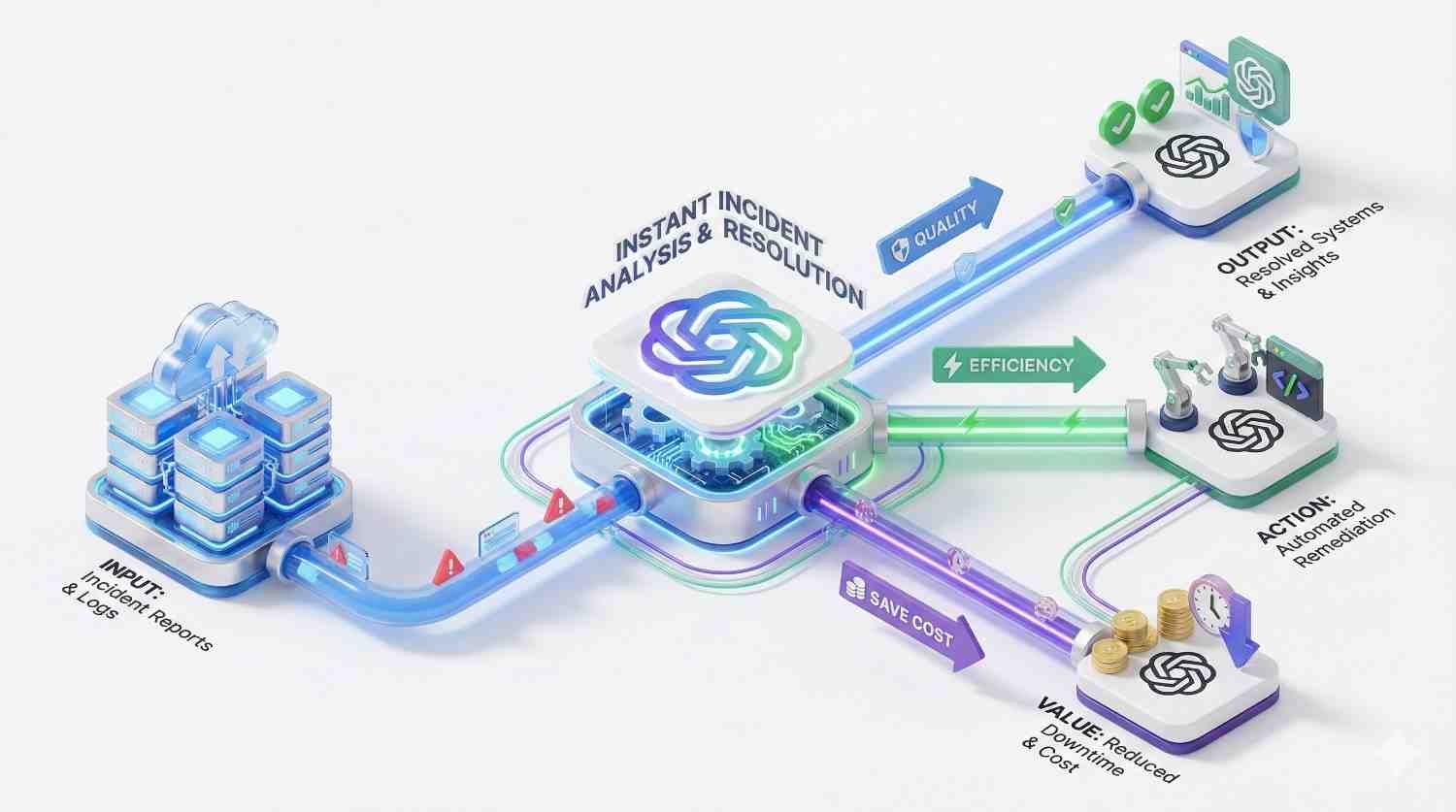

We implement robust back-off strategies, request queuing, and caching layers (semantic caching). This prevents your application from hitting OpenAI rate limits and significantly reduces latency by serving repeated queries from the cache rather than the API.
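The sketch below shows the general shape of these two ideas: exponential back-off with jitter around an API call, and a cache in front of it. It is an illustration under stated assumptions. `RateLimitError` is a hypothetical stand-in for whatever exception your API client raises, and the cache matches on normalized prompt text only, which is much simpler than true semantic caching based on embedding similarity.

```python
import random
import time
from typing import Callable


class RateLimitError(Exception):
    """Hypothetical stand-in for the rate-limit error raised by an API client."""


def call_with_backoff(fn: Callable[[], str], max_retries: int = 5,
                      base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> str:
    """Call `fn`, retrying on RateLimitError with exponential delay plus jitter."""
    for attempt in range(max_retries):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries - 1:
                raise  # retries exhausted, surface the error
            # Delay doubles each attempt (1x, 2x, 4x, ...) plus random jitter.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
    raise RuntimeError("max_retries must be at least 1")


class CachedClient:
    """Serves repeated prompts from memory instead of calling the API again."""

    def __init__(self, complete: Callable[[str], str]):
        self._complete = complete  # function that actually calls the LLM
        self._cache = {}

    def ask(self, prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a key.
        key = " ".join(prompt.lower().split())
        if key not in self._cache:
            self._cache[key] = call_with_backoff(lambda: self._complete(prompt))
        return self._cache[key]
```

A request queue would sit in front of `ask` in a fuller design; it is left out here to keep the example short.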

Yes. We are experts in frameworks like AutoGPT and OpenAI Swarm. We can build systems where multiple AI agents collaborate—one planning, one coding, one reviewing—to solve complex problems autonomously.

Yes. For our European clients, strict adherence to the EU AI Act is mandatory. Our developers are trained to categorize AI risks and to implement the transparency and human-oversight logs required by European law.

We can structure a dedicated team to provide 24/7 monitoring and incident response (NOC). This ensures that critical alerts are acknowledged and triaged immediately, regardless of the hour, protecting your uptime and customer experience around the clock.

Don’t let technical debt slow your AI adoption. Partner with Viston to access top-tier talent backed by 15+ years of expertise and a network of 2,860+ satisfied clients. Whether you are in New York, London, Berlin, or Sydney, our team is ready to elevate your capabilities.