Scale your generative AI capabilities with engineers who master model architecture, fine-tuning, and production deployment.

Accelerate your transition from proof-of-concept to high-performance production with Viston. We provide access to elite Large Language Model (LLM) developers who specialize in building secure, domain-specific AI applications. With 15+ years of engineering expertise and a track record of serving 2,860+ clients across the USA, UK, Germany, and Australia, Viston is the trusted partner for organizations seeking robust LLMOps and intelligent automation. Whether you need to fine-tune Llama 3, orchestrate multi-agent workflows, or optimize inference latency for real-time applications, our developers deliver scalable, compliant, and high-impact solutions.

In the rapidly evolving landscape of Generative AI, generalist developers often struggle with the nuances of stochastic models. To achieve true competitive advantage, organizations require specialized talent capable of bridging the gap between raw model capability and business-critical reliability.

When you hire LLM developers through Viston, you engage experts who understand the full lifecycle of AI integration—from data preparation and vector database management to prompt chaining and cost-optimization strategies. We don’t just implement APIs; we engineer resilient “LLMOps in a Box” architectures that transform how your workforce operates.

Proven success delivering compliant AI solutions in complex regulatory environments across North America and Europe.

Deep expertise across open-source (Mistral, Llama) and closed-source (GPT-4, Claude, Gemini) ecosystems.

Advanced techniques in quantization and token usage optimization to keep operational costs predictable.

Implementation of guardrails, PII redaction, and adversarial defense mechanisms for responsible AI.

Agentic Workflows

Background: A leading Berlin-based fashion retailer wanted to move beyond basic product recommendations to a conversational styling assistant.

Tech Stack: OpenAI GPT-4o API, Redis Vector Store, Azure Functions, React.

Challenge: Customers were overwhelmed by choice. The client needed a “virtual stylist” that remembered user preferences across sessions without hallucinating non-existent inventory.

Solution: Our team built a stateful agentic workflow using Redis for long-term memory. The LLM was instructed to always query the inventory database before suggesting items, so that every recommendation refers to a product that is actually in stock.

Results: Conversion rates for users engaging with the AI stylist increased by 22%. The system handles 50,000+ concurrent conversations during peak sales events.

Testimonial: “The scalability Viston delivered is incredible. We saw an immediate ROI during Black Friday with zero downtime.” — CTO
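As an illustration of the inventory-first rule in the stylist case study above, here is a minimal sketch in Python. It is not the client's implementation: the `Item` type, the in-memory `INVENTORY` dictionary, and the `filter_to_in_stock` helper are hypothetical stand-ins for a real inventory database lookup. The idea is that any product the model proposes is checked against inventory before it reaches the customer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    sku: str
    name: str
    stock: int


# Hypothetical in-memory inventory; a real system would query a database.
INVENTORY = {
    "SKU-1": Item("SKU-1", "Linen blazer", 4),
    "SKU-2": Item("SKU-2", "Wool scarf", 0),
}


def filter_to_in_stock(candidate_skus, inventory):
    """Keep only SKUs that exist in the inventory and have stock > 0."""
    approved = []
    for sku in candidate_skus:
        item = inventory.get(sku)
        if item is not None and item.stock > 0:
            approved.append(item)
    return approved


# Candidates as a model might propose them, including one invented SKU.
suggestions = filter_to_in_stock(["SKU-1", "SKU-2", "SKU-999"], INVENTORY)
print([item.name for item in suggestions])
```

Unknown SKUs and out-of-stock items are dropped, so a hallucinated product never appears in a recommendation.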

Background: A Sydney-based legal tech startup aimed to disrupt the contract review market for SMBs.

Tech Stack: Anthropic Claude 3.5 Sonnet, Docker, PostgreSQL (pgvector), Python.

Challenge: The startup needed to identify high-risk clauses in diverse contract formats (PDF, DOCX) with extreme precision, as errors could lead to liability issues.

Solution: Viston developers engineered a multi-step validation pipeline where one AI agent extracts clauses and a second “critic” agent reviews them against Australian legal standards.

Results: The platform reduced contract review time from 4 hours to 15 minutes, achieving a 98% accuracy rate in risk identification.

Testimonial: “Viston’s approach to ‘AI-as-a-partner’ rather than just a tool gave us the confidence to launch to market three months early.” — Founder & CEO
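The two-stage extract-then-critique pattern from the contract review case study above can be sketched as follows. This is a simplified illustration under stated assumptions: both "agents" are plain Python functions rather than LLM calls, and the `RISK_PATTERNS` checklist is hypothetical, standing in for the legal standards a real critic agent would apply.

```python
import re

# Hypothetical risk patterns standing in for the critic's legal checklist.
RISK_PATTERNS = {
    "unlimited liability": re.compile(r"unlimited liability", re.IGNORECASE),
    "auto-renewal": re.compile(r"automatically renew", re.IGNORECASE),
}


def extract_clauses(contract_text):
    """Stage 1 (extractor stand-in): split the contract into non-empty clauses."""
    return [part.strip() for part in contract_text.split("\n") if part.strip()]


def critique(clause):
    """Stage 2 (critic stand-in): return the risk labels that match a clause."""
    return [label for label, pattern in RISK_PATTERNS.items() if pattern.search(clause)]


def review(contract_text):
    """Run both stages and return only the clauses the critic flagged."""
    flagged = []
    for clause in extract_clauses(contract_text):
        risks = critique(clause)
        if risks:
            flagged.append((clause, risks))
    return flagged


sample = "The Supplier accepts unlimited liability.\n\nPayment is due in 30 days."
print(review(sample))
```

Keeping extraction and critique as separate steps means each stage can be tested, replaced, or audited on its own.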

Python

PyTorch

TensorFlow

BabyAGI

Transformers

LangChain

LlamaIndex

DSPy

Haystack

Semantic Kernel

Pinecone

Milvus

Weaviate

ChromaDB

Qdrant

vLLM

Ray Serve

Docker

AWS Bedrock

Kubernetes

LoRA/QLoRA

PEFT

RLHF

Quantization

Guardrails AI

LangSmith

RAGAS

ServiceNow

TruLens

$22/hour

$2800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

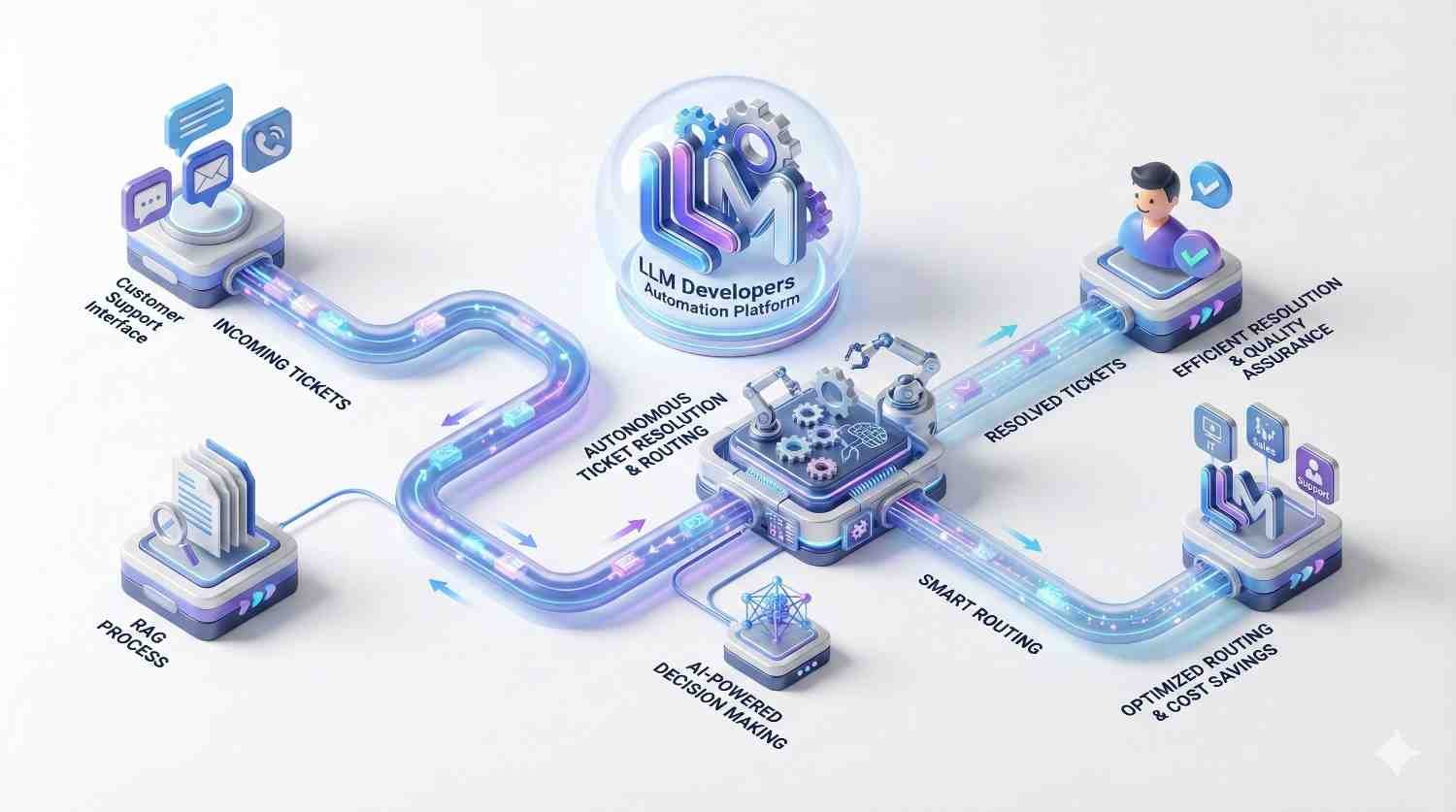

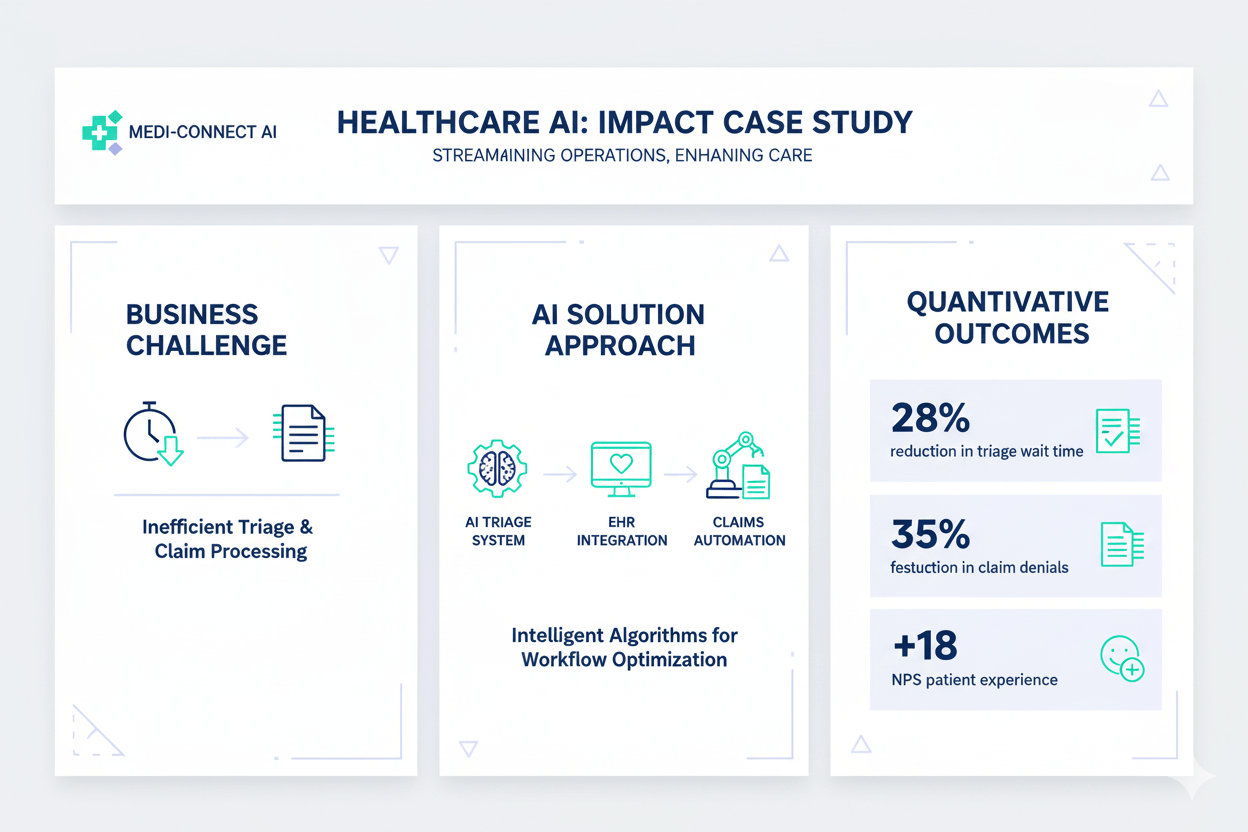

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.

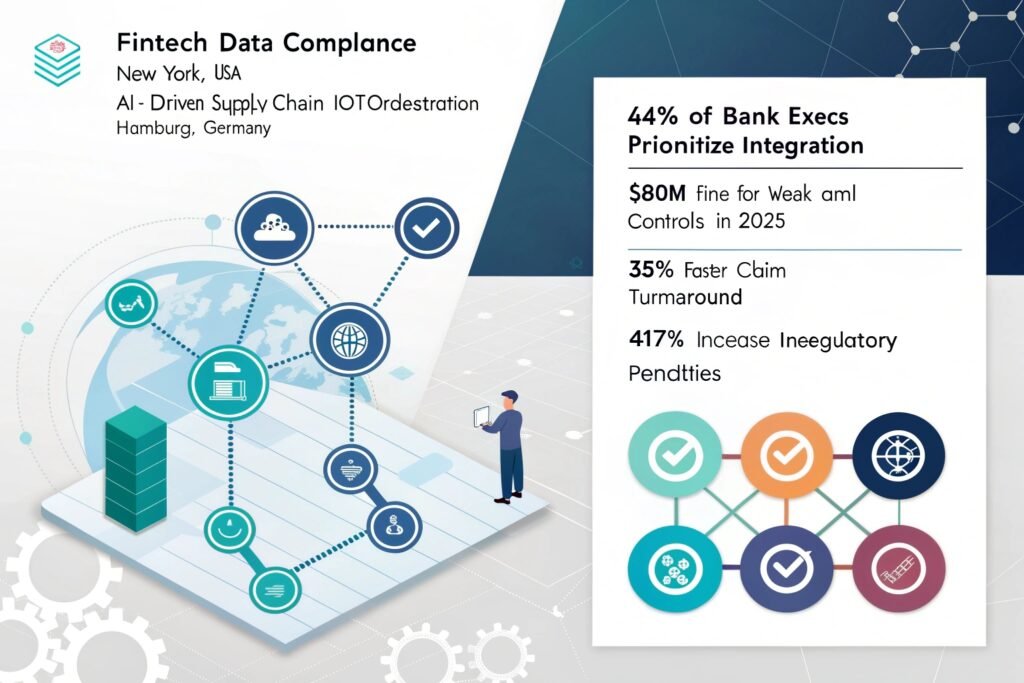

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).

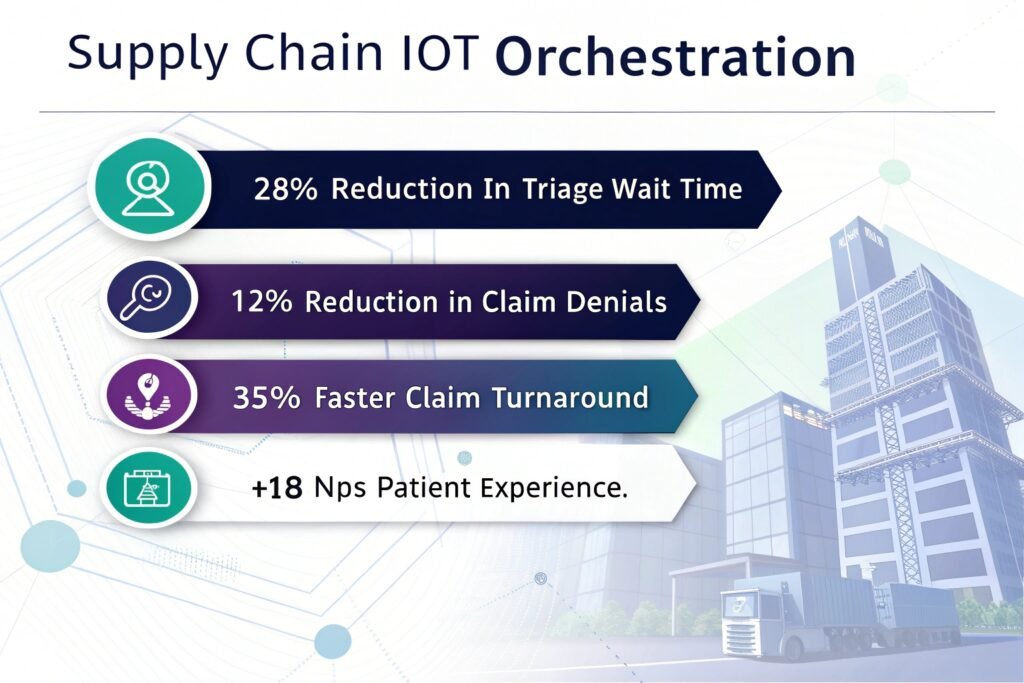

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
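A lightweight statistical deviation check of the kind described above could look like the following sketch. The source does not specify the model, so this assumes a rolling z-score over a fixed window; the `DeviationDetector` class, the window size, and the threshold are all hypothetical choices, and the MQTT, PagerDuty, and Jira steps are left out.

```python
from collections import deque
from statistics import mean, stdev


class DeviationDetector:
    """Flag readings that sit far from the mean of a recent window."""

    def __init__(self, window=20, threshold=3.0):
        self.readings = deque(maxlen=window)  # most recent readings only
        self.threshold = threshold            # allowed distance in standard deviations

    def observe(self, value):
        """Return True if value deviates from the window by more than the threshold."""
        is_anomaly = False
        if len(self.readings) >= 2:  # stdev needs at least two data points
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.readings.append(value)
        return is_anomaly


detector = DeviationDetector(window=5, threshold=3.0)
for reading in [10.0, 10.2, 9.8, 10.1, 9.9, 25.0]:
    if detector.observe(reading):
        print(f"Threshold breached at reading {reading}")
```

In this sketch every reading, including an anomalous one, is added to the window; a production version might exclude flagged readings so that a single spike does not widen the baseline.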

Deep Domain Fine-Tuning Expertise

General models often fail in niche industries. Our developers excel at curating datasets and fine-tuning models (like Llama 3 or Mistral) to understand specific medical, legal, or engineering terminologies, ensuring high relevance.

Production-Grade Inference Optimization

Building a demo is easy; scaling is hard. We specialize in optimizing model latency and throughput using serving engines such as vLLM and techniques such as quantization, ensuring your application remains responsive and cost-effective at scale.

Advanced Multi-Agent Orchestration

Move beyond simple chatbots. Our experts build complex agentic systems where multiple AI models collaborate to plan, execute, and verify tasks, automating entire business workflows rather than just generating text.

Strict Data Privacy & Governance

We understand that enterprise data is sacred. Our developers architect solutions that run within your VPC or on-premise, utilizing local LLMs to ensure sensitive data never leaves your controlled environment.

Seamless Legacy System Integration

AI shouldn’t stand alone. We connect LLMs to your existing ERPs, CRMs, and databases via robust APIs, allowing the AI to take action and read/write data directly within your current infrastructure.

We sign strict NDAs and prioritize “Local-First” development. We prefer using self-hosted open-source models or private cloud instances (AWS Bedrock, Azure OpenAI) where data is not used for model training. We also implement PII redaction layers before data ever touches an LLM.
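To illustrate what a PII redaction layer can look like, here is a minimal sketch that masks email addresses and phone numbers before a prompt is sent to a model. It is an assumption-laden example, not a description of Viston's tooling: the `PII_PATTERNS` list and the `redact` function are hypothetical, and the two regular expressions are deliberately simple and would miss many real-world formats.

```python
import re

# Hypothetical patterns; a production layer would cover far more PII types.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
]


def redact(text):
    """Replace each PII match with a bracketed placeholder label."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Contact jane.doe@example.com or +49 30 1234567."
print(redact(prompt))
```

Running redaction before the model call means the placeholder, not the original value, is what appears in prompts and logs.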

Absolutely. This is a core part of our consulting. We analyze your budget, latency requirements, and data sensitivity to recommend the best path—often a hybrid approach using GPT-4 for complex reasoning and a fine-tuned Llama model for high-volume, routine tasks.

Yes, we have extensive experience with multi-lingual models. We have deployed solutions covering French, German, Spanish, and Nordic languages, utilizing specific tokenizers and datasets to ensure native-level fluency and cultural nuance.

We maintain a bench of pre-vetted experts. Typically, we can present shortlisted candidates within 48 hours, and onboarding can be completed within 3 to 5 business days.

Costs vary based on seniority and location. However, hiring through Viston is generally 40-60% more cost-effective than hiring a full-time US-based senior AI engineer, saving you recruitment fees, benefits, and overhead.

Yes, we specialize in rapid prototyping. We can scope a 4-8 week MVP sprint to build a functional proof-of-concept that demonstrates core value to stakeholders or investors.

Yes. We implement RAG (Retrieval-Augmented Generation) pipelines, citation requirements, and “Guardrails” frameworks to ground the LLM in your facts, significantly reducing the risk of fabricated information.

Not necessarily. Our data engineers can help you construct, clean, and format your existing raw data (PDFs, emails, logs) into a training-ready format or a vector database for RAG implementation.

Yes, especially our European and UK teams. We design systems with explainability and data sovereignty in mind to ensure your AI deployment meets the stringent requirements of the EU AI Act and GDPR.

Viston is built for scalability. You can start with one developer and scale to a full “pod” (including a PM, QA, and Data Engineer) as your product gains traction, without bureaucratic delays.

Don’t let technical debt slow down your AI ambitions. Partner with Viston to access world-class engineering talent that has empowered 2,860+ clients globally. From predictive intelligence to creative automation, we build the systems that define the future.