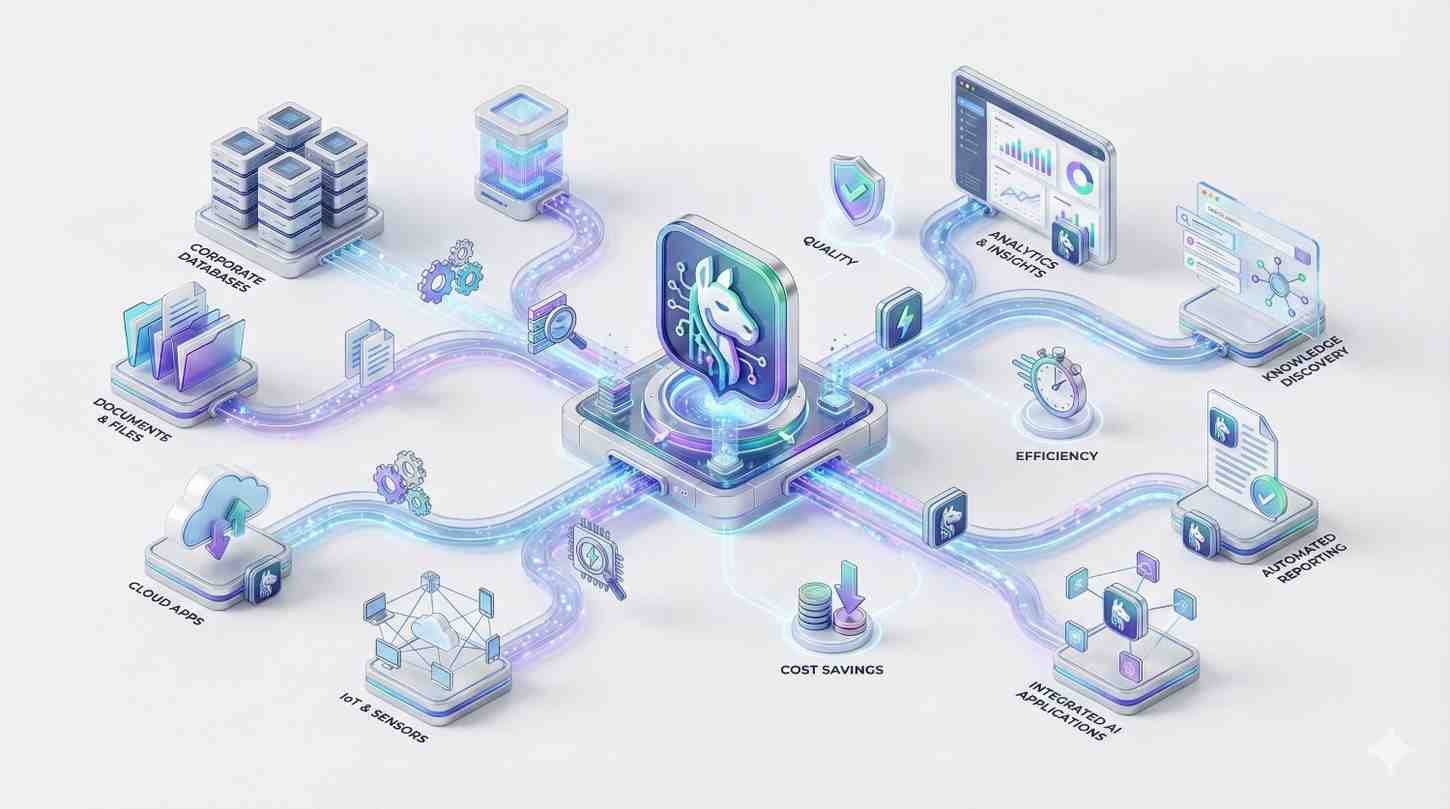

Transform your unstructured data into intelligent, context-aware AI applications with Viston’s elite engineering talent.

For over 15 years, Viston has been the trusted technology partner for Fortune 500 companies and innovative startups across the USA, UK, Germany, Canada, and Australia. We don’t just build chatbots; we engineer sophisticated Retrieval-Augmented Generation (RAG) systems that turn your proprietary documents into actionable intelligence. With a track record of serving 2,860+ clients, our dedicated LlamaIndex developers specialize in bridging the gap between your enterprise data and powerful Large Language Models (LLMs), ensuring accuracy, security, and speed without expensive model retraining.

In the modern B2B landscape, generic AI models are not enough. To gain a competitive edge, you need applications that understand your specific business logic, customer history, and technical documentation. When you hire LlamaIndex developers from Viston, you deploy engineers who can orchestrate complex data frameworks that feed LLMs the exact information they need, exactly when they need it.

We move beyond basic prompt engineering to build robust LLMOps architectures. Our developers enable your AI to “read” your entire knowledge base—whether it resides in PDFs, SQL databases, or APIs—and generate responses that are factually grounded.

Move beyond keyword matching to understand user intent.

Automatically convert unstructured documents into clean, usable JSON formats.

Optimize token usage by retrieving only relevant context, significantly lowering API costs.

Keep your data within your governance perimeter while leveraging external LLM intelligence.
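The token-saving point comes from sending the model only the most relevant context instead of whole documents. Below is a minimal, library-free sketch of that selection step. It assumes word-overlap scoring as a stand-in for embedding similarity and one token per word as a stand-in for a real tokenizer; the `Chunk` type and all function names are hypothetical, not LlamaIndex APIs.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Chunk:
    text: str
    source: str


def words(text: str) -> List[str]:
    """Lowercase alphanumeric tokens; punctuation is dropped."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, chunk: Chunk) -> float:
    """Share of query words found in the chunk (stand-in for embedding similarity)."""
    q = set(words(query))
    return len(q & set(words(chunk.text))) / len(q) if q else 0.0


def select_context(query: str, chunks: List[Chunk], token_budget: int) -> List[Chunk]:
    """Keep the best-scoring relevant chunks whose combined size fits the budget."""
    ranked = sorted(chunks, key=lambda ch: score(query, ch), reverse=True)
    selected, used = [], 0
    for ch in ranked:
        cost = len(words(ch.text))  # crude token estimate: one token per word
        if score(query, ch) == 0 or used + cost > token_budget:
            continue  # irrelevant, or too large for the remaining budget
        selected.append(ch)
        used += cost
    return selected
```

Only the selected chunks are placed in the prompt, so the number of tokens billed per request is bounded by `token_budget` rather than by the size of the knowledge base.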


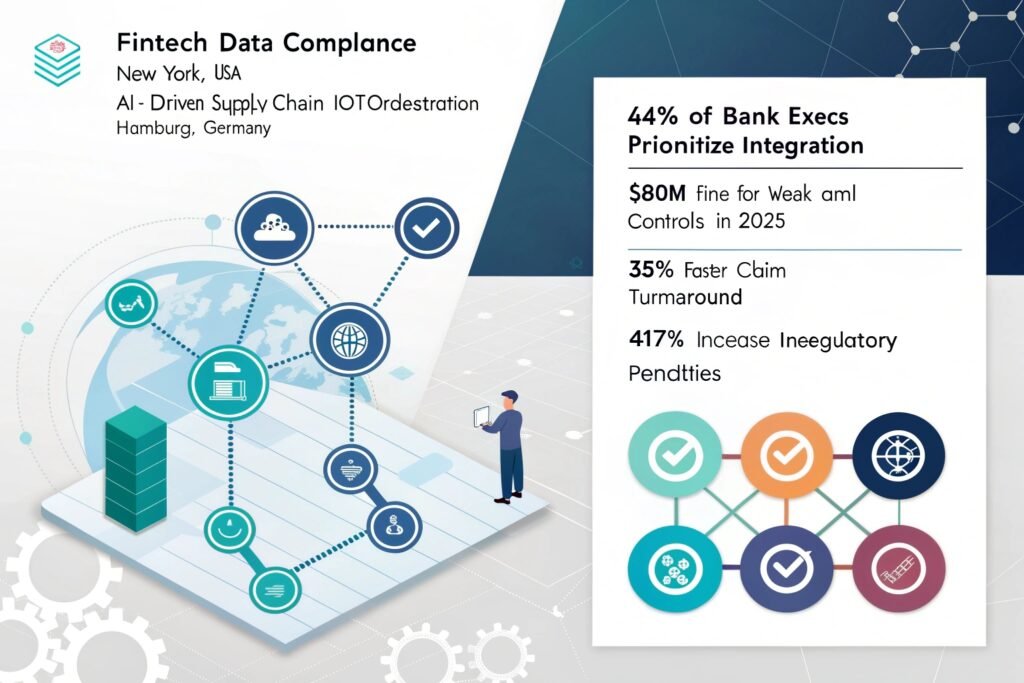

Testimonial: “Viston’s developers understood the strict compliance needs of Wall Street. The RAG agent they built is now our primary risk assessment tool.” — VP of Risk, Fintech Enterprise.

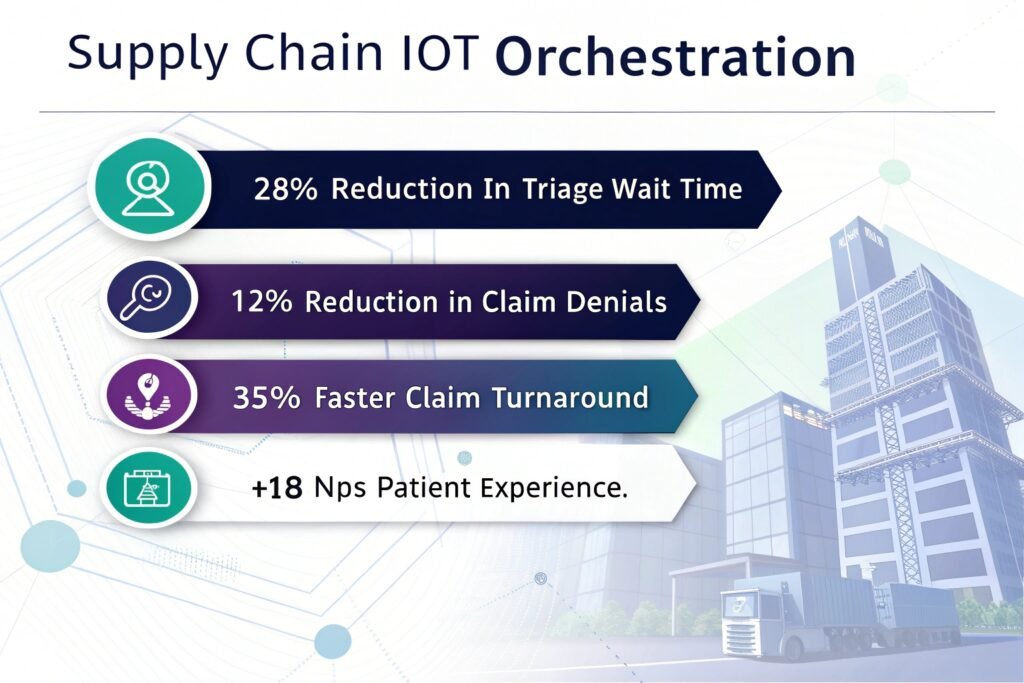

Background: A pharmaceutical giant needed to synthesize insights from unstructured doctor notes and clinical trial results.

Tech Stack: LlamaIndex, BioBERT, Qdrant, LangChain.

Challenge: Valuable patient data was locked in messy text formats, making it invisible to traditional analytics tools. Privacy compliance (HIPAA) was critical.

Solution: Viston developers engineered a local, private RAG system. Using LlamaIndex’s structured data extractors, we converted clinical notes into structured records that feed analytics dashboards, without data ever leaving the secure VPC.

Results: Accelerated patient cohort identification by 4x. Uncovered adverse effect correlations months earlier than manual review.

Testimonial: “The ability to query our unstructured data as if it were a database has revolutionized our R&D speed. Viston is a crucial partner.” — VP of Digital Health.
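To illustrate what "structured extraction" means in practice, here is a minimal sketch that turns a free-text note into a typed record and then into JSON. It is an assumption-laden stand-in: in the project above an LLM-backed extractor did this step, whereas here two regular expressions play that role, and the `NoteRecord` schema, field names, and adverse-event word list are hypothetical.

```python
import json
import re
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class NoteRecord:
    """Hypothetical output schema for one clinical note."""
    patient_id: str
    drug: Optional[str]
    dose_mg: Optional[float]
    adverse_event: bool


# Rule-based stand-ins for what an LLM extractor would infer from free text.
DOSE = re.compile(r"(?P<drug>[A-Za-z]+)\s+(?P<dose>\d+(?:\.\d+)?)\s*mg\b", re.IGNORECASE)
ADVERSE = re.compile(r"\b(nausea|rash|dizziness|headache)\b", re.IGNORECASE)


def extract(patient_id: str, note: str) -> NoteRecord:
    """Turn one free-text note into a typed record."""
    match = DOSE.search(note)
    return NoteRecord(
        patient_id=patient_id,
        drug=match.group("drug").lower() if match else None,
        dose_mg=float(match.group("dose")) if match else None,
        adverse_event=ADVERSE.search(note) is not None,
    )


def to_json(record: NoteRecord) -> str:
    """Serialize the record so dashboards and SQL tools can consume it."""
    return json.dumps(asdict(record))
```

The value of the pattern is the fixed schema: once every note becomes a `NoteRecord`, cohort queries become ordinary filters over typed fields rather than text searches.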

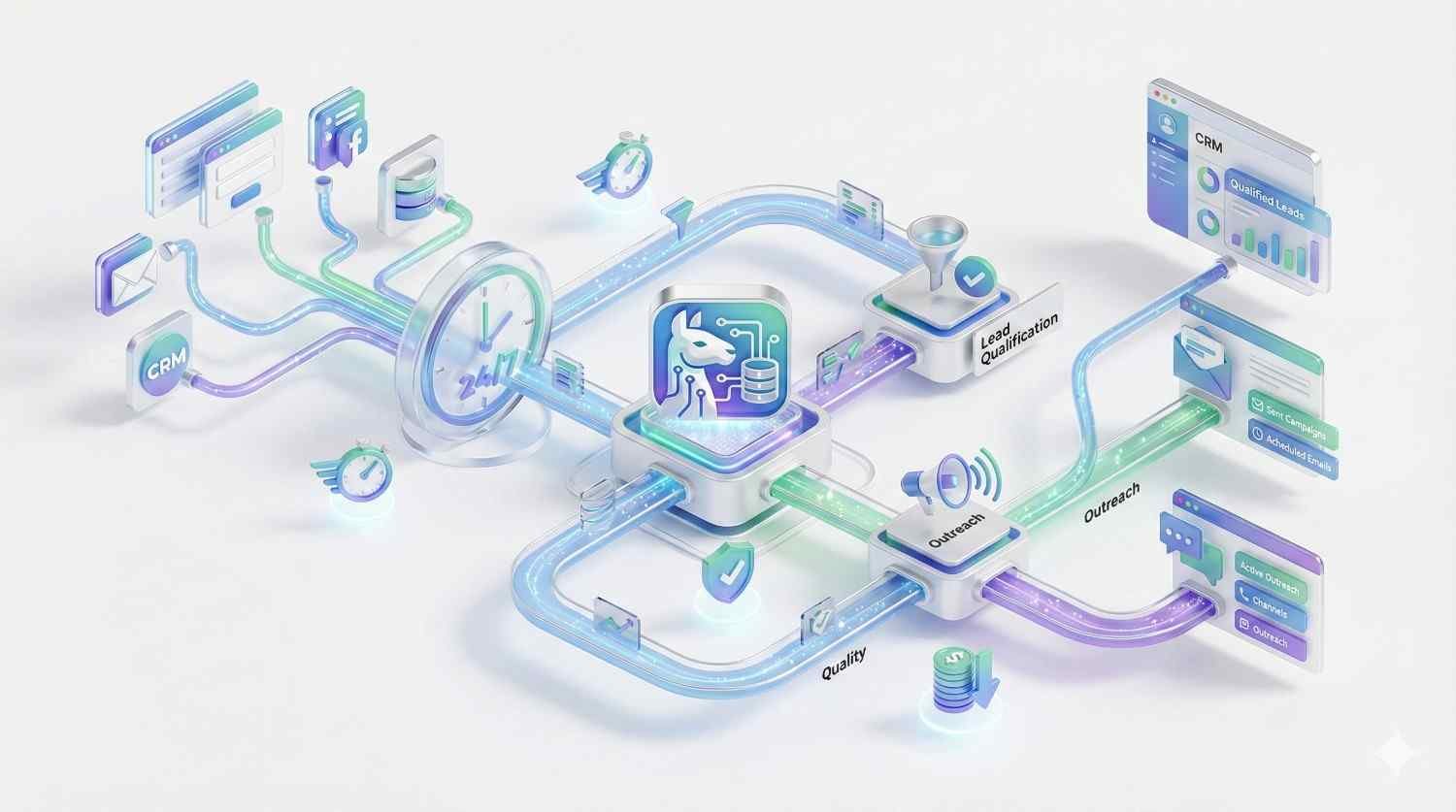

Background: A logistics firm covering the APAC region struggled with fragmented data across emails, invoices, and shipping manifests.

Tech Stack: LlamaIndex, MongoDB, AWS Bedrock, Claude 3.

Challenge: Operations managers spent 40% of their day searching for shipment updates across disconnected systems.

Solution: We integrated a “Chat with your Supply Chain” agent. Using LlamaIndex routers, the system intelligently routed queries to either the SQL database (for tracking) or email archives (for context).

Results: Reduced query response time from 20 minutes to 3 seconds. Saved $2M annually in operational overhead.

Testimonial: “We hired Llama Index developers from Viston to fix our data visibility. They delivered a system that feels like magic but runs on solid engineering.” — CTO, APAC Logistics Group.
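The routing idea in this case study can be sketched in a few lines: classify the question, then dispatch it to the backend that can answer it. LlamaIndex's routers usually delegate the choice to an LLM; in this minimal sketch a keyword rule stands in for that selector, and both backend functions are hypothetical stubs rather than real database or archive clients.

```python
import re
from typing import Callable, Dict


def query_tracking_db(question: str) -> str:
    """Hypothetical stub for the structured shipment-tracking store."""
    return f"[tracking-db] {question}"


def query_email_archive(question: str) -> str:
    """Hypothetical stub for a vector index over email archives."""
    return f"[email-archive] {question}"


ROUTES: Dict[str, Callable[[str], str]] = {
    "tracking": query_tracking_db,
    "context": query_email_archive,
}

# Words that suggest a factual status lookup rather than a "why" question.
TRACKING_HINTS = re.compile(r"\b(where|status|eta|tracking|location|delivered)\b", re.IGNORECASE)


def route(question: str) -> str:
    """Pick a route name; an LLM-based selector would replace this rule."""
    return "tracking" if TRACKING_HINTS.search(question) else "context"


def answer(question: str) -> str:
    return ROUTES[route(question)](question)
```

Keeping the route table separate from the selection rule means a new data source is one more dictionary entry, and the rule can later be swapped for an LLM selector without touching the backends.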

Python

TypeScript

JavaScript

Go

AutoGPT

LlamaIndex

LangChain

Llama 3

Semantic Kernel

Pinecone

Milvus

Weaviate

ChromaDB

FAISS

Python

FastAPI

Flask

Node.js

GraphQL

AWS

Azure AI

Google Vertex AI

Docker

LangSmith

Arize Phoenix

Weights & Biases

ServiceNow

GitHub Actions

$22/hour

$2,800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
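The core of that transformation step is flattening a nested line-item array into flat ERP rows and totaling committed quantities. The workflow described uses JavaScript; the sketch below is in Python for consistency with the other examples, and the payload shape and ERP column names are hypothetical, not actual Salesforce, SAP, or NetSuite schemas.

```python
from typing import Any, Dict, List


def to_erp_rows(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten one order (with a nested line-item array) into flat ERP rows."""
    order = payload["order"]
    return [
        {
            "ORDER_NO": order["id"],
            "CUSTOMER": order["account"]["name"],
            "SKU": item["product"]["sku"],
            "QTY": int(item["quantity"]),  # some sources send numbers as strings
        }
        for item in order.get("lineItems", [])
    ]


def reserved_by_sku(rows: List[Dict[str, Any]]) -> Dict[str, int]:
    """Total committed quantity per SKU, used to reconcile inventory."""
    totals: Dict[str, int] = {}
    for row in rows:
        totals[row["SKU"]] = totals.get(row["SKU"], 0) + row["QTY"]
    return totals
```

Aggregating per SKU before writing to the ERP means an order listing the same product twice reserves the combined quantity once.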

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).
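Normalization is the step that makes logs from many tools comparable: each source gets a small mapping into one shared schema with UTC timestamps. This is a minimal sketch; `tool_a` and `tool_b`, their field names, and their timestamp formats are hypothetical examples of two differently shaped sources.

```python
from datetime import datetime, timezone
from typing import Any, Dict


def normalize(tool: str, raw: Dict[str, Any]) -> Dict[str, str]:
    """Map one raw audit event into a shared schema with a UTC timestamp."""
    if tool == "tool_a":
        # Hypothetical source: ISO-8601 strings that include a UTC offset.
        ts = datetime.fromisoformat(raw["timestamp"])
        actor, action = raw["user"], raw["event"]
    elif tool == "tool_b":
        # Hypothetical source: Unix epoch seconds and a nested actor object.
        ts = datetime.fromtimestamp(raw["ts"], tz=timezone.utc)
        actor, action = raw["actor"]["email"], raw["action"]
    else:
        raise ValueError(f"no mapping defined for {tool!r}")
    return {
        "tool": tool,
        "time_utc": ts.astimezone(timezone.utc).isoformat(),
        "actor": actor.lower(),
        "action": action,
    }
```

Adding a sixteenth tool then means adding one more branch (or mapping function), while the report generation downstream stays unchanged.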

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
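A "lightweight statistical deviation model" can be as simple as a z-score against a rolling window of recent readings. This is a minimal sketch under that assumption; the window size, the threshold of 3 standard deviations, and the class name are illustrative choices, and the alerting calls (PagerDuty, Jira) are left to the surrounding workflow.

```python
from collections import deque
from statistics import mean, pstdev


class DeviationMonitor:
    """Flags readings more than `threshold` standard deviations from a rolling baseline."""

    def __init__(self, window: int = 50, threshold: float = 3.0) -> None:
        self.readings: deque = deque(maxlen=window)
        self.threshold = threshold

    def check(self, value: float) -> bool:
        """Return True on a breach; only normal readings update the baseline."""
        values = list(self.readings)
        if len(values) >= 2:
            mu, sigma = mean(values), pstdev(values)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                return True  # breach: the caller raises the alert
        self.readings.append(value)
        return False
```

Excluding breaching readings from the window keeps a single spike from inflating the baseline and masking the next one. One limitation of this sketch: a perfectly constant baseline (`sigma == 0`) never triggers, so a real deployment might add an absolute threshold as well.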

A standard Python developer builds application logic. A LlamaIndex developer specializes in semantic data architecture: vector embeddings, chunking strategies, retrieval algorithms, and bridging deterministic data with probabilistic LLMs. This skill set is what keeps hallucinations down and makes the AI application genuinely useful to the business.

Yes, this is a primary use case. Our engineers use advanced chunking strategies and vector databases (like Pinecone or Weaviate) to index millions of documents. This allows the AI to answer questions based only on your data, providing citations and greatly reducing hallucinations.
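Chunking is where citation support starts: if every chunk remembers its document and position, any answer built from it can point back to the source. The sketch below shows fixed-size word windows with overlap, one common chunking strategy among several; the window sizes, the `Chunk` fields, and the citation format are illustrative assumptions, not LlamaIndex's node parser API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Chunk:
    text: str
    doc_id: str      # which document the chunk came from
    start_word: int  # offset within that document, kept for citations


def chunk_words(doc_id: str, text: str, size: int = 200, overlap: int = 40) -> List[Chunk]:
    """Split text into windows of `size` words; neighboring windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop once a window already reaches the end, so no chunk is just repeated overlap.
    return [
        Chunk(" ".join(words[start:start + size]), doc_id, start)
        for start in range(0, max(len(words) - overlap, 1), step)
    ]


def cite(chunk: Chunk) -> str:
    """Human-readable pointer back to the source passage."""
    return f"{chunk.doc_id} (from word {chunk.start_word})"
```

The overlap means a sentence cut at one window boundary still appears intact in the neighboring chunk, at the cost of indexing some words twice.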

We take a “privacy-first” approach. We can implement local LLMs (using Llama or Mistral) that never send data to the cloud. Alternatively, for cloud models, we build redaction chains that strip PII (Personally Identifiable Information) before the prompt is sent to providers like OpenAI, supporting your GDPR/CCPA compliance.
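A redaction chain replaces sensitive values with placeholders before the prompt leaves your network and can restore them in the model's reply afterwards. This minimal sketch uses regular expressions for emails, US Social Security numbers, and phone numbers; the patterns and placeholder labels are illustrative assumptions, and regexes alone will miss names, addresses, and free-form identifiers, which usually call for NER-based detection.

```python
import re
from typing import Dict, Tuple

# Order matters: SSNs are replaced before the looser phone pattern can claim them.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace PII with numbered placeholders; return the text and a restore map."""
    mapping: Dict[str, str] = {}
    counter = 0
    for label, pattern in PATTERNS.items():
        def _substitute(match: re.Match, label: str = label) -> str:
            nonlocal counter
            counter += 1
            token = f"[{label}_{counter}]"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_substitute, text)
    return text, mapping


def restore(text: str, mapping: Dict[str, str]) -> str:
    """Put the original values back into a response that kept the placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

Only the redacted string is sent to the provider; the `mapping` stays inside your perimeter.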

Absolutely. LlamaIndex excels at connecting to various data sources. Our developers have deep experience creating custom data loaders for legacy SQL databases, Oracle systems, on-premise SharePoint, and even mainframe data exports, unifying them into a single vector index for easy querying.
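A custom loader's job is to turn rows from a legacy system into documents that carry both text and metadata, which is the same text-plus-metadata shape LlamaIndex's `Document` uses. This sketch uses Python's built-in `sqlite3` as a stand-in for an Oracle or other legacy database; the `legacy_tickets` table, its columns, and the `Doc` type are hypothetical.

```python
import sqlite3
from dataclasses import dataclass, field
from typing import Dict, Iterator


@dataclass
class Doc:
    """Text to embed plus metadata for filtering and citations."""
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def load_tickets(conn: sqlite3.Connection) -> Iterator[Doc]:
    """Yield one document per row of a hypothetical legacy ticket table."""
    rows = conn.execute("SELECT id, title, body, dept FROM legacy_tickets ORDER BY id")
    for ticket_id, title, body, dept in rows:
        yield Doc(
            text=f"{title}\n\n{body}",
            metadata={"source": "legacy_tickets", "id": str(ticket_id), "dept": dept},
        )
```

Because the loader is a generator, large tables stream row by row into the indexing pipeline instead of being loaded into memory at once.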

We build modular systems. By abstracting the “Query Engine” layer, our developers ensure that if a better or cheaper model is released (e.g., moving from GPT-4 to a new open-source model), we can switch the inference engine without rewriting your entire data pipeline or re-indexing your documents.
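The modularity described here amounts to dependency injection: the query engine owns retrieval and prompting, and the model is just an object with a `complete` method. This is a minimal sketch; `LLM`, `EchoLLM`, and `QueryEngine` are hypothetical names, and `EchoLLM` is a placeholder rather than a real model client.

```python
from typing import Callable, List, Protocol


class LLM(Protocol):
    """Any model client exposing this one method can be plugged in."""

    def complete(self, prompt: str) -> str: ...


class EchoLLM:
    """Hypothetical placeholder model that echoes the last prompt line."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"{self.name}: {prompt.splitlines()[-1]}"


class QueryEngine:
    """Retrieval and prompting live here; the model is an injected dependency."""

    def __init__(self, retriever: Callable[[str], List[str]], llm: LLM) -> None:
        self.retriever = retriever
        self.llm = llm

    def query(self, question: str) -> str:
        context = "\n".join(self.retriever(question))
        prompt = f"Answer using only this context:\n{context}\nQuestion: {question}"
        return self.llm.complete(prompt)
```

Swapping models is then a one-line change (`engine.llm = ...`). Note that this applies to the generation model; changing the embedding model is different, because stored vectors from one embedding model are not comparable with query vectors from another, so that change does require re-indexing.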

Yes, these are our primary sectors. We implement strict citation layers (the AI must link to the source document) and use metadata filtering to ensure compliance. We can also deploy entirely air-gapped solutions where no data leaves your physical infrastructure.

We implement “filtering at retrieval.” Before the LLM generates an answer, our system checks the user’s permissions. If a user isn’t authorized to see a specific document, that document is excluded from the context window entirely, ensuring GDPR and internal security protocols are respected.
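"Filtering at retrieval" can be shown with access-control groups stored as chunk metadata: chunks the user cannot see are removed before ranking, so their text can never reach the prompt. This is a minimal sketch; `IndexedChunk`, the group names, and the caller-supplied `score` function are hypothetical, and in a vector database the same check would typically be expressed as a metadata filter on the query.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List, Set


@dataclass
class IndexedChunk:
    text: str
    allowed_groups: FrozenSet[str]  # access list captured as metadata at indexing time


def retrieve_for_user(
    chunks: List[IndexedChunk],
    user_groups: Set[str],
    score: Callable[[IndexedChunk], float],
    top_k: int = 3,
) -> List[IndexedChunk]:
    """Drop chunks the user may not see, then rank what remains."""
    visible = [c for c in chunks if c.allowed_groups & user_groups]
    return sorted(visible, key=score, reverse=True)[:top_k]
```

Filtering before taking the top `k` matters: if restricted chunks were ranked first and removed afterwards, they would silently use up slots and the user would get fewer relevant results.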

Yes. We offer flexible engagement models. You can hire a developer for a 4-week PoC to validate a use case before committing to a full-scale development contract. This is a popular option for testing internal knowledge management tools.

We support clients globally. We have talent clusters aligned with time zones in North America (USA, Canada), EMEA (UK, Germany, France, Nordics), and APAC (Australia). We ensure synchronous communication hours for your stand-ups and planning meetings.

We can structure a dedicated team to provide 24/7 monitoring and incident response (NOC). This ensures that critical alerts are acknowledged and triaged immediately, regardless of the hour, protecting your uptime and customer experience around the clock.

Don’t let technical complexity stall your AI roadmap. Partner with Viston to access the top 1% of engineering talent. With 15+ years of expertise, 2,860+ clients, and a presence across the USA, Europe, and Australia, we deliver results that matter.