Secure, Scalable, and Sovereign AI Implementation for Global Business

At Viston, we empower enterprises to move beyond generic API wrappers and build owned, domain-specific AI assets. With 15+ years of expertise and a portfolio of 2,860+ satisfied clients across the USA, UK, Germany, France, and Australia, we are the premier partner for organizations looking to hire Llama developers.

Our specialized engineering teams leverage Meta’s Llama ecosystem to deliver high-performance, cost-efficient, and privacy-compliant generative AI. Whether you need fine-tuned models for financial forecasting in London or edge-deployed inference for manufacturing in Berlin, Viston delivers specific, measurable AI outcomes.

Unlocking Data Sovereignty and Operational Efficiency

In the 2026 landscape, relying solely on closed-source “black box” models carries real risks: unpredictable costs and limited control over data privacy. When you hire Llama developers from Viston, you move from renting intelligence to owning it. Our engineers adapt Meta’s open-weights models to your organization, so that your AI understands your proprietary terminology, workflows, and compliance mandates without exposing sensitive IP to third-party providers.

We help organizations across the USA, Canada, and the Nordics navigate the complexities of LLMOps. On domain-specific tasks, a fine-tuned Llama model can often match or outperform larger generalist models while reducing inference latency and operating costs.

Full control over model deployment (On-Prem, VPC, or Private Cloud) to meet strict data residency laws in the EU and Australia.

Utilizing Parameter-Efficient Fine-Tuning (PEFT) and LoRA to minimize compute costs while maximizing accuracy.

Optimized inference engines for real-time applications in Fintech and Logistics.

Eliminate reliance on fluctuating API pricing and rate limits from hyperscalers.
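To make the PEFT and LoRA point above concrete, here is a minimal arithmetic sketch of why low-rank adapters cut training cost. LoRA freezes a weight matrix of shape d_out x d_in and trains two small factors of rank r instead, so the trainable parameter count per adapted matrix is r * (d_out + d_in). The matrix size and rank below are illustrative assumptions, not figures from a Viston project.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def full_params(d_out: int, d_in: int) -> int:
    """Parameters in the full weight matrix, which LoRA leaves frozen."""
    return d_out * d_in


def trainable_fraction(d_out: int, d_in: int, rank: int) -> float:
    """Share of the matrix's parameters that LoRA actually trains."""
    return lora_trainable_params(d_out, d_in, rank) / full_params(d_out, d_in)


if __name__ == "__main__":
    # Assumed sizes for illustration: one square 4096 x 4096 projection, rank 8.
    d, r = 4096, 8
    print(lora_trainable_params(d, d, r))         # 65536
    print(full_params(d, d))                      # 16777216
    print(f"{trainable_fraction(d, d, r):.4%}")   # 0.3906%
```

The real saving for a whole model depends on which layers receive adapters and on the chosen rank, so treat this as a per-matrix estimate only.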

Experience

Availability

Deployments

Experience

Availability

Projects Completed

PyTorch

TensorFlow

LangChain

BabyAGI

LlamaIndex

LoRA

QLoRA

PEFT

Serverless

DPO

Pinecone

Milvus

Weaviate

ChromaDB

FAISS

AWS Bedrock

Azure AI Studio

Google Vertex AI

NVIDIA

Docker

Kubernetes

MLflow

Weights & Biases

NeMo Guardrails

LangSmith

Private VPC Networking

$22/hour

$2,800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

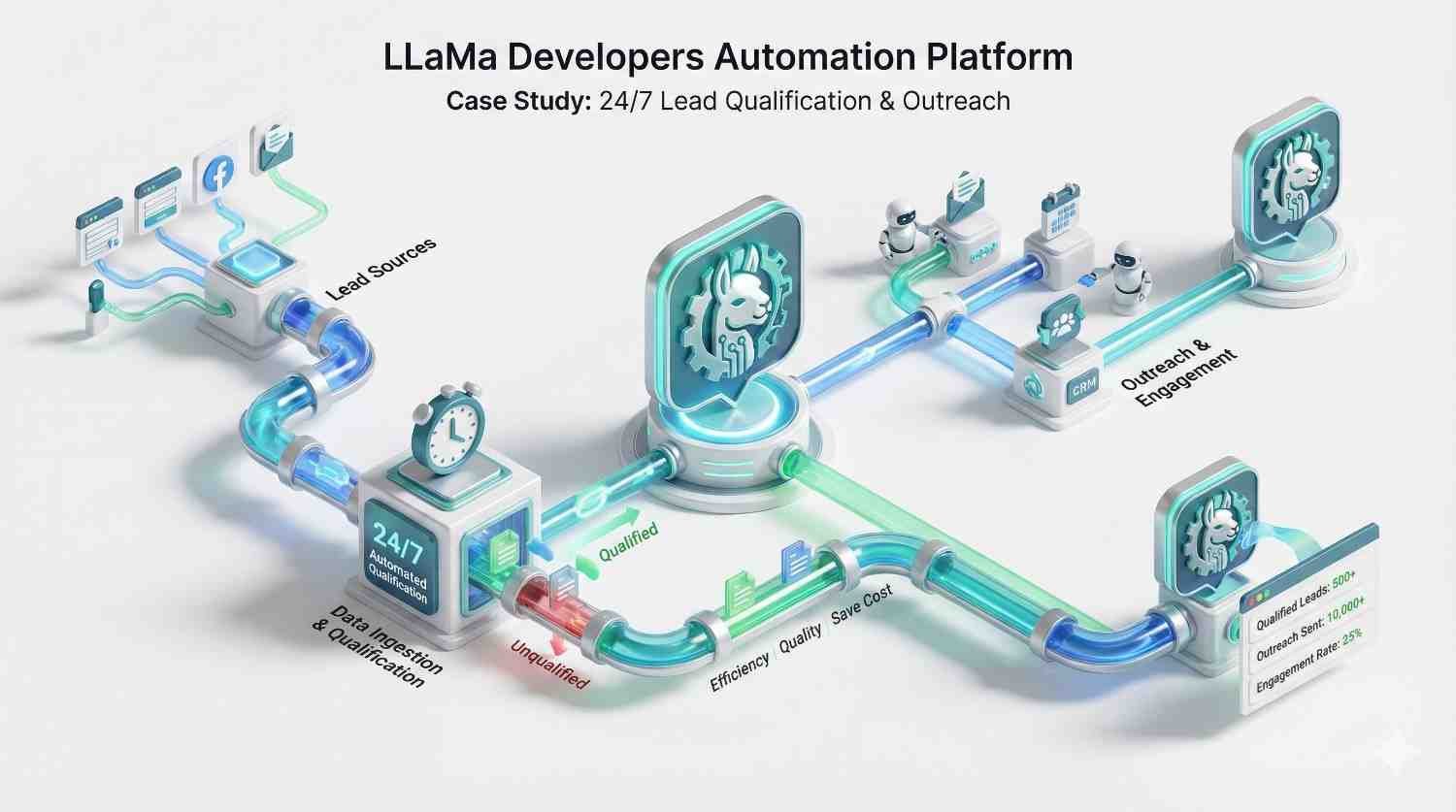

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.
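The retrieval step in this workflow can be sketched in a few lines. The example below is a simplified stand-in, not the n8n or Pinecone integration itself: it ranks documentation passages by cosine similarity against a ticket embedding and assembles the prompt that would be passed to the LLM. The three-dimensional vectors, the `INDEX` contents, and the function names are all hypothetical; a production system would obtain embeddings from a model and query a vector database.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, index, top_k=2):
    """Return the top_k (score, text) pairs ranked by cosine similarity."""
    scored = [(cosine(query_vec, vec), text) for vec, text in index]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


def build_prompt(ticket, passages):
    """Combine retrieved passages and the ticket into one grounded prompt."""
    context = "\n".join(f"- {text}" for _, text in passages)
    return (
        "Answer the support ticket using only the context below.\n"
        f"Context:\n{context}\n\nTicket: {ticket}\n"
        "Draft reply (requires human approval):"
    )


# Toy embeddings for illustration; real ones come from an embedding model.
INDEX = [
    ([1.0, 0.0, 0.0], "Reset the API key from the admin console."),
    ([0.0, 1.0, 0.0], "Invoices are issued on the first of each month."),
    ([0.9, 0.1, 0.0], "API keys expire after 90 days."),
]

if __name__ == "__main__":
    hits = retrieve([1.0, 0.05, 0.0], INDEX, top_k=2)
    print(build_prompt("My API key stopped working.", hits))
```

Keeping the human-approval instruction inside the prompt mirrors the workflow's final step, where a person signs off on the draft before it is sent.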

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
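As a rough illustration of the payload transformation, the sketch below flattens a nested order payload into one ERP row per line item and totals committed quantities per SKU. The workflow described above uses custom JavaScript inside n8n; Python is used here only to keep the examples on this page in one language. Every field name (`order`, `line_items`, `product`, `sku`, `quantity`, `order_ref`, `qty_committed`) is a hypothetical placeholder, not the actual Salesforce or SAP/NetSuite schema.

```python
def to_erp_lines(payload: dict) -> list[dict]:
    """Flatten one CRM order payload into one ERP row per line item."""
    order = payload["order"]
    rows = []
    for item in order.get("line_items", []):
        rows.append({
            "order_ref": order["id"],
            "sku": item["product"]["sku"],
            "qty_committed": int(item["quantity"]),  # tolerate numeric strings
        })
    return rows


def committed_by_sku(rows: list[dict]) -> dict[str, int]:
    """Sum committed quantities per SKU for the inventory update."""
    totals: dict[str, int] = {}
    for row in rows:
        totals[row["sku"]] = totals.get(row["sku"], 0) + row["qty_committed"]
    return totals


# Hypothetical webhook payload, for illustration only.
SAMPLE = {
    "order": {
        "id": "SO-1001",
        "line_items": [
            {"product": {"sku": "WIDGET-A"}, "quantity": "3"},
            {"product": {"sku": "WIDGET-B"}, "quantity": 1},
            {"product": {"sku": "WIDGET-A"}, "quantity": 2},
        ],
    }
}

if __name__ == "__main__":
    rows = to_erp_lines(SAMPLE)
    print(rows)
    print(committed_by_sku(rows))
```

Separating the flattening step from the aggregation step keeps each function easy to test against sample payloads before it touches a live ERP.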

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the Data Protection Officer (DPO).

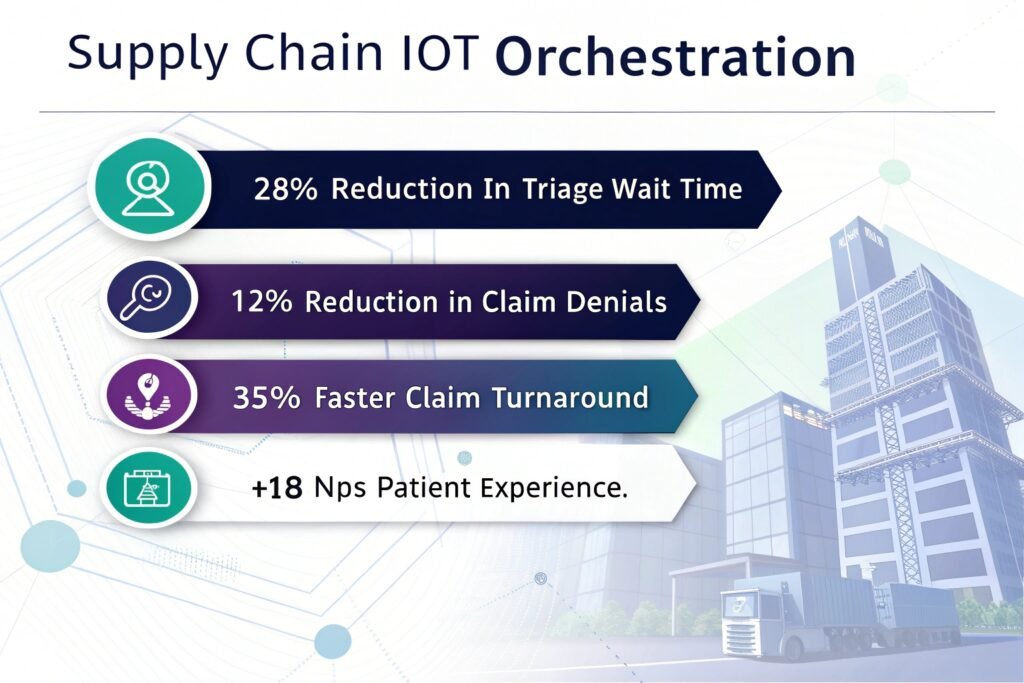

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
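One plausible form of the "lightweight statistical deviation model" is a rolling z-score check, sketched below with the standard library only. This is an assumption about the technique, not the model actually deployed; the window size, warm-up length, and three-sigma threshold are illustrative defaults, and the MQTT ingestion, PagerDuty alert, and Jira work order are left out.

```python
from collections import deque
from statistics import mean, pstdev


class DeviationDetector:
    """Flag readings that sit far from the mean of a recent window."""

    def __init__(self, window: int = 20, threshold: float = 3.0, warmup: int = 5):
        self.readings = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup

    def update(self, value: float) -> bool:
        """Return True if value is more than `threshold` sigmas from the window mean."""
        is_anomaly = False
        if len(self.readings) >= self.warmup:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep outliers out of the baseline so one spike does not mask the next.
            self.readings.append(value)
        return is_anomaly


if __name__ == "__main__":
    detector = DeviationDetector()
    stream = [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 10.1, 25.0]
    for reading in stream:
        if detector.update(reading):
            print(f"threshold breached at reading {reading}")
```

Excluding flagged readings from the window is a design choice: it keeps the baseline stable during a fault, at the cost of adapting more slowly if the machine's normal operating range genuinely shifts.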

Experts in Parameter-Efficient Fine-Tuning (PEFT)

Advanced RAG Implementation Skills

Deep Knowledge of Model Quantization

Strict Ethical AI & Compliance Focus

Full-Stack Integration Capability

Our Llama developers aren’t just data scientists; they are software engineers. They know how to wrap models in robust APIs, integrate with existing ERPs, and build intuitive front-end interfaces.

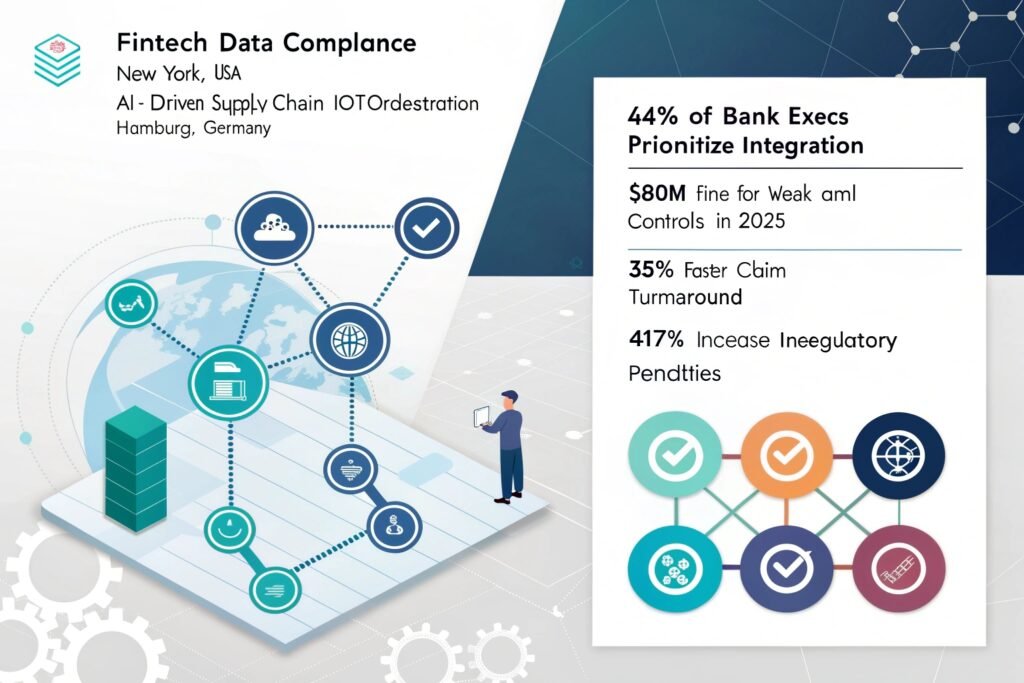

Llama offers data sovereignty and cost control. Unlike closed models, where you pay per token and share data with a vendor, Llama models can be hosted on your own infrastructure (On-Prem or Private Cloud). This is critical for enterprises in Finance, Healthcare, and Defense that cannot risk data leakage or regulatory non-compliance.

Yes. Our team is actively deploying Llama 3.1 across client projects. We migrate prompts, fine-tuning datasets, and evaluation pipelines to upgrade your legacy systems (Llama 2 or Mistral) to the latest open-weights models, so that you benefit from improved reasoning and larger context windows.

Absolutely. When you hire Llama developers from Viston, all code, model weights, adapters (LoRA), and datasets remain 100% your property. We operate on a “work for hire” basis. You retain full ownership of the AI asset, giving you the freedom to deploy, sell, or modify it without vendor lock-in.

Timeline depends on data readiness and complexity. A Proof of Concept (POC) using parameter-efficient fine-tuning (PEFT) can often be delivered in 2-3 weeks. A fully evaluated, production-grade model with RAG integration and custom UI typically takes 6-10 weeks. Our agile process ensures you see iterative progress weekly.

Yes, this is a core differentiator for Viston. We specialize in quantization (reducing model precision to 4-bit or 8-bit) and in tools such as ONNX and llama.cpp. This allows us to run powerful models on consumer-grade GPUs, local servers, or even high-end IoT devices for manufacturing and logistics clients.
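A back-of-the-envelope calculation shows why quantization makes smaller hardware viable. The sketch below estimates memory for the weights alone as parameters times bits per weight divided by 8. It deliberately ignores the KV cache, activations, and the per-block scale factors that real quantization formats store, so actual usage will be higher. The 8-billion-parameter size is an illustrative assumption.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / (1024 ** 3)


if __name__ == "__main__":
    # Assumed model size for illustration: 8e9 parameters.
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(8e9, bits):.1f} GiB")
```

Going from 16-bit to 4-bit weights cuts the weight footprint to a quarter, which is often the difference between needing a data-center GPU and fitting on a consumer card.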

Our developers have deep vertical experience across Fintech (fraud detection, reporting), Healthcare (patient data summarization), LegalTech (contract review), E-commerce (personalized recommendations), and Manufacturing (predictive maintenance). We match you with developers who understand your specific industry jargon and compliance needs.

We implement a multi-layered safety approach. This includes rigorous data cleaning before training, using Reinforcement Learning from Human Feedback (RLHF) to align model behavior, and implementing output guardrails (such as NeMo Guardrails) to filter responses. We also use RAG architectures to ground the model’s answers in your verified facts.

Hiring through Viston offers a hybrid advantage. You get the cost-efficiency of dedicated resources (avoiding the high overhead of full-service agencies) backed by the management and guarantees of an established firm (avoiding the risk of freelancers). You get enterprise-grade talent at competitive rates with transparent billing.

Yes. We work with clients across Europe (Germany, France, Spain) and have experience fine-tuning Llama on non-English datasets. We can enhance the model’s multilingual capabilities for cross-border support automation and document translation, ensuring high-quality outputs in your target markets.

We can structure a dedicated team to provide 24/7 monitoring and incident response (NOC). This ensures that critical alerts are acknowledged and triaged immediately, regardless of the hour, protecting your uptime and customer experience around the clock.

Don’t let data privacy concerns or API costs slow down your AI transformation. Partner with Viston to build secure, high-performance, and owned AI assets. With 15+ years of expertise, 2,860+ clients, and a global presence across the USA, Europe, and Australia, we are ready to deliver.