Secure, monitor, and optimize your Generative AI applications with Viston’s elite engineering teams.

Accelerate your transition from prototype to production. Viston provides top-tier engineering talent to implement end-to-end LLMOps using LangSmith. With 15+ years of technical expertise and a portfolio of 2,860+ satisfied clients across the USA, UK, Germany, France, and Australia, we are the trusted partner for enterprises demanding reliability in their AI infrastructure. When you hire LangSmith developers from Viston, you gain the ability to debug, test, and evaluate LLM apps with unprecedented precision.

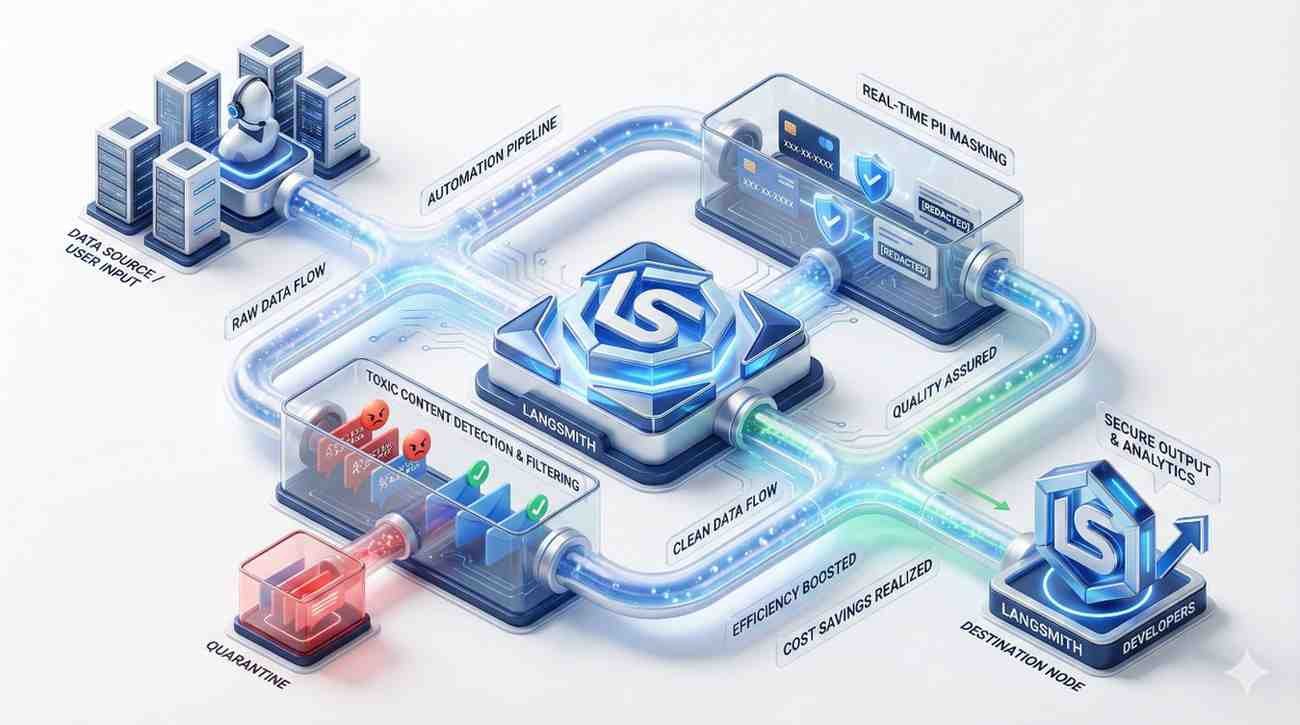

Taking Large Language Model (LLM) applications from a Jupyter notebook to a high-traffic enterprise environment requires rigorous observability. Hire LangSmith developers from Viston to dismantle the “black box” nature of AI. Our engineers specialize in instrumenting your code to capture full execution traces, allowing you to spot regressions, debug complex chains, and monitor token usage in real time.

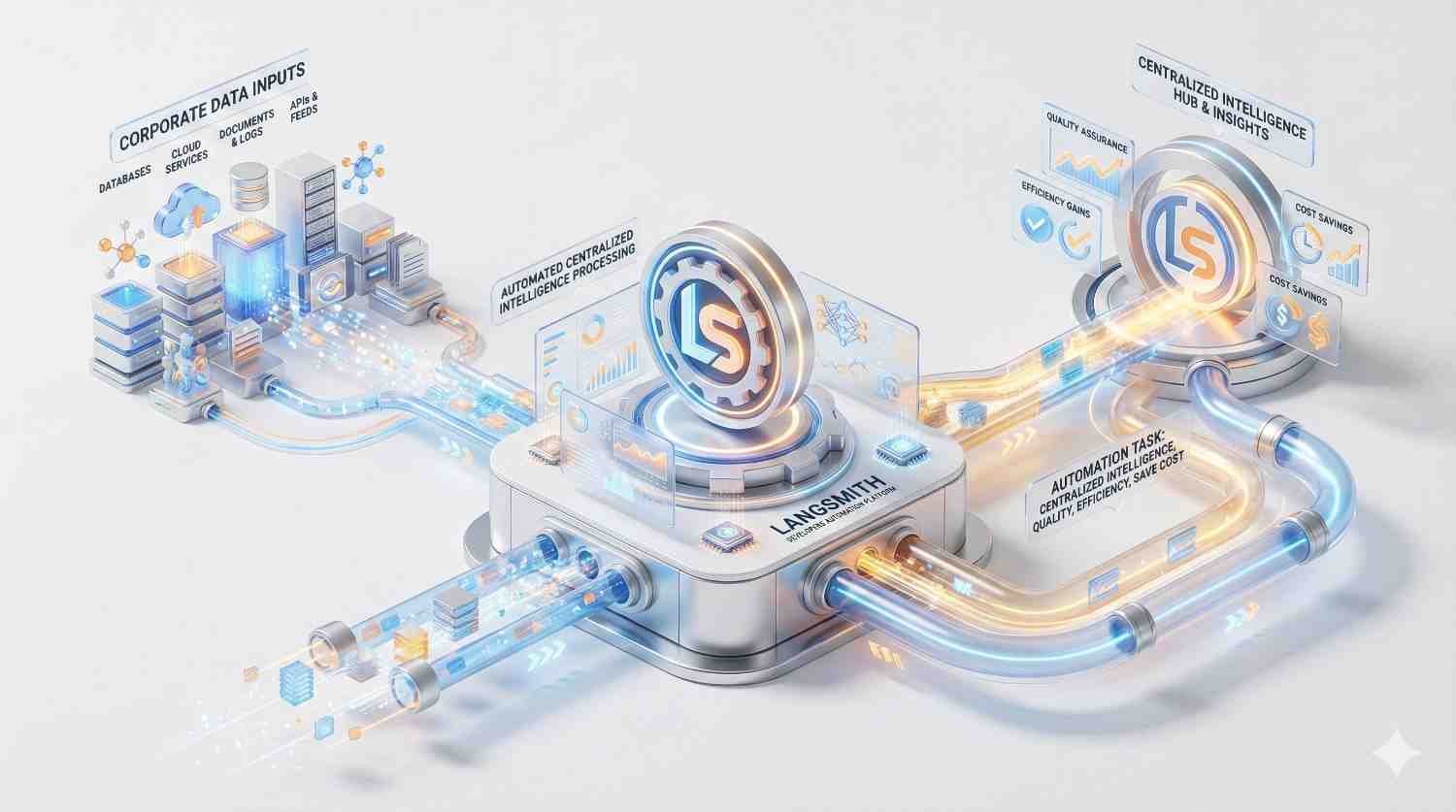

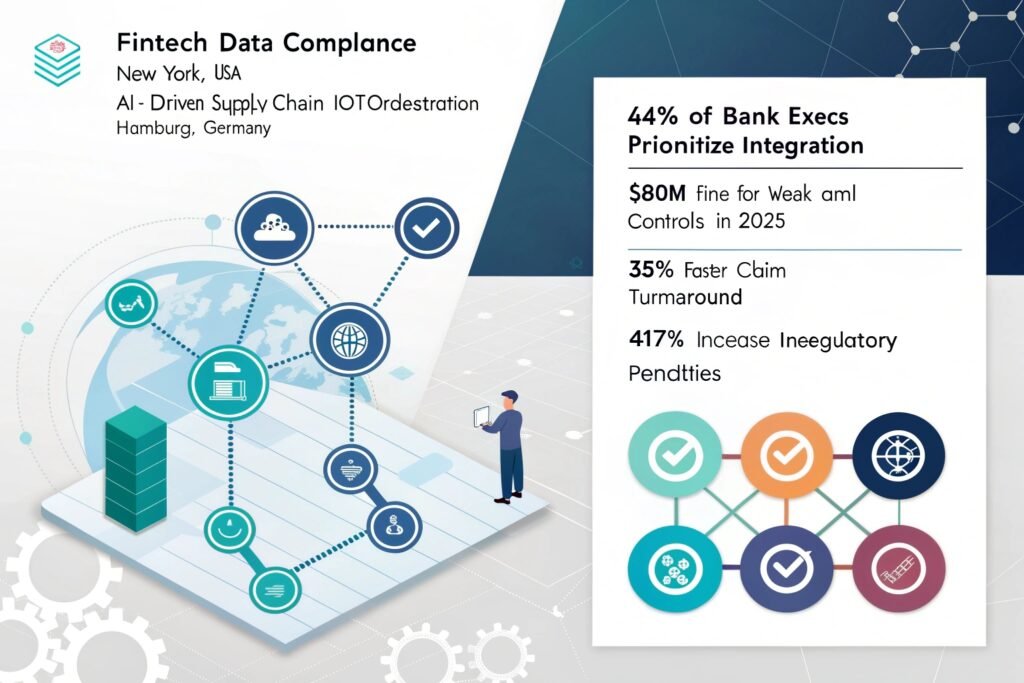

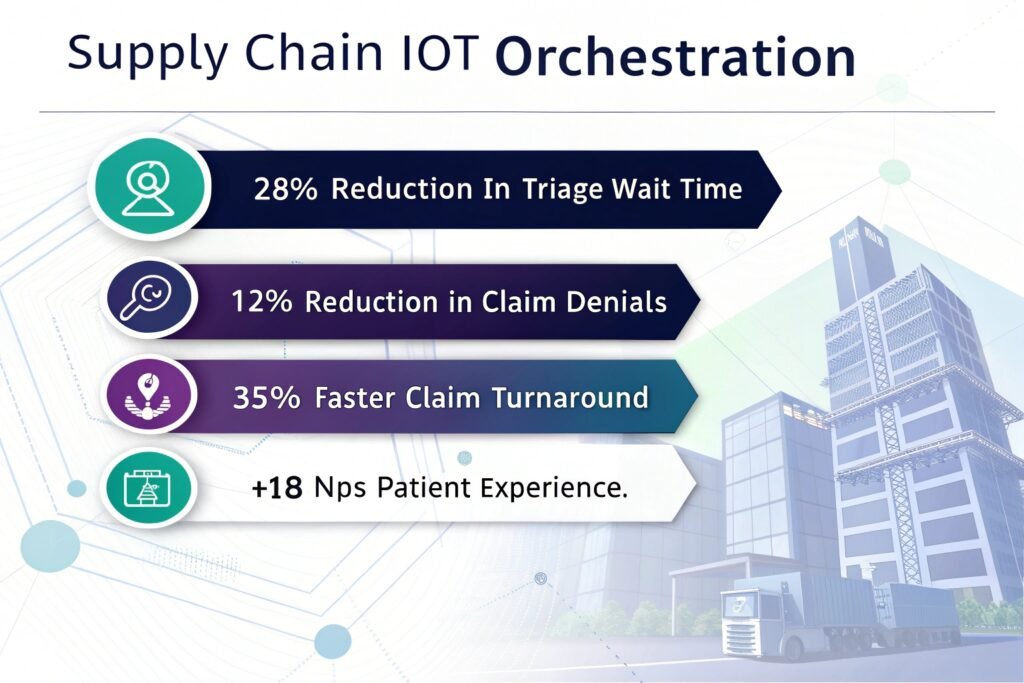

We don’t just write code; we build “LLMOps in a Box” solutions that ensure your AI agents behave predictably. Whether you are in FinTech, Healthcare, or SaaS, our developers ensure your system is ready for the demands of the US, European, and Australian markets.

Visualize every step of your chain to pinpoint exactly where an LLM hallucinated or a retrieval failed.

Move beyond “eyeballing” results by implementing programmatic datasets and evaluators for continuous quality assurance.

Use detailed analytics to optimize token spend and improve user experience metrics across global deployments.

Enable your product teams to iterate on prompts alongside engineers without breaking production code.

Experience

Availability

Deployments

Experience

Availability

Projects Completed

Python

TypeScript

LlamaIndex

LangChain

OpenAI SDK

REST

GraphQL

FastAPI

Webhooks

gRPC

Docker

Kubernetes

AWS

Vercel

Azure

Python

FastAPI

Flask

Node.js

GraphQL

LangSmith

Arize

Datadog

Prometheus

OAuth2

PII Redaction

GDPR

IAM

SOC2

$22/hour

$2,800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the Data Protection Officer (DPO).

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
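As a rough illustration of what a lightweight statistical deviation model can look like, the sketch below flags a reading when it sits more than a set number of standard deviations from a rolling baseline. The class name, window size, threshold, and sample values are all assumptions made for this example; they are not taken from an actual client workflow.

```python
from collections import deque
from statistics import mean, stdev


class DeviationDetector:
    """Flags readings that stray too far from a rolling baseline."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.readings = deque(maxlen=window)  # most recent sensor values
        self.threshold = threshold            # allowed distance in standard deviations

    def check(self, value: float) -> bool:
        """Return True when `value` breaches the threshold, then record it."""
        breached = False
        if len(self.readings) >= 5:  # wait for a minimal baseline first
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0:
                breached = abs(value - mu) / sigma > self.threshold
        self.readings.append(value)
        return breached


# Hypothetical temperature stream with one obvious spike.
detector = DeviationDetector(window=20, threshold=3.0)
stream = [70.1, 70.3, 69.8, 70.0, 70.2, 70.1, 95.0, 70.0]
alerts = [v for v in stream if detector.check(v)]
```

In a workflow like the one described, a `True` result would be the point at which the alert and the work order are raised. Note that this simple version also adds anomalous readings to the baseline, which a production model might prefer to exclude.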

LangSmith provides the “missing link” between a prototype and a reliable product. Without it, you cannot easily see the intermediate steps of an LLM chain, making debugging nearly impossible. It is essential for identifying why a model failed, tracking costs, and ensuring consistent quality through automated testing.

Yes. Our developers are experts in hybrid environments. We can integrate LangSmith tracing into existing Python or TypeScript codebases, regardless of whether you are using LangChain or a custom implementation, ensuring you get visibility without rewriting your entire application.

We configure the tracing client to ensure sensitive PII is redacted before it leaves your infrastructure. We also implement project-level access controls, ensuring that only authorized personnel can view inputs and outputs, maintaining full compliance with EU and US data regulations.
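To make the idea of redacting before data leaves your infrastructure concrete, here is a minimal sketch of a masking function that could run on trace inputs and outputs before they are sent. The two regular expressions and the function names are illustrative assumptions only; a real deployment needs a reviewed pattern list, and the exact hook for attaching such a function to the tracing client should be taken from the LangSmith documentation.

```python
import re
from typing import Any

# Hypothetical patterns for illustration; not an exhaustive PII list.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact_text(text: str) -> str:
    """Replace every pattern match with a bracketed label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def redact_payload(payload: Any) -> Any:
    """Recursively redact strings inside nested dicts and lists."""
    if isinstance(payload, str):
        return redact_text(payload)
    if isinstance(payload, dict):
        return {key: redact_payload(value) for key, value in payload.items()}
    if isinstance(payload, list):
        return [redact_payload(item) for item in payload]
    return payload  # numbers, booleans, None pass through unchanged


sample = {
    "question": "Email jane.doe@example.com or call +1 415 555 0100",
    "history": ["ok"],
}
clean = redact_payload(sample)
```

Here `clean["question"]` becomes `Email [EMAIL] or call [PHONE]`, while values without matches are left untouched.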

The ROI is often realized within the first month through cost savings (optimizing token usage), reduced engineering time spent on manual debugging, and the prevention of reputational damage caused by AI hallucinations. A reliable app retains users; a buggy one loses them.

No. While they work seamlessly together, LangSmith can trace any LLM workflow. Our developers can instrument raw OpenAI API calls, or other frameworks like LlamaIndex, to send telemetry data to LangSmith for unified observability.
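Conceptually, instrumenting a framework-free call means wrapping it so that inputs, outputs, errors, and latency are recorded for each run. The sketch below shows that pattern using only the Python standard library. It is not LangSmith's API: `traced`, `TRACE_LOG`, and `fake_llm_call` are hypothetical names, and a real integration would use the LangSmith SDK to export the records.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory sink; a real setup would export these records.
TRACE_LOG: list[dict[str, Any]] = []


def traced(name: str) -> Callable:
    """Decorator that records inputs, output or error, and latency per call."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: dict[str, Any] = {
                "name": name,
                "inputs": {"args": args, "kwargs": kwargs},
            }
            start = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call leaves a record.
                record["latency_s"] = time.perf_counter() - start
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("fake_llm_call")
def fake_llm_call(prompt: str) -> str:
    # Stand-in for a raw model API call.
    return prompt.upper()


result = fake_llm_call("hello")
```

After the call, `TRACE_LOG` holds one record with the step name, the prompt, the response, and the elapsed time, which is the kind of per-step data a trace view is built from.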

We maintain a bench of pre-vetted engineers. Typically, we can present candidates within 48 hours and have them onboarded and pushing code to your repository within 3 to 5 business days.

Absolutely. Creating custom “evals” is a core competency. We can write Python scripts that use another LLM (LLM-as-a-Judge) or heuristic logic to grade your model’s responses based on your specific business criteria (e.g., conciseness, JSON formatting, tone).
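For a sense of what heuristic grading can look like, the sketch below scores a response on two of the example criteria mentioned above: JSON formatting and conciseness. The function names, the 50-word limit, and the equal weighting are assumptions chosen for illustration; an LLM-as-a-Judge evaluator would replace these checks with a model call.

```python
import json


def is_valid_json(response: str) -> bool:
    """True when the response parses as JSON."""
    try:
        json.loads(response)
        return True
    except json.JSONDecodeError:
        return False


def is_concise(response: str, max_words: int = 50) -> bool:
    """True when the response stays within the word budget."""
    return len(response.split()) <= max_words


def grade(response: str, max_words: int = 50) -> dict:
    """Run each check and report the fraction that passed as the score."""
    checks = {
        "json_format": is_valid_json(response),
        "conciseness": is_concise(response, max_words),
    }
    score = sum(checks.values()) / len(checks)
    return {**checks, "score": score}


good = grade('{"status": "ok"}')
bad = grade("Sure! Here is your answer")
```

In this example `good` passes both checks, while `bad` is concise but not valid JSON, so it scores half. Checks like these can run over a whole dataset of recorded responses to catch regressions between prompt versions.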

Yes, we have experience migrating observability stacks. We can map your existing metrics and logs to LangSmith’s tracing format, ensuring you don’t lose historical insights while upgrading your capabilities.

We serve a broad spectrum of B2B sectors including FinTech, Healthcare, Logistics, and E-Commerce. Our developers understand the specific compliance and latency requirements unique to these high-stakes industries.

We support clients globally, with a strong focus on the USA, Canada, UK, Germany, Nordics, and Australia. Our developers work in aligned time zones to ensure overlap for stand-ups and real-time collaboration.

Don’t let “black box” AI stall your production release. Partner with Viston to gain total visibility, control, and reliability over your LLM applications. With 15+ years of expertise and a global footprint, we are the premier choice for enterprises ready to scale.