Accelerate your digital transformation with Viston’s elite OpenAI architects. We build secure, scalable, and intelligent AI ecosystems for the Fortune 500 and fast-growing tech leaders.

With over 15 years of technical expertise and a portfolio of 2,860+ satisfied clients across the USA, the UK, Germany, the Nordics, and Australia, Viston is the premier partner for high-velocity AI adoption. Whether you need to deploy sophisticated RAG (Retrieval-Augmented Generation) systems, custom fine-tuned models, or autonomous agentic workflows, our specialized engineers turn Generative AI into a competitive advantage. We don’t just integrate APIs; we engineer robust LLMOps infrastructures that drive predictive intelligence and workforce transformation.

In the rapidly evolving landscape of 2026, relying on off-the-shelf AI tools is no longer sufficient for competitive differentiation. To truly leverage the power of Generative AI, organizations must hire ChatGPT developers capable of architecting bespoke solutions that integrate seamlessly with legacy systems. Viston provides the technical bridge between raw model capabilities and business value.

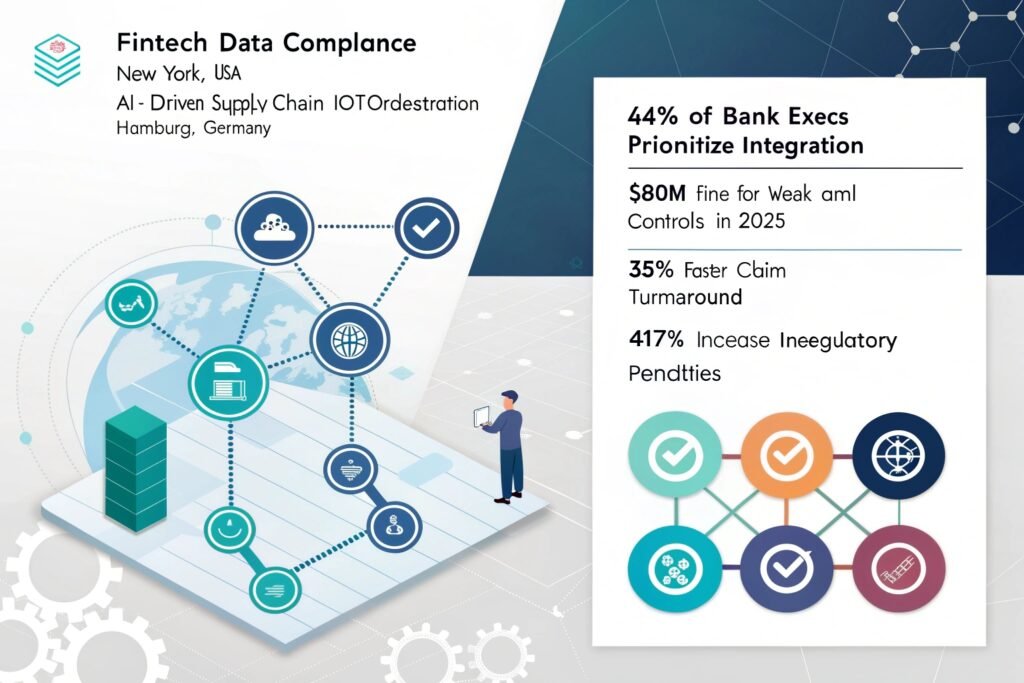

Our developers are not just coders; they are LLMOps specialists. They understand the nuances of prompt engineering, vector database orchestration, and the critical importance of “Human-in-the-Loop” (HITL) feedback mechanisms. From creating intelligent customer service agents in the UK to deploying predictive maintenance IoT analysis in German manufacturing, our global reach ensures we understand your regional compliance and operational needs.

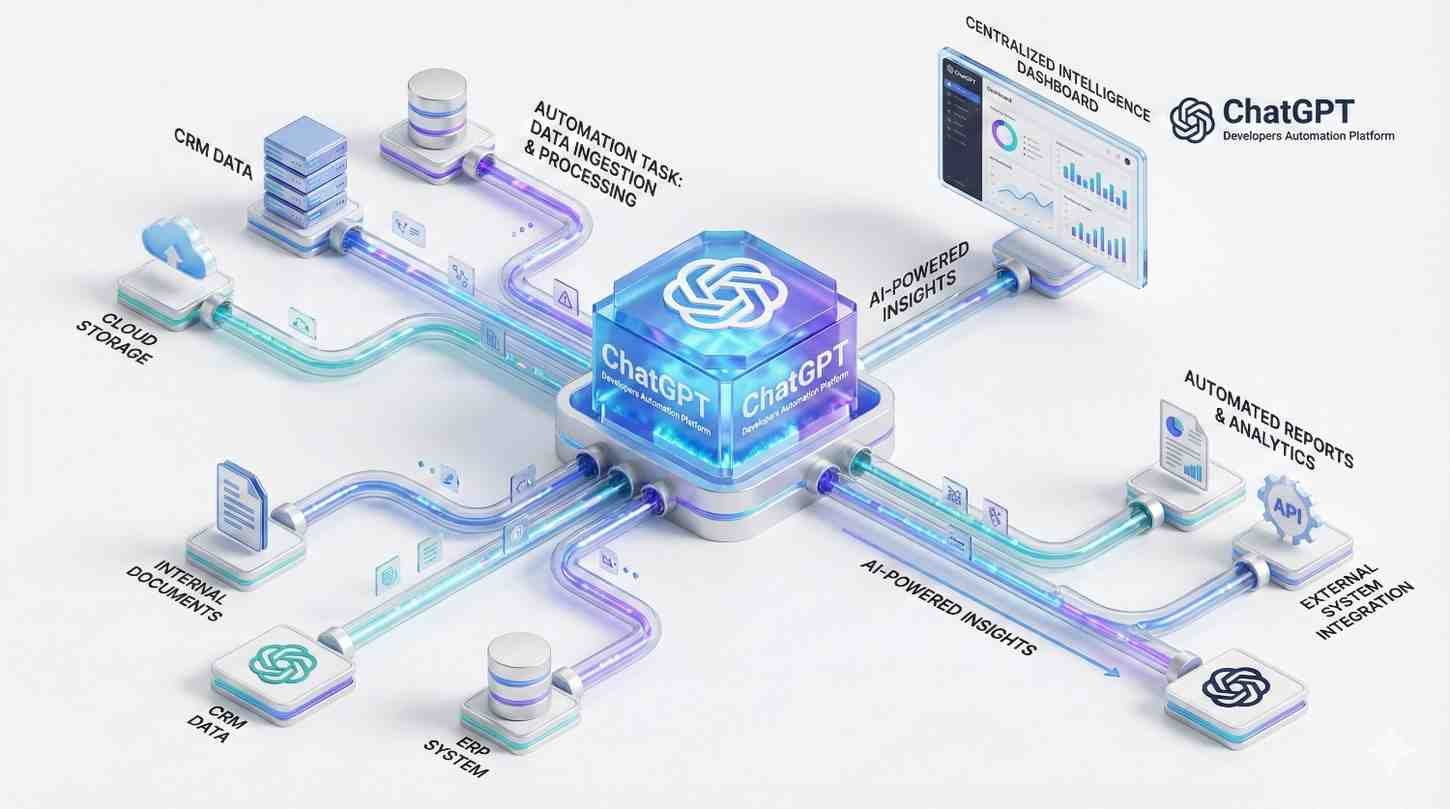

We manage the entire lifecycle of your Large Language Models, from selection and fine-tuning to deployment and monitoring.

We build "Chat with your Data" systems that keep your proprietary information private while leveraging GPT-4’s reasoning.

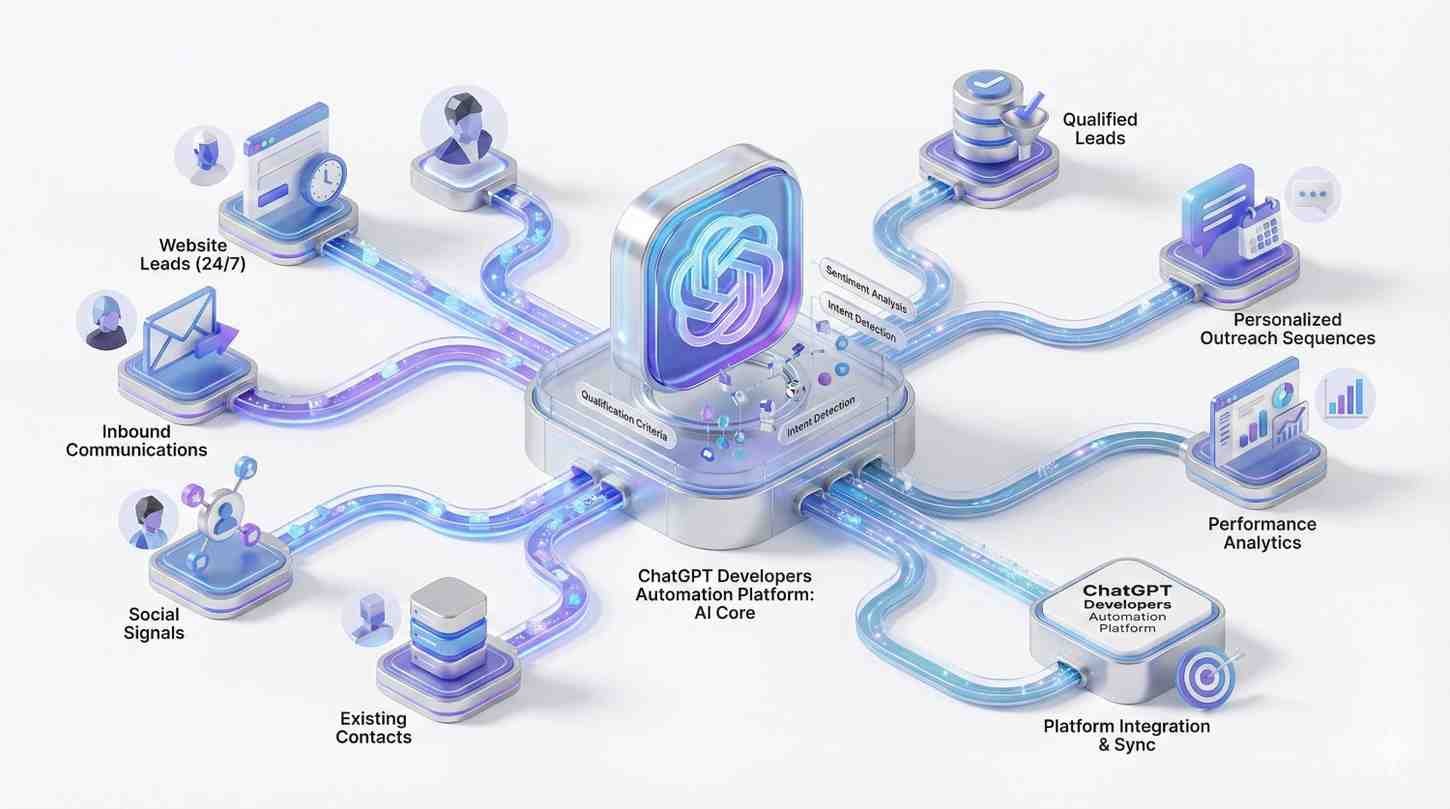

We move beyond chatbots to autonomous agents that can plan, execute tasks, and interface with your CRM and ERP.

We deploy lightweight AI models to edge devices for real-time, latency-sensitive decision-making.

Experience

Availability

Deployments

Experience

Availability

Projects Completed

Prometheus

Grafana

Dynatrace

Datadog

Nagios

TensorFlow

PyTorch

Keras

OpenAI API

Scikit-learn

Python

Ansible

Terraform

PowerShell

Bash

Kubernetes

Docker

Helm

OpenShift

Istio

AWS

Azure

Google Cloud Operations

IBM Watson AIOps

PagerDuty

Opsgenie

VictorOps

ServiceNow

Jira Service Management

$22/hour

$2800/month

Custom Quote

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.
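The retrieve-then-draft flow described above can be sketched in a few lines of Python. This is a minimal illustration, not the production workflow: the in-memory `DOCS` dictionary and bag-of-words similarity stand in for a real vector database and embedding model, and `generate` is a hypothetical callable that would wrap whichever LLM is used.

```python
import math
import re
from collections import Counter

# Hypothetical internal docs; a real setup would query a vector database.
DOCS = {
    "vpn": "To reset the VPN client, clear the cached profile and sign in again.",
    "billing": "Invoices are generated on the first day of each month.",
    "sso": "SSO login errors are usually caused by an expired SAML certificate.",
}

def _vector(text):
    """Bag-of-words term counts, a toy stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def _cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(ticket, k=1):
    """Return the ids of the k documents most similar to the ticket text."""
    q = _vector(ticket)
    ranked = sorted(DOCS, key=lambda d: _cosine(q, _vector(DOCS[d])), reverse=True)
    return ranked[:k]

def draft_reply(ticket, generate):
    """Build a grounded prompt, call the model, and hold the draft for human review."""
    doc_ids = retrieve(ticket)
    context = "\n".join(DOCS[d] for d in doc_ids)
    prompt = f"Answer using only this context:\n{context}\n\nTicket: {ticket}"
    return {"draft": generate(prompt), "sources": doc_ids, "status": "pending_human_approval"}
```

The draft is never sent directly; it carries a pending status so that a person approves it first, mirroring the human approval step in the workflow.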

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
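As a rough illustration of the payload transformation step, the sketch below flattens nested line items into flat rows and totals committed quantities per SKU. The workflow described above uses JavaScript inside n8n; Python is used here only for illustration, and every field name (`line_items`, `product`, `sku`, `quantity`, `order_ref`) is a hypothetical placeholder rather than a real Salesforce or ERP schema.

```python
def to_erp_lines(opportunity):
    """Flatten nested CRM-style line items into flat ERP rows (hypothetical schema)."""
    rows = []
    for item in opportunity.get("line_items", []):
        product = item.get("product", {})
        rows.append({
            "order_ref": opportunity["id"],
            "sku": product.get("sku"),
            "qty": int(item.get("quantity", 0)),  # quantities may arrive as strings
        })
    return rows

def committed_by_sku(rows):
    """Sum committed quantities per SKU so they can be compared with inventory."""
    totals = {}
    for row in rows:
        totals[row["sku"]] = totals.get(row["sku"], 0) + row["qty"]
    return totals
```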

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
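One simple form a lightweight statistical deviation model could take is a rolling z-score check, sketched below. The window size, the three-sigma threshold, and the five-reading warm-up are assumptions chosen for illustration; the MQTT ingestion and the PagerDuty and Jira calls are left out.

```python
from collections import deque
from statistics import mean, pstdev

class DeviationDetector:
    """Flags readings that deviate strongly from a rolling window of recent values."""

    def __init__(self, window=20, threshold=3.0, warmup=5):
        self.readings = deque(maxlen=window)  # keeps only the most recent values
        self.threshold = threshold            # allowed deviation, in standard deviations
        self.warmup = warmup                  # readings needed before checks begin

    def check(self, value):
        """Return True if value breaches the threshold, then record it."""
        breached = False
        if len(self.readings) >= self.warmup:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                breached = True
        self.readings.append(value)
        return breached
```

A caller would run `check` on each incoming sensor reading and raise the alert only when it returns `True`.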

Advanced Prompt Engineering

Our developers go beyond basic prompts. We use Chain-of-Thought (CoT) and Tree-of-Thoughts reasoning to help your AI work through complex, multi-step logic with fewer errors.

Token Cost Optimization

We architect solutions that minimize token usage through efficient context management and caching, saving enterprise clients thousands in monthly API fees.
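To make the caching idea concrete, here is a minimal sketch of a response cache keyed on a hash of the whitespace-normalized prompt. `CachedCompleter` and the `complete` callable are hypothetical names; a real deployment would also need expiry and an answer to the question of which prompts are safe to treat as identical.

```python
import hashlib

class CachedCompleter:
    """Wraps a prompt -> text callable and reuses answers for repeated prompts."""

    def __init__(self, complete):
        self._complete = complete  # hypothetical callable that hits the paid API
        self._cache = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt):
        # Collapse whitespace so trivially different prompts share one cache entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def __call__(self, prompt):
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        result = self._complete(prompt)
        self._cache[key] = result
        return result
```

Every cache hit is a request that never reaches the API, so the hit counter gives a direct view of how many calls were avoided.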

Hallucination Mitigation

We implement strict guardrails and “grounding” techniques using RAG to ensure your AI models provide factual responses based only on your data.

Seamless Systems Integration

We don’t build isolated bots. We connect ChatGPT to your ERP, CRM, and SQL databases using secure function calling and API actions.

Enterprise-Grade Security

From data encryption in transit to private cloud deployments via Azure OpenAI, we prioritize the security of your intellectual property above all else.

We prioritize security by utilizing Azure OpenAI Service or Enterprise licensing where data is not used to train public models. Additionally, we implement middleware layers that redact Personally Identifiable Information (PII) before it ever reaches the LLM, ensuring full GDPR and CCPA compliance for our B2B clients.
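A redaction middleware of this kind can be sketched with two regular expressions. The patterns below are deliberately simple illustrations that cover only email addresses and phone-like numbers; they are assumptions for this example, and real PII detection needs far broader coverage than this.

```python
import re

# Illustrative patterns only; they will miss many real-world PII formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Replace emails and phone-like numbers with placeholders before the LLM call."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text
```

The function would sit in front of the model call, so only the redacted string leaves your environment.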

Yes. While RAG is often sufficient, our developers are experts in fine-tuning models (via OpenAI or open-source alternatives like Llama) on your specific industry datasets. This is particularly useful for specialized fields like legal tech, medical coding, or complex engineering where domain-specific vocabulary is critical.

A standard chatbot simply answers questions based on training data. The Agentic AI systems we build can plan and execute tasks. They can browse the web, query your internal database, generate a PDF report, and email it to a client—all autonomously based on a high-level goal you provide.

We use a multi-layered approach. First, we use RAG to ground the model in your trusted data. Second, we implement “Self-Correction” loops where the model critiques its own output. Third, we use evaluation frameworks (like RAGAS) to constantly monitor accuracy and flag anomalies for human review.

Absolutely. This is our core strength. We wrap the AI logic in robust APIs (REST/GraphQL) that your legacy systems can consume. Whether you are running an old ERP or a modern microservices architecture, our developers ensure seamless, secure, and low-latency integration.

We build modular systems. When a new model drops (e.g., moving from GPT-4 to GPT-5), we can swap the underlying engine with minimal code changes. We also implement versioning strategies to ensure that updates to public models do not break your specific prompt logic or workflows.
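One way such modularity and versioning might look in code is a registry in which each prompt version pins the model it was tested against. This is a sketch under stated assumptions: `PromptSpec`, `PROMPTS`, `run`, and the model identifiers `model-a` and `model-b` are hypothetical, and `backends` maps each identifier to whatever client function calls that model.

```python
from dataclasses import dataclass
from typing import Callable, Dict

@dataclass(frozen=True)
class PromptSpec:
    model: str     # model identifier this prompt version was tested against
    template: str  # prompt text with a {question} placeholder

# Hypothetical registry: moving to a new model means adding a new version here.
PROMPTS: Dict[str, PromptSpec] = {
    "support-v1": PromptSpec(model="model-a", template="Answer briefly: {question}"),
    "support-v2": PromptSpec(model="model-b", template="Answer briefly and cite sources: {question}"),
}

def run(version: str, question: str, backends: Dict[str, Callable[[str], str]]) -> str:
    """Render the pinned prompt and send it to the backend for its pinned model."""
    spec = PROMPTS[version]
    backend = backends[spec.model]
    return backend(spec.template.format(question=question))
```

Because callers reference only a version name, the older version keeps running against its original model until the new one has been validated.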

Yes. Our team is at the forefront of Generative AI for Creative Acceleration in IT. We use GenAI to write post-incident reports, generate remediation scripts, and create natural language interfaces for complex database queries.

Yes. We offer an “End-to-end LLMOps platform” service. Our architects can design and build a custom AIOps platform tailored to your specific data streams and operational goals, giving you full ownership of the IP.

Viston operates globally. We provide engineers who can work in your specific time zone or offer a follow-the-sun model to ensure 24/7 coverage for critical operational monitoring and development.

This is our pre-configured operational framework. It allows companies to rapidly deploy Large Language Model capabilities within their ops environment without the months of setup usually required, accelerating your transition to AI-driven management.

Don’t let your competitors outpace you with superior AI automation. Leveraging 15+ years of expertise and a global track record across the USA, Europe, and Australia, Viston is ready to deploy the specialized talent you need.