In the era of Generative AI and high-velocity data, static scheduling is obsolete. You need dynamic, event-driven orchestration. At Viston, we provide elite engineering talent to design, deploy, and optimize Apache Airflow environments for Global 2000 enterprises. With 15+ years of expertise and more than 2,860 satisfied clients across the USA, UK, Germany, France, and Australia, Viston is the premier partner for scalable data engineering. Whether you are migrating legacy ETLs or building cutting-edge RAG agents, our developers ensure your data arrives on time, every time.

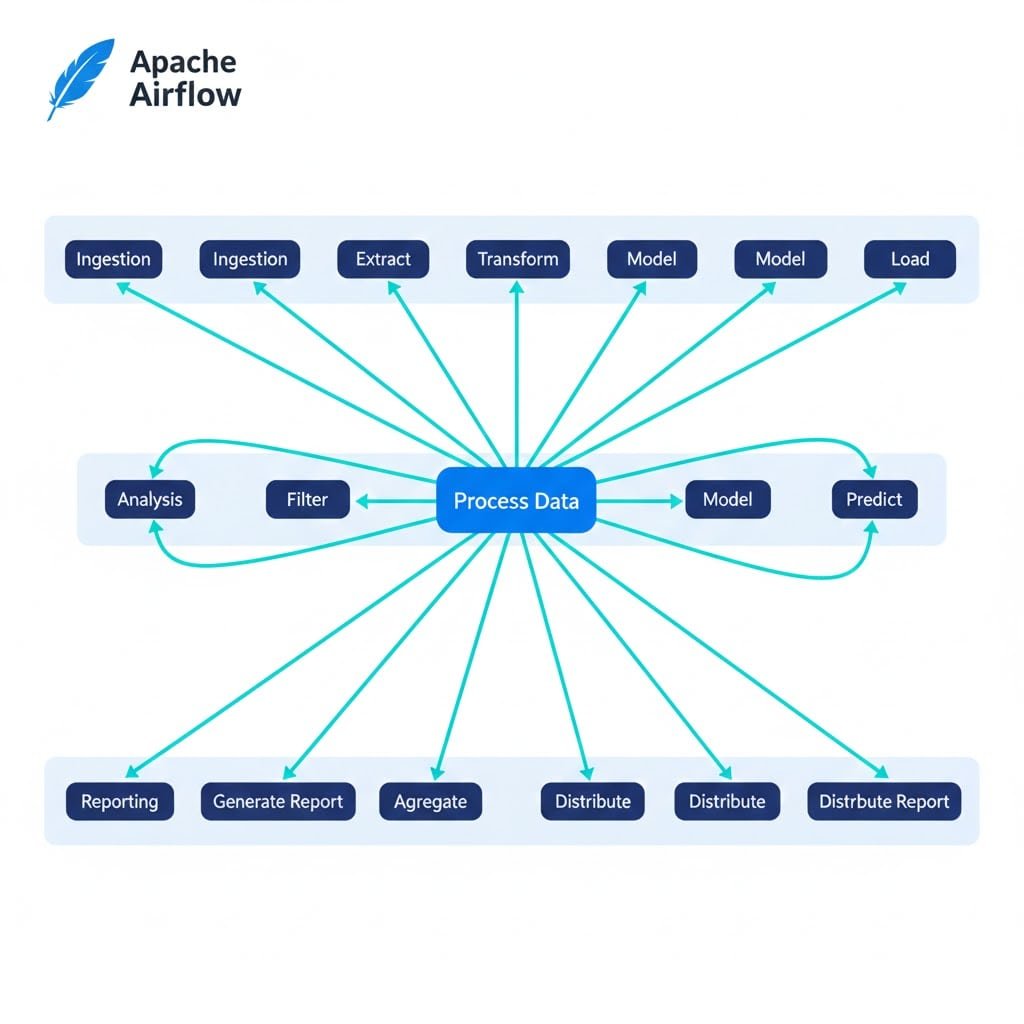

In the modern data stack, Apache Airflow is the heartbeat of operations. However, out-of-the-box implementations often fail to scale, leading to “DAG spaghetti,” scheduling latency, and opaque failures. To support advanced use cases like LLMOps, Edge AI, and predictive intelligence, you need developers who understand Airflow’s internal architecture—not just how to write a Python script.

Viston developers are Python-native orchestration specialists. We move beyond basic Cron jobs to design complex, dependency-rich workflows that react to real-time events. By treating infrastructure as code, we ensure your data pipelines are version-controlled, testable, and idempotent.

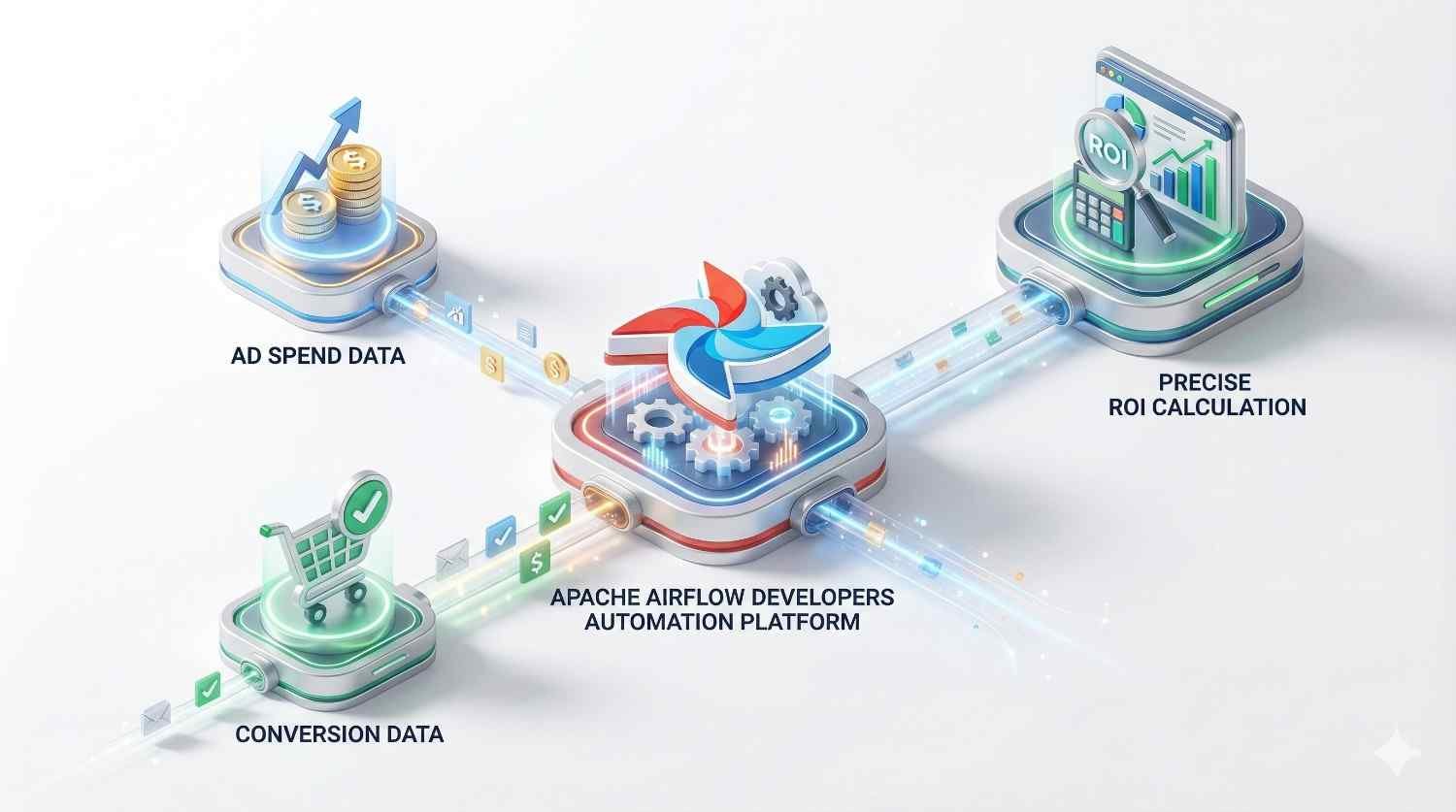

We utilize deferrable operators and sensors to trigger workflows based on external events (S3 uploads, API calls) rather than arbitrary time schedules, reducing latency and resource waste.

Our pipelines feature built-in retry mechanisms, SLA callbacks, and smart alerting to handle failures autonomously, ensuring analytics-grade reliability without constant manual intervention.
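As a rough illustration of the retry-and-alert pattern described above, here is a minimal standard-library sketch. In Airflow itself this behavior is normally configured through task arguments such as `retries`, `retry_delay`, `retry_exponential_backoff`, and `on_failure_callback` rather than hand-written loops; the helper names `backoff_delays` and `run_with_retries` below are hypothetical and only model the idea.

```python
import time
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base_seconds: float, cap_seconds: float) -> List[float]:
    """Delay before each retry: base * 2**attempt, capped at cap_seconds."""
    return [min(base_seconds * (2 ** attempt), cap_seconds) for attempt in range(retries)]


def run_with_retries(
    task: Callable[[], T],
    retries: int = 3,
    base_seconds: float = 1.0,
    cap_seconds: float = 60.0,
    on_failure: Optional[Callable[[Exception], None]] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying on any exception; call on_failure once retries run out."""
    delays = backoff_delays(retries, base_seconds, cap_seconds)
    for attempt in range(retries + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == retries:
                # Retries exhausted: fire the alert hook, then surface the error.
                if on_failure is not None:
                    on_failure(exc)
                raise
            sleep(delays[attempt])
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the sketch testable without real waiting; a production pipeline would rely on the scheduler's own retry handling instead.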

We engineer pipelines specifically for Generative AI, managing the complex sequence of data ingestion, vector database embedding, and model fine-tuning required for RAG agents.

With teams positioned in key time zones serving the US, Canada, Europe, and Australia, we provide "follow-the-sun" engineering support for continuous pipeline optimization.

Experience

Availability

Deployments

Experience

Availability

AI/ML Integrations

Experience

Availability

Migrations

Python

SQL

Bash

Scala

Java

Go

Airflow 2.0+

Dagster

Luigi

Salesforce

Cron

AWS

Azure

Google Cloud

Heroku

Digital Ocean

Docker

Kubernetes

Helm

ECS

EKS

Snowflake

BigQuery

Redshift

PostgreSQL

MongoDB

Jenkins

GitLab CI

GitHub Actions

Terraform

Ansible

Prometheus

Spark

Kafka

Hadoop

PyTorch

Pandas

$22/hour

$2800/month

Custom Quote

Teams available in US, UK/Europe, and Australian time zones.

Strict NDAs and Western legal frameworks ensure your code and data remain yours.

We are confident in our quality. Start with a trial period to verify expertise.

Our developers are trained on the latest Airflow features (2.10+) and AI trends.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.

Custom Operator Development

Standard operators often limit capabilities. Our experts build custom Python plugins to integrate proprietary internal tools or legacy systems that lack native Airflow support, ensuring no system is left behind.

Deferrable Operators Implementation

We optimize costs by implementing deferrable operators, which hand long waits off to Airflow's asyncio-based triggerer. This frees up worker slots while waiting for external tasks to complete, allowing you to run more DAGs with less infrastructure.
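To show why asynchronous waiting is cheaper than blocking, here is a toy model using only the standard library. It is not Airflow's triggerer API: `wait_for_event` is a hypothetical stand-in for a trigger, and the event names and durations are made up. The point is simply that many waits can share one event loop instead of each holding a worker.

```python
import asyncio
import time
from typing import Dict, List, Tuple


async def wait_for_event(name: str, seconds: float) -> str:
    """Stand-in for a trigger waiting on an external event (hypothetical)."""
    await asyncio.sleep(seconds)  # yields control instead of blocking a thread
    return name


async def wait_for_all(events: Dict[str, float]) -> List[str]:
    """Await every event concurrently on a single event loop."""
    return list(await asyncio.gather(*(wait_for_event(n, s) for n, s in events.items())))


def demo() -> Tuple[List[str], float]:
    """Wait on three simulated events and report which fired and how long it took."""
    events = {"s3_upload": 0.2, "api_callback": 0.2, "db_export": 0.2}
    start = time.perf_counter()
    fired = asyncio.run(wait_for_all(events))
    return fired, time.perf_counter() - start
```

Three 0.2-second waits finish in roughly 0.2 seconds in total rather than 0.6, because none of them occupies the loop while idle.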

Robust Security & RBAC

Security is paramount. We configure granular Role-Based Access Control (RBAC), secret management (Vault/AWS Secrets Manager), and network isolation to ensure your data pipeline is secure by design.

Complex Dependency Management

We solve “dependency hell.” Our team excels at managing complex cross-DAG dependencies using Datasets and Sensors, ensuring downstream analytics never run on stale or incomplete data.

Seamless Migration Services

Moving from Jenkins, Cron, or legacy Oozie? We specialize in risk-free migrations to Airflow, preserving data history and logic while modernizing the underlying architecture for future scalability.

Yes. Migration is a core competency at Viston. We have successfully migrated clients from Cron, Jenkins, Control-M, and Oozie to Apache Airflow. We follow a phased approach: auditing existing workflows, mapping dependencies, refactoring code for Pythonic best practices, and running parallel executions to ensure data parity before final cutover.
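The parallel-execution step can be checked with a simple data parity comparison. The sketch below is one possible approach, assuming both the legacy job and the migrated DAG can export their output as lists of row tuples; the function names are hypothetical and row order is deliberately ignored.

```python
import hashlib
from typing import Dict, Iterable, List, Tuple

Row = Tuple[object, ...]


def fingerprint(rows: Iterable[Row]) -> Dict[str, int]:
    """Map each row's SHA-256 digest to how often the row occurs (order-insensitive)."""
    counts: Dict[str, int] = {}
    for row in rows:
        digest = hashlib.sha256(repr(row).encode("utf-8")).hexdigest()
        counts[digest] = counts.get(digest, 0) + 1
    return counts


def parity_report(legacy: List[Row], migrated: List[Row]) -> Dict[str, int]:
    """Compare two outputs as multisets of rows and count the differences."""
    old, new = fingerprint(legacy), fingerprint(migrated)
    # Rows the legacy job produced that the migrated pipeline did not.
    missing = sum(max(n - new.get(d, 0), 0) for d, n in old.items())
    # Rows the migrated pipeline produced that the legacy job did not.
    unexpected = sum(max(n - old.get(d, 0), 0) for d, n in new.items())
    return {
        "legacy_rows": len(legacy),
        "migrated_rows": len(migrated),
        "missing": missing,
        "unexpected": unexpected,
    }
```

A cutover would typically wait until `missing` and `unexpected` are both zero across several consecutive parallel runs.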

We adhere to “Security by Design” principles. Our developers configure Airflow with strict RBAC (Role-Based Access Control), integrate with enterprise identity providers (SSO/LDAP), and ensure all credentials are stored in secure vaults (HashiCorp Vault, AWS Secrets Manager). We also implement encryption for data at rest and in transit.

Absolutely. Viston positions itself as a leader in LLMOps. Our developers build pipelines that automate the entire lifecycle of Large Language Models, including data scraping, vector embedding generation, RAG knowledge base updates, and model fine-tuning, helping you deploy AI agents faster.

Yes. Inefficient DAGs waste compute resources. We optimize your environment by implementing deferrable operators (which release worker slots during wait times), tuning scheduler parameters, and right-sizing Kubernetes clusters. We often reduce cloud orchestration costs by 30-40% for our clients.

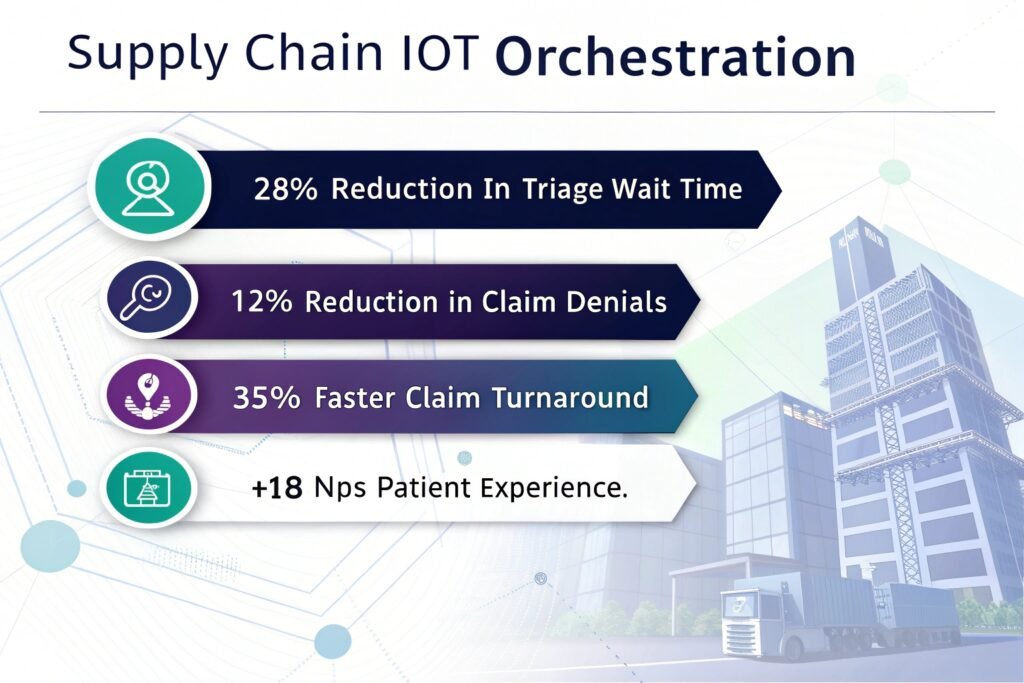

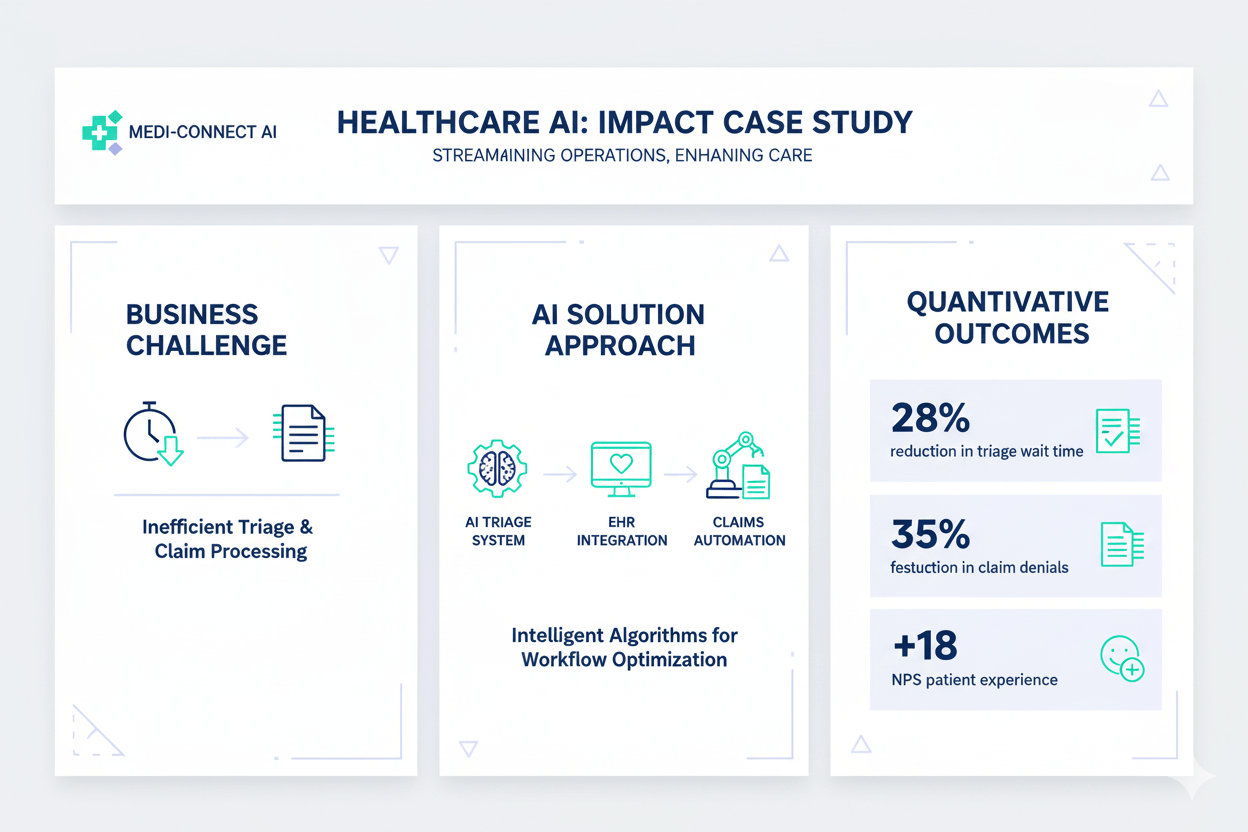

We have deep domain expertise in Fintech (fraud detection, reconciliation), Healthcare (HIPAA-compliant data lakes), E-commerce (inventory sync, recommendation engines), and Logistics (supply chain visibility). Our developers understand the specific regulatory and speed requirements of these sectors.

Our streamlined process allows for rapid deployment. Typically, we can present shortlisted candidates within 48 hours of understanding your requirements. Once an interview is successful, the developer can usually be onboarded and start contributing to your repository within 3 to 5 business days.

Yes, our team is proficient in the latest Airflow 2.4+ features, including Datasets for data-aware scheduling. This allows us to build event-driven architectures where DAGs run only when specific datasets are updated, rather than relying on arbitrary time-based schedules.
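In Airflow 2.4+, data-aware scheduling is expressed by declaring `Dataset` objects as task `outlets` and passing them to a DAG's `schedule`. The standard-library sketch below is only a simplified model of that behavior, not Airflow code: the `DatasetScheduler` class and the DAG and dataset names are hypothetical. It shows a consumer DAG becoming runnable only after every dataset it depends on has been updated.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class DatasetScheduler:
    """Toy model: a DAG runs once every dataset it depends on has been updated."""

    dag_inputs: Dict[str, Set[str]]  # DAG name -> dataset names it waits on
    pending: Dict[str, Set[str]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Every DAG starts out waiting for all of its input datasets.
        self.pending = {dag: set(inputs) for dag, inputs in self.dag_inputs.items()}

    def dataset_updated(self, dataset: str) -> List[str]:
        """Record an update and return the DAGs that become runnable, in name order."""
        triggered = []
        for dag in sorted(self.pending):
            self.pending[dag].discard(dataset)
            if not self.pending[dag]:
                triggered.append(dag)
                # After running, the DAG waits for fresh updates to all inputs.
                self.pending[dag] = set(self.dag_inputs[dag])
        return triggered
```

For example, a `report` DAG that depends on both `orders` and `customers` stays idle after an `orders` update alone and runs only once `customers` has been refreshed as well.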

Yes. We serve a global client base. We offer developers who can work in your specific time zone (EST, PST, GMT, CET, AEST) to ensure real-time collaboration with your internal engineering teams and provide support during your critical business hours.

We treat DAGs as production software. Our developers implement rigorous CI/CD pipelines for Airflow, including unit tests for custom operators, integration tests for DAG logic, and linting and formatting checks (Flake8, Black) to ensure maintainability and prevent regressions in production.
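One common CI check is a DAG integrity test: confirming that task dependencies form a valid, cycle-free graph before anything is deployed. In a real Airflow project this is usually done by loading the DAG folder with `DagBag` and asserting that there are no import errors. The sketch below, assuming Python 3.9+ for `graphlib`, shows the underlying idea with hypothetical task names and helper functions.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set


def execution_order(dependencies: Dict[str, Set[str]]) -> List[str]:
    """Return a valid run order; raises CycleError if the graph contains a loop.

    `dependencies` maps each task to the set of tasks that must run before it.
    """
    return list(TopologicalSorter(dependencies).static_order())


def has_cycle(dependencies: Dict[str, Set[str]]) -> bool:
    """True if the tasks cannot be ordered because they depend on each other in a loop."""
    try:
        execution_order(dependencies)
    except CycleError:
        return True
    return False
```

A test suite can then assert `not has_cycle(...)` for every pipeline definition, failing the build before a broken dependency graph reaches production.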

Client satisfaction is our priority. If a developer does not meet your expectations during the trial period or at any point in the engagement, we will immediately provide a replacement with overlapping skills at no additional cost to ensure project continuity.

Stop wrestling with broken pipelines and start orchestrating value. Whether you need to scale Generative AI, improve predictive intelligence, or automate complex workflows, Viston provides the elite talent you need.