Real‑Time AI Observability for Agentic Workflows: Beyond Logs and Metrics

The year is 2026, and artificial intelligence has evolved. We’ve moved beyond simple chatbots to sophisticated AI agents. These autonomous systems now handle complex, multi-step tasks across our enterprises. They automate customer support, optimize supply chains, and even write and deploy code. This leap forward brings incredible power. But it also introduces a critical new challenge: understanding what these agents are doing and why.

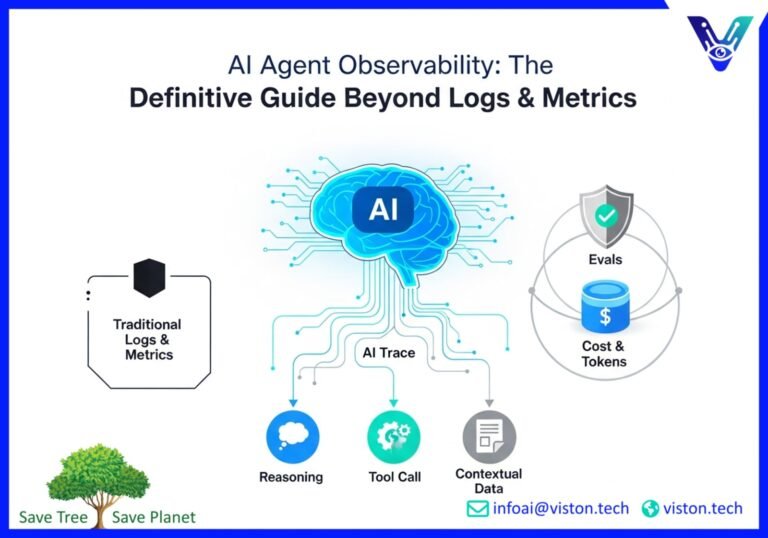

For decades, DevOps and IT teams have relied on logs and metrics to monitor their software. This traditional approach works well for predictable, human-written code. But AI agents are different. They are non-deterministic. They make their own decisions. As we deploy these powerful new workflows, we’re discovering that old monitoring methods are leaving us in the dark. A new imperative has emerged: real-time AI observability.

This isn’t just about collecting data. It’s about gaining deep, actionable insights into complex AI systems. It’s about ensuring they operate reliably, cost-effectively, and safely. This post explores the new frontier of AI observability for agentic workflows. We’ll cover why it’s different, what you need to track, and the tools that can give you the visibility you need to innovate with confidence.

The New Frontier: Why Agentic Workflows Are Different

To grasp the need for a new observability paradigm, we must first understand how agentic workflows differ from the microservices architectures we’re used to. Microservices are like a well-organized factory assembly line. Each service has a specific, predictable job. The workflow is largely deterministic: given the same input and state, a service is expected to produce the same output. Monitoring that kind of system with logs and metrics is a well-understood problem.

Agentic workflows, on the other hand, are like a team of expert artisans. You don’t give them a rigid set of instructions. You give them a goal, access to tools, and the autonomy to figure out the best way to achieve it. This introduces incredible flexibility but also significant unpredictability.

- Autonomous Decision-Making: Agents decide their own next steps. They can choose from various tools and data sources to solve a problem. This dynamic nature means there isn’t a single, predefined execution path.

- Complex, Multi-Step Reasoning: An agent might make dozens of sequential decisions to fulfill a single request. It reasons, acts, observes the outcome, and adapts its approach.

- Tool Use (Function Calling): Agents interact with the outside world by calling APIs and external tools. They might query a database, search the web, or trigger another software process.

- Non-Deterministic Behavior: Ask the same agent the same question twice, and you might get two different paths to the same answer. Language models typically generate output by sampling, so results can vary from run to run.

This complexity means that when something goes wrong, a simple error log is rarely enough. You can’t just look at the final output. You need to understand the agent’s entire thought process. Why did it choose that path? Which tool did it call, and with what parameters? Where did its reasoning lead it astray? This is where true AI observability begins.

Beyond Logs and Metrics: What to Trace in Agentic AI

Effective AI observability goes far beyond traditional methods. It requires capturing a rich, interconnected set of data that tells the complete story of an agent’s journey. For DevOps and AI teams in 2026, this means focusing on tracing, evaluations (evals), and cost monitoring in real time.

Tracing the Agent’s Journey: Steps and Decisions

The core of AI observability is tracing. A trace is a detailed, step-by-step record of the agent’s entire workflow for a given task. Think of it as a flight data recorder for your AI. It captures every thought, decision, and action.

A complete trace should include:

- Initial Prompt: The original request that kicked off the workflow.

- Reasoning Steps: The agent’s internal monologue. This shows how it breaks down the problem and forms a plan.

- Tool Calls: Which tools the agent selected, the exact inputs it provided, and the outputs it received.

- Contextual Data: Any information the agent retrieved, such as from a database or knowledge base, to inform its decisions.

- Final Output: The end result delivered to the user.

By visualizing this entire sequence, developers can debug failures faster, identify bottlenecks, and understand unexpected behavior.
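To make the trace contents listed above concrete, here is a minimal sketch of what such a record could look like in Python. The `Step` and `Trace` classes and their field names are hypothetical, chosen for illustration; real observability platforms define their own schemas.

```python
from __future__ import annotations

import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class Step:
    """One recorded event in the agent's run (hypothetical schema)."""
    kind: str            # "reasoning", "tool_call", or "retrieval"
    name: str            # tool name or a short label
    inputs: dict[str, Any]
    output: Any
    duration_ms: float


@dataclass
class Trace:
    """Everything recorded for one user request."""
    prompt: str
    steps: list[Step] = field(default_factory=list)
    final_output: str | None = None

    def record(self, kind: str, name: str, inputs: dict[str, Any],
               output: Any, started_at: float) -> None:
        """Append a step; `started_at` is a time.perf_counter() reading."""
        duration_ms = (time.perf_counter() - started_at) * 1000
        self.steps.append(Step(kind, name, inputs, output, duration_ms))

    def to_json(self) -> str:
        """Serialize the whole trace so it can be stored or displayed."""
        return json.dumps(asdict(self), indent=2, default=str)


# Example run: one reasoning step followed by one tool call.
trace = Trace(prompt="Where is order 1234?")

t0 = time.perf_counter()
trace.record("reasoning", "plan", {"thought": "Look up the order first."}, None, t0)

t0 = time.perf_counter()
trace.record("tool_call", "check_order_status", {"order_id": "1234"},
             {"status": "shipped"}, t0)

trace.final_output = "Order 1234 has shipped."
print(trace.to_json())
```

Keeping every step in one ordered list is a deliberate simplification. Agents that call sub-agents or run steps in parallel would likely need parent and child identifiers on each step.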

Evals: The New Quality Assurance for AI

In the world of agentic AI, quality is not just about being bug-free. It’s about being helpful, accurate, and safe. This is where evals come in. An “eval” is a test that measures the quality of an agent’s output against predefined criteria. Evals are the new form of unit testing for AI.

Key quality dimensions to evaluate include:

- Faithfulness: Does the agent’s response accurately reflect its source data, or is it hallucinating?

- Helpfulness: Does the answer actually solve the user’s problem?

- Tool Correctness: Did the agent call the right tool with the correct parameters?

- Safety and Compliance: Does the response adhere to safety guardrails and avoid generating toxic or biased content?

These evaluations can be automated with another AI model (LLM-as-a-judge), checked against deterministic rules, or supplemented with human-in-the-loop feedback for more nuanced assessments. For more on this, check out this excellent guide to AI Agent Evaluation.
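Of the approaches above, deterministic rules are the easiest to illustrate. The sketch below checks the Tool Correctness dimension: was the expected tool called, and did each checked parameter pass a validation rule? The function name, the shape of the tool-call records, and the ISO date rule are all assumptions made for this example.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class EvalResult:
    name: str
    passed: bool
    detail: str


def eval_tool_correctness(
    tool_calls: list[dict[str, Any]],
    expected_tool: str,
    param_checks: dict[str, Callable[[Any], bool]],
) -> EvalResult:
    """Pass if the expected tool was called and every checked parameter is valid."""
    calls = [c for c in tool_calls if c["name"] == expected_tool]
    if not calls:
        return EvalResult("tool_correctness", False, f"{expected_tool} was never called")
    for call in calls:
        for param, check in param_checks.items():
            value = call["args"].get(param)
            if value is None or not check(value):
                return EvalResult("tool_correctness", False,
                                  f"bad value for {param!r}: {value!r}")
    return EvalResult("tool_correctness", True, "ok")


# Hypothetical rule: the tool expects dates as YYYY-MM-DD.
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")
checks = {"date": lambda v: bool(ISO_DATE.match(v))}

bad_calls = [{"name": "check_order_status",
              "args": {"order_id": "1234", "date": "03/14/2026"}}]
good_calls = [{"name": "check_order_status",
               "args": {"order_id": "1234", "date": "2026-03-14"}}]

bad_result = eval_tool_correctness(bad_calls, "check_order_status", checks)
good_result = eval_tool_correctness(good_calls, "check_order_status", checks)
print(bad_result)
print(good_result)
```

Rule-based checks like this are cheap and repeatable, but they only cover what you can express as a rule. Dimensions such as helpfulness usually need a judge model or human review.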

Tokenomics: Monitoring Tokens and Cost

Every call to a large language model (LLM) comes at a cost, measured in “tokens” (pieces of words). An agentic workflow can involve numerous LLM calls, and costs can spiral out of control if not monitored closely. Effective cost monitoring is non-negotiable.

You need real-time visibility into:

- Token Consumption: Track the number of input and output tokens for every step in the workflow.

- Cost Per Interaction: Calculate the precise cost of each user request from start to finish.

- Model Performance vs. Cost: Analyze which models provide the best balance of performance and cost for different tasks. A powerful model might be overkill for simple requests.

By tracking these metrics, you can optimize prompts, cache common responses, and route tasks to the most cost-effective models. This prevents budget overruns and ensures the economic viability of your AI applications.
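The arithmetic behind Cost Per Interaction is simple enough to sketch. The model names and per-token prices below are invented for illustration and do not reflect any provider’s real rates.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical prices in USD per 1,000,000 tokens; substitute real rates.
PRICES = {
    "small-model": {"input": 0.50, "output": 1.50},
    "large-model": {"input": 5.00, "output": 15.00},
}


@dataclass
class LLMCall:
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(call: LLMCall) -> float:
    """Cost of one LLM call in USD."""
    price = PRICES[call.model]
    total = call.input_tokens * price["input"] + call.output_tokens * price["output"]
    return total / 1_000_000


def interaction_cost(calls: list[LLMCall]) -> float:
    """Cost of one user request: the sum over every LLM call it triggered."""
    return sum(call_cost(c) for c in calls)


# One user request that triggered two LLM calls.
calls = [
    LLMCall("small-model", input_tokens=2_000, output_tokens=200),
    LLMCall("large-model", input_tokens=10_000, output_tokens=1_000),
]
print(f"${interaction_cost(calls):.4f}")  # prints "$0.0663"
```

In this made-up example the single large-model call accounts for $0.065 of the $0.0663 total, which is the kind of imbalance that per-step tracking is meant to surface.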

Essential Tools and Patterns for AI Observability in 2026

The demand for deeper AI insights has led to a new ecosystem of specialized observability tools. These platforms are built from the ground up to handle the complexities of agentic systems. They provide a unified view that connects development, testing, and production monitoring.

The leading tools in 2026 generally fall into three categories:

- End-to-End Observability Platforms: Solutions like Arize AI and Maxim AI offer comprehensive, end-to-end platforms. They unify tracing, evals, drift detection, and production monitoring into a single workflow. These are ideal for enterprises seeking a complete solution for building and operating reliable AI.

- Developer-First and Open-Source Tools: Platforms like Langfuse (open source) and LangSmith (a commercial platform from the LangChain team) integrate closely with popular development frameworks like LangChain. They provide tracing and debugging capabilities that developers value for their flexibility and control.

- Cloud-Native Solutions: Major cloud providers offer their own suites of tools. Google’s Vertex AI Agent Builder, for instance, includes built-in monitoring and evaluation capabilities that are tightly integrated with their AI ecosystem.

The most effective pattern emerging is the adoption of a unified platform that closes the loop between pre-production testing and real-time production monitoring. This allows teams to capture problematic interactions in production, automatically convert them into test cases, and use them to continuously improve the agent’s performance.
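As a rough illustration of that loop, the sketch below turns a captured production trace into a regression test case. The trace layout and the `trace_to_test_case` helper are hypothetical and not taken from any specific platform.

```python
from __future__ import annotations

from typing import Any


def trace_to_test_case(trace: dict[str, Any], expected_behavior: str) -> dict[str, Any]:
    """Keep the inputs needed to replay a failure, plus a note on what should happen."""
    return {
        "input": trace["prompt"],
        "observed_output": trace["final_output"],
        "observed_tools": [call["name"] for call in trace["tool_calls"]],
        "expected_behavior": expected_behavior,
    }


# A problematic interaction captured in production (made-up data).
failed_trace = {
    "prompt": "Where is my order from March 14?",
    "tool_calls": [{"name": "check_order_status", "args": {"date": "03/14/2026"}}],
    "final_output": "Sorry, could you rephrase that?",
}

regression_suite: list[dict[str, Any]] = []
regression_suite.append(
    trace_to_test_case(failed_trace, "Returns the order status without asking to rephrase.")
)
print(regression_suite[0])
```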

Practical Examples: AI Observability in Action

Let’s make this concrete with a couple of real-world scenarios.

Example 1: The Confused Customer Support Agent

A company deploys an AI agent to handle customer support queries. Suddenly, they notice a spike in escalations to human agents. Traditional logs show no errors, just that conversations are stalling.

With a proper AI observability platform, they open a trace of a failed interaction. They see the agent correctly identifies the user’s issue but then enters a loop. It repeatedly calls the “check_order_status” tool with the wrong date format. The tool returns a valid (but empty) response, so no error is logged. The agent, unable to proceed, simply apologizes and asks the user to rephrase. The trace instantly reveals the root cause: a misunderstanding in the agent’s reasoning about how to format dates for that specific tool. The team can now fix the prompt or the tool interaction with precision.
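A failure like this can also be flagged automatically. The following sketch scans a trace’s tool calls for identical calls that keep returning empty results. It assumes the agent retries with the same arguments each time; the record layout and the threshold of three are likewise assumptions.

```python
from __future__ import annotations

import json
from collections import Counter
from typing import Any


def find_repeated_empty_calls(
    tool_calls: list[dict[str, Any]], threshold: int = 3
) -> list[tuple[str, int]]:
    """Return (tool name, count) for identical calls that came back empty
    at least `threshold` times."""
    counts: Counter = Counter()
    for call in tool_calls:
        if call["result"]:  # the call returned data, so it is not part of a stall
            continue
        # Serialize the arguments so identical calls map to the same key.
        key = (call["name"], json.dumps(call["args"], sort_keys=True))
        counts[key] += 1
    return [(name, n) for (name, _), n in counts.items() if n >= threshold]


# Made-up trace: three identical empty lookups, then one unrelated success.
stalled_call = {
    "name": "check_order_status",
    "args": {"order_id": "1234", "date": "03/14/2026"},
    "result": [],
}
tool_calls = [stalled_call] * 3 + [
    {"name": "lookup_customer", "args": {"id": "7"}, "result": {"name": "Ada"}},
]

print(find_repeated_empty_calls(tool_calls))  # prints [('check_order_status', 3)]
```

A check like this catches the symptom, not the cause. The trace is still what shows that the date format was the problem.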

Example 2: The Expensive Financial Analysis Agent

A financial firm uses an agent to summarize market news. At the end of the month, they are shocked by their LLM bill, which is ten times higher than projected. The operations team has no way of knowing why.

Using an AI observability tool with granular cost monitoring, they quickly filter for the most expensive interactions. They discover that a single type of query, “summarize today’s tech market news,” is responsible for 80% of the costs. Drilling down into the traces, they find the agent is pulling the full text of dozens of articles and feeding them all into the most powerful (and expensive) LLM for summarization. The solution is clear: they implement a two-step process. First, a cheaper model scans the headlines to select the three most relevant articles. Then, only those three articles are passed to the expensive model for a high-quality summary. The next month, their bill is back on track.
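The two-step fix can be sketched as follows. The two model calls are passed in as plain functions, and the `fake_` versions here are stand-ins so the example runs without any LLM. All names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Article:
    headline: str
    body: str


def summarize_news(
    articles: list[Article],
    rank_headlines: Callable[[list[str]], list[int]],
    summarize: Callable[[str], str],
    top_k: int = 3,
) -> str:
    # Step 1: the cheap model sees only headlines and returns article
    # indices ordered from most to least relevant.
    ranked = rank_headlines([a.headline for a in articles])
    selected = [articles[i] for i in ranked[:top_k]]
    # Step 2: only the selected article bodies reach the expensive model.
    return summarize("\n\n".join(a.body for a in selected))


def fake_rank_headlines(headlines: list[str]) -> list[int]:
    # Stand-in for the cheap model: headlines mentioning "tech" come first.
    return sorted(range(len(headlines)), key=lambda i: "tech" not in headlines[i].lower())


expensive_inputs: list[str] = []


def fake_summarize(text: str) -> str:
    # Stand-in for the expensive model: record what it was given.
    expensive_inputs.append(text)
    return f"Summary of {len(text)} characters of source text."


articles = [
    Article("Tech stocks rally", "body A"),
    Article("Oil prices dip", "body B"),
    Article("New tech IPO announced", "body C"),
    Article("Central bank holds rates", "body D"),
    Article("Chipmakers lead tech gains", "body E"),
    Article("Tech layoffs slow", "body F"),
]

summary = summarize_news(articles, fake_rank_headlines, fake_summarize)
print(summary)
```

Passing the models in as functions keeps the routing logic testable on its own, and the recorded `expensive_inputs` shows that only three of the six article bodies reached the costly step.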

The Future is Observable: Building Trust in Autonomous AI

Agentic AI is poised to revolutionize how businesses operate. But this future depends on our ability to build systems that are reliable, transparent, and trustworthy. We cannot afford to operate black boxes, especially when they are interacting with our customers and making critical business decisions.

Real-time AI observability is the bedrock of this trust. It moves us beyond simply logging outputs to truly understanding processes. It gives us the control to debug failures, the insight to manage costs, and the confidence to innovate safely. For any organization serious about deploying AI at scale in 2026, embracing this new observability imperative is not just a best practice—it is essential for success.

How Viston AI Can Help

Navigating the complex world of agentic AI requires a partner with deep expertise and proven solutions. At Viston AI, we specialize in implementing industry-specific AI platforms that are powerful, compliant, and fully observable. We have a track record of helping global enterprises accelerate their software delivery cycles and enhance security through intelligent automation.

Our solutions are designed with observability at their core, providing the transparency and control you need to manage sophisticated AI workflows. Whether you are in financial services, healthcare, or technology, we can help you build the robust, AI-powered systems of the future.

Ready to unlock the full potential of your AI initiatives? Contact Viston AI today to learn how our AI-powered solutions can transform your business.

Frequently Asked Questions (FAQs)

What is AI observability?

AI observability is the practice of monitoring and understanding the internal workings of complex AI systems, like AI agents. It goes beyond traditional logs and metrics to include detailed tracing of reasoning steps, tool usage, cost analysis, and continuous quality evaluation.

How do AI agents differ from traditional software?

Traditional software is deterministic; it follows a predefined set of instructions written by a human. AI agents are non-deterministic and autonomous. They can reason, create their own multi-step plans, and use external tools to achieve a goal, making their behavior less predictable.

Why are logs and metrics not enough for AI agents?

Logs and metrics can tell you if an agent produced an output or an error, but they can’t tell you why. Because agents make their own decisions, you need to trace their entire reasoning process to understand their behavior and debug issues effectively.

What are “evals” in the context of AI agents?

“Evals” (evaluations) are tests, usually automated, that measure the quality and safety of an AI agent’s performance. They check for things like faithfulness to source data (to catch hallucinations), helpfulness, tool correctness, and adherence to safety guidelines.

How can I monitor the cost of my AI agents?

Cost monitoring requires tracking the number of “tokens” (pieces of words) used in every call to an LLM throughout an agent’s workflow. AI observability platforms provide real-time dashboards that break down costs by interaction, user, or model.

What’s the first step to implementing AI observability?

The first step is to integrate a tracing solution into your AI application. This will begin capturing the step-by-step execution of your agentic workflows, providing the foundational data needed for debugging, evaluation, and cost analysis.

Can open-source tools handle agent observability?

Yes, open-source tools like Langfuse provide powerful tracing and observability features, especially for teams that want deep customization and control. They are excellent for developer-centric workflows and can be a great starting point for building an observability practice.

How does AI observability improve compliance?

By keeping a detailed record of every decision and action an AI agent takes, observability provides a clear audit trail, especially when those records are stored so they cannot be altered after the fact. This helps organizations demonstrate regulatory compliance and show that their AI systems are operating within established ethical and safety guardrails.
