Human-Centered Agent Governance: RACI for AI Agents and People

Welcome to 2026. Artificial intelligence is no longer just a tool; it’s a teammate. AI agents are taking on complex tasks, making decisions, and interacting with customers, often with minimal human supervision. This leap in autonomy brings incredible opportunities for efficiency and innovation. But it also raises a critical question: when an AI agent makes a mistake, who is accountable?

The answer isn’t in the code. It’s in the governance. As we integrate these powerful new agents into our workflows, we must move beyond purely technical controls. We need clear, human-centered accountability structures. This is where a time-tested project management framework, RACI, comes in, reimagined for the age of AI. An AI governance framework that clearly defines roles and responsibilities is no longer a nice-to-have; it’s a business necessity.

This blog post will explore how to implement a Human-Centered Agent Governance model using the RACI framework. We will provide actionable insights for enterprise C-Suite executives, AI/ML engineers, IT leaders, and anyone else navigating the complexities of AI adoption. We’ll cover everything from defining roles and responsibilities to establishing escalation models and providing the right training. We will also provide a sample policy to help you get started.

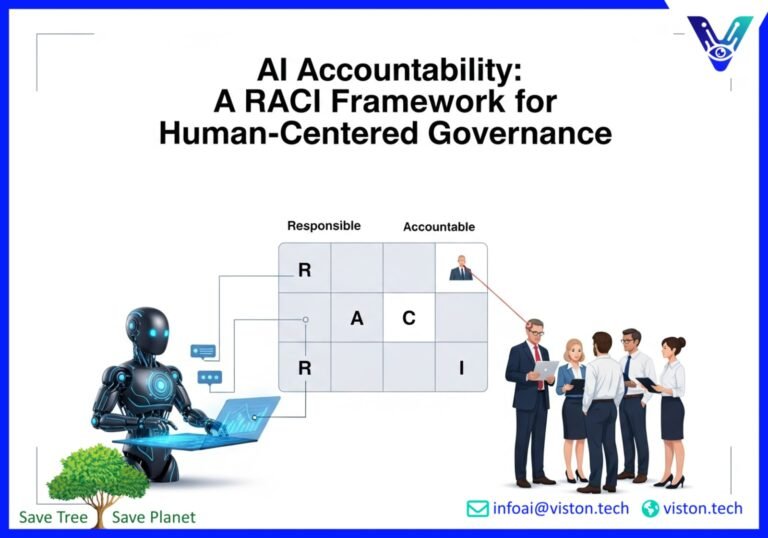

What is RACI and Why Does it Matter for AI Governance?

RACI is a simple yet powerful tool for clarifying roles and responsibilities in any project or process. It’s an acronym that stands for:

- Responsible: The person or AI agent who does the work.

- Accountable: The person who is ultimately answerable for the correct and thorough completion of the task.

- Consulted: The people or systems that provide input and expertise.

- Informed: The people or systems that need to be kept up to date on progress.

Traditionally, RACI has been used to manage human teams. But in a world where AI agents are increasingly autonomous, we need to adapt this framework to include them. This is the core of Human-Centered Agent Governance: creating a clear, transparent, and accountable operating model for both your human and AI workforce.

Introducing RACI for AI Agents: A New Operating Model

Applying RACI to AI agents requires us to think differently about responsibility and accountability. An AI agent can be responsible for a task, but it can never be truly accountable. Accountability requires a level of understanding, ethics, and legal standing that AI, in its current form, simply doesn’t possess. Accountability must always rest with a human.

Here’s a breakdown of how to apply RACI in a human-AI hybrid environment:

- Responsible: This role can be assigned to either a human or an AI agent. For example, an AI agent could be responsible for processing customer data, while a human is responsible for handling complex customer escalations.

- Accountable: This role must always be assigned to a human. This is the person who is ultimately answerable for the outcome of the task, regardless of whether it was performed by a human or an AI. For example, a product manager might be accountable for the performance of an AI-powered recommendation engine.

- Consulted: Both humans and AI systems can be consulted. A human expert might be consulted on a complex legal question, while an AI system might be consulted to provide data analysis.

- Informed: Again, both humans and AI systems can be in this category. A project manager needs to be informed of a project’s status, and an AI-powered monitoring system might need to be informed of changes to a production environment.
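The one hard rule in this breakdown, that Accountable is always a human, is easy to enforce mechanically. Here is a minimal sketch of one way to do it, assuming you record assignments in code or configuration. The class names (`Actor`, `Assignment`) and the example actors are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class ActorKind(Enum):
    HUMAN = "human"
    AI_AGENT = "ai_agent"


class RaciRole(Enum):
    RESPONSIBLE = "R"
    ACCOUNTABLE = "A"
    CONSULTED = "C"
    INFORMED = "I"


@dataclass(frozen=True)
class Actor:
    name: str
    kind: ActorKind


@dataclass(frozen=True)
class Assignment:
    task: str
    actor: Actor
    role: RaciRole

    def __post_init__(self) -> None:
        # Rule from the text: accountability must always rest with a human.
        # R, C, and I may be held by either a human or an AI agent.
        if self.role is RaciRole.ACCOUNTABLE and self.actor.kind is not ActorKind.HUMAN:
            raise ValueError(
                f"{self.actor.name} is an AI agent and cannot be "
                f"Accountable for '{self.task}'"
            )


# Hypothetical actors, mirroring the recommendation-engine example above.
recommender = Actor("recommendation-engine", ActorKind.AI_AGENT)
product_manager = Actor("product manager", ActorKind.HUMAN)

ok = [
    Assignment("serve recommendations", recommender, RaciRole.RESPONSIBLE),
    Assignment("serve recommendations", product_manager, RaciRole.ACCOUNTABLE),
]
```

Trying to create `Assignment("serve recommendations", recommender, RaciRole.ACCOUNTABLE)` raises a `ValueError`, so an invalid assignment fails at the moment it is written down rather than after an incident.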

Defining Roles and Responsibilities in Your AI Governance Framework

To successfully implement a RACI framework for AI governance, you need to clearly define the roles and responsibilities of both your human and AI team members. This requires a deep understanding of your organization’s goals, risk appetite, and the capabilities of your AI systems. For more on building robust governance frameworks, explore the insights from a recent World Economic Forum report on AI agent governance.

Key Roles in a Human-Centered AI Governance Model

Here are some of the key roles you’ll need to define in your AI governance operating model:

- AI Agent: The AI system responsible for executing specific tasks.

- Human-in-the-Loop: The person responsible for monitoring the AI agent’s performance and intervening when necessary.

- AI Product Manager: The person accountable for the overall performance of the AI agent and its alignment with business goals.

- Data Scientist/ML Engineer: The person responsible for developing, training, and maintaining the AI model.

- Legal and Compliance Officer: The person responsible for ensuring the AI agent complies with all relevant laws and regulations.

- Ethics Committee: A cross-functional team responsible for reviewing and approving AI use cases and ensuring they align with the organization’s ethical principles.

Building Your AI RACI Matrix

Once you have defined the key roles, you can create a RACI matrix to map out the responsibilities for each task in the AI lifecycle. This matrix should be a living document, updated regularly as your AI systems evolve and new use cases are introduced.

Here’s a simplified example of a RACI matrix for an AI-powered customer service chatbot:

| Task | AI Chatbot | Human-in-the-Loop | AI Product Manager | Legal & Compliance |
|---|---|---|---|---|
| Answering customer queries | R | C | A | I |
| Escalating complex issues | R | A | I | C |
| Monitoring for bias | C | R | A | C |
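Because the matrix is a living document, it can help to keep it in a machine-readable form and check it automatically whenever it changes. The sketch below encodes the chatbot matrix above as a plain dictionary and checks three things: each task has exactly one Accountable party, at least one Responsible party, and no AI agent in the Accountable column. The "exactly one A per task" check is a common RACI convention that we are assuming here, and the names `RACI_MATRIX`, `AI_ACTORS`, `validate`, and `accountable_for` are illustrative.

```python
# Columns that are AI agents; every other column is treated as a human role.
AI_ACTORS = {"AI Chatbot"}

RACI_MATRIX = {
    "Answering customer queries": {
        "AI Chatbot": "R", "Human-in-the-Loop": "C",
        "AI Product Manager": "A", "Legal & Compliance": "I",
    },
    "Escalating complex issues": {
        "AI Chatbot": "R", "Human-in-the-Loop": "A",
        "AI Product Manager": "I", "Legal & Compliance": "C",
    },
    "Monitoring for bias": {
        "AI Chatbot": "C", "Human-in-the-Loop": "R",
        "AI Product Manager": "A", "Legal & Compliance": "C",
    },
}


def validate(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Return a list of problems; an empty list means the matrix passes."""
    problems = []
    for task, row in matrix.items():
        accountable = [actor for actor, role in row.items() if role == "A"]
        responsible = [actor for actor, role in row.items() if role == "R"]
        if len(accountable) != 1:
            problems.append(f"{task}: expected exactly one A, found {len(accountable)}")
        if not responsible:
            problems.append(f"{task}: no one is Responsible")
        for actor in accountable:
            if actor in AI_ACTORS:
                problems.append(f"{task}: AI agent '{actor}' cannot be Accountable")
    return problems


def accountable_for(task: str) -> str:
    """Look up the accountable human for a task in RACI_MATRIX."""
    return next(actor for actor, role in RACI_MATRIX[task].items() if role == "A")
```

Running `validate(RACI_MATRIX)` on the example returns an empty list, and `accountable_for("Escalating complex issues")` returns `"Human-in-the-Loop"`. A check like this could run whenever someone edits the matrix, so gaps in accountability surface before a new use case goes live.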

Developing Robust Escalation Models

Even the most advanced AI agents will encounter situations they can’t handle. That’s why a clear and efficient escalation model is a critical component of any AI governance framework. Your escalation model should define when and how an AI agent should hand off a task to a human.

Triggers for Escalation

Here are some common triggers for escalating a task from an AI agent to a human:

- Low confidence score: The AI agent’s confidence in its answer falls below a threshold you have defined for the task.

- High-risk situation: The task involves a high level of risk, such as a large financial transaction or a critical medical diagnosis.

- Customer request: The customer explicitly requests to speak with a human.

- Ethical dilemma: The AI agent encounters a situation that raises ethical concerns.
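These four triggers translate naturally into a single check that runs before the agent acts. The following is a rough sketch, not a production design: the `0.75` threshold, the `TaskState` fields, and the idea that an upstream policy check sets `ethical_flag` are all assumptions made for illustration.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.75  # assumed cutoff; tune per task and risk appetite


@dataclass
class TaskState:
    confidence: float                       # agent's confidence, 0.0 to 1.0
    high_risk: bool = False                 # e.g. a large financial transaction
    customer_asked_for_human: bool = False
    ethical_flag: bool = False              # set by a hypothetical policy check


def escalation_reasons(state: TaskState) -> list[str]:
    """Return every trigger that fired, in the order listed in the text."""
    reasons = []
    if state.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low_confidence")
    if state.high_risk:
        reasons.append("high_risk")
    if state.customer_asked_for_human:
        reasons.append("customer_request")
    if state.ethical_flag:
        reasons.append("ethical_concern")
    return reasons


def should_escalate(state: TaskState) -> bool:
    """Any single trigger is enough to hand the task to a human."""
    return bool(escalation_reasons(state))
```

Returning the list of reasons, instead of a bare yes or no, gives the human who picks up the task an immediate explanation of why it reached them, and gives you data on which triggers fire most often.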

Designing an Effective Escalation Path

Your escalation path should be as seamless as possible for the customer. That means the human who takes over the task receives all the necessary context, so the customer never has to repeat themselves. A well-designed escalation path can be the difference between a frustrating customer experience and a positive one. For deeper insights into designing these pathways, PwC’s 2025 Responsible AI survey offers valuable perspectives on operationalizing governance.
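One way to make "all the necessary context" concrete is to define a handoff record and refuse to route it until the required fields are filled in. The sketch below assumes a simple in-memory queue and hypothetical field names (`conversation_id`, `reasons`, `transcript`, `agent_summary`); a real deployment would route into whatever ticketing or contact-center system you already use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Handoff:
    conversation_id: str
    reasons: list[str]       # why the agent escalated
    transcript: list[str]    # the conversation so far
    agent_summary: str       # the agent's short summary for the human
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def missing_context(self) -> list[str]:
        """Name any required context field that is empty."""
        missing = []
        if not self.reasons:
            missing.append("reasons")
        if not self.transcript:
            missing.append("transcript")
        if not self.agent_summary.strip():
            missing.append("agent_summary")
        return missing


def hand_off(packet: Handoff, queue: list[Handoff]) -> None:
    """Place a handoff on the human queue, rejecting incomplete packets."""
    gaps = packet.missing_context()
    if gaps:
        raise ValueError(f"handoff is missing context: {', '.join(gaps)}")
    queue.append(packet)
```

For example, `hand_off(Handoff("c-1", ["customer_request"], ["Customer: I want a person."], "Refund query, unresolved."), queue)` succeeds, while a packet with an empty transcript is rejected before it ever reaches a human.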

The Importance of Training in AI Governance

Implementing a new AI governance framework is not just about creating new processes and procedures. It’s also about fostering a culture of accountability and responsible AI use. This requires ongoing training for all employees who interact with AI systems.

Training for Different Roles

Your training program should be tailored to the specific roles and responsibilities of your employees. For example:

- AI/ML Engineers: Need training on ethical AI development, bias detection, and the technical aspects of your AI governance framework.

- Business Leaders: Need training on the strategic implications of AI, risk management, and how to foster a culture of responsible AI innovation.

- Frontline Employees: Need training on how to use AI systems effectively, when to escalate issues, and how to communicate with customers about AI.

By investing in comprehensive training, you can empower your employees to make informed decisions about AI and ensure that your governance framework is more than just a document—it’s a living part of your organizational culture.

Sample AI Governance Policy

To help you get started, here is a sample AI governance policy that you can adapt to your organization’s specific needs. This policy is intended to be a starting point and should be reviewed and approved by your legal and compliance teams.

1. Purpose

This policy establishes the principles and guidelines for the responsible and ethical use of artificial intelligence at [Your Company]. Our goal is to leverage AI to drive innovation and create value for our customers, while ensuring that our AI systems are fair, transparent, and accountable.

2. Scope

This policy applies to all employees, contractors, and third-party vendors who are involved in the development, deployment, or use of AI systems at [Your Company].

3. Principles

- Human-Centered: We will prioritize human well-being and ensure that our AI systems are designed to augment, not replace, human intelligence.

- Fairness and Equity: We will take proactive steps to mitigate bias in our AI systems and ensure that they are fair and equitable for all users.

- Transparency and Explainability: We will be transparent about our use of AI and strive to make our AI systems as explainable as possible.

- Accountability and Oversight: We will establish clear lines of accountability for our AI systems and ensure that there is meaningful human oversight.

4. Roles and Responsibilities

This section should detail your RACI matrix and the specific responsibilities of each role in your AI governance framework.

5. Risk Management

This section should outline your process for identifying, assessing, and mitigating the risks associated with your AI systems.

6. Training and Awareness

This section should describe your training program for employees and other stakeholders.

7. Policy Review and Updates

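This policy will be reviewed and updated at least annually, and sooner if there are significant changes to our AI systems, business processes, or the regulatory landscape, to ensure that it remains relevant and effective.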

Conclusion: The Future of Work is a Human-AI Partnership

As we move further into 2026, the question is no longer whether AI will be a part of our workforce, but how we will manage the human-AI partnership. A Human-Centered Agent Governance model, built on the principles of the RACI framework, provides a clear path forward. By establishing clear roles, responsibilities, and accountability structures, we can unlock the full potential of AI while ensuring that it is used in a way that is safe, ethical, and beneficial for all.

The journey to effective AI governance is ongoing. It requires a commitment to continuous learning, adaptation, and collaboration. But by taking a human-centered approach, we can build a future where humans and AI work together to solve some of the world’s most pressing challenges.

Take the Next Step with Viston AI

Ready to build a robust AI governance framework for your organization? Viston AI offers end-to-end AI-powered solutions to help you navigate the complexities of AI adoption. Our team of experts can help you develop a tailored governance model that aligns with your business goals and values. Contact Viston AI today to learn more about our AI-powered solutions and how we can help you build a future-ready enterprise.

Frequently Asked Questions (FAQs)

What is the biggest challenge in implementing a RACI framework for AI governance?

The biggest challenge is often cultural. It requires a shift in mindset from viewing AI as just a tool to seeing it as a new kind of teammate. This requires clear communication, comprehensive training, and strong leadership to foster a culture of accountability and trust.

How can we ensure that our AI agents are aligned with our company’s ethical principles?

This requires a multi-faceted approach. It starts with building a diverse and inclusive team to develop your AI systems. It also involves conducting regular bias audits, establishing an ethics committee, and being transparent about your use of AI.

What is the role of the C-Suite in AI governance?

The C-Suite plays a critical role in setting the tone for responsible AI use. They are responsible for championing the AI governance framework, allocating the necessary resources, and ensuring that AI is aligned with the company’s overall strategic objectives.

How often should we review and update our AI governance framework?

Your AI governance framework should be a living document. It should be reviewed and updated at least annually, or more frequently if there are significant changes to your AI systems, business processes, or the regulatory landscape.

How can we measure the effectiveness of our AI governance framework?

You can measure the effectiveness of your AI governance framework by tracking key metrics such as the number of AI-related incidents, customer satisfaction scores, and employee adoption rates. Regular audits and assessments can also help you identify areas for improvement.

What’s the difference between AI being ‘responsible’ and ‘accountable’?

An AI can be ‘responsible’ for executing a task, like a calculator is responsible for a calculation. However, a human must be ‘accountable’—the one who answers for the outcome, especially if it’s wrong. Accountability involves ethical and legal responsibility that AI cannot hold.

Can an AI be in the ‘Consulted’ or ‘Informed’ roles in a RACI chart?

Yes. An AI system can be ‘consulted’ to provide data analysis or predictions to help a human make a decision. It can also be ‘informed’ by receiving data updates to adjust its operations, much like a dashboard is updated with new information.

How does a RACI framework help with AI transparency?

A RACI framework improves transparency by clearly documenting who is accountable for an AI’s decisions and actions. This clarity helps stakeholders understand the lines of responsibility and ensures that there is always a human to answer for the AI’s performance, which is a key principle of transparent AI operations.