Prompt Engineering Masterclass: 2025 Techniques for Enterprise Reliability

The era of treating generative AI as a novelty is over. In 2025, enterprises are moving beyond ad-hoc “prompt-and-pray” experiments and demanding predictable, reliable, and scalable AI solutions. The difference between a parlor trick and a production-ready powerhouse lies in a disciplined approach to prompt engineering 2025. For C-suite leaders, AI/ML engineers, and product managers, mastering this discipline is no longer optional—it’s the key to unlocking true enterprise value.

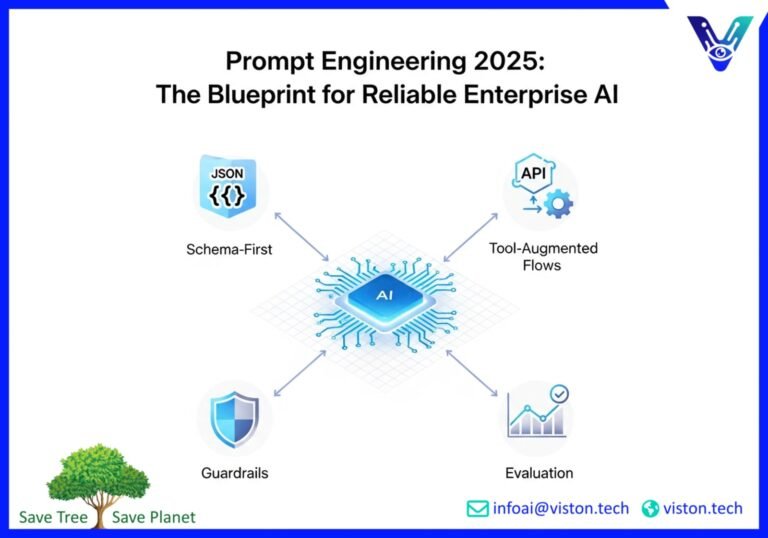

The challenge is clear: how do you transform a creative tool into a deterministic business process? How do you ensure your AI applications are not just impressive in a demo but consistently deliver accurate, safe, and compliant results at scale? The answer lies in moving from ad-hoc prompts to a structured framework built on four pillars: schema-first prompts, tool-augmented flows, robust guardrails, and rigorous evaluation.

This is not just a technical upgrade; it’s a strategic imperative. Companies that formalize their approach to prompt engineering are seeing a tangible return on investment, with some reporting up to a 340% higher return on their AI budget. This masterclass will guide you through the 2025 techniques that are turning AI’s promise into enterprise reality.

From Ad-Hoc to Architected: The Problem with Casual Prompting

In the early days of generative AI, success was often a matter of trial and error. A clever turn of phrase could coax a brilliant response from a large language model (LLM), but this approach is fraught with risk in an enterprise context:

- Inconsistency: Ad-hoc prompts produce variable outputs. The same question can yield different answers, making it impossible to build reliable workflows.

- Lack of Scalability: What works for one user on one task often fails when applied across the organization. This makes it difficult to scale AI-powered processes.

- Compliance and Safety Risks: Without a structured approach, AI models can generate responses that are inaccurate, biased, or violate regulatory requirements like GDPR or HIPAA.

- High Maintenance Costs: As models are updated or business requirements change, ad-hoc prompts often break, leading to a continuous and costly cycle of manual rework.

Gartner predicts that by the end of 2025, at least 30% of generative AI projects will be abandoned after the proof-of-concept stage due to these very issues. The “prompt-and-pray” model is simply not sustainable. To build enterprise-grade AI, we need to think like architects, not artists.

Schema-First Prompts: Laying the Foundation for Reliability

The first step in building a reliable AI system is to define the structure of both the input and the output. This is the core principle of schema-first prompting. Instead of relying on natural language instructions alone, you provide the LLM with a clear, machine-readable format, such as JSON, that dictates the expected data structure.

Think of it as giving the AI a blueprint. You’re not just asking it to write a report; you’re giving it a template with predefined fields like “title,” “author,” “summary,” and “key_findings.” This seemingly simple shift has profound implications for enterprise applications.

Why Schema-First Prompts are a Game-Changer

- Predictable Outputs: By defining the output schema, you ensure that the LLM’s response is always in a consistent, usable format. This is crucial for integrating AI into automated workflows, where data needs to be parsed and passed to other systems.

- Improved Accuracy: Schemas reduce ambiguity. When the model knows exactly what information it needs to provide, it is less likely to “hallucinate” or generate irrelevant content.

- Enhanced Developer Experience: For engineering teams, working with structured data is far more efficient than parsing unpredictable natural language. This accelerates development cycles and reduces integration costs. For an in-depth look at how schemas are revolutionizing database interactions, check out this guide on Schema Prompting.

Real-World Example: Financial Reporting

An unstructured prompt like, “Summarize the key findings from our Q4 financial report,” could produce anything from a single paragraph to a lengthy essay. In contrast, a schema-first prompt would look something like this:

Input Schema: A JSON object containing the raw financial data.

Output Schema:

{

"report_summary": {

"quarter": "Q4 2025",

"total_revenue": "float",

"net_profit": "float",

"key_highlights": [

"string",

"string"

],

"areas_for_improvement": [

"string"

]

}

}

This approach guarantees that the output is a structured, predictable, and immediately usable piece of data that can be fed into a dashboard, a presentation, or another automated process.

Tool-Augmented Flows: Giving Your AI Real-World Capabilities

Large language models are incredibly powerful, but their knowledge is limited to the data they were trained on. To perform enterprise tasks, they need to interact with the real world—accessing live data, executing actions in other software, and connecting to internal systems. This is where tool use and function calling come in.

Function calling is the ability of an LLM to intelligently decide when it needs to use an external tool to fulfill a request. You provide the model with a set of available “tools” (which are essentially API calls to other systems) and a description of what they do. The model can then choose the right tool for the job, extract the necessary information from the user’s prompt, and format it as a function call.

How Tool Use Transforms Enterprise Workflows

- Real-Time Data Access: An AI-powered chatbot can use a function call to a weather API to provide an up-to-the-minute forecast or query a CRM to retrieve the latest customer information.

- Automated Actions: A project management assistant can create a new task in Jira, send an email through Gmail, or schedule a meeting on Google Calendar by calling the appropriate APIs.

- Integration with Proprietary Systems: Enterprises can create custom tools that allow the LLM to interact with internal databases, inventory management systems, and other legacy software.

Example: An AI-Powered Customer Service Agent

A customer asks, “What’s the status of my order?” An LLM without tools can only provide a generic answer. A tool-augmented LLM, however, can:

- Recognize the need for a tool: It understands that to answer the question, it needs to look up order information.

- Select the right tool: It chooses the `getOrderStatus` function from its available tools.

- Extract the parameters: It identifies the order number from the customer’s query.

- Execute the function call: It calls the internal order management system’s API with the order number.

- Generate a response: It uses the information returned by the API to give the customer a precise and accurate update.

This ability to seamlessly blend natural language understanding with real-world actions is what makes tool-augmented flows so powerful for enterprise automation.

Guardrails: Ensuring Safety, Compliance, and Brand Alignment

As AI becomes more autonomous, the need for robust safety measures becomes paramount. AI guardrails are the policies, rules, and technical controls that ensure your AI systems operate within acceptable boundaries. In an enterprise setting, guardrails are not just a best practice; they are a necessity for risk management and regulatory compliance.

Think of guardrails as the bumpers in a bowling alley. They don’t interfere with the game, but they prevent the ball from going into the gutter. Similarly, AI guardrails steer the model away from producing harmful, inappropriate, or non-compliant outputs.

Key Types of Enterprise AI Guardrails

- Topic Restrictions: Preventing the AI from discussing sensitive or off-brand topics. For example, a financial services chatbot should be programmed to refuse to give investment advice.

- PII Redaction: Automatically identifying and masking personally identifiable information (PII) to comply with data privacy regulations like GDPR.

- Compliance Checks: Ensuring that AI-generated content, such as marketing copy or legal documents, adheres to industry-specific regulations.

- Tone and Style Alignment: Forcing the AI’s responses to conform to a predefined brand voice, whether it’s formal and professional or friendly and casual.

Implementing robust guardrails is particularly crucial in highly regulated industries. For a deeper dive into this topic, this article on AI Guardrails for Enterprise Security provides valuable insights.

Offline Evaluation Sets: The Key to Stability and Continuous Improvement

How do you know if your prompts are effective? How can you be sure that a change to a prompt won’t have unintended consequences? The answer is offline evaluation. This involves creating a standardized set of test cases—an “eval set”—that you can run your prompts against in a controlled environment before deploying them to production.

An offline eval set is like a final exam for your AI application. It allows you to benchmark performance, catch regressions, and iterate on your prompts with confidence.

Building an Effective Offline Evaluation Workflow

- Create a Diverse Dataset: Your eval set should include a wide range of inputs, covering common use cases, edge cases, and even potential adversarial attacks.

- Define Success Metrics: Determine what a “good” response looks like. This could be based on accuracy, relevance, tone, or adherence to a specific format.

- Automate the Testing Process: Integrate your evaluation workflow into your development pipeline so that every change to a prompt is automatically tested against your eval set.

- Review and Iterate: Use the results of your evaluations to identify weaknesses in your prompts and make data-driven improvements.

By adopting an offline evaluation workflow, you move from a subjective “I think this prompt is better” approach to an objective, metrics-driven process of continuous improvement. This is the hallmark of a mature, enterprise-grade AI practice.

The Future is Reliable: Your Path to Enterprise-Grade AI

The journey from ad-hoc prompting to a structured, evaluated, and tool-aware AI strategy is the defining challenge for enterprises in 2025. By embracing schema-first prompts, tool-augmented flows, robust guardrails, and offline evaluation, you can build AI solutions that are not just intelligent, but also reliable, scalable, and secure.

This is more than just a technical roadmap; it’s a blueprint for competitive advantage. The companies that master these prompt engineering 2025 techniques will be the ones that successfully integrate AI into their core business processes, unlocking unprecedented levels of efficiency, innovation, and value.

Ready to move beyond the hype and build AI solutions that deliver real enterprise results? The team at Viston AI specializes in developing custom, enterprise-grade AI-powered solutions. We can help you implement the strategies outlined in this masterclass and build an AI infrastructure that is reliable, secure, and tailored to your unique business needs.

Contact Viston AI today to schedule a consultation and take the first step towards building your future-proof AI strategy.

Frequently Asked Questions (FAQs)

-

1. What is prompt engineering?

- Prompt engineering is the process of designing and refining inputs (prompts) for large language models to ensure they produce accurate, relevant, and consistent outputs. In an enterprise context, it involves a structured approach to creating prompts that are reliable, scalable, and aligned with business objectives.

-

2. Why is a “schema-first” approach to prompting important?

- A schema-first approach involves defining a structured format (like JSON) for the AI’s output. This makes the responses predictable and easily integrable into automated workflows, which is essential for enterprise applications.

-

3. What is “function calling” in the context of AI?

- Function calling, or tool use, is the ability of an AI model to interact with external systems and APIs. It allows the AI to access real-time data, perform actions in other software, and connect to internal enterprise systems, greatly expanding its capabilities.

-

4. How do AI guardrails improve enterprise reliability?

- AI guardrails are rules and controls that ensure an AI operates within safe and compliant boundaries. They prevent the model from generating harmful, biased, or non-compliant content, which is critical for managing risk and maintaining brand reputation.

-

5. What is an offline evaluation set?

- An offline evaluation set is a standardized collection of test cases used to assess the performance of prompts before they are deployed. It allows for objective, data-driven improvement of AI systems and helps ensure stability and reliability.

-

6. How can I measure the ROI of prompt engineering?

- The ROI of prompt engineering can be measured through metrics like reduced operational costs from automation, increased productivity from streamlined workflows, higher accuracy in AI-generated content leading to less rework, and improved customer satisfaction. Some businesses report that a disciplined approach to prompt engineering can yield a significantly higher return on their AI investments.

-

7. Is prompt engineering a skill only for technical staff?

- While a technical understanding is beneficial for advanced techniques like schema design and function calling, the principles of clear, structured communication with AI are valuable for a wide range of roles, including product managers, marketers, and business analysts. The move towards more structured prompting actually makes AI more accessible to non-technical users by creating predictable and reliable systems.

-

8. How will prompt engineering evolve in the coming years?

- Prompt engineering will continue to become more sophisticated and integrated into the software development lifecycle. We can expect to see more advanced tools for prompt management, automated evaluation, and the development of even more complex tool-augmented and agentic AI systems that can handle multi-step, autonomous tasks.