The Agentic AI Trap: Why 40% of Initiatives Will Fail by 2027

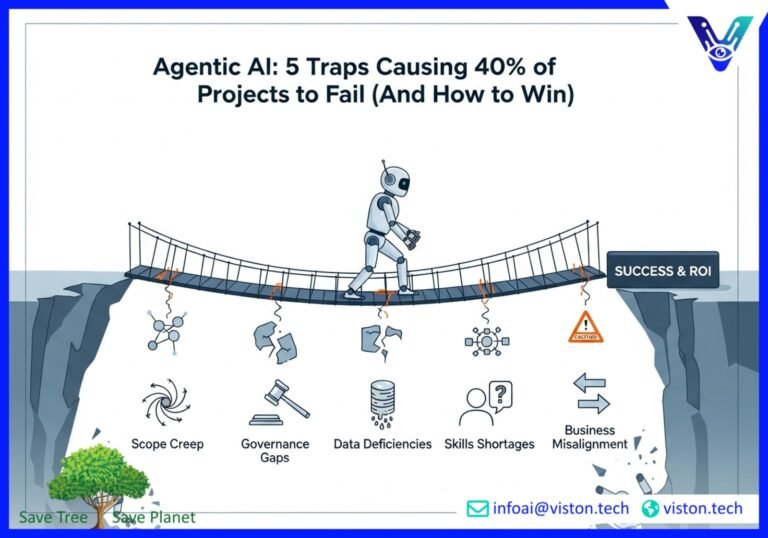

Artificial intelligence is no longer a far-off concept; it’s a present-day reality reshaping industries. At the forefront of this evolution is agentic AI: systems capable of taking autonomous actions to achieve goals. They promise major efficiency gains, from automating complex workflows to acting as autonomous customer service agents. Yet a stark warning comes from industry analyst Gartner: by the end of 2027, over 40% of agentic AI projects will be canceled.

The reasons are rooted less in the technology itself than in the gaps it exposes within organizations. Escalating costs, unclear return on investment (ROI), and inadequate risk management are creating a perfect storm. Many companies, swept up in the hype, are diving headfirst into agentic AI without checking for rocks below the surface. This isn’t just a bump in the road; it’s a critical strategic challenge. Ignoring these AI implementation risks is not an option if you want your project to be among those that survive. This post unpacks the five critical traps that lead to failure and provides a checklist to help your organization navigate the agentic AI landscape successfully.

Gartner’s Warning Unpacked: Beyond the Hype

The allure of agentic AI is undeniable. These systems, which can reason, plan, and execute multi-step tasks independently, feel like the ultimate productivity hack. Businesses are experimenting, launching proofs of concept to streamline everything from marketing campaigns to supply chain logistics. However, the transition from a controlled demo to a scalable, production-grade system is where the trouble begins.

Gartner’s prediction highlights a critical disconnect. The excitement for what’s possible is blinding leadership to the cost and complexity of what’s practical. Early-stage projects, often driven by hype, are being greenlit without a deep understanding of their total cost of ownership (TCO) or a clear, quantifiable business case. This leads to a painful realization down the line: the project is costing more than anticipated and delivering less value than promised. Without robust governance and risk management, these initiatives become expensive liabilities, leading boards and C-suites to pull the plug.

The 5 Failure Categories: Where AI Initiatives Go Wrong

To avoid becoming a statistic, it’s crucial to understand the specific failure modes that derail agentic AI projects. These aren’t just technical hiccups; they are deep-seated organizational challenges that require strategic intervention. Let’s explore the five most common traps.

1. The Unchecked Growth: Scope Creep

Scope creep is the silent project killer. It starts with a small, well-defined objective. Then, a stakeholder suggests a “minor” addition. Another team sees potential and requests a new feature. Soon, the project’s boundaries are a distant memory. In the world of agentic AI, this is particularly dangerous. Each new task or capability adds tools, permissions, and interactions that must be tested together, so complexity grows much faster than the feature list. What began as a project to automate invoice processing can morph into an attempt to build an all-knowing financial oracle.

Why it happens:

- Vague Initial Objectives: Starting an AI project with a fuzzy goal like “improve efficiency” is an open invitation for scope creep.

- Overenthusiastic Stakeholders: As stakeholders witness the AI’s capabilities, they often generate a flood of new ideas, overwhelming the original plan.

- Lack of a Strong Change Management Process: Without a formal process to evaluate, approve, and integrate new requests, the project’s scope expands without adjustments to budget or timelines.

The result is a project that is perpetually delayed, consistently over budget, and ultimately fails to deliver on its core promise because its resources are spread too thin across a mountain of new requirements.
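A formal change management process can be as simple as a rule that no request expands the scope until it has been evaluated against the original objective and its budget and timeline impact has been signed off. The sketch below is one minimal way to express that rule. The `ChangeRequest` fields, the `review` function, and the sample requests are hypothetical and only illustrate the idea; they do not describe any particular tool or methodology.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    """A proposed addition to the agent's scope (all fields are hypothetical)."""
    title: str
    supports_core_objective: bool  # does it serve the original, agreed goal?
    extra_cost: float              # estimated additional spend
    extra_weeks: int               # estimated additional delivery time
    budget_approved: bool          # has a sponsor funded the extra cost?
    timeline_approved: bool        # has the delivery date been formally moved?


def review(request: ChangeRequest) -> str:
    """Return 'approve', 'defer', or 'reject' for a scope change."""
    if not request.supports_core_objective:
        return "reject"  # outside the project's agreed boundaries
    needs_budget = request.extra_cost > 0 and not request.budget_approved
    needs_time = request.extra_weeks > 0 and not request.timeline_approved
    if needs_budget or needs_time:
        return "defer"  # scope may not grow until budget and timeline are adjusted
    return "approve"


# Illustrative requests against an invoice-processing project.
requests = [
    ChangeRequest("Handle credit notes", True, 20_000, 3, True, True),
    ChangeRequest("Forecast quarterly revenue", False, 150_000, 20, False, False),
    ChangeRequest("Support a second invoice format", True, 8_000, 2, False, True),
]
decisions = {r.title: review(r) for r in requests}
for title, decision in decisions.items():
    print(f"{title}: {decision}")
```

The point of the "defer" outcome is that a good idea is not refused, it simply waits until someone has agreed to pay for it and move the date.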

2. The Wild West: Governance Gaps

Imagine building a powerful new car without a steering wheel or brakes. That’s agentic AI without governance. These systems operate with a degree of autonomy that requires strict oversight. Without it, you expose your organization to significant risks, including data breaches, compliance violations, and reputational damage. An AI agent given access to sensitive customer data without proper controls could inadvertently expose that information. An agent making financial decisions without oversight could make a costly error.

Key Governance Gaps:

- Lack of Accountability: When an autonomous agent makes a mistake, who is responsible? The developer? The business unit using it? Without clear lines of ownership, it’s impossible to manage risk effectively.

- Regulatory Blind Spots: Regulations like GDPR and other data privacy laws are constantly evolving. AI governance frameworks must be agile enough to adapt, yet many companies lack a dedicated body to monitor and implement these changes. For a comprehensive overview of AI risk management, the NIST AI Risk Management Framework is an essential resource.

- Ethical Oversights: AI models can inherit and amplify biases present in their training data. Without an ethical review board or robust testing, you could deploy an agent that makes discriminatory decisions, leading to legal and brand crises.

Effective AI governance isn’t about stifling innovation; it’s about creating the guardrails that allow it to flourish safely and responsibly.
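In practice, guardrails often take the form of a policy check that sits between the agent and the systems it can touch. The following is a minimal sketch under stated assumptions: the action names, the 500.0 approval threshold, and the `Guardrail` class are all hypothetical, and a real policy would be set by your governance body rather than hard-coded.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical policy values, for illustration only.
ALLOWED_ACTIONS = {"read_invoice", "issue_refund"}
APPROVAL_THRESHOLD = 500.0  # refunds above this amount need a human sign-off


@dataclass
class Guardrail:
    owner: str  # the accountable person or team for this agent
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def check(self, action: str, amount: float = 0.0) -> str:
        """Decide whether an agent action may run, and record the decision."""
        if action not in ALLOWED_ACTIONS:
            decision = "blocked"
        elif action == "issue_refund" and amount > APPROVAL_THRESHOLD:
            decision = "needs_human_approval"
        else:
            decision = "allowed"
        # Every decision is logged against a named owner for accountability.
        self.audit_log.append(
            {"owner": self.owner, "action": action, "amount": amount, "decision": decision}
        )
        return decision


guardrail = Guardrail(owner="finance-operations")
small_refund = guardrail.check("issue_refund", amount=120.0)
large_refund = guardrail.check("issue_refund", amount=2_500.0)
export_attempt = guardrail.check("export_customer_data")
print(small_refund, large_refund, export_attempt)
```

Even a check this small answers two of the questions raised above: who owns the agent's decisions, and which actions require a human in the loop.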

3. The Flawed Foundation: Data Deficiencies

Data is the lifeblood of any AI system. For agentic AI, it’s the air it breathes. The quality of your data directly determines the performance and reliability of your AI agent. The old adage “garbage in, garbage out” has never been more relevant. Many organizations rush into AI development assuming their data is ready, only to discover a messy, siloed, and incomplete foundation.

Common Data Challenges:

- Poor Data Quality: Inaccurate, incomplete, or inconsistent data leads to an AI that learns the wrong lessons. An agent trained on flawed sales data will make flawed sales predictions.

- Data Silos: When data is locked away in different departments and incompatible systems, the AI cannot get a complete picture, limiting its ability to perform complex, cross-functional tasks.

- Bias in Datasets: If historical data reflects past biases (e.g., hiring practices), the AI will learn and perpetuate them. This creates significant ethical and legal risks.

A successful AI initiative must begin with a robust data strategy. This includes investing in data cleansing, integration, and establishing clear data governance policies to ensure your AI is built on a foundation of truth.
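A data audit does not have to start with heavy tooling. As a rough sketch, the check below counts missing required values and repeated record keys in a small set of records. The `audit` function, the field names, and the sample invoices are hypothetical; a real audit would run against your own schemas and cover far more than these two checks.

```python
from typing import Dict, List, Optional

Record = Dict[str, Optional[str]]


def audit(records: List[Record], required_fields: List[str], key_field: str) -> dict:
    """Count missing required values and repeated keys in a list of records."""
    missing = {
        name: sum(1 for record in records if not record.get(name))
        for name in required_fields
    }
    seen = set()
    duplicate_keys = 0
    for record in records:
        key = record.get(key_field)
        if not key:
            continue  # empty keys are skipped here rather than treated as duplicates
        if key in seen:
            duplicate_keys += 1
        seen.add(key)
    return {"total": len(records), "missing": missing, "duplicate_keys": duplicate_keys}


# Illustrative invoice records with one missing amount, one empty customer,
# and one repeated invoice ID.
invoices: List[Record] = [
    {"invoice_id": "A1", "customer": "Acme", "amount": "120.00"},
    {"invoice_id": "A2", "customer": "Globex", "amount": None},
    {"invoice_id": "A2", "customer": "Globex", "amount": "75.50"},
    {"invoice_id": "A3", "customer": "", "amount": "40.00"},
]
report = audit(invoices, ["invoice_id", "customer", "amount"], key_field="invoice_id")
print(report)
```

Running a check like this before development starts turns “is our data ready?” from an assumption into a number you can track.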

4. The People Problem: Skills Shortages

Agentic AI is a cutting-edge technology, and the talent required to build, manage, and scale it is scarce and expensive. The demand for AI specialists, data scientists, and ML engineers far outstrips the supply. This skills gap creates a major bottleneck for companies, slowing down development and increasing costs.

How the Skills Gap Manifests:

- Hiring Challenges: Companies find themselves in a fierce competition for a very small pool of qualified candidates.

- Over-reliance on a Few Experts: Often, AI knowledge is concentrated in a handful of individuals. If they leave, the project can grind to a halt.

- Lack of “AI-Literate” Leaders: It’s not just about technical skills. Business leaders need to understand the capabilities and limitations of AI to set realistic goals and make informed decisions. The World Economic Forum identifies adapting the workforce to collaborate with AI as a critical challenge; its analysis of the future of jobs covers this in more detail.

Addressing the skills gap requires a multi-faceted approach, including upskilling your current workforce, fostering a culture of continuous learning, and building strategic partnerships to bring in external expertise when needed.

5. The Disconnected Strategy: Business Alignment Failure

Ultimately, one of the most common reasons AI projects fail is also the simplest: they aren’t solving a valuable business problem. A technologically impressive AI agent that doesn’t contribute to key business objectives is just an expensive science project. This misalignment often stems from a disconnect between IT/data science teams and business leaders.

Symptoms of Misalignment:

- Unclear ROI: The project is initiated without a clear framework to measure its impact on revenue, cost savings, or customer satisfaction. When asked to justify its budget, the team can only point to technical achievements.

- Solving the Wrong Problem: The AI is designed to optimize a process that isn’t a strategic priority, leading to a solution that, while effective, has minimal impact on the bottom line.

- Lack of Stakeholder Buy-in: If business units don’t understand or believe in the value of the AI project, they won’t support its integration into their workflows, leading to poor adoption and eventual failure. IBM’s overview of AI adoption challenges explores this in more depth.

Success requires a purpose-driven AI strategy. Every initiative must begin with a clear business need and have a direct, measurable link to a strategic goal.
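A measurable link can start with simple arithmetic: ROI is the net benefit divided by the total cost. The sketch below works through a first-year estimate. Every figure in it is a made-up placeholder, and the `roi` helper is only an illustration; substitute your own cost and benefit estimates, and include run costs as well as build costs so the total reflects the true cost of ownership.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical first-year figures, for illustration only.
MONTHS = 12
build_cost = 200_000.0            # one-off development spend
monthly_run_cost = 5_000.0        # hosting, model usage, maintenance
monthly_cost_savings = 15_000.0   # e.g. manual processing time avoided
monthly_added_revenue = 10_000.0  # e.g. faster invoicing improving cash collection

total_cost = build_cost + monthly_run_cost * MONTHS
total_benefit = (monthly_cost_savings + monthly_added_revenue) * MONTHS
first_year_roi = roi(total_benefit, total_cost)
print(f"First-year ROI: {first_year_roi:.1%}")
```

If the team cannot fill in the benefit lines with numbers the business unit agrees with, that is an early sign of the misalignment described above.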

Your Prevention Checklist: A Framework for Success

Avoiding the agentic AI trap requires diligence and a strategic mindset. Use this checklist to pressure-test your initiatives and build a foundation for success.

1. Solidify Your Business Case

- ☑ Is the project solving a high-value, strategic business problem?

- ☑ Have you defined clear, measurable KPIs to track success (beyond technical metrics)?

- ☑ Can you articulate the expected ROI in terms of cost savings, revenue generation, or risk reduction?

2. Establish Robust Governance

- ☑ Is there a clear owner for the AI initiative with ultimate accountability?

- ☑ Have you established an AI governance committee or ethics board?

- ☑ Do you have a process for ensuring compliance with current and future regulations?

3. Get Your Data House in Order

- ☑ Have you conducted a thorough audit of your data quality and availability?

- ☑ Is there a clear data strategy in place for cleaning, integrating, and managing the data needed for the project?

- ☑ Have you assessed your data for potential biases and developed a mitigation plan?

4. Define and Control the Scope

- ☑ Is the project scope clearly defined with specific deliverables and boundaries?

- ☑ Do you have a formal change control process to manage new requests?

- ☑ Are all stakeholders aligned on the initial scope and objectives?

5. Address the Skills Gap

- ☑ Have you assessed your team’s current AI capabilities?

- ☑ Is there a plan for upskilling, reskilling, or hiring the necessary talent?

- ☑ Are you fostering a culture of AI literacy across the organization, including at the leadership level?

6. Prioritize Change Management

- ☑ Do you have a plan for how the AI agent will be integrated into existing workflows?

- ☑ Have you communicated the purpose and benefits of the AI to the employees who will be affected?

- ☑ Is there a feedback loop for users to report issues and suggest improvements?

Conclusion: Turn Risk into Reward

Gartner’s prediction is not a prophecy of doom but a call to action. The 40% failure rate is not an inevitability; it’s a warning against recklessness. Agentic AI holds immense potential to transform your business, drive innovation, and create a significant competitive advantage. But this potential can only be realized through a thoughtful, strategic, and disciplined approach.

By understanding the common traps, from scope creep and governance gaps to data deficiencies, skills shortages, and business misalignment, you can proactively address your organization’s unique AI implementation risks. Success isn’t about having the most advanced algorithm. It’s about aligning that technology with a clear business purpose, governing it responsibly, and empowering your people to use it effectively.

Don’t let your AI initiative become another statistic. Build a strategy that ensures you’re on the right side of the 40% divide.

Ready to navigate the complexities of agentic AI and build solutions that deliver real business value? The experts at Viston AI are here to help. We provide AI-powered solutions and strategic guidance to ensure your projects are set up for success from day one. Contact Viston AI today to de-risk your AI journey and unlock its full potential.


Frequently Asked Questions (FAQs)

1. What is agentic AI?

Agentic AI refers to artificial intelligence systems that can take autonomous actions to achieve specific goals. Unlike traditional AI models that simply provide predictions or classifications, agentic AI can reason, plan a sequence of steps, and use various tools (like APIs or software) to execute those steps without direct human intervention for each action.
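To make the plan-then-act pattern concrete, here is a deliberately simplified sketch. It is a toy, not a real agent: the `plan` function is hard-coded where a production system would typically use a model to decide the steps, and the tools (`lookup_order`, `draft_reply`) are hypothetical stand-ins for real APIs.

```python
from typing import Callable, Dict, List, Tuple


def lookup_order(order_id: object) -> object:
    """Hypothetical tool: pretend to fetch an order from a backend system."""
    return {"order_id": order_id, "status": "delayed"}


def draft_reply(order: object) -> object:
    """Hypothetical tool: turn order data into a customer-facing message."""
    return f"Order {order['order_id']} is {order['status']}."


TOOLS: Dict[str, Callable[[object], object]] = {
    "lookup_order": lookup_order,
    "draft_reply": draft_reply,
}


def plan(goal: str) -> List[str]:
    """Stand-in planner: a real agent would derive these steps from the goal."""
    return ["lookup_order", "draft_reply"]


def run_agent(goal: str, order_id: str, max_steps: int = 5) -> Tuple[object, List[str]]:
    """Execute the planned steps in order, feeding each result to the next tool."""
    result: object = order_id
    trace: List[str] = []
    for tool_name in plan(goal)[:max_steps]:  # cap the number of autonomous steps
        result = TOOLS[tool_name](result)
        trace.append(tool_name)
    return result, trace


reply, trace = run_agent("Tell the customer where their order is", "42")
print(reply)
print(trace)
```

The `trace` list and the `max_steps` cap hint at why governance matters: once steps run without a human approving each one, you need a record of what the agent did and a limit on how far it can go.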

2. Why is Gartner predicting such a high failure rate for agentic AI projects?

Gartner’s prediction is based on several key AI implementation risks. The primary reasons include the high and often underestimated costs of development and scaling, the difficulty in proving a clear and compelling return on investment (ROI), and a lack of robust governance and risk management frameworks to handle the complexities of autonomous systems.

3. What is the biggest non-technical risk in an AI implementation?

The biggest non-technical risk is the failure to align the AI initiative with core business objectives. If a project doesn’t solve a significant business problem or contribute to a strategic goal, it will be viewed as an expensive distraction and will likely lose funding and support, regardless of how technologically advanced it is.

4. How can we measure the ROI of an agentic AI project effectively?

Effective ROI measurement for agentic AI requires looking beyond simple cost-cutting metrics. You should establish clear KPIs before the project begins, focusing on areas like increased revenue, improved customer satisfaction scores, enhanced decision-making speed and quality, reduced error rates, and increased employee productivity on higher-value tasks.

5. What is the first step we should take to avoid our AI project failing?

The first and most critical step is to define a clear, specific, and valuable business problem you are trying to solve. Before any code is written, leadership and key stakeholders must agree on the project’s purpose, its intended impact, and how success will be measured. This ensures business alignment from the very beginning.

6. What are “governance gaps” in the context of AI?

Governance gaps refer to the lack of clear policies, processes, and accountability structures for overseeing AI systems. This includes having no defined ownership if an AI makes a mistake, not having a process to ensure the AI complies with legal and ethical standards, and lacking a framework to manage risks like data privacy and algorithmic bias.

7. How does poor data quality impact an agentic AI?

Poor data quality directly compromises an agentic AI’s ability to function effectively and reliably. If the AI is trained on inaccurate, incomplete, or biased data, its understanding of the world will be flawed. This will lead it to make poor decisions, execute incorrect actions, and produce untrustworthy outcomes, ultimately rendering the system useless or even harmful.

8. Can we start an agentic AI project without a dedicated in-house AI team?

While it’s possible to start with a small team or by partnering with external experts, a long-term, scalable agentic AI initiative requires developing in-house expertise. A common failure mode is underestimating the ongoing need for skilled professionals to manage, maintain, and improve the AI system after its initial deployment. A hybrid approach of building and buying talent is often most effective.