Stop guessing. Start engineering. Transform erratic LLM outputs into deterministic business value.

In the era of “Promptware,” your prompts are your codebase. Viston delivers 15+ years of technical excellence to 2,860+ clients across the USA, Europe, and Australia, turning raw language models into precision instruments. We don’t just write text; we architect semantic infrastructure. By hiring our expert prompt engineers, you gain access to a rigorous, engineering-first methodology that stabilizes agentic workflows, reduces token costs by up to 40%, and ensures zero-shot reliability for mission-critical applications.

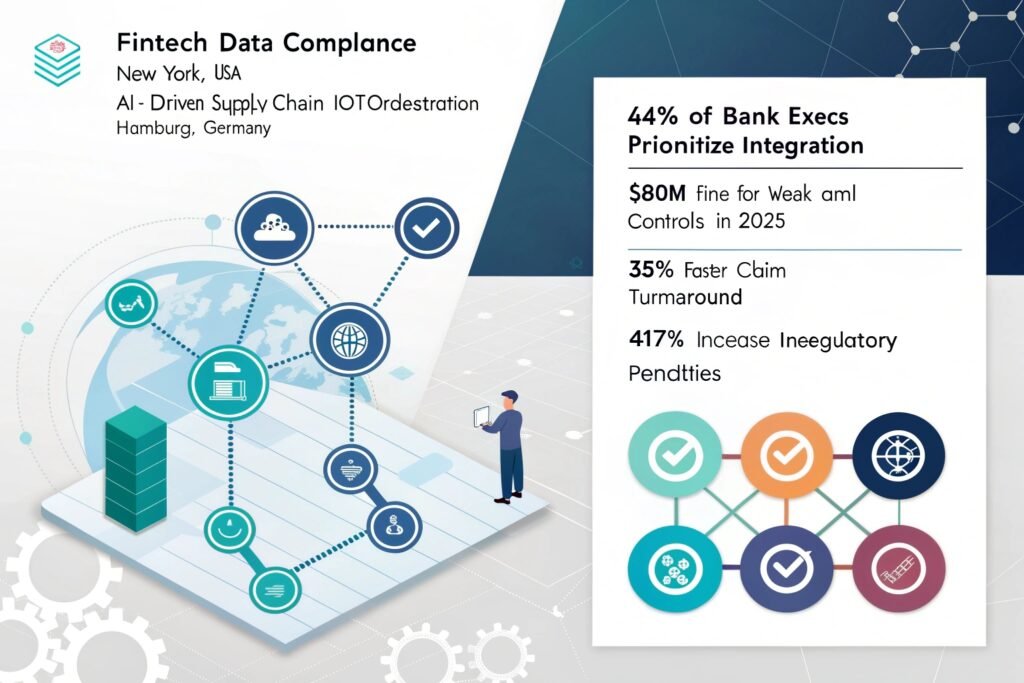

In 2026, treating prompt engineering as an art form is a liability; it must be an engineering discipline. Viston’s approach moves beyond basic “prompt crafting” to systematic Meta-Prompt Optimization and Gradient-Based Tuning. We treat prompts as executable code—versioning, testing, and optimizing them to ensure your AI agents behave predictably at scale. Whether you are deploying customer-facing agents in Fintech or predictive maintenance logs in Manufacturing, our engineers ensure your models understand context, nuance, and constraints without fail.

We deploy "LLM-as-a-Judge" pipelines to quantitatively score prompt performance before production.

Our architectures work across GPT-4, Claude 3.5, Llama 3, and proprietary enterprise models.

We implement "Tiered-Model Strategies" that route simple queries to cheaper models and complex reasoning to flagship LLMs.

Integrated PII sanitization and adversarial defense patterns embedded directly into prompt logic.

Experience

Availability

Deployments

Experience

Availability

Projects Completed

Experience

Availability

Projects Completed

GPT-4o

Claude 3.5

Llama 3

Mistral

DSPy

OpenAI

Anthropic API

REST

GraphQL

Hugging Face

AWS Bedrock

Azure AI

Vercel AI SDK

Docker

Kubernetes

Pinecone

Milvus

Weaviate

Elasticsearch

ChromaDB

AWS SageMaker

LangSmith

TruLens

Arize Phoenix

WhyLabs

FastAPI

Flask

GraphQL

gRPC

$22/hour

$2800/month

Custon Quote

Price: $22/hour

Best For: Maintenance, ad-hoc bug fixes, staff augmentation during peak periods

Engagement Type: Pay-as-you-go

Flexibility: Maximum flexibility – scale up or down instantly

Resource Allocation Time: Immediate

Project Manager: Not included

Account Manager: On-demand

QA Support: Not included

Post-Production Support: Available

Ideal Project Size: Small tasks, bug fixes, short-term needs

Billing Cycle: Weekly or bi-weekly

Contract Terms: No minimum commitment

Price: $2800/month

Best For: Long-term transformation, continuous workflow optimization

Engagement Type: Monthly retainer

Flexibility: Full integration with your team; retained knowledge of your business logic

Resource Allocation Time: 1–3 business days

Project Manager: Optional add-on

Account Manager: Allocated

QA Support: Available on request

Post-Production Support: 100% included

Ideal Project Size: Fixed-scope projects, large-scale migration, enterprise deployment

Billing Cycle: Monthly

Contract Terms: 3-month minimum recommended

Price: Custom Quote

Best For: Long-term digital transformation and center of excellence (CoE) setup

Engagement Type: Monthly retainer

Flexibility: Full-time certified developers with seamless DevOps integration

Resource Allocation Time: 3–5 business days

Project Manager: Included

Account Manager: Dedicated

QA Support: Included with guaranteed SLA

Post-Production Support: 100% included with delivery milestones

Ideal Project Size: Complex multi-phase projects, ongoing product development

Billing Cycle: Monthly

Contract Terms: 6-month minimum recommended

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

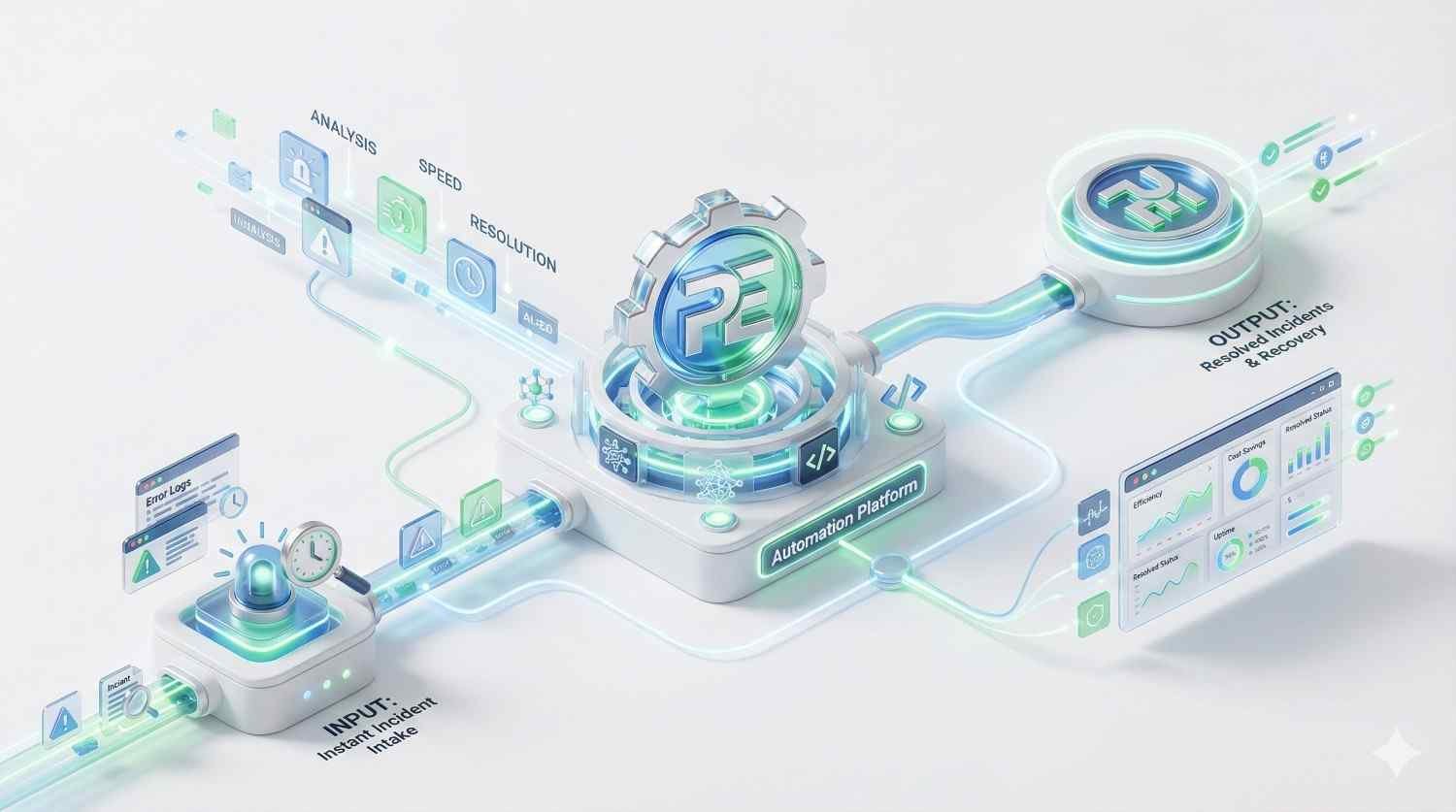

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.

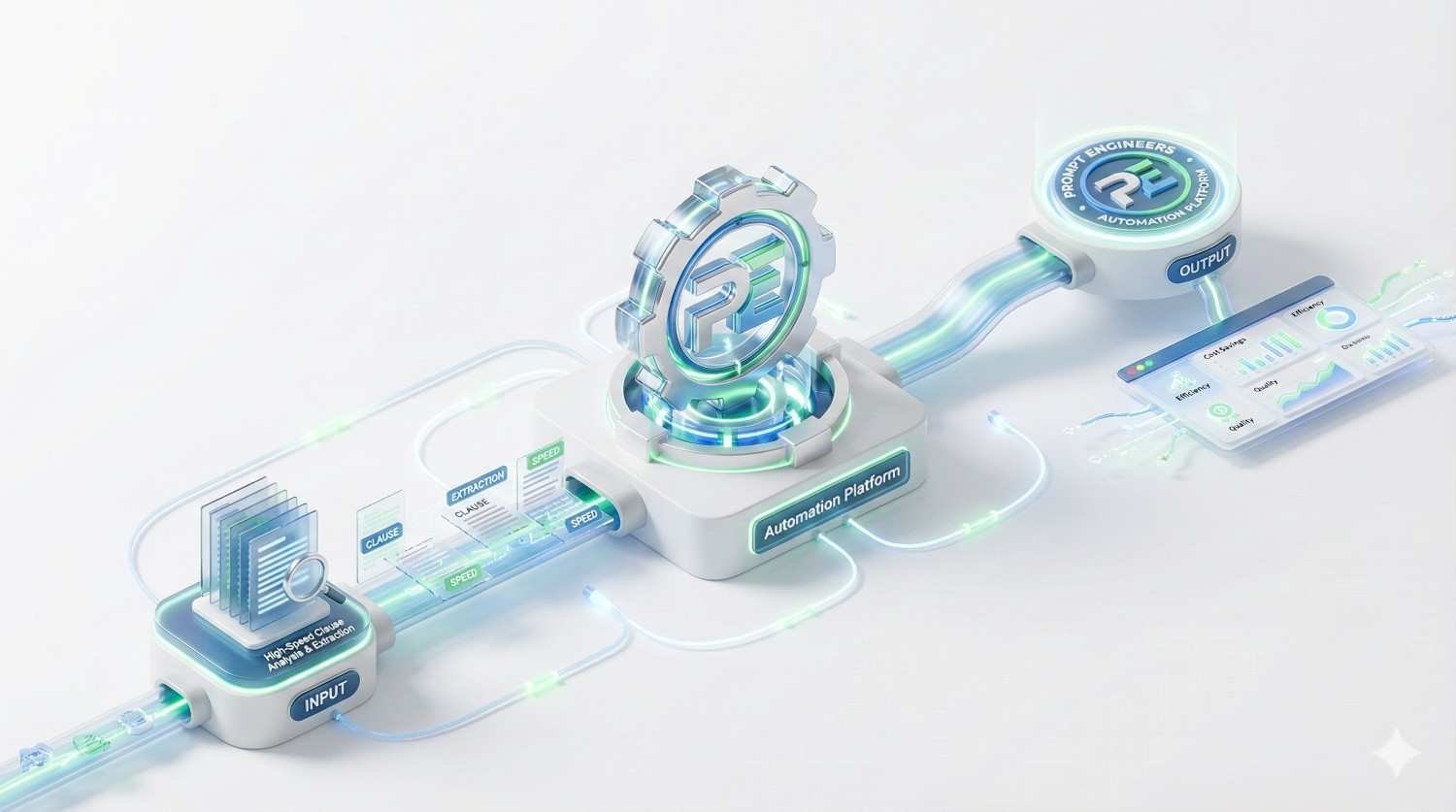

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Privacy Officer).

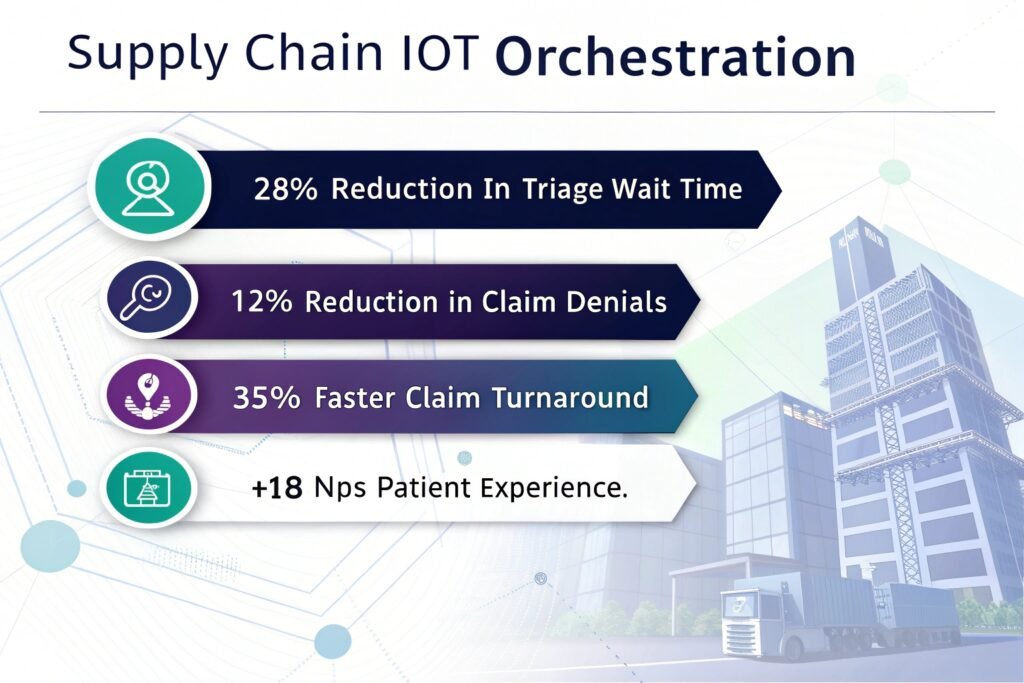

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.

Precision Cost Control

Stop overpaying for tokens. Our engineers are experts in “Prompt Compression” and model routing, ensuring you get GPT-4 quality at GPT-3.5 prices.

Metric-Driven Optimization

We don’t rely on “vibes.” Viston experts set up quantitative evaluation pipelines (accuracy, latency, faithfulness) to track prompt performance like traditional code.

Adversarial Hardening

Protect your brand. Our engineers proactively “Red Team” your prompts, injecting adversarial attacks to identify and patch vulnerabilities before deployment.

Context Window Mastery

Maximize the “needle in a haystack.” We use advanced techniques to structure long-context data, ensuring your model doesn’t “forget” critical middle instructions.

Cross-Regional Compliance

Deploy globally with confidence. Our experts build “Constitutional AI” guardrails that automatically adapt outputs to meet local regulations in the EU, USA, and Australia.

A prompt engineer specializes in the interaction layer between human intent and model behavior. While general developers focus on infrastructure, our Hire Prompt Engineers experts focus on semantic logic, ensuring the model actually understands and executes complex instructions reliably.

We define success metrics upfront: higher accuracy (%), reduced hallucination rates, lower cost-per-token, or faster user resolution times. We use tools like Ragas and LangSmith to report these metrics weekly.

Absolutely. In fact, we often recommend open-source models for privacy-sensitive B2B use cases. Our engineers are experts in fine-tuning prompts specifically for the unique tokenizers and attention patterns of open-source weights.

Yes. Viston operates under strict ISO 27001 certified processes. We design prompts that include PII scrubbing steps and can deploy “air-gapped” logic where sensitive data never leaves your secure VPC.

Most clients see a measurable improvement in output quality within the first sprint (2 weeks). For full architectural overhauls (like implementing a tiered router), expect a 4-6 week timeline to reach production maturity.

Yes. We can build “No-Code” prompt playgrounds for your subject matter experts (SMEs) to iterate on content, while our engineers handle the backend logic and optimization that connects those prompts to your app.

We offer ongoing “Prompt Maintenance” packages. As models update (e.g., GPT-5 release), prompts often drift in performance. We continuously monitor and re-optimize your libraries to maintain peak efficiency.

We have deep vertical expertise in Fintech (fraud detection), Healthcare (clinical coding), Legal (contract review), and E-commerce (personalized shopping agents).

Yes. We build systems that utilize cross-lingual embeddings and multi-lingual LLMs. This allows a single system to handle customer queries in English, Spanish, German, French, and more, often translating and processing intent in real-time without needing separate models for each language.

We don’t just translate; we “transcreate.” Our team includes native-level speakers for major European and Asian markets to ensure cultural nuances and idioms are correctly interpreted by the model in each region.

Ready to stop experimenting and start scaling? Join 2,860+ enterprise leaders who trust Viston for mission-critical AI. Whether you are in New York, Berlin, or Sydney, our 15+ years of expertise ensures your AI delivers real ROI.