Unlock Enterprise Velocity with Viston’s LLMOps & Agentic AI Experts

Scale your AI initiatives beyond simple chatbots into robust, stateful, and reliable multi-agent systems. Viston offers elite LangGraph developers for hire to engineering leaders and CTOs who demand deterministic control over their generative AI workflows. With 15+ years of expertise in data and ML engineering, we have helped more than 2,860 clients across the USA, Europe, and Australia move from experimental prototypes to mission-critical, production-grade agent networks.

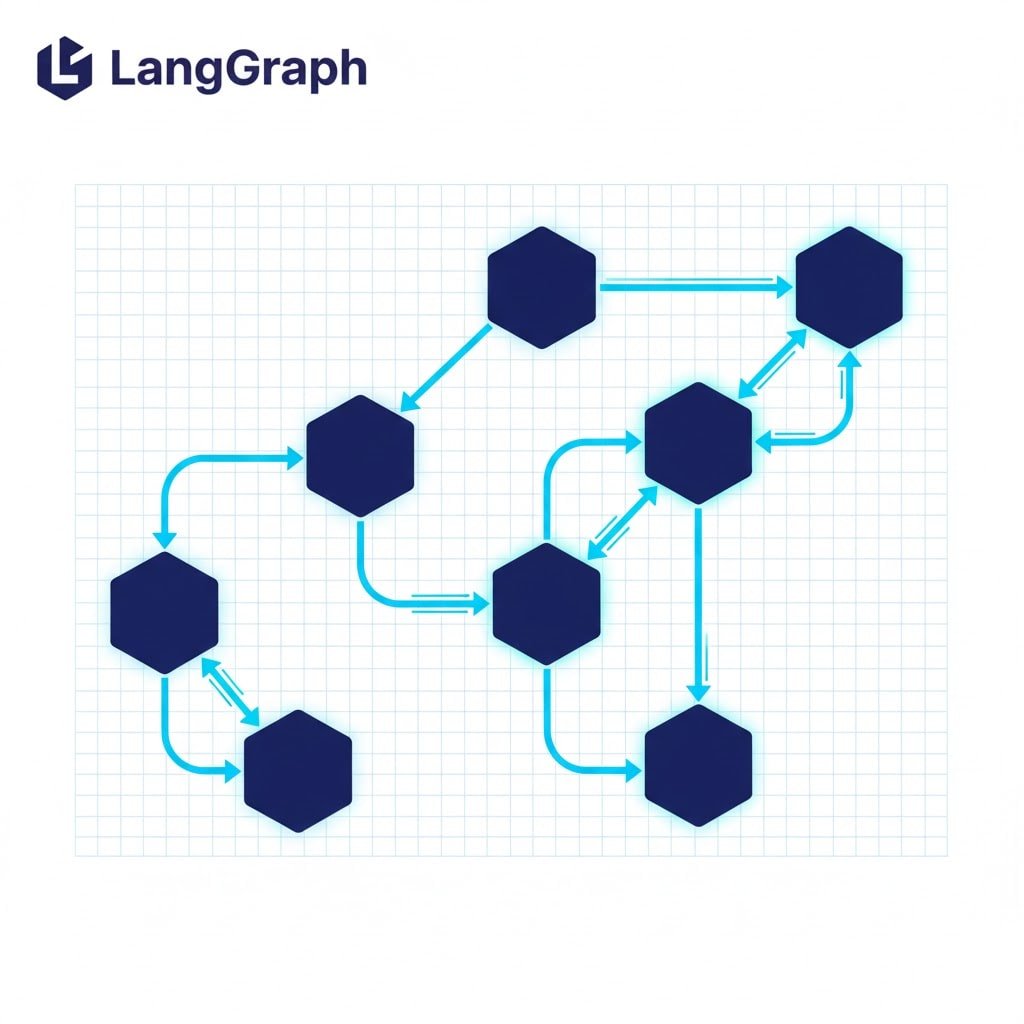

While basic LLM chains are sufficient for demos, real-world enterprise challenges such as cyclic dependencies, persistent memory, and human-in-the-loop validation require the kind of stateful orchestration LangGraph is built to provide. Our developers specialize in defining explicit control flows, so your AI agents behave predictably, maintain context over days or weeks, and integrate cleanly with your existing legacy infrastructure.

In the 2026 AI landscape, stateless “one-shot” LLM calls are no longer enough for complex business logic. Enterprises need agents that can plan, reflect, and iterate, which linear chains cannot do on their own. Hiring LangGraph developers allows you to build “cyclic” graphs where agents can loop back to previous steps to correct errors, ask for clarification, or refine outputs before final delivery.

Viston’s developers move you away from “black box” AI. We architect systems where you explicitly define the state schema and transition logic, giving you total visibility into why an agent made a specific decision.

Replace non-deterministic loops with explicit edges and conditional routing.

Enable long-running threads where agents "remember" context even after a server restart.

Coordinate specialized agents (e.g., a researcher, a writer, and a reviewer) in a unified graph.

Insert "breakpoints" where a human manager must approve sensitive actions (like executing a trade or sending an email).
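The “remember context even after a server restart” point comes down to checkpointing: saving the state of each conversation thread to durable storage after every step. LangGraph ships its own checkpointer classes; the sketch below does not use them. It is a framework-agnostic illustration under our own assumptions, where `SQLiteCheckpointer` and `add_message` are hypothetical names and SQLite stands in for whatever store you run in production.

```python
import json
import os
import sqlite3
import tempfile
from typing import Optional


class SQLiteCheckpointer:
    """Hypothetical helper that keeps the latest state of each thread on disk."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints "
            "(thread_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )
        self.conn.commit()

    def save(self, thread_id: str, state: dict) -> None:
        # Overwrite the previous checkpoint for this thread with the new JSON state.
        self.conn.execute(
            "INSERT OR REPLACE INTO checkpoints (thread_id, state) VALUES (?, ?)",
            (thread_id, json.dumps(state)),
        )
        self.conn.commit()

    def load(self, thread_id: str) -> Optional[dict]:
        row = self.conn.execute(
            "SELECT state FROM checkpoints WHERE thread_id = ?", (thread_id,)
        ).fetchone()
        return json.loads(row[0]) if row else None

    def close(self) -> None:
        self.conn.close()


def add_message(cp: SQLiteCheckpointer, thread_id: str, message: str) -> dict:
    # Resume from the last checkpoint (or start fresh), apply one step, checkpoint again.
    state = cp.load(thread_id) or {"messages": []}
    state["messages"].append(message)
    cp.save(thread_id, state)
    return state


if __name__ == "__main__":
    db_path = os.path.join(tempfile.mkdtemp(), "checkpoints.db")
    first = SQLiteCheckpointer(db_path)
    add_message(first, "thread-42", "Remind me to renew the vendor contract.")
    first.close()  # simulate a server restart
    second = SQLiteCheckpointer(db_path)
    print(second.load("thread-42"))
```

Because every step ends with a save, a new process only needs the thread ID to pick up exactly where the previous one stopped.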


LangChain

LangGraph

LlamaIndex

AutoGen

AutoGPT

OpenAI

Hugging Face

Llama 3

Anthropic

Mistral

Pinecone

Milvus

Weaviate

ChromaDB

Qdrant

Python

FastAPI

Django

Node.js

GraphQL

AWS Bedrock

Azure OpenAI

Docker

Google Vertex AI

LangSmith

Arize Phoenix

Weights & Biases

ServiceNow

GitHub Actions

$22/hour

$2800/month

Custom Quote

Price: $22/hour

Best For: Maintenance, ad-hoc bug fixes, staff augmentation during peak periods

Engagement Type: Pay-as-you-go

Flexibility: Maximum flexibility – scale up or down instantly

Resource Allocation Time: Immediate

Project Manager: Not included

Account Manager: On-demand

QA Support: Not included

Post-Production Support: Available

Ideal Project Size: Small tasks, bug fixes, short-term needs

Billing Cycle: Weekly or bi-weekly

Contract Terms: No minimum commitment

Price: $2800/month

Best For: Long-term transformation, continuous workflow optimization

Engagement Type: Monthly retainer

Flexibility: Full integration with your team; retained knowledge of your business logic

Resource Allocation Time: 1–3 business days

Project Manager: Optional add-on

Account Manager: Allocated

QA Support: Available on request

Post-Production Support: 100% included

Ideal Project Size: Fixed-scope projects, large-scale migration, enterprise deployment

Billing Cycle: Monthly

Contract Terms: 3-month minimum recommended

Price: Custom Quote

Best For: Long-term digital transformation and center of excellence (CoE) setup

Engagement Type: Monthly retainer

Flexibility: Full-time certified developers with seamless DevOps integration

Resource Allocation Time: 3–5 business days

Project Manager: Included

Account Manager: Dedicated

QA Support: Included with guaranteed SLA

Post-Production Support: 100% included with delivery milestones

Ideal Project Size: Complex multi-phase projects, ongoing product development

Billing Cycle: Monthly

Contract Terms: 6-month minimum recommended

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.
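The retrieval step in this workflow ranks stored documents by similarity to the ticket and places the best matches into the LLM prompt. The sketch below is a toy stand-in under stated assumptions: term-count vectors replace real embeddings, an in-memory list replaces Pinecone, and the helper names and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    # Toy "embedding": lowercase term counts. A real pipeline would call an embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    # Rank every document by cosine similarity to the query and keep the top k.
    q = vectorize(query)
    return sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]


def build_prompt(ticket: str, docs: list[str]) -> str:
    # Ground the drafted reply in the retrieved internal documentation.
    context = "\n".join(f"- {d}" for d in retrieve(ticket, docs))
    return (
        "Answer the support ticket using only the context below.\n"
        f"Context:\n{context}\n\nTicket: {ticket}\nDraft reply:"
    )
```

The drafted reply would then go to the helpdesk for a human to approve, as described above.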

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
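The transformation described here (the n8n workflow does it in JavaScript) flattens nested line items into ERP rows. The sketch below shows the same idea in Python for consistency with the other examples on this page. Every field name is hypothetical and does not reflect the real Salesforce, SAP, or NetSuite schemas.

```python
from typing import Any, Dict, List


def to_erp_rows(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten a hypothetical CRM order payload into hypothetical ERP line rows."""
    order = payload["order"]
    rows = []
    for item in order.get("lineItems", []):
        qty = int(item["quantity"])
        # Nested array: a line item may be split across several warehouses.
        allocations = item.get("allocations") or [{"warehouse": "DEFAULT", "qty": qty}]
        allocated = sum(int(a["qty"]) for a in allocations)
        if allocated != qty:
            # Refuse to sync rather than let inventory drift from the sales commitment.
            raise ValueError(f"{item['sku']}: ordered {qty}, allocated {allocated}")
        for alloc in allocations:
            rows.append({
                "ORDER_REF": order["id"],
                "SKU": item["sku"].strip().upper(),
                "WAREHOUSE": alloc["warehouse"],
                "QTY": int(alloc["qty"]),
            })
    return rows
```

Rejecting a mismatched payload outright, instead of writing a partial update, is one way to keep inventory counts consistent with what sales has committed.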

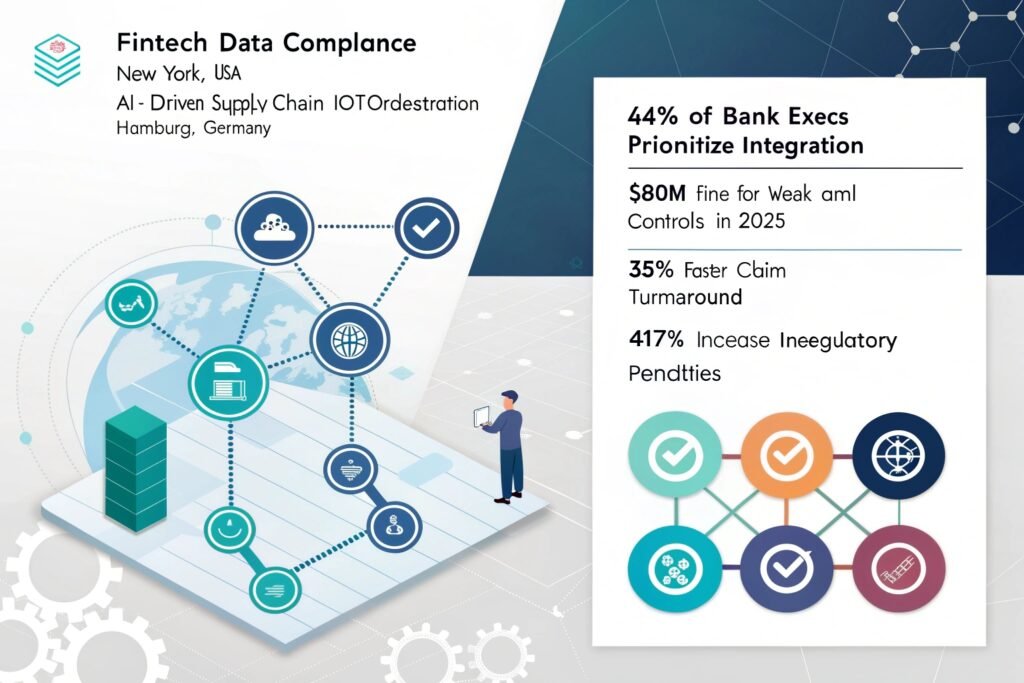

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold storage bucket while notifying the DPO (Data Protection Officer).

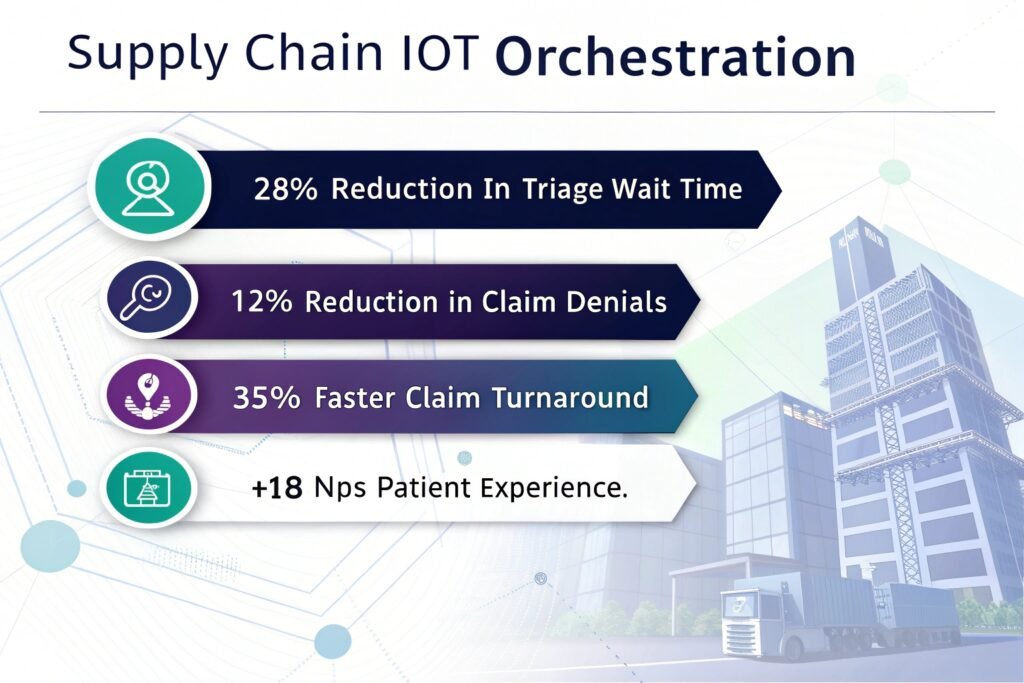

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
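The source does not specify which statistical deviation model runs in the Python node. A rolling z-score is one common lightweight choice, and the sketch below assumes it; the class name, window size, and threshold are illustrative, not values from this project.

```python
from collections import deque
from statistics import mean, pstdev


class DeviationDetector:
    """Rolling z-score detector; window and threshold are illustrative defaults."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.readings = deque(maxlen=window)  # oldest readings fall off automatically
        self.threshold = threshold

    def check(self, value: float) -> bool:
        """Return True if value deviates more than `threshold` std devs from the window."""
        breached = False
        if len(self.readings) >= 2:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma == 0:
                # A perfectly flat window: any change at all counts as a deviation.
                breached = value != mu
            else:
                breached = abs(value - mu) / sigma > self.threshold
        self.readings.append(value)
        return breached
```

A `True` result is where the workflow would raise the PagerDuty alert and open the Jira work order. Note that this sketch adds anomalous readings to the window too; excluding them is a reasonable alternative if you want the baseline to stay clean.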

LangChain is great for linear sequences (DAGs), but LangGraph introduces cycles, allowing agents to loop, retry, and self-correct. This is essential for building autonomous agents that need to iterate on tasks rather than just executing a single pass.
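The difference is easiest to see in a tiny graph with a back edge. The plain-Python sketch below is not LangGraph's API (there, the router function would be registered with `add_conditional_edges`); the node names, state fields, and stub “agents” are hypothetical stand-ins for LLM calls.

```python
from typing import Callable, TypedDict, Union

END = "__end__"


class DraftState(TypedDict):
    topic: str
    draft: str
    feedback: str
    revisions: int


def writer(state: DraftState) -> DraftState:
    # Stub agent: incorporates any reviewer feedback into the next draft.
    draft = f"Report on {state['topic']}"
    if state["feedback"]:
        draft += f" (revised: {state['feedback']})"
    return {**state, "draft": draft, "revisions": state["revisions"] + 1}


def reviewer(state: DraftState) -> DraftState:
    # Stub check: asks for sources until the draft has been revised once.
    feedback = "" if "revised" in state["draft"] else "add sources"
    return {**state, "feedback": feedback}


def route_after_review(state: DraftState) -> str:
    # Conditional edge: loop back to the writer while feedback remains.
    return "writer" if state["feedback"] else END


NODES: dict[str, Callable[[DraftState], DraftState]] = {"writer": writer, "reviewer": reviewer}
EDGES: dict[str, Union[str, Callable[[DraftState], str]]] = {
    "writer": "reviewer",             # fixed edge
    "reviewer": route_after_review,   # conditional edge that can form a cycle
}


def run(state: DraftState, start: str = "writer") -> DraftState:
    node = start
    while node != END:
        state = NODES[node](state)
        nxt = EDGES[node]
        node = nxt(state) if callable(nxt) else nxt
    return state
```

A linear chain would stop after the first review; here the reviewer's feedback sends control back to the writer. A production loop also needs a step cap so a stubborn reviewer cannot keep it running forever.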

Yes. We build “Tool Nodes” that let the agent query your SQL databases securely. We can enforce read-only permissions and add validation steps that reduce the risk of the agent fabricating data or executing harmful queries.
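A minimal sketch of such a tool, assuming SQLite as the database: the connection is locked with SQLite's `query_only` pragma, and the agent's SQL is checked before it runs. `lock_read_only` and `sql_tool` are hypothetical names, and the SELECT-only rule is deliberately conservative (it also rejects CTEs that start with `WITH`).

```python
import re
import sqlite3

# Conservative allow-list: the statement must start with SELECT.
_SELECT_ONLY = re.compile(r"^\s*select\b", re.IGNORECASE)


def lock_read_only(conn: sqlite3.Connection) -> sqlite3.Connection:
    # With query_only on, SQLite rejects any statement that would modify the database.
    conn.execute("PRAGMA query_only = ON")
    return conn


def sql_tool(conn: sqlite3.Connection, query: str, max_rows: int = 50) -> list:
    """Hypothetical tool node: validate the agent's SQL, then run it read-only."""
    if ";" in query.strip().rstrip(";"):
        raise ValueError("only a single statement is allowed")
    if not _SELECT_ONLY.match(query):
        raise ValueError("only SELECT queries are allowed")
    # Cap the result size so a broad query cannot flood the agent's context.
    return conn.execute(query).fetchmany(max_rows)
```

The two layers are independent: validation catches obviously unsafe requests with a clear error the agent can react to, while the database-level lock still blocks writes if something slips past the check.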

We use a “Validation Node” pattern. After an agent generates an answer, a second step (or a separate agent) validates it against source documents or strict rules. If it fails, the graph loops back to regenerate the answer, significantly reducing error rates.
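One simple rule a validation node might enforce is that every figure in the answer must appear in the source documents. The sketch below assumes that rule; real validators are usually richer. `generate` stands in for an LLM call and, like the other helper names, is hypothetical.

```python
import re
from typing import Callable


def numbers_in(text: str) -> set[str]:
    return set(re.findall(r"\d+(?:\.\d+)?", text))


def validate(answer: str, sources: list[str]) -> list[str]:
    """Return figures in the answer that no source document contains."""
    supported = set().union(*(numbers_in(s) for s in sources))
    return sorted(numbers_in(answer) - supported)


def generate_validated(generate: Callable[[str], str], sources: list[str],
                       max_attempts: int = 3) -> str:
    feedback = ""
    for _ in range(max_attempts):
        answer = generate(feedback)
        unsupported = validate(answer, sources)
        if not unsupported:
            return answer
        # Loop back: tell the generator which figures it could not ground.
        feedback = "Unsupported figures: " + ", ".join(unsupported)
    raise RuntimeError("answer failed validation; escalate to a human reviewer")
```

Passing the validator's findings back as feedback gives the regeneration step something specific to fix, instead of simply sampling again.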

It depends on the model. We can configure LangGraph to use private models hosted on Azure/AWS or open-source models (like Llama 3) hosted on your own VPC, ensuring zero data leakage to public API providers.

Absolutely. LangGraph supports this “human-in-the-loop” pattern natively. We can set breakpoints before critical actions (like sending an email) so the graph pauses until a human approves the step, for example through an API call.
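LangGraph provides this through interrupts (for example, `interrupt_before` when compiling a graph). The plain-Python sketch below only shows the underlying idea and is not LangGraph's API: the run stops before any step marked sensitive, records why, and continues only when resumed with an approval. `Run`, `advance`, and the step names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """Hypothetical run record: a linear plan with breakpoints before sensitive steps."""
    steps: list[str]
    sensitive: set[str]
    position: int = 0
    log: list[str] = field(default_factory=list)
    status: str = "running"


def advance(run: Run, approved: bool = False) -> Run:
    while run.position < len(run.steps):
        step = run.steps[run.position]
        if step in run.sensitive and not approved:
            # Breakpoint: stop here; the run can be persisted and resumed later.
            run.status = f"awaiting_approval:{step}"
            return run
        run.log.append(f"executed {step}")
        approved = False  # one approval covers exactly one paused step
        run.position += 1
    run.status = "done"
    return run
```

In practice the paused run would be checkpointed, and the human's approval (from a dashboard or an API call) would trigger `advance(run, approved=True)`.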

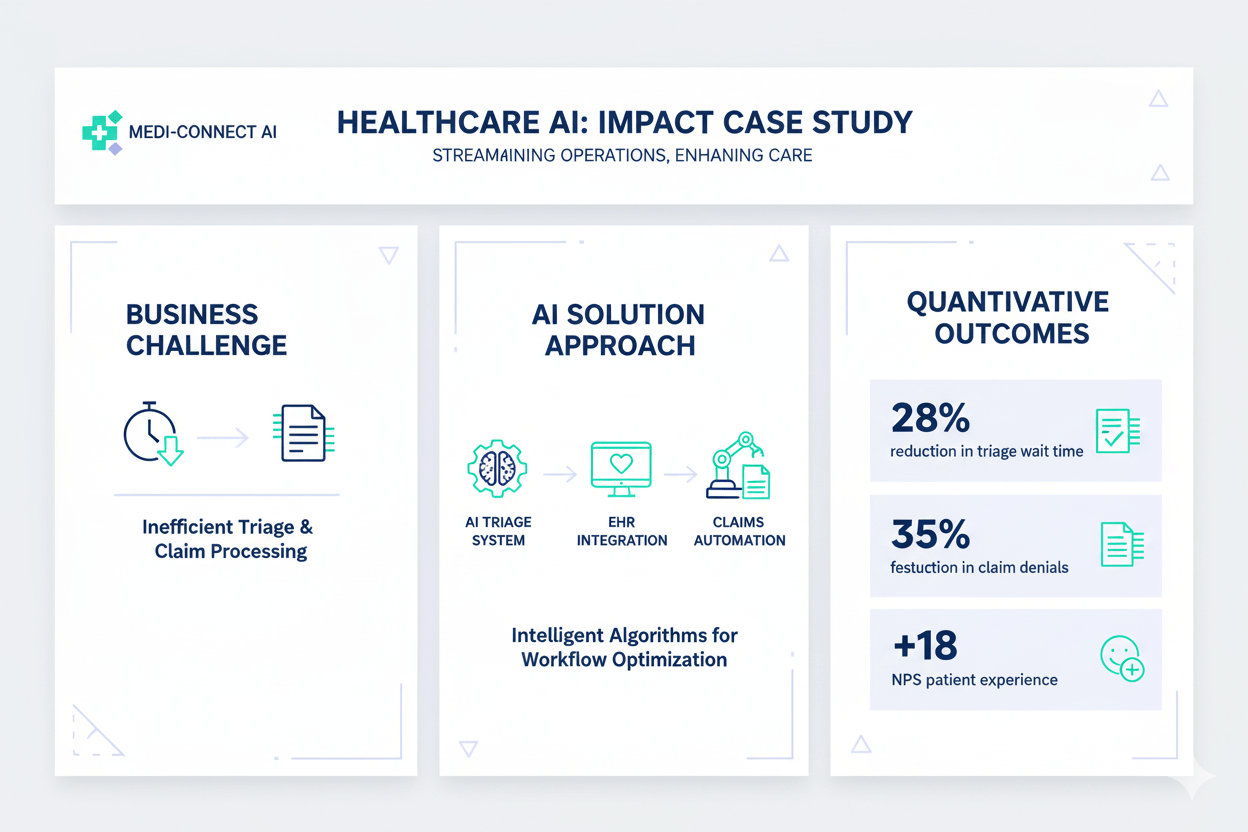

We have deep experience in Fintech (audit agents), Healthcare (clinical trial summaries), Logistics (supply chain routing), and Legal Tech (contract review agents).

We can typically deploy a senior developer within 3-5 business days. For full squads (3+ devs), we usually require 2 weeks to align the right mix of skills.

Yes. Our team is proficient in using tools like Ollama and vLLM to run powerful open-source models locally, orchestrated by LangGraph, for complete data sovereignty.

We define “recursion limits” and escape conditions in the graph configuration. If an agent fails to complete a task after N attempts, the flow is routed to a fallback node or escalated to a human.
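LangGraph accepts a `recursion_limit` in the run configuration. The plain-Python sketch below is our own illustration of the escape-condition idea, not that mechanism: a step budget wraps the whole graph, and when it runs out the state is handed to a fallback node instead of looping further. All node names are hypothetical.

```python
from typing import Callable, Dict, Tuple

END = "__end__"
# A node takes the state and returns (updated_state, name_of_next_node).
Node = Callable[[dict], Tuple[dict, str]]


def run_graph(nodes: Dict[str, Node], start: str, state: dict,
              max_steps: int, fallback: str) -> dict:
    """Run nodes until END; once max_steps is exhausted, hand off to `fallback` and stop."""
    node, steps = start, 0
    while node != END:
        if steps >= max_steps:
            # Escape condition: record where we stopped, run the fallback once, finish.
            state, _ = nodes[fallback]({**state, "escalated_from": node})
            return state
        state, node = nodes[node](state)
        steps += 1
    return state


# Hypothetical nodes for illustration.
def flaky_agent(state: dict) -> Tuple[dict, str]:
    attempts = state.get("attempts", 0) + 1
    done = state.get("succeeds_on") == attempts
    return {**state, "attempts": attempts}, (END if done else "flaky_agent")


def escalate_to_human(state: dict) -> Tuple[dict, str]:
    return {**state, "status": "escalated to human"}, END


NODES = {"flaky_agent": flaky_agent, "escalate_to_human": escalate_to_human}
```

Running the fallback exactly once and then stopping means a fallback node that routes somewhere unexpected cannot restart the loop.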

Yes. We offer ongoing “LLMOps as a Service” packages to monitor agent performance, update prompts as models evolve, and ensure the infrastructure remains stable.

Don’t let your AI strategy stall at the prototype phase. Partner with Viston to build resilient, self-correcting, and profitable AI agents. Join 2,860+ satisfied clients who trust our 15+ years of engineering excellence.