At Viston, we don’t just integrate API keys; we engineer intelligent ecosystems. With 15+ years of technical expertise and a track record of serving 2,860+ clients across the USA, UK, Germany, France, and Australia, we are the premier partner for companies seeking to hire LangChain developers who understand the nuance of Generative AI.

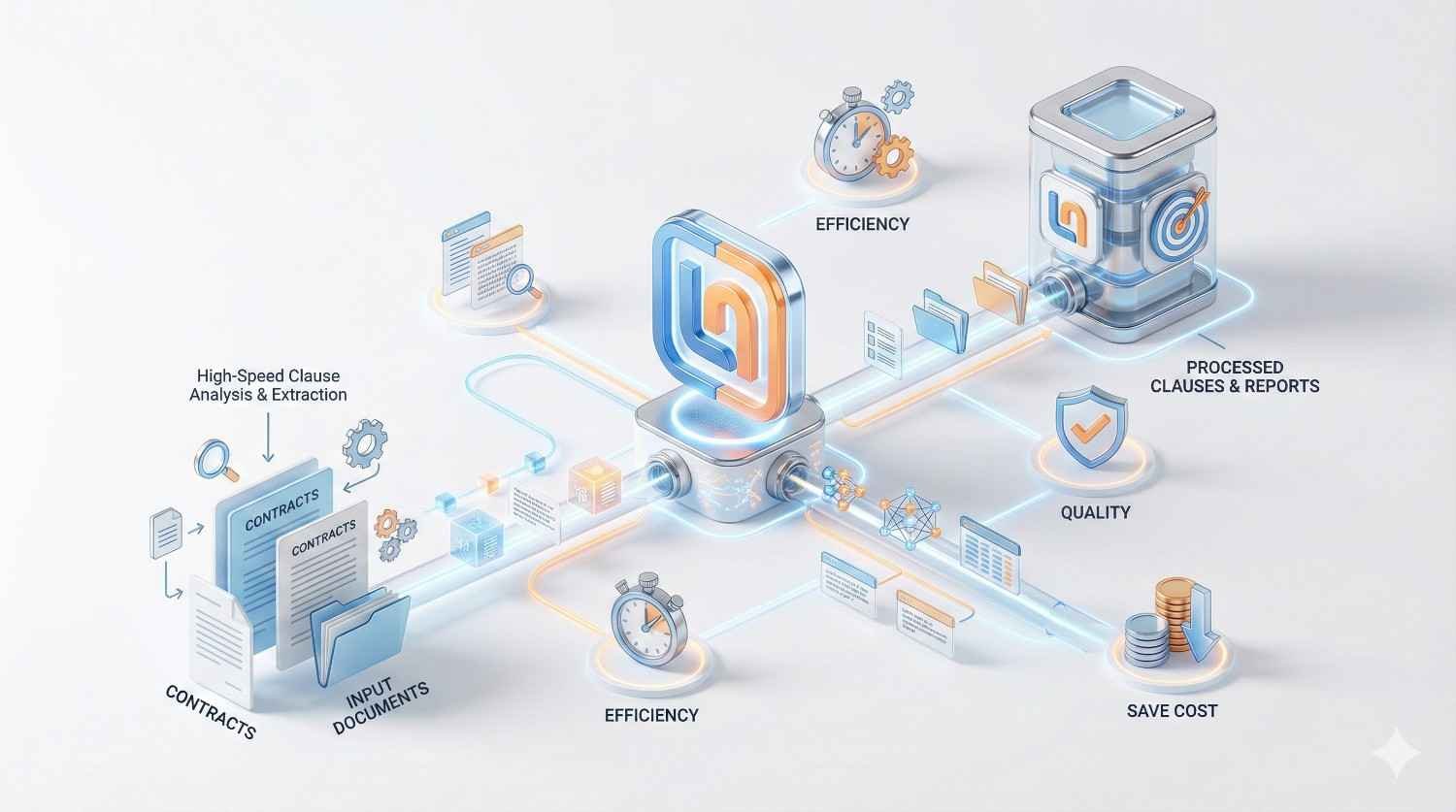

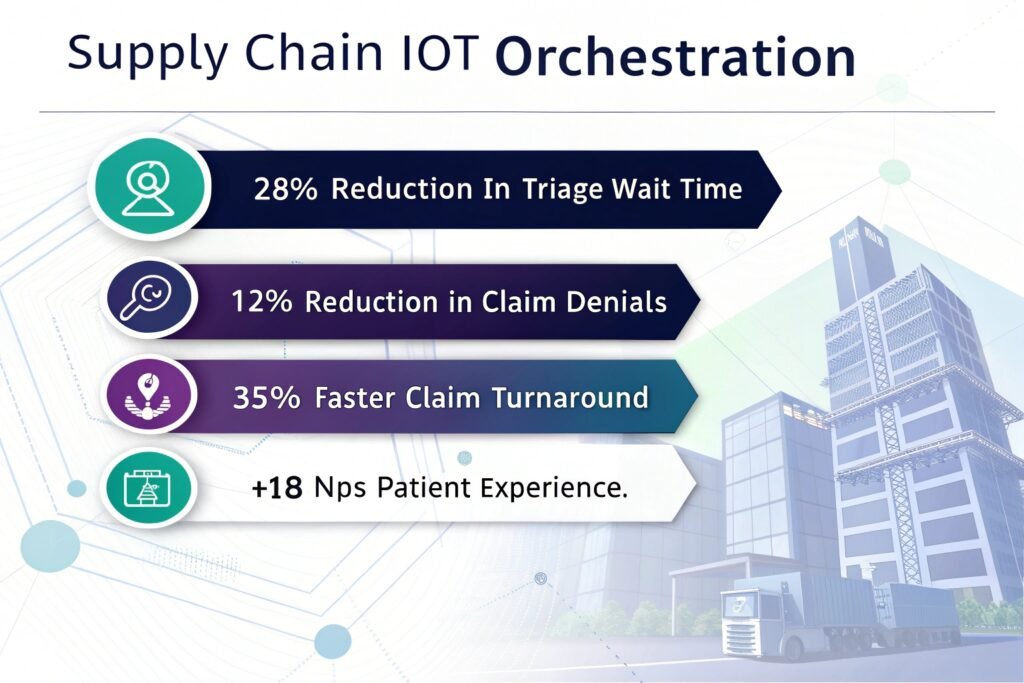

Our developers specialize in the LangChain framework, using it to abstract away complexity and deliver “LLMOps in a Box” solutions that bridge the gap between raw language models and actionable business logic. From building context-aware chatbots to deploying autonomous agents that manage supply chains, Viston provides the specialized talent needed to turn AI potential into competitive velocity.

In the rapidly evolving landscape of Generative AI, standard integration is no longer enough. To achieve Enterprise Velocity, you need infrastructure that supports memory, context, and multi-step reasoning. When you hire LangChain developers from Viston, you are securing experts capable of transforming siloed LLM interactions into cohesive, stateful applications.

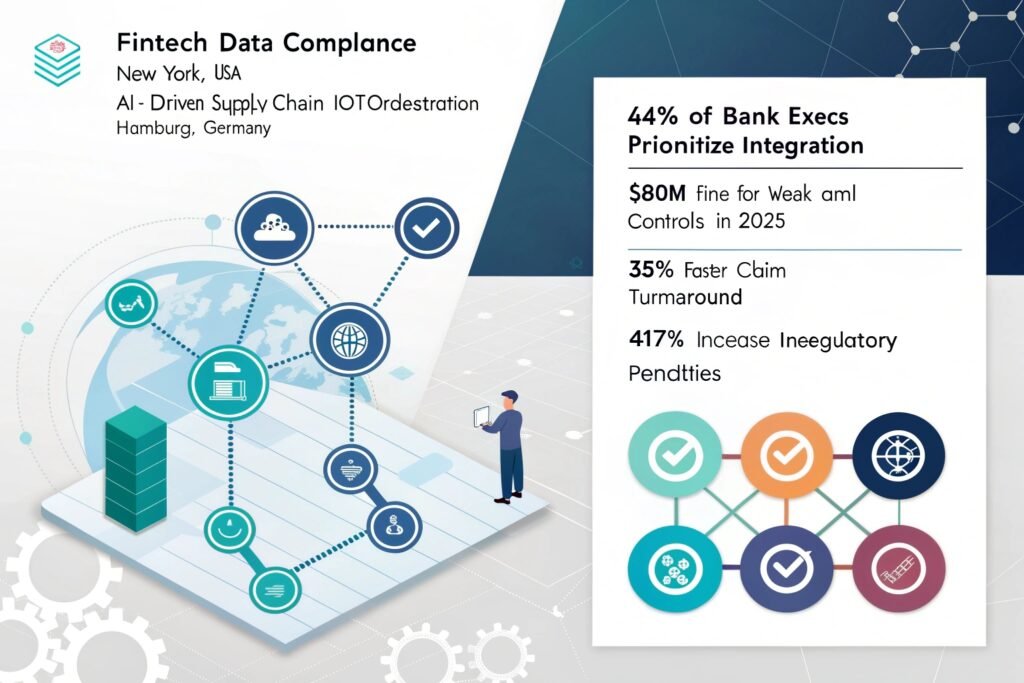

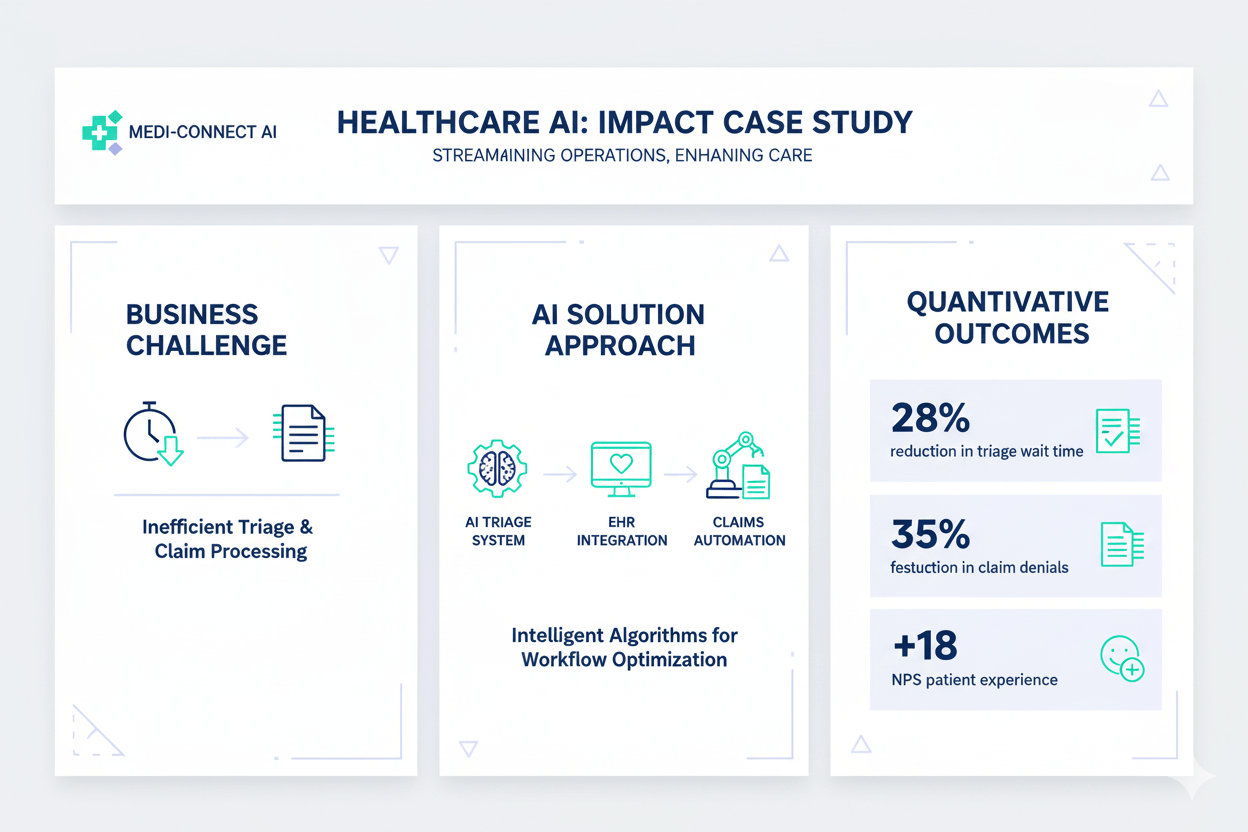

We move beyond basic prompting to build “End-to-End LLMOps platforms.” Our engineers utilize LangChain’s modular architecture to chain together multiple components—prompts, models, and output parsers—creating robust pipelines that solve real-world business problems. Whether you operate in Fintech, Healthcare, or SaaS, our global talent pool ensures your AI initiatives are scalable, compliant, and deployed with edge-ready efficiency.
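As a rough illustration of the prompt, model, and output-parser chain, here is a minimal, framework-free Python sketch of the same composition pattern. It is not LangChain's API: the `Step` class is a hypothetical stand-in that only mimics the pipe-style composition LangChain Expression Language offers with `|`, and `fake_model` replaces a real LLM call.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    """One pipeline stage wrapping a plain function (hypothetical, not LangChain's Runnable)."""
    fn: Callable[[Any], Any]

    def __or__(self, other: "Step") -> "Step":
        # Compose left to right: the output of self becomes the input of other.
        return Step(lambda x: other.fn(self.fn(x)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Stage 1: prompt template fills the user's input into a fixed instruction.
prompt = Step(lambda d: f"Classify the sentiment of: {d['text']}\nAnswer with one word.")


# Stage 2: hypothetical model; a real chain would call an LLM provider here.
def fake_model(prompt_text: str) -> str:
    return "  Positive\n" if "love" in prompt_text.lower() else "  Negative\n"


model = Step(fake_model)

# Stage 3: output parser normalizes the raw completion into a clean label.
parser = Step(lambda raw: raw.strip().lower())

chain = prompt | model | parser
print(chain.invoke({"text": "I love this product"}))  # positive
```

Because each stage is an ordinary function, any one of them (the parser, say) can be unit-tested or swapped without touching the others, which is where the maintainability benefit comes from.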

We build reusable, chainable components that allow for rapid iteration and easy maintenance of complex AI workflows.

Our developers implement sophisticated memory buffers and vector stores, ensuring your AI retains context across long conversations.
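A windowed buffer is one of the simplest memory strategies. The sketch below uses a hypothetical `WindowMemory` class in plain Python, not a LangChain class: it keeps the last `k` exchanges and replays them ahead of each new question.

```python
from collections import deque


class WindowMemory:
    """Keeps only the last `k` exchanges so the prompt stays within a token budget.

    Hypothetical sketch; production systems often add summaries or a vector
    store so that older turns are not lost entirely.
    """

    def __init__(self, k: int = 3):
        # A deque with maxlen silently drops the oldest turn when full.
        self.turns = deque(maxlen=k)

    def save(self, user: str, ai: str) -> None:
        self.turns.append((user, ai))

    def as_prompt(self, new_question: str) -> str:
        # Replay the retained history, then append the new question.
        lines = [f"User: {u}\nAI: {a}" for u, a in self.turns]
        lines.append(f"User: {new_question}\nAI:")
        return "\n".join(lines)


memory = WindowMemory(k=2)
memory.save("My name is Ana.", "Nice to meet you, Ana.")
memory.save("I work in logistics.", "Noted.")
memory.save("What's 2+2?", "4.")
print(memory.as_prompt("What is my job?"))
```

With `k=2`, the user's name has already fallen out of the window while their job is still available, which is exactly the gap that summary-based or vector-store-backed memory is meant to close.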

We seamlessly connect LLMs to your proprietary data (PDFs, SQL, APIs) using top-tier vector databases, grounding answers in your own sources and sharply reducing hallucinations.

Serving clients from New York to Berlin and Sydney, we align with your time zone to deliver agile, continuous development.

Testimonial: “Viston’s developers understood the strict compliance needs of Wall Street. The RAG agent they built is now our primary risk assessment tool.” — VP of Risk, Fintech Enterprise.

LangChain

LangGraph

LlamaIndex

BabyAGI

AutoGPT

OpenAI

Hugging Face

Llama 3

Serverless

Mistral

Pinecone

Milvus

Weaviate

ChromaDB

FAISS

Python

FastAPI

Flask

Node.js

GraphQL

AWS Bedrock

Azure OpenAI

Tools & Integrations

Google Vertex AI

LangSmith

Arize Phoenix

Weights & Biases

ServiceNow

GitHub Actions

$22/hour

$2800/month

Custom Quote

Price: $22/hour

Best For: Maintenance, ad-hoc bug fixes, staff augmentation during peak periods

Engagement Type: Pay-as-you-go

Flexibility: Maximum flexibility – scale up or down instantly

Resource Allocation Time: Immediate

Project Manager: Not included

Account Manager: On-demand

QA Support: Not included

Post-Production Support: Available

Ideal Project Size: Small tasks, bug fixes, short-term needs

Billing Cycle: Weekly or bi-weekly

Contract Terms: No minimum commitment

Price: $2800/month

Best For: Long-term transformation, continuous workflow optimization

Engagement Type: Monthly retainer

Flexibility: Full integration with your team; retained knowledge of your business logic

Resource Allocation Time: 1–3 business days

Project Manager: Optional add-on

Account Manager: Allocated

QA Support: Available on request

Post-Production Support: 100% included

Ideal Project Size: Fixed-scope projects, large-scale migration, enterprise deployment

Billing Cycle: Monthly

Contract Terms: 3-month minimum recommended

Price: Custom Quote

Best For: Long-term digital transformation and center of excellence (CoE) setup

Engagement Type: Monthly retainer

Flexibility: Full-time certified developers with seamless DevOps integration

Resource Allocation Time: 3–5 business days

Project Manager: Included

Account Manager: Dedicated

QA Support: Included with guaranteed SLA

Post-Production Support: 100% included with delivery milestones

Ideal Project Size: Complex multi-phase projects, ongoing product development

Billing Cycle: Monthly

Contract Terms: 6-month minimum recommended

Access top-tier developers from major tech hubs in Europe, North America, and Australia.

We offer a trial period to ensure the developer is the perfect fit for your stack.

All code and intellectual property created belongs 100% to your organization.

Our developers undergo weekly training on the latest LLM releases and security patches.

Connects incoming tickets to a vector database (Pinecone) via n8n to retrieve internal documentation context. The workflow passes this context to an LLM (OpenAI/Claude) to generate a technical response, drafts it in the helpdesk, and alerts a human for final approval.

Uses webhooks to listen for changes in Salesforce. The n8n workflow transforms the payload using custom JavaScript to match the ERP schema, handles complex nested JSON arrays, and updates the SAP/NetSuite database, ensuring inventory counts match sales commitments instantly.
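This workflow describes the mapping as custom JavaScript inside n8n; to keep one language across examples, here is the same flatten-and-map logic sketched in Python. Every field name (`opportunities`, `line_items`, `product_code`, and so on) is a hypothetical placeholder, not an actual Salesforce, SAP, or NetSuite schema.

```python
from collections import Counter
from typing import Any


def to_erp_lines(payload: dict[str, Any]) -> list[dict[str, Any]]:
    """Flatten a nested CRM webhook payload into flat ERP-style order lines."""
    rows = []
    for opp in payload.get("opportunities", []):
        # Each opportunity carries its own nested array of line items.
        for item in opp.get("line_items", []):
            rows.append({
                "order_ref": opp["id"],
                # Missing nested objects fall back to a default instead of raising.
                "customer": opp.get("account", {}).get("name", "UNKNOWN"),
                "sku": item["product_code"].upper(),  # assumed: ERP expects upper-case SKUs
                "qty": int(item["quantity"]),          # coerce "3" or 3 to an integer
            })
    return rows


def committed_by_sku(rows: list[dict[str, Any]]) -> dict[str, int]:
    """Total committed quantity per SKU, the figure inventory counts must match."""
    totals = Counter()
    for row in rows:
        totals[row["sku"]] += row["qty"]
    return dict(totals)


payload = {
    "opportunities": [
        {"id": "OPP-1", "account": {"name": "Acme"},
         "line_items": [{"product_code": "ab-100", "quantity": "3"},
                        {"product_code": "cd-200", "quantity": 1}]},
        {"id": "OPP-2",
         "line_items": [{"product_code": "ab-100", "quantity": 5}]},
    ]
}
rows = to_erp_lines(payload)
print(committed_by_sku(rows))  # {'AB-100': 8, 'CD-200': 1}
```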

Scheduled n8n cron jobs pull audit logs from 15+ distinct SaaS tools. The workflow parses, normalizes, and formats the data into a standardized PDF report, encrypts the file, and uploads it to a secure cold-storage bucket while notifying the Data Protection Officer (DPO).

Ingests high-frequency MQTT streams from factory floor machinery. The n8n workflow utilizes a Python node to run a lightweight statistical deviation model. If a threshold is breached, it triggers an urgent PagerDuty alert and creates a maintenance work order in Jira.
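A lightweight statistical deviation model can be as simple as a rolling z-score. The sketch below assumes that approach; the window size, the 3-sigma threshold, and the `DeviationDetector` name are illustrative choices rather than details of the workflow above, and the PagerDuty and Jira steps are left out.

```python
import statistics
from collections import deque


class DeviationDetector:
    """Flags readings more than `threshold` standard deviations from a rolling baseline."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.readings = deque(maxlen=window)
        self.threshold = threshold

    def check(self, value: float) -> bool:
        breached = False
        # A standard deviation needs at least two baseline points.
        if len(self.readings) >= 2:
            mean = statistics.fmean(self.readings)
            stdev = statistics.stdev(self.readings)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                breached = True
        # Only normal readings extend the baseline, so a spike cannot mask itself.
        if not breached:
            self.readings.append(value)
        # In the workflow, True would trigger the alert and the work order.
        return breached


detector = DeviationDetector(window=10, threshold=3.0)
baseline = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.2]
flags = [detector.check(v) for v in baseline]
spike = detector.check(25.0)
print(any(flags), spike)  # False True
```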

Edge AI & Optimization

For clients needing low latency, we optimize chains for speed and can deploy smaller, fine-tuned models on edge infrastructure, reducing dependency on expensive cloud LLMs.

Direct API integration is fine for simple prompts, but LangChain provides the “glue” for complex apps. It handles memory (context), connects to your private data (RAG), and allows the LLM to use tools (Google Search, Calculator). Hiring Viston developers ensures you utilize this framework to build robust, scalable applications, not just simple chatbots.
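To show what letting the LLM use tools means mechanically, here is a minimal Python sketch of a tool registry and dispatcher. It assumes the model replies with a JSON tool call shaped like `{"tool": ..., "args": {...}}`; that format, the `TOOLS` registry, and the calculator tool are hypothetical, and a web-search tool would be registered the same way.

```python
import json
import operator
from typing import Callable

# Registry of tools the model is allowed to request, keyed by name.
TOOLS: dict[str, Callable[..., str]] = {}

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the model can request it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def calculator(a: float, b: float, op: str) -> str:
    return str(OPS[op](a, b))


def run_tool_call(model_output: str) -> str:
    """Execute a JSON tool call such as {"tool": "calculator", "args": {...}}."""
    call = json.loads(model_output)
    fn = TOOLS.get(call["tool"])
    if fn is None:
        # Unknown tools are reported back instead of crashing the agent loop.
        return f"error: unknown tool {call['tool']!r}"
    return fn(**call.get("args", {}))


# Hypothetical model reply; a real agent would get this from the LLM's tool-calling output.
reply = '{"tool": "calculator", "args": {"a": 12, "b": 3.5, "op": "*"}}'
print(run_tool_call(reply))  # 42.0
```

The result string would then be fed back to the model as an observation, so it can compose its final answer from the tool output rather than guessing the arithmetic.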

Yes, this is a primary use case. Our engineers use advanced chunking strategies and vector databases (like Pinecone or Weaviate) to index millions of documents. This lets the AI answer questions based only on your data, with citations back to the source passages, which greatly reduces hallucinations.
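The chunk, index, retrieve, and cite flow can be sketched in a few lines of Python. To stay self-contained, the sketch assumes a bag-of-words vector with cosine similarity in place of a real embedding model and a vector database such as Pinecone or Weaviate; the chunk size, overlap, and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into overlapping word windows (a simple chunking strategy)."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def vectorize(text: str) -> Counter:
    # Bag-of-words stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: dict[str, str]) -> list[tuple[str, str, Counter]]:
    # Each entry keeps (source id, chunk text, vector) so answers can cite sources.
    return [(f"{doc_id}#{i}", c, vectorize(c))
            for doc_id, text in docs.items()
            for i, c in enumerate(chunk(text))]


def retrieve(index: list[tuple[str, str, Counter]], query: str, k: int = 2) -> list[tuple[str, str]]:
    q = vectorize(query)
    ranked = sorted(index, key=lambda e: cosine(q, e[2]), reverse=True)
    return [(src, text) for src, text, _ in ranked[:k]]


docs = {
    "refund-policy.pdf": "Customers may request a refund within 30 days of purchase. "
                         "Refunds are issued to the original payment method.",
    "shipping.pdf": "Standard shipping takes five business days. "
                    "Express shipping is available for an extra fee.",
}
index = build_index(docs)
question = "How many days do I have to request a refund?"
hits = retrieve(index, question)

# Grounded prompt: the model is told to answer only from cited chunks.
context = "\n".join(f"[{src}] {text}" for src, text in hits)
prompt = f"Answer using ONLY the sources below and cite them.\n{context}\nQuestion: {question}"
print(hits[0][0])  # refund-policy.pdf#0
```

The overlap between chunks keeps a sentence that straddles a boundary retrievable from either side; the chunk ids double as the citations the model is asked to return.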

We take a “privacy-first” approach. We can implement local LLMs (using Llama or Mistral) that never send data to the cloud. Alternatively, for cloud models, we build redaction chains that strip PII (Personally Identifiable Information) before the prompt is sent to providers like OpenAI, helping you meet GDPR/CCPA requirements.
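A redaction chain can be sketched as a pre-processing step that swaps PII for placeholders before the prompt leaves your network and restores the values locally afterward. The regex patterns and placeholder format below are assumptions for illustration only; note that they miss the personal name “Jane”, which is why production systems usually pair regexes with dedicated PII detectors.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
# Order matters: SSNs are replaced before the looser phone pattern can claim them.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Replace PII with numbered placeholders; return the text and a local lookup table."""
    vault: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def _sub(m: re.Match) -> str:
            key = f"[{label}_{len(vault) + 1}]"
            vault[key] = m.group(0)
            return key
        text = pattern.sub(_sub, text)
    return text, vault


def restore(text: str, vault: dict[str, str]) -> str:
    # Re-insert the original values locally after the provider's response returns.
    for key, value in vault.items():
        text = text.replace(key, value)
    return text


original = "Contact Jane at jane.doe@example.com or +1 (555) 123-4567. SSN 123-45-6789."
safe, vault = redact(original)
print(safe)  # Contact Jane at [EMAIL_1] or [PHONE_3]. SSN [SSN_2].
```

Only `safe` is sent to the provider; `vault` never leaves your environment, and `restore` can be applied to the model's reply if it echoes any placeholders.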

Absolutely. We are experts in LangGraph and multi-agent orchestration. We can build systems where one agent acts as a researcher, another as a writer, and a third as a reviewer, all collaborating to complete a complex task autonomously.
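The researcher, writer, and reviewer loop can be sketched as functions passing a shared state, which mirrors the way LangGraph structures agents as nodes operating on shared state. This is plain Python, not LangGraph's API; each agent is a hypothetical stub where a real system would call an LLM, and `max_rounds` is an assumed cap on revisions.

```python
State = dict  # shared scratchpad passed between agents


def researcher(state: State) -> State:
    # Hypothetical: a real agent would call search tools and an LLM.
    state["notes"] = [f"fact about {state['topic']}"]
    return state


def writer(state: State) -> State:
    draft = f"Report on {state['topic']}: " + "; ".join(state["notes"])
    if state.get("feedback"):
        draft += " (revised: added sources)"
    state["draft"] = draft
    return state


def reviewer(state: State) -> State:
    # Stub critique: approves only a revised draft, standing in for an LLM reviewer.
    state["approved"] = "revised" in state["draft"]
    state["feedback"] = None if state["approved"] else "Please cite sources."
    return state


def run(topic: str, max_rounds: int = 3) -> State:
    state: State = {"topic": topic, "revisions": 0}
    state = researcher(state)
    for _ in range(max_rounds):
        state = reviewer(writer(state))
        if state["approved"]:
            break
        state["revisions"] += 1  # reviewer sent the draft back to the writer
    return state


result = run("supply chain delays")
print(result["approved"], result["revisions"])  # True 1
```

The round cap matters in practice: without it, a reviewer that never approves would keep the agents looping and consuming tokens indefinitely.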

We have deep experience in Fintech (regulatory analysis), Healthcare (patient data processing), E-commerce (customer support agents), and Logistics (supply chain prediction). Our developers understand the specific data formats and compliance needs of these sectors.

Typically, we can present a shortlist within 48 hours. Once you select a candidate, onboarding can happen in as little as 3–5 days. We handle all the administrative overhead, so the developer is ready to commit code on day one.

Yes. Debugging LLM chains is difficult without the right tools. Our developers use LangSmith to trace complex chains, monitor token usage, identify latency bottlenecks, and run regression tests to ensure high-quality outputs.
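LangSmith provides this tracing as a managed service. The sketch below does not use its API; it is a hypothetical decorator that shows the underlying idea of recording each step's latency and a rough token count so the slowest step in a chain stands out.

```python
import functools
import time

TRACE: list[dict] = []


def traced(fn):
    """Record name, latency, and a rough token estimate for each chain step."""
    @functools.wraps(fn)
    def wrapper(text: str) -> str:
        start = time.perf_counter()
        out = fn(text)
        TRACE.append({
            "step": fn.__name__,
            "ms": (time.perf_counter() - start) * 1000,
            # Whitespace split is a crude proxy; real tokenizers count differently.
            "tokens_in": len(text.split()),
            "tokens_out": len(out.split()),
        })
        return out
    return wrapper


@traced
def retrieve_context(q: str) -> str:
    return q + " | context: policy section 4.2"


@traced
def generate_answer(prompt: str) -> str:
    time.sleep(0.01)  # simulate a slow model call
    return "Refunds are allowed within 30 days."


generate_answer(retrieve_context("What is the refund window?"))
slowest = max(TRACE, key=lambda r: r["ms"])
print(slowest["step"])  # generate_answer
```

The same records can back simple regression tests, for example asserting that a fixed question still produces an answer mentioning “30 days” after a prompt or model change.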

Yes. LangChain is designed to be glue code. We can write custom tools that allow the AI to interact with your legacy SQL databases, ERP systems (like SAP), or internal APIs, allowing you to modernize without a full rewrite.
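A custom tool over a legacy database can be as small as a function that runs one parameterized query. In this sketch an in-memory SQLite table stands in for the legacy system; the `inventory` schema, the data, and `make_inventory_tool` are hypothetical. In LangChain, a function like `stock_level` would typically be exposed to an agent as a tool, for example with the `@tool` decorator.

```python
import sqlite3


def make_inventory_tool(conn: sqlite3.Connection):
    """Wrap a legacy table as a narrow, read-only tool an agent can call.

    Exposing one fixed, parameterized query (instead of letting the model
    write raw SQL) limits what the model can do to the database.
    """
    def stock_level(sku: str) -> str:
        row = conn.execute(
            "SELECT qty, warehouse FROM inventory WHERE sku = ?", (sku,)
        ).fetchone()
        if row is None:
            return f"No inventory record for {sku}."
        return f"{sku}: {row[0]} units in {row[1]}."
    return stock_level


# Stand-in for a legacy database; schema and data are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, qty INTEGER, warehouse TEXT)")
conn.executemany("INSERT INTO inventory VALUES (?, ?, ?)",
                 [("AB-100", 42, "Rotterdam"), ("CD-200", 0, "Dallas")])

stock_level = make_inventory_tool(conn)
print(stock_level("AB-100"))  # AB-100: 42 units in Rotterdam.
```

Because the SKU is passed as a bound parameter, input such as `x' OR '1'='1` is treated as a literal value rather than SQL.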

This is the beauty of LangChain. It provides an abstraction layer. If you want to switch from GPT-4 to Claude 3 or a local model, our developers can do so with minimal code changes, future-proofing your application against market shifts.
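In practice, the abstraction layer means coding against one interface. LangChain does this by giving its chat-model integrations a common Runnable interface (`invoke`, `stream`, `batch`); the sketch below reproduces only the idea in plain Python, with hypothetical `HostedModel` and `LocalModel` classes that return canned strings instead of calling real providers.

```python
from typing import Protocol


class ChatModel(Protocol):
    def invoke(self, prompt: str) -> str: ...


class HostedModel:
    """Hypothetical wrapper around a cloud provider's API."""

    def __init__(self, name: str):
        self.name = name

    def invoke(self, prompt: str) -> str:
        return f"[{self.name}] answer to: {prompt}"


class LocalModel:
    """Hypothetical wrapper around a locally served open-weights model."""

    def invoke(self, prompt: str) -> str:
        return f"[local] answer to: {prompt}"


def build_model(provider: str) -> ChatModel:
    # The only place that knows about concrete providers; keys are illustrative.
    registry = {
        "gpt-4": lambda: HostedModel("gpt-4"),
        "claude-3": lambda: HostedModel("claude-3"),
        "local": LocalModel,
    }
    return registry[provider]()


def summarize(model: ChatModel, text: str) -> str:
    # Application logic depends only on the ChatModel interface.
    return model.invoke(f"Summarize: {text}")


print(summarize(build_model("local"), "Q3 report"))  # [local] answer to: Summarize: Q3 report
```

Switching providers then becomes a one-line configuration change in `build_model`, while `summarize` and the rest of the application stay untouched.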

We can structure a dedicated team to provide 24/7 monitoring and incident response on a network operations center (NOC) model. This ensures that critical alerts are acknowledged and triaged immediately, regardless of the hour, protecting your uptime and customer experience around the clock.

Don’t let technical complexity stall your AI roadmap. Partner with Viston to access the top 1% of engineering talent. With 15+ years of expertise, 2,860+ clients, and a presence across the USA, Europe, and Australia, we deliver results that matter.